In a recent observation, Wharton professor and AI researcher Ethan Mollick highlighted a recurring, challenging pattern in how artificial intelligence capabilities are announced and perceived. He describes a three-stage cycle: 1) Overstated Claims, 2) Minor Wins, and 3) Actual Breakthroughs. This pattern, he argues, makes discussing the true state of AI capabilities exceptionally difficult for researchers, developers, and the public.

Mollick points to a concrete example: the "flubbed Erdos problems" from last year, where initial claims that AI had solved long-standing open mathematical problems turned out to be overstated. This was followed by a phase where AI demonstrated genuine but minor utility in aiding mathematical discovery. The final stage, he suggests, is where legitimate breakthroughs eventually emerge from this foundation.

Key Takeaways

- Ethan Mollick observes a consistent pattern in AI development: initial overstated claims are followed by minor, real wins, which later enable genuine breakthroughs.

- Because hype consistently arrives before substance, this cycle makes it hard to judge what AI can actually do at any given moment.

The Three-Stage Pattern

Stage 1: Overstated Claims & Initial Hype

This phase is characterized by dramatic announcements that capture media attention but fail to hold up under rigorous scrutiny. The "flubbed Erdos problems" serve as an archetype: a high-profile claim that initially suggested a leap in reasoning capability and was later walked back or clarified. This stage creates noise and skepticism, making it hard to separate marketing from meaningful advancement.

Stage 2: Minor, Real Wins

Following the hype, tangible but incremental progress is made. In the mathematical example, this was AI moving from "solving" famed problems to legitimately assisting researchers in exploration and discovery—a valuable, if less glamorous, achievement. These wins are real and useful, but they often feel anticlimactic compared to the initial promises, leading to a perception of disappointment or "AI winter" sentiment.

Stage 3: The Eventual Breakthrough

Mollick's key insight is that the minor wins of Stage 2 are not the end of the story. They lay the practical and methodological groundwork for subsequent, more significant breakthroughs. The capabilities refined during the "minor win" phase mature, leading to results that finally fulfill—or even exceed—the original ambitious vision, but on a more solid foundation.

Why This Pattern is Problematic

This cycle creates a persistent communication challenge. The initial overhype can poison the well, causing experts and the public to dismiss subsequent, more modest—and genuine—advancements. By the time a real breakthrough arrives, the narrative may be exhausted or met with cynicism. It also makes it difficult to assess the true rate of progress, as the signal is consistently obscured by noise at the beginning of each new capability curve.

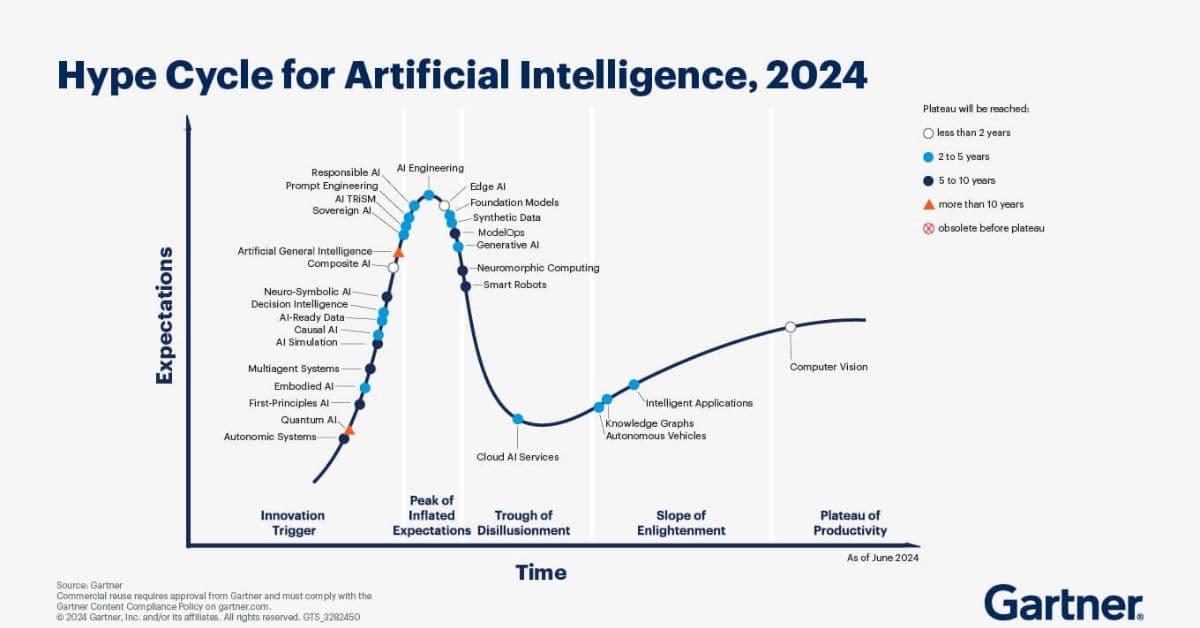

For practitioners building with AI, this pattern necessitates a disciplined approach: ignoring the peak of inflated expectations in Stage 1, carefully evaluating the practical tools emerging in Stage 2, and being architecturally prepared to integrate the paradigm shifts that arrive in Stage 3.

gentic.news Analysis

Mollick's observation is less about a specific model and more about the meta-pattern of discourse in AI, a field prone to intense competition and public fascination. His framing provides a crucial lens for interpreting announcements in 2026, a year marked by both spectacular claims and substantive but quieter engineering progress.

This pattern is evident in recent history. The trajectory of multimodal reasoning followed a similar arc: initial claims of perfect image understanding were overstated (Stage 1), followed by models providing genuine but imperfect visual assistance (Stage 2), leading to the robust, agentic multimodal systems we see today from leaders like OpenAI and Google DeepMind. Similarly, the evolution of coding agents saw hype around "AI replacing developers," then a phase of useful copilot tools, and is now entering a breakthrough phase with agents that can handle increasingly complex software engineering tasks, as measured by benchmarks like SWE-bench.

The pattern underscores a critical tension in the ecosystem. Research organizations and companies, including Anthropic, xAI, and Meta, are incentivized to announce impressive results to attract talent, funding, and users. Meanwhile, the actual integration of these technologies into reliable products—a focus for companies like Microsoft with its Azure AI stack and Amazon with Bedrock—often depends on the less-glamorous work done during the "minor win" phase.

For our technical audience, the takeaway is operational: treat Stage 1 announcements as directional signals, not shipping roadmaps. The real building blocks for production systems will be validated and hardened in Stage 2. Mollick's model advises patience and critical evaluation, suggesting that the most meaningful capabilities for enterprise use are those that have already passed through the hype cycle's wringer.

Frequently Asked Questions

What were the "flubbed Erdos problems" Mollick refers to?

This most likely refers to an October 2025 episode in which OpenAI researchers publicly suggested that GPT-5 had solved several problems listed as open on erdosproblems.com, a database of problems posed by the mathematician Paul Erdős. The site's maintainer, mathematician Thomas Bloom, responded that the problems were marked open only because he was unaware of existing solutions: the model had located prior published work rather than producing new proofs. The claims were walked back, and the episode became a case study in AI hype outpacing reality.

How can developers distinguish between AI hype and real progress?

Look for peer-reviewed papers with reproducible benchmarks, not just press releases. Evaluate whether the capability is demonstrated via a robust API, open-source code, or detailed technical report. Real progress is often accompanied by specific metrics (e.g., "scores 75% on a new agentic benchmark") and candid discussion of limitations. Hype tends to use vague, revolutionary language without concrete, verifiable details.

Does this hype cycle pattern apply to all types of AI advancements?

The pattern is most pronounced for high-profile, frontier capabilities like reasoning, agentic behavior, and scientific discovery. It is less common for incremental engineering improvements in established areas like model efficiency, inference speed, or cost reduction. The more a capability captures the public imagination and promises transformative change, the more likely it is to follow this overclaim-minor win-breakthrough cycle.

What is the practical impact of this cycle on AI product development?

For product teams, it creates risk in roadmap planning. Betting on a Stage 1 hyped capability can lead to dead ends or delays. A more stable strategy is to build on technologies that are in the late Stage 2 or early Stage 3 phase—where initial hype has died down, real utility has been proven in constrained settings, and the path to robust integration is clearer. This often means using models or tools that are 6-18 months behind the absolute cutting-edge announcements.