What Happened

A new paper published on arXiv introduces Evolving Demonstration Optimization for Chain-of-Thought Feature Transformation, a framework designed to improve how Large Language Models (LLMs) perform feature transformation. Feature transformation is a core step in data-centric AI: existing features are modified or combined into new ones so that downstream predictive models perform better.

The core problem the researchers address is how to find effective feature transformations in the vast space of possible feature-operator combinations. Traditional approaches rely on discrete search algorithms or latent generation methods, which are often sample-inefficient, generate invalid candidates, or produce redundant transformations that cover little of the space.
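To give a sense of how quickly that space grows, here is a minimal sketch that enumerates every feature reachable by applying one operator, then applies the same step again. The operator sets and the column names (`price`, `qty`, `age`) are hypothetical and chosen only for illustration; they are not taken from the paper.

```python
import math
from itertools import combinations

# Hypothetical operator sets, for illustration only.
UNARY = {
    "log1p": lambda x: math.log1p(abs(x)),
    "sqrt": lambda x: math.sqrt(abs(x)),
    "square": lambda x: x * x,
}
BINARY = {
    "add": lambda a, b: a + b,
    "mul": lambda a, b: a * b,
    "ratio": lambda a, b: a / b if b != 0 else 0.0,
}

def one_step_candidates(columns):
    """Return every feature obtainable by applying a single operator."""
    out = {}
    for name, values in columns.items():
        for op, fn in UNARY.items():
            out[f"{op}({name})"] = [fn(v) for v in values]
    for (n1, v1), (n2, v2) in combinations(columns.items(), 2):
        for op, fn in BINARY.items():
            out[f"{op}({n1},{n2})"] = [fn(a, b) for a, b in zip(v1, v2)]
    return out

columns = {
    "price": [10.0, 20.0, 40.0],
    "qty": [1.0, 2.0, 4.0],
    "age": [30.0, 45.0, 60.0],
}
step1 = one_step_candidates(columns)
step2 = one_step_candidates({**columns, **step1})
print(len(columns), len(step1), len(step2))  # 3 18 693
```

With only three columns and six operators, one step yields 18 candidates and a second step yields 693, which is why exhaustive search becomes impractical on real tables.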

While LLMs offer strong priors for generating valid transformations, current LLM-based methods typically use static demonstrations in their prompts, leading to limited diversity, redundant outputs, and weak alignment with specific downstream objectives.

Technical Details

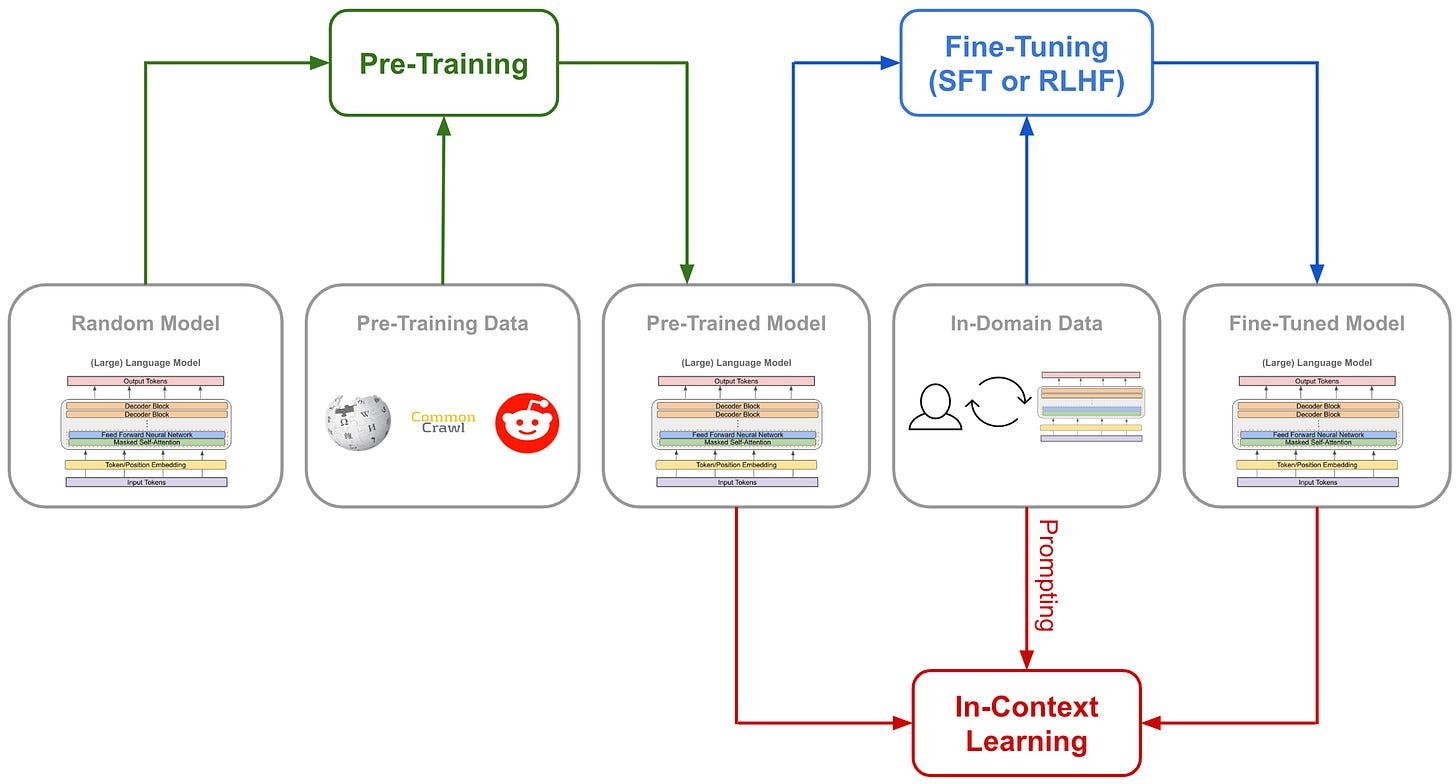

The proposed framework creates a closed-loop system that optimizes the context (the prompt and its demonstration examples) provided to an LLM for feature transformation tasks. Here's how it works:

Reinforcement Learning Exploration: The system starts by using reinforcement learning to explore high-performing sequences of feature transformations. These sequences represent "trajectories" of transformation steps that have proven effective.

Experience Library Construction: Successful transformation trajectories are stored in an experience library that is continuously updated. Each trajectory is verified against downstream task performance metrics.
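One way to picture such a library is as a bounded store keyed by trajectory that keeps the best verified score for each entry. The sketch below is an assumption about the data structure, not the paper's implementation; the class name `ExperienceLibrary`, the capacity rule, and the scores are all hypothetical.

```python
class ExperienceLibrary:
    """Bounded store of transformation trajectories and their verified scores."""

    def __init__(self, capacity=100):
        self.capacity = capacity
        self._scores = {}  # trajectory (tuple of steps) -> best score seen

    def add(self, steps, score):
        key = tuple(steps)
        # Keep only the best verified score for a repeated trajectory.
        if score > self._scores.get(key, float("-inf")):
            self._scores[key] = score
        # Evict the weakest entry once the library is over capacity.
        if len(self._scores) > self.capacity:
            worst = min(self._scores, key=self._scores.get)
            del self._scores[worst]

    def top(self, k):
        ranked = sorted(self._scores.items(), key=lambda kv: kv[1], reverse=True)
        return ranked[:k]

library = ExperienceLibrary(capacity=2)
library.add(["log1p(price)"], 0.71)
library.add(["ratio(price,qty)"], 0.78)
library.add(["square(age)"], 0.65)   # weakest entry, evicted immediately
library.add(["log1p(price)"], 0.80)  # same trajectory, better score
print(library.top(2))
```

The scores here stand in for whatever downstream metric is used for verification; higher is assumed to be better.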

Diversity-Aware Context Selection: When prompting an LLM for new transformations, the system uses a selector that chooses demonstration examples from the experience library based on both performance and diversity considerations.
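The article does not spell out the selector's scoring rule, so the sketch below swaps in a simple greedy rule in the style of maximal marginal relevance: each pick maximizes score minus a penalty for overlapping with demonstrations already chosen. The Jaccard similarity, the `trade_off` weight, and the example trajectories are assumptions made for illustration.

```python
def jaccard(a, b):
    """Overlap between two trajectories, treated as sets of steps."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 0.0

def select_demonstrations(candidates, k, trade_off=0.7):
    """Greedily pick k (steps, score) pairs, balancing score against redundancy."""
    remaining = list(candidates)
    chosen = []
    while remaining and len(chosen) < k:
        def gain(item):
            steps, score = item
            redundancy = max((jaccard(steps, s) for s, _ in chosen), default=0.0)
            return trade_off * score - (1 - trade_off) * redundancy
        best = max(remaining, key=gain)
        chosen.append(best)
        remaining.remove(best)
    return chosen

candidates = [
    (("log1p(price)", "ratio(price,qty)"), 0.80),
    (("log1p(price)", "ratio(price,qty)", "square(age)"), 0.79),
    (("mul(qty,age)",), 0.74),
]
picked = select_demonstrations(candidates, k=2)
print([score for _, score in picked])  # [0.8, 0.74]
```

The second-best trajectory is skipped because it nearly duplicates the first, so the lower-scoring but different trajectory is shown to the LLM instead.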

Chain-of-Thought Guidance: The selected demonstrations are presented to the LLM in a chain-of-thought format, showing not just the final transformations but the reasoning process behind them.
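As a rough illustration of what a chain-of-thought demonstration might look like in a prompt, the sketch below pairs each transformation step with a short rationale. The template wording, the `build_cot_prompt` helper, and the example rationales are hypothetical; the paper's actual prompt format is not reproduced here.

```python
def build_cot_prompt(feature_names, demonstrations, task):
    """Render demonstrations as step-by-step worked examples in one prompt string."""
    lines = [
        f"Task: {task}",
        f"Available features: {', '.join(feature_names)}",
        "",
        "Worked examples:",
    ]
    for i, demo in enumerate(demonstrations, start=1):
        lines.append(f"Example {i} (validation score {demo['score']:.2f})")
        for n, (reason, step) in enumerate(demo["steps"], start=1):
            lines.append(f"  Step {n}: {reason} -> {step}")
    lines += ["", "Now reason step by step and propose a new transformation sequence."]
    return "\n".join(lines)

demos = [
    {
        "score": 0.80,
        "steps": [
            ("Spend per unit normalizes basket size", "ratio(price,qty)"),
            ("Price is right-skewed", "log1p(price)"),
        ],
    }
]
prompt = build_cot_prompt(["price", "qty", "age"], demos, "Predict customer churn")
print(prompt)
```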

Continuous Evolution: As new successful transformations are discovered, they're added to the experience library, creating an evolving system that improves over time.
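Taken together, the steps form a loop: pick demonstrations, ask for a new trajectory, score it, and feed it back into the library. The sketch below shows only that control flow. `toy_propose` stands in for the LLM call and `toy_evaluate` for training and scoring a downstream model; both are made-up placeholders, as are the operator names.

```python
import random

def evolve(propose, evaluate, seed_library, rounds=5, n_demos=2, capacity=10):
    """Closed loop: select demos, propose, verify, update the library."""
    library = dict(seed_library)  # trajectory (tuple) -> verified score
    for _ in range(rounds):
        demos = sorted(library.items(), key=lambda kv: kv[1], reverse=True)[:n_demos]
        candidate = tuple(propose(demos))
        score = evaluate(candidate)
        if score > library.get(candidate, float("-inf")):
            library[candidate] = score
        while len(library) > capacity:
            del library[min(library, key=library.get)]
    return library

# Placeholders for the LLM and the downstream evaluator (assumptions).
OPS = ["log1p(price)", "ratio(price,qty)", "square(age)", "mul(qty,age)"]
rng = random.Random(0)

def toy_propose(demos):
    base = demos[0][0] if demos else ()
    return base + (rng.choice(OPS),)

def toy_evaluate(steps):
    # Rewards distinct steps, lightly penalizes trajectory length.
    return len(set(steps)) / len(OPS) - 0.01 * len(steps)

seed = {("log1p(price)",): toy_evaluate(("log1p(price)",))}
final = evolve(toy_propose, toy_evaluate, seed)
print(max(final.values()) >= max(seed.values()))  # True
```

Because the weakest entry is the only one ever evicted, the best score in the library never decreases from one round to the next.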

Key innovation: Instead of using fixed, hand-crafted examples in prompts, this framework dynamically selects and evolves demonstration examples based on what actually works for specific tasks and datasets.

The researchers tested their approach on diverse tabular benchmarks and found that it:

- Outperforms both classical feature transformation methods and existing LLM-based approaches

- Produces more stable results than one-shot generation methods

- Generalizes well across both API-based LLMs (like GPT-4) and open-source models

- Remains robust across different downstream evaluators and metrics

Retail & Luxury Implications

While the paper doesn't specifically mention retail applications, the technique is relevant to several problems that data science teams in the retail and luxury sectors already work on:

Customer Analytics Enhancement: Feature transformation is crucial for building effective customer segmentation models, churn prediction systems, and lifetime value calculations. Retailers often work with complex customer data spanning transaction history, browsing behavior, demographic information, and engagement metrics. This framework could help data scientists discover non-obvious feature combinations that better predict customer behavior.

Inventory and Demand Forecasting: Time-series data for inventory management involves numerous potential transformations (lag features, rolling averages, seasonality adjustments, etc.). An evolving demonstration system could help identify the most effective transformation sequences for specific product categories or regions.
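For readers less familiar with these transformations, here is a minimal pure-Python sketch of two of them, a lag feature and a rolling average. The `weekly_units` series is made-up data, and the helper names are illustrative rather than part of any framework.

```python
def lag(series, k):
    """Shift a series back by k periods, padding the start with None."""
    n = len(series)
    return [None] * min(k, n) + list(series[: max(n - k, 0)])

def rolling_mean(series, window):
    """Mean of the last `window` values, None until enough history exists."""
    out = []
    for i in range(len(series)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(sum(series[i + 1 - window : i + 1]) / window)
    return out

weekly_units = [12, 15, 11, 18, 20, 17]  # hypothetical weekly sales
print(lag(weekly_units, 1))           # [None, 12, 15, 11, 18, 20]
print(rolling_mean(weekly_units, 3))
```

Each choice of lag length or window size is a separate candidate feature, which is what makes the search space large. Note that this rolling mean includes the current period, so a forecasting pipeline would typically restrict the window to past periods only.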

Personalization Systems: Recommendation engines and personalization algorithms rely on feature engineering to represent user preferences and item characteristics. This approach could optimize the feature transformations that feed into these systems, potentially improving recommendation quality.

Pricing Optimization: Dynamic pricing models benefit from carefully engineered features that capture market conditions, competitor pricing, inventory levels, and customer price sensitivity. The framework could help discover more effective pricing features.

Implementation Considerations: Retail data science teams would need to adapt this research framework to their specific data environments. The approach requires:

- A reinforcement learning component to explore transformation sequences

- Infrastructure to maintain and query the experience library

- Integration with existing ML pipelines and feature stores

- Careful validation to ensure transformations maintain business interpretability

The paper demonstrates promising results on benchmark datasets, but real-world retail applications would require additional testing with proprietary data and consideration of computational costs versus potential performance gains.