The Innovation

ZorBA (Zeroth-order Federated Fine-tuning with Heterogeneous Block Activation) is a novel federated learning framework specifically designed for fine-tuning large language models (LLMs) across distributed, privacy-sensitive environments. The core innovation addresses two critical bottlenecks in federated learning for LLMs: excessive memory (VRAM) requirements on client devices and high communication overhead between clients and a central server.

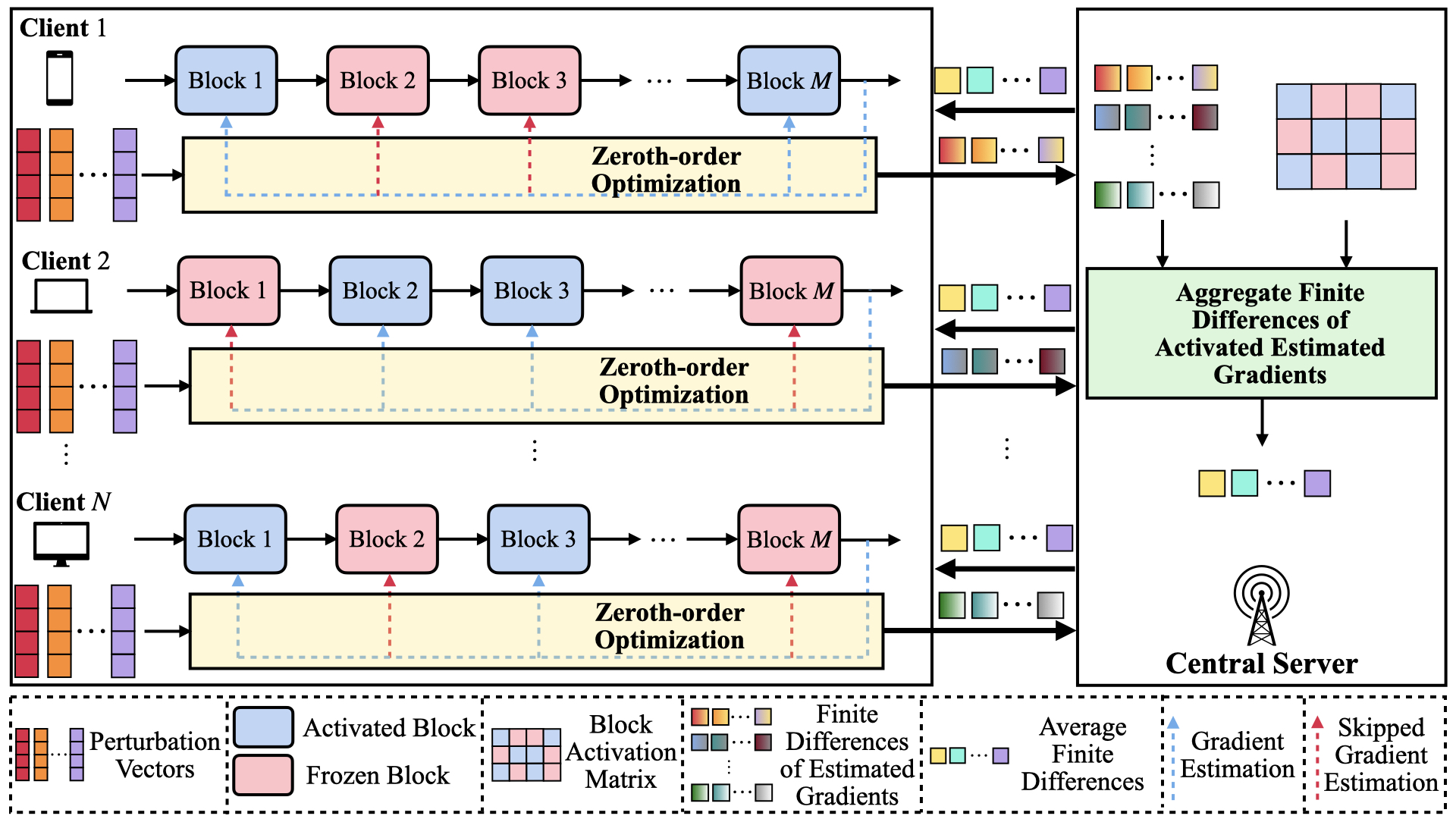

The method employs zeroth-order optimization, which estimates gradients using only forward passes of the model. Because no backward pass is run, clients do not need to store the intermediate activations and gradients that backpropagation requires, which substantially reduces VRAM usage. ZorBA also introduces a heterogeneous block activation mechanism: instead of each client fine-tuning the entire LLM, the central server allocates different subsets of the model's transformer blocks to different clients. For example, Client A might fine-tune blocks 1-5 while Client B fine-tunes blocks 6-10, based on their data characteristics and computational capacity. The server then aggregates these block-specific updates. To minimize communication, the framework uses shared random seeds and finite-difference gradient estimates, so clients send only compact vectors instead of full model weights.
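The seed-sharing idea above can be illustrated with a minimal sketch. It assumes a two-point finite-difference estimator with Gaussian perturbations, a toy quadratic loss, and simple averaging at the server; these choices, along with every function and variable name, are illustrative assumptions and are not taken from the ZorBA paper.

```python
import numpy as np

def perturbation(seed, active_mask):
    """Regenerate a random direction from a seed; zero outside the active blocks."""
    rng = np.random.default_rng(seed)
    return rng.standard_normal(active_mask.shape) * active_mask

def client_estimate(loss_fn, theta, seed, active_mask, eps=1e-3):
    """Two forward passes yield one scalar: the finite difference along the direction."""
    z = perturbation(seed, active_mask)
    return (loss_fn(theta + eps * z) - loss_fn(theta - eps * z)) / (2.0 * eps)

def server_update(theta, messages, lr=0.05):
    """Rebuild each client's direction from its seed and average the resulting steps."""
    step = np.zeros_like(theta)
    for seed, scalar, active_mask in messages:
        step += scalar * perturbation(seed, active_mask)
    return theta - lr * step / len(messages)

# Toy setup (hypothetical): 4 parameters split into two "blocks" of 2 each.
target = np.array([1.0, -2.0, 0.5, 3.0])

def loss_fn(theta):
    return float(np.sum((theta - target) ** 2))

# Client 0 is assigned the first block, client 1 the second.
masks = [np.array([1.0, 1.0, 0.0, 0.0]), np.array([0.0, 0.0, 1.0, 1.0])]

theta = np.zeros(4)
initial_loss = loss_fn(theta)
for round_idx in range(200):
    messages = []
    for client_idx, mask in enumerate(masks):
        seed = 2 * round_idx + client_idx  # seed schedule agreed with the server
        scalar = client_estimate(loss_fn, theta, seed, mask)
        # The server already knows the mask it assigned, so only the scalar
        # (and, if not pre-agreed, the seed) has to cross the network.
        messages.append((seed, scalar, mask))
    theta = server_update(theta, messages)
final_loss = loss_fn(theta)
print(f"loss: {initial_loss:.2f} -> {final_loss:.6f}")
```

In this sketch each client uploads a single float per round, and the server reconstructs the full-size update locally. A real LLM deployment would replace `loss_fn` with a forward pass over a local data batch.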

According to the authors, experiments show that ZorBA reduces VRAM usage by up to 62.41% compared to standard federated fine-tuning baselines, while maintaining model performance and significantly cutting communication costs. The framework also formulates and solves an optimization problem that decides which blocks to activate for which clients, balancing convergence speed against resource usage.
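To make the block-allocation idea concrete, here is a deliberately simple stand-in. It is not the optimization problem from the paper: it is a hypothetical round-robin heuristic that hands each client as many consecutive blocks as its budget allows and wraps around so that every block is covered by at least one client. The function names and the notion of a per-client "budget" (number of blocks a client can afford to activate) are assumptions for illustration.

```python
def allocate_blocks(num_blocks, budgets):
    """Assign consecutive block indices to clients, wrapping around the model.

    budgets maps a client name to the number of blocks it can activate.
    """
    if sum(budgets.values()) < num_blocks:
        raise ValueError("budgets too small to cover every block")
    allocation = {}
    start = 0
    for client, budget in budgets.items():
        k = min(budget, num_blocks)  # a client cannot activate more blocks than exist
        allocation[client] = [(start + i) % num_blocks for i in range(k)]
        start = (start + k) % num_blocks
    return allocation

def coverage(num_blocks, allocation):
    """Count how many clients activate each block."""
    counts = [0] * num_blocks
    for blocks in allocation.values():
        for block in blocks:
            counts[block] += 1
    return counts

# Two clients with equal budgets split a 10-block model in half (0-indexed).
even_split = allocate_blocks(10, {"A": 5, "B": 5})

# Three smaller clients overlap slightly so that all 10 blocks stay covered.
overlapping = allocate_blocks(10, {"A": 4, "B": 4, "C": 4})
overlap_counts = coverage(10, overlapping)
```

A real allocator would presumably also weigh data characteristics and convergence behavior, which this sketch ignores entirely.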

Why This Matters for Retail & Luxury

For luxury retail, data is both the most valuable asset and the most sensitive liability. Client purchase histories, personal preferences, styling notes, and conversation logs are siloed across flagship stores, regional offices, e-commerce platforms, and wholesale partners. Centralizing this data for AI training is often legally restricted (under regulations such as GDPR and CCPA) and strategically risky.

ZorBA's federated approach directly enables several high-impact use cases:

- Privacy-Preserving Clienteling AI: Fine-tune a shared LLM on real client interactions and purchase data from hundreds of boutiques worldwide without ever moving the raw data from the store's CRM or client book. The resulting model powers hyper-personalized outreach, product recommendations, and conversation assistants for sales associates.

- Cross-Region Trend Modeling: Train a model to predict emerging style trends by learning from localized sales and social data in Milan, Paris, Tokyo, and New York simultaneously, while keeping each region's competitive insights confidential.

- Supplier & Partner Collaboration: Collaboratively improve supply chain or sustainability forecasting models with material suppliers or manufacturing partners by learning from their operational data, without requiring them to share proprietary information.

- Unified Customer Intelligence: Create a global view of customer preferences and lifetime value by applying federated learning across all touchpoints (e-commerce, mobile app, in-store), even when these systems are managed by different entities or sit in different jurisdictions.

The primary beneficiaries are the CRM, Clienteling, and Data Science teams, who gain the ability to deploy sophisticated LLM-powered personalization while the Legal and Compliance teams maintain rigorous data governance.

Business Impact & Expected Uplift

The direct impact is operational and strategic, paving the way for revenue-generating AI applications that were previously blocked by privacy constraints.

- Cost Reduction & Efficiency: The reported VRAM reduction of up to 62.41% can translate into lower infrastructure costs. Fine-tuning a large model (e.g., Llama 2 7B) typically requires high-end GPUs (e.g., A100 with 40-80GB VRAM). ZorBA could enable this process on more accessible hardware (e.g., a single RTX 4090 with 24GB VRAM) at each location, provided the reduced footprint fits within that card's memory. Reduced communication overhead also cuts cloud egress costs.

- Revenue Uplift (Indirect but Significant): The ultimate value is enabling personalized AI applications. While ZorBA itself is an enabling technology, the applications it unlocks have proven benchmarks. For example, Boston Consulting Group (BCG) research indicates that personalization can drive a 10-30% uplift in revenue for luxury retailers. A federated clienteling model that improves recommendation relevance could capture a portion of this uplift by increasing conversion rates and average order value.

- Risk Mitigation Value: Keeping personal data decentralized reduces exposure to GDPR fines (up to 4% of global annual turnover) and to the reputational damage of a data breach. This impact is critical even though it is hard to quantify in advance.

- Time to Value: The initial setup and integration phase takes two to three quarters. Once the federated infrastructure is established, however, incremental fine-tuning cycles for new models or use cases can be deployed in weeks.
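The hardware claim in the cost bullet above can be sanity-checked with simple arithmetic. The sketch below assumes the best-case 62.41% reduction applies uniformly to a given baseline memory footprint, which the source does not guarantee; the function names are illustrative.

```python
BEST_CASE_REDUCTION = 0.6241  # the "up to" figure reported for ZorBA

def reduced_vram_gb(baseline_gb, reduction=BEST_CASE_REDUCTION):
    """Memory footprint after applying the reduction to a baseline footprint."""
    return baseline_gb * (1.0 - reduction)

def largest_baseline_that_fits(device_gb, reduction=BEST_CASE_REDUCTION):
    """Largest baseline footprint that would still fit on a device after reduction."""
    return device_gb / (1.0 - reduction)

for baseline_gb in (40, 80):
    print(f"{baseline_gb} GB baseline -> {reduced_vram_gb(baseline_gb):.1f} GB")
print(f"24 GB card covers baselines up to {largest_baseline_that_fits(24):.1f} GB")
```

Under these assumptions, a workload that needs 40GB today would drop to roughly 15GB and fit on a 24GB card, while one that needs 80GB would still require about 30GB. The 24GB card therefore only covers baselines up to about 64GB, even in the best case.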

Implementation Approach

Implementing ZorBA is a Medium-to-High complexity project, requiring specialized machine learning engineering expertise.

- Technical Requirements:

  - Data: Access to decentralized datasets (e.g., store-level CRM exports, anonymized interaction logs). Data must be formatted consistently for the target task (e.g., client query and response pairs for a chat model).

  - Infrastructure: A central orchestration server and compute nodes (clients) at each participating location (store server, regional cloud instance). Clients need GPUs, but requirements are reduced.

  - Team Skills: Machine Learning Engineers with expertise in federated learning frameworks (like Flower or NVIDIA FLARE), PyTorch, and LLM fine-tuning. DevOps skills for secure deployment.

- Integration Points: The system would integrate at the data layer with local CRM/Clienteling tools (e.g., Salesforce, Clientela) to access training data. The fine-tuned model would then be served via an API to downstream applications like associate-facing apps or marketing automation platforms.

- Estimated Effort: 6-9 months for a pilot involving 3-5 stores or regions. This includes architecture design, development of the ZorBA adaptation, secure deployment, initial fine-tuning, and integration with one pilot application (e.g., a recommendation widget). Scaling globally would be an ongoing program.

Governance & Risk Assessment

This approach is fundamentally aligned with strong data governance but introduces new technical risks.

- Data Privacy & Compliance: This is ZorBA's primary strength. It operates on a principle of data minimization and localization: raw personal data never leaves its original jurisdiction or system, and only model update information is shared. This aligns well with GDPR's "privacy by design" principle and with cross-border data transfer restrictions. However, governance must still ensure that the training data on each client is lawfully processed and that neither the shared updates nor the aggregated model can be reverse-engineered to reveal individual data.

- Model Bias & Fairness: Federated learning can amplify bias if data distributions across clients are skewed. For example, if only boutiques in wealthy neighborhoods participate, the model may become biased toward high-net-worth preferences. Proactive measures are needed: auditing client data for representativeness, using fairness-aware aggregation techniques at the server, and continuous monitoring of model outputs across different client segments.

- Maturity Level: Research / Prototype. ZorBA is a novel academic framework (arXiv preprint, not peer-reviewed). While its components (federated learning, zeroth-order optimization) are established, this specific integration for LLMs is at the cutting edge. It is not available as a commercial off-the-shelf product.

- Strategic Recommendation: Luxury brands should adopt a phased experimental approach. This is not yet a "buy and deploy" technology. The recommendation is to:

  - Pilot: Partner with a research lab or specialized AI vendor to implement a ZorBA-inspired proof-of-concept on a non-critical, anonymized dataset (e.g., product description generation using data from different departments).

  - Build Internal Competency: Task a central AI/ML team with deeply understanding federated learning and its implications.

  - Strategic Planning: Identify 1-2 high-value, data-sensitive use cases (e.g., global client sentiment analysis from store notes) where federated learning is the only viable path. Begin architectural planning for a secure federated infrastructure.

For luxury houses, the long-term strategic imperative of leveraging collective data without compromising privacy makes federated learning essential. ZorBA represents a meaningful step towards making this technically feasible for the most powerful AI models.