Key Takeaways

- The source provides a foundational comparison of fine-tuning and Retrieval-Augmented Generation (RAG) for enhancing AI models.

- It uses the analogy of teaching during training versus providing a book during an exam, clarifying their distinct roles in AI application development.

What Happened: A Foundational Primer

The source article serves as a clear, high-level primer on two of the most critical techniques for adapting large language models (LLMs) to specific tasks: fine-tuning and Retrieval-Augmented Generation (RAG). It frames the distinction with a simple, powerful analogy: fine-tuning is like teaching a student new material during their training, while RAG is like handing them a reference book during the exam. This cuts through the jargon: fine-tuning modifies the model's internal weights, and with them what the model knows and how it behaves, whereas RAG leaves the weights unchanged and augments each response with external information retrieved at query time.

This clarification is timely, as the industry grapples with how to best deploy LLMs for specialized use cases. The article positions RAG as the go-to for dynamic, fact-heavy applications where information changes frequently, and fine-tuning as better suited for mastering a specific style, tone, or deeply ingrained domain logic.

Technical Details: Core Concepts Explained

Fine-Tuning involves taking a pre-trained foundation model (like GPT-4 or Claude) and continuing its training on a specialized, curated dataset. This process adjusts the model's parameters, effectively "baking" new knowledge or behavioral patterns into its neural network. It's powerful for creating a model that consistently outputs in a brand's voice or follows complex, proprietary reasoning chains. However, it's computationally expensive, requires high-quality data, and the resulting model's knowledge is frozen at the point of training.
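
To make "adjusting the model's parameters" concrete, here is a deliberately tiny sketch, not how an LLM is actually fine-tuned. It assumes a two-parameter linear model standing in for the network, a hypothetical `specialized_data` set, and plain gradient descent on squared error. The names `predict`, `fine_tune`, `pretrained` and `tuned` are illustrative only.

```python
def predict(params, x):
    """Toy 'model': a line with weight w and bias b."""
    w, b = params
    return w * x + b


def fine_tune(params, dataset, lr=0.05, epochs=500):
    """Continue training from existing parameters on a new dataset.

    Uses gradient descent on mean squared error. Returns new parameters;
    the original tuple is left untouched.
    """
    w, b = params
    n = len(dataset)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in dataset) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in dataset) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


# Assumed starting point: parameters learned during "pre-training".
pretrained = (1.0, 0.0)

# Hypothetical specialized dataset that follows y = 2x + 1.
specialized_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

tuned = fine_tune(pretrained, specialized_data)
print("before:", predict(pretrained, 4.0))
print("after: ", round(predict(tuned, 4.0), 3))
```

The new behavior lives in `tuned` itself. If the specialized data changes later, the parameters stay as they are until training is run again, which is the "frozen" property described above.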

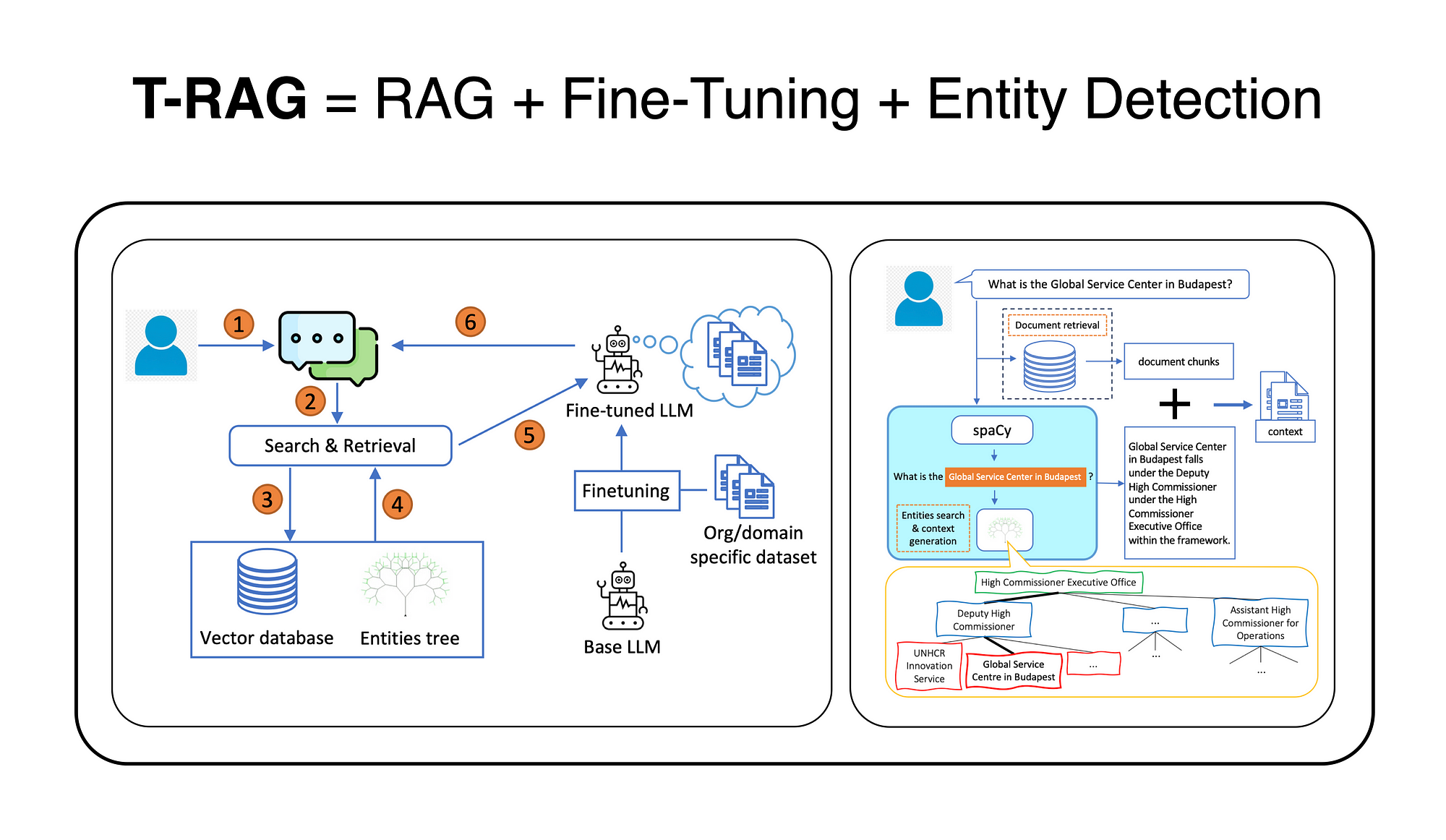

Retrieval-Augmented Generation (RAG), in contrast, is an architectural pattern. It keeps the core LLM unchanged. When a query is received, a retrieval system (often using vector embeddings) searches a connected knowledge base, such as a product catalog, internal policy document, or real-time inventory system, for relevant information. This retrieved context is then fed to the LLM alongside the original query, with an instruction to answer based on the provided documents. This makes the system highly adaptable: updating the knowledge base changes the AI's responses as soon as the new content is indexed, with no retraining, a critical feature for accuracy in fast-moving environments.
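
The retrieve-then-prompt flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the "embedding" is a bag-of-words count standing in for a real embedding model, the knowledge base is an in-memory list of made-up retail snippets, and the final call to an LLM is left out. The function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, knowledge_base, k=2):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(knowledge_base, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:k]


def build_prompt(query, knowledge_base, k=2):
    """Combine retrieved context with the query; an LLM would receive this text."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, knowledge_base, k))
    return (
        "Answer using only the documents below.\n"
        f"Documents:\n{context}\n"
        f"Question: {query}"
    )


# Hypothetical knowledge base entries.
knowledge_base = [
    "The silk scarf is in stock at the Paris store.",
    "Returns are accepted within 30 days with a receipt.",
    "The leather tote is made from vegetable-tanned leather.",
]

query = "Is the silk scarf in stock?"
prompt = build_prompt(query, knowledge_base, k=1)
print(prompt)

# Editing the knowledge base changes the next prompt; the model is untouched.
knowledge_base[0] = "The silk scarf is sold out at the Paris store."
updated_prompt = build_prompt(query, knowledge_base, k=1)
```

In a production system the list would typically be a vector index and `embed` a learned model, but the shape of the flow stays the same: search, assemble context, then generate.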

Retail & Luxury Implications: Strategic Choices for AI Deployment

For retail and luxury AI leaders, this isn't an academic debate but a core strategic decision with direct implications for customer experience, operational efficiency, and brand integrity.

Use RAG for Dynamic, Factual Systems:

- Hyper-Personalized Customer Service: A concierge-style AI agent can use RAG to pull real-time data from a CRM (purchase history, preferences), inventory systems (stock levels across stores), and latest campaign details to answer customer queries accurately.

- Intelligent Product Discovery: A shopping assistant can retrieve information from a constantly updated product catalog with detailed attributes (materials, craftsmanship, sustainability credentials) to provide precise recommendations.

- Internal Knowledge Hubs: Staff-facing agents for store associates can retrieve the latest SOPs, brand guidelines, and shipment data, ensuring consistent, on-brand communication.

RAG's strength here is its agility. As our knowledge graph shows, Shopify is already using AI agents, which almost certainly rely on RAG patterns to interact with merchant data. The recent run of 16 articles on RAG this week alone underscores its move from research to production. Leaders should still heed our prior coverage of its weaknesses, such as the critical "poisoned RAG" vulnerability exposed in April 2026, in which just a few corrupted documents could skew system outputs.

Use Fine-Tuning for Brand Voice and Complex Judgment:

- Consistent Brand Narrative: Fine-tune a model on decades of campaign copy, press releases, and designer notes to create a tool that generates marketing copy, product descriptions, or email campaigns that are unmistakably "in-house."

- Specialized Creative & Design Assistance: Train a model on a specific aesthetic—like a maison's archival sketches and design philosophy—to assist in mood board generation or initial concept ideation that aligns with heritage.

- High-Stakes Decision-Making: For tasks requiring nuanced judgment, like predicting collection success or analyzing complex supplier negotiations, a model fine-tuned on historical internal data may develop more reliable reasoning patterns than a RAG system stitching together retrieved snippets.

Fine-tuning excels at capturing intangible qualities and deep domain expertise. The process is more involved but creates a proprietary asset—a model imbued with your brand's unique DNA.

In practice, the most sophisticated systems use a hybrid approach. A fine-tuned model, optimized for your brand's communication style, can serve as the reasoning engine within a RAG architecture that feeds it real-time data. This matches the entity relationship in our knowledge graph, where RAG frequently uses fine-tuning, which suggests the two are complementary in advanced implementations.
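
As a rough sketch of how the hybrid pattern fits together, the snippet below wires a retriever to a model slot. Everything here is assumed for illustration: `brand_voice_model` is a hypothetical stand-in that only mimics a fine-tuned model by wrapping the retrieved fact in a fixed house tone, and the retriever is a simple word-overlap match rather than a real vector search.

```python
def retrieve(query, knowledge_base):
    """Toy retriever: return the document sharing the most words with the query."""
    q_words = set(query.lower().split())
    return max(knowledge_base, key=lambda doc: len(q_words & set(doc.lower().split())))


def brand_voice_model(prompt):
    """Hypothetical stand-in for a fine-tuned model.

    A real model would generate text; this one just restates the supplied
    context line in a fixed tone.
    """
    fact = prompt.split("Context: ", 1)[1].split("\n", 1)[0]
    return f"With pleasure. {fact}"


def answer(query, knowledge_base, model=brand_voice_model):
    """Hybrid flow: retrieval supplies the facts, the model supplies the voice."""
    context = retrieve(query, knowledge_base)
    prompt = f"Context: {context}\nQuestion: {query}"
    return model(prompt)


# Hypothetical knowledge base entries.
knowledge_base = [
    "The cashmere coat is available in the Milan boutique.",
    "Complimentary alterations take five business days.",
]

reply = answer("Where is the cashmere coat available?", knowledge_base)
print(reply)
```

The design point is the separation of concerns: the `model` argument can be swapped for a differently tuned model without touching the data, and the knowledge base can be refreshed without retraining the model.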