After four years using GitHub Copilot, Cursor, and Claude Code, one developer reports building faster but experiencing cognitive atrophy and rate-limit panic. The account, published on Dev.to, traces the evolution from Copilot's latency frustrations through Cursor's multi-line edits to Claude Code's autonomous agents and their hidden costs.

Key facts

- Developer used AI coding tools for four years.

- Copilot lacked multi-line edits and had latency issues.

- Cursor offered multi-line edits and faster suggestions.

- Claude Code introduced terminal-based autonomous agents.

- Rate limits caused workflow stoppage and panic.

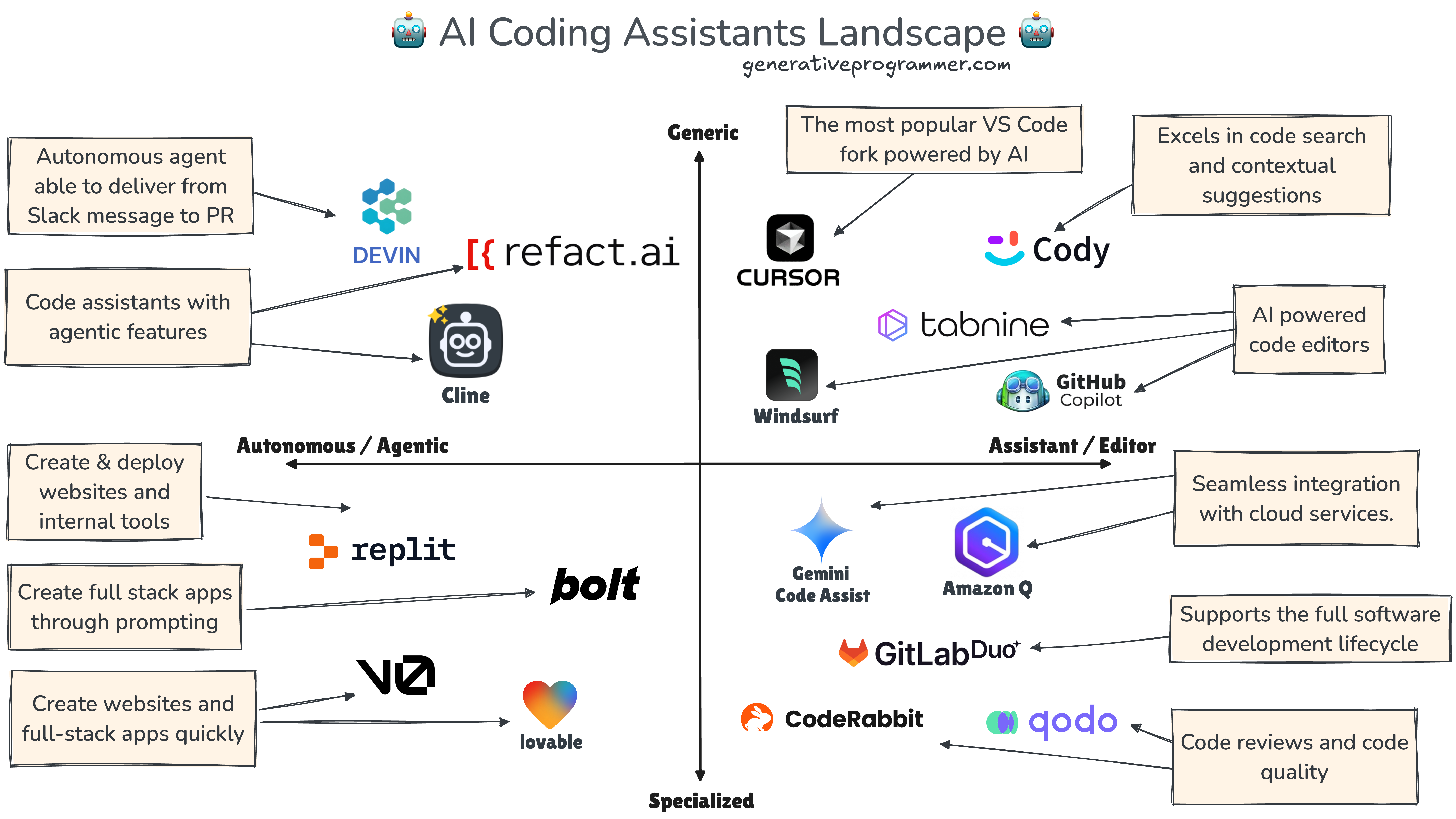

A developer's four-year chronicle of AI-assisted coding, published on Dev.to, describes a pattern familiar to many in the field: speed gains come with real cognitive tradeoffs. The author traces the journey from GitHub Copilot's early days, with its single-line suggestions and frustrating latency, through Cursor's multi-line edits and faster suggestions, to Claude Code's terminal-based autonomous agents.

The account crystallizes around a specific failure mode. According to the Dev.to post: "The day I couldn't work was the day it really hit me. I'd burned through my Claude Code messages for the day, stared at the rate-limit screen, and just... stopped." This moment of tool dependency echoes a broader pattern reported across the industry.

The post notes that Cursor displaced Copilot rapidly ("Cursor ate Copilot's lunch in months") because it addressed specific pain points with multi-line edits and faster suggestions, and because it gave the author a first experience of an AI agent building and debugging code autonomously. Claude Code then raised the bar further with terminal-native agentic workflows, but introduced new constraints: rate limits, context consumption, and token costs.

What sets this account apart is the cognitive dimension. The author explicitly describes reaching for the AI agent before thinking through problems, skimming code that would once have been read line by line, and feeling panic when the tool is unavailable. This is not a complaint about productivity: the author acknowledges shipping more and completing tasks that would have been impossible four years ago. It is an observation about habit formation and skill atrophy that few vendor blog posts will highlight.

The rate-limit trap

Claude Code rate limits were doubled across Pro, Max, Team, and Enterprise plans on May 6, 2026 [per prior gentic.news reporting], but the developer's experience suggests even doubled limits don't solve the psychological dependency. The moment of hitting a rate limit and being unable to work reveals how deeply the tool is integrated into the workflow — and how fragile that integration is.

Cognitive bill coming due

The developer's most pointed observation: "I pay for it with cognition and my cash." This frames AI-assisted coding not as a pure efficiency gain but as a trade with ongoing costs. The cognition part — reduced problem-solving practice, skimming habits, loss of comfort with deep focus — is the harder cost to measure but potentially the more significant one over a career.

The author draws two lessons: innovate fast or be displaced (Cursor vs. Copilot), and keep instincts active because "the day you stop noticing the tradeoff is the day the tradeoff wins."

What to watch

Watch for developer surveys quantifying cognitive skill retention vs. tool dependency over multi-year periods, and for whether Anthropic or Cursor introduces features that explicitly train or preserve developer reasoning skills rather than bypassing them.