Key Takeaways

- A technical blog post describes building a custom tracing system for LLM applications with FastAPI and Ollama, then migrating to MLflow Tracing.

- The author discusses practical challenges with spans, traces, and debugging before concluding that established MLOps tools offer better production readiness.

What Happened

A developer recently documented their experience building a custom LLM tracing system from scratch using FastAPI and Ollama, then ultimately switching to MLflow Tracing. The article provides a firsthand account of the practical challenges involved in monitoring and debugging LLM applications in development environments.

The author began by creating their own tracing infrastructure to track LLM calls, inputs, outputs, and latencies within applications built with FastAPI and Ollama (a popular tool for running local LLMs like Meta's Llama family). This DIY approach allowed for deep customization but revealed significant complexity in properly implementing spans, traces, and debugging workflows.

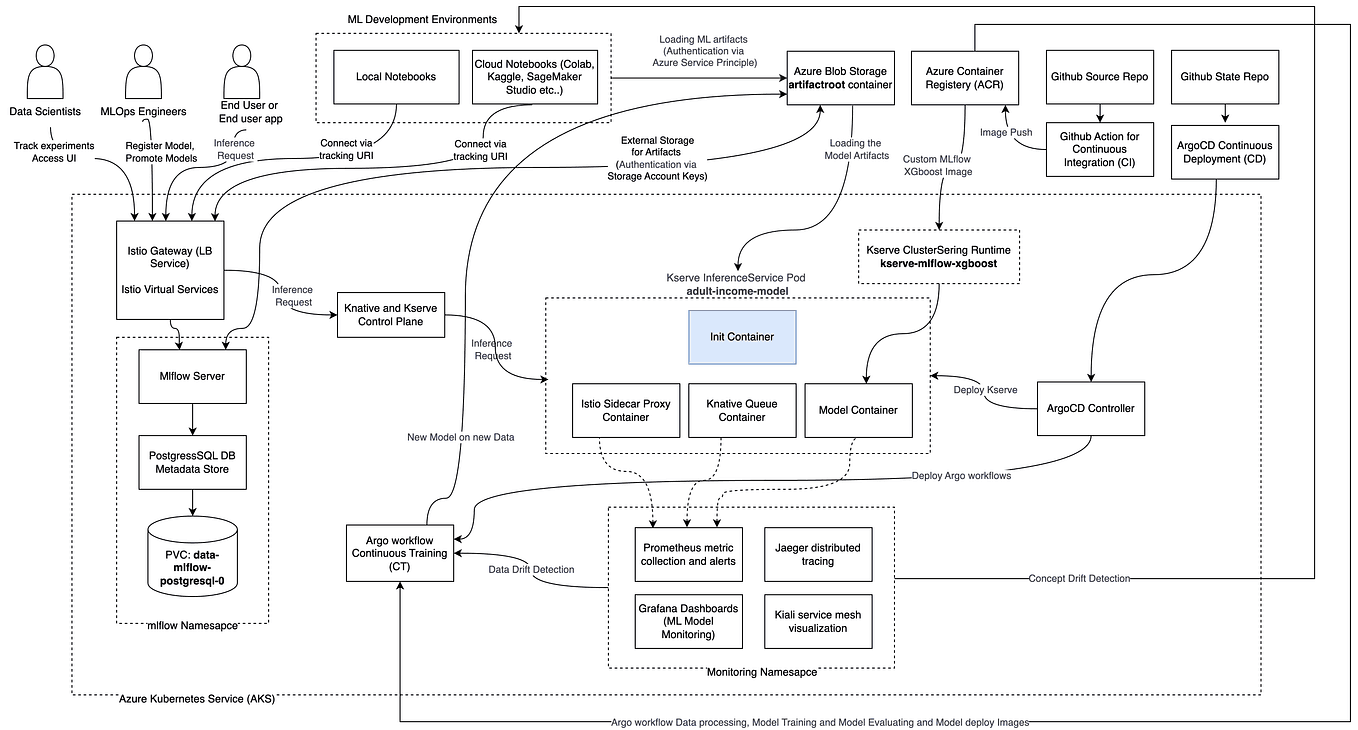

After working with their custom system, the developer evaluated MLflow Tracing, part of the broader MLflow MLOps platform, and found that it provided more robust, production-ready functionality out of the box. The switch marked a shift from building infrastructure to relying on established tools that handle the operational complexity of tracing at scale.

Technical Details

LLM tracing involves capturing the execution flow of LLM-powered applications, similar to distributed tracing in microservices architectures. Key concepts include:

- Spans: Individual units of work (e.g., a single LLM call, a retrieval step, a function execution)

- Traces: Collections of spans that represent an entire request's journey through the system

- Context propagation: Passing trace identifiers across service boundaries so that spans emitted by different services attach to the same trace
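The three concepts above can be sketched with a small data model. This is an illustrative sketch only, not the author's implementation: the `Span` class and the helper names are hypothetical, and the header layout follows the W3C Trace Context `traceparent` format (version, trace ID, parent span ID, flags).

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One unit of work, e.g. a single LLM call or a retrieval step."""
    name: str
    trace_id: str                      # shared by every span in the same trace
    span_id: str = field(default_factory=lambda: secrets.token_hex(8))
    parent_id: Optional[str] = None    # None marks the root span of a trace
    start: float = field(default_factory=time.perf_counter)
    end: Optional[float] = None

    def finish(self) -> None:
        self.end = time.perf_counter()


def to_traceparent(span: Span) -> str:
    """Encode the trace context as a W3C-style `traceparent` header value."""
    return f"00-{span.trace_id}-{span.span_id}-01"


def from_traceparent(header: str, name: str) -> Span:
    """Continue a trace in another service from an incoming header value."""
    _version, trace_id, parent_span_id, _flags = header.split("-")
    return Span(name=name, trace_id=trace_id, parent_id=parent_span_id)


# Service A starts the trace; service B continues it from the header.
root = Span(name="POST /chat", trace_id=secrets.token_hex(16))
header = to_traceparent(root)
child = from_traceparent(header, name="ollama.generate")
```

Here `child` carries the same `trace_id` as `root` and points back to it through `parent_id`, which is what lets a viewer reassemble the request's full journey.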

When working with Ollama (which provides local LLM inference capabilities) and FastAPI (a Python web framework), developers need to instrument their code to capture these traces. The custom approach required manually creating span hierarchies, storing trace data, and building visualization tools—all while maintaining performance and managing storage.

MLflow Tracing offers a standardized alternative with:

- Automatic instrumentation for common LLM frameworks

- Built-in storage and query capabilities

- Integration with MLflow's experiment tracking and model registry

- Visualization tools for analyzing trace data
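For comparison, the manual route in MLflow is the `mlflow.trace` decorator, which records a span for each call to the decorated function. The sketch below assumes a recent MLflow release that includes tracing; the three functions are hypothetical, `generate` is a stub rather than a real Ollama call, and the fallback decorator is an illustrative choice so the code still runs where MLflow is not installed.

```python
try:
    import mlflow
    trace = mlflow.trace              # records one span per decorated call
except (ImportError, AttributeError):
    # Fallback (assumption): a no-op decorator so the app runs without MLflow.
    def trace(func=None, **_kwargs):
        if func is None:
            return lambda f: f
        return func


@trace
def build_prompt(question: str) -> str:
    return f"Answer briefly: {question}"


@trace
def generate(prompt: str) -> str:
    # Placeholder for a real Ollama call (hypothetical stub).
    return f"[model reply to: {prompt}]"


@trace
def answer(question: str) -> str:
    # Calls to other decorated functions are recorded as child spans.
    return generate(build_prompt(question))


reply = answer("What is a span?")
```

With MLflow available, the inputs, outputs, and timing of each call are captured without any hand-written span bookkeeping, and the resulting trace can be inspected in the MLflow UI.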

Retail & Luxury Implications

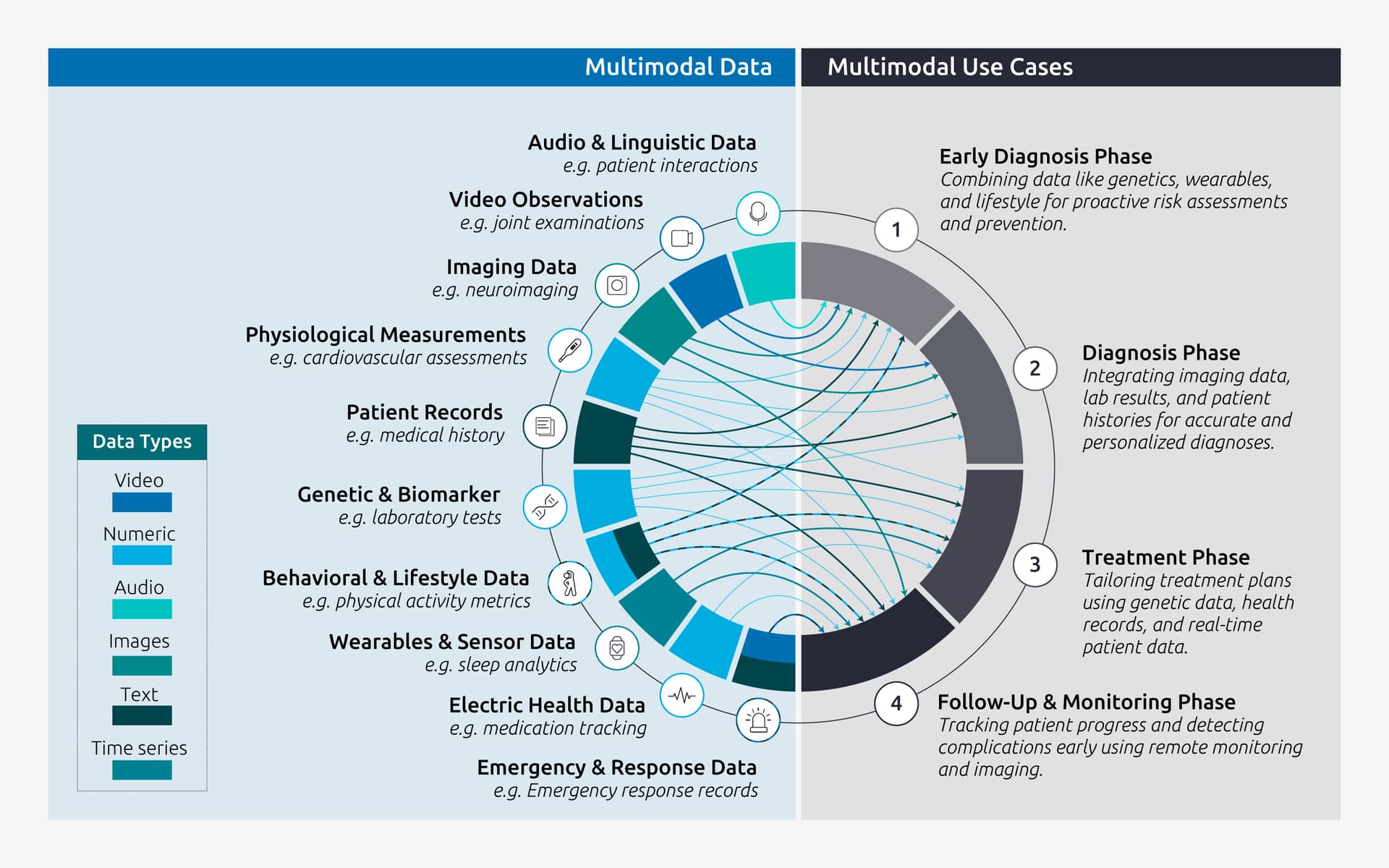

For retail and luxury companies experimenting with LLMs, this developer's journey highlights a critical infrastructure decision point. As brands deploy LLMs for customer service chatbots, product recommendation engines, content generation, and personalized shopping assistants, they face the same monitoring challenges described in the article.

Development vs. Production Readiness: Many luxury brands begin with experimental LLM projects using local models (like those served through Ollama) and lightweight frameworks (like FastAPI). During this exploration phase, custom tooling might suffice. However, as these applications move toward production—handling customer data, supporting concurrent users, and requiring reliability—the limitations of DIY systems become apparent.

Observability for Customer-Facing AI: In luxury retail, where customer experience is paramount, understanding how LLM systems behave in real time is non-negotiable. Tracing helps answer questions like: Why did the personal shopper assistant give that recommendation? How long did the product description generator take? What context was missing when the chatbot misunderstood a request?

MLOps Maturity Progression: The developer's transition from custom code to MLflow reflects a broader pattern in enterprise AI adoption. Retailers often start with proof-of-concept projects using accessible tools, then graduate to industrial-grade platforms as they scale. This is particularly relevant given recent developments in the LLM infrastructure space, including Ollama's expansion to cloud-hosted deployment options.

Implementation Considerations

For retail AI teams evaluating tracing solutions:

- Start with Instrumentation Early: Even in prototypes, implement basic tracing to understand LLM behavior patterns

- Evaluate Against Production Requirements: Consider concurrent user loads, data privacy requirements (especially for luxury client data), and integration with existing monitoring stacks

- Plan for Multi-Model Environments: Luxury brands often experiment with multiple LLMs (proprietary, open-source like Llama, and specialized models); tracing systems should accommodate this diversity

- Align with Data Governance: Ensure trace data containing customer interactions complies with privacy regulations and internal data policies
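As one concrete illustration of the data governance point, trace payloads can be scrubbed before they are stored. The sketch below is a simplified assumption, not a complete PII solution: the `redact` and `scrub_span` helpers are hypothetical, and the two regular expressions only catch obvious email addresses and phone-like digit runs.

```python
import re

# Deliberately simple patterns; real deployments need broader PII detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def scrub_span(span: dict) -> dict:
    """Return a copy of a span with every string field redacted."""
    return {k: redact(v) if isinstance(v, str) else v for k, v in span.items()}


raw = {
    "name": "chatbot_reply",
    "input": "Contact me at jane.doe@example.com or +33 1 23 45 67 89",
    "latency_ms": 412.0,
}
clean = scrub_span(raw)
print(clean["input"])  # Contact me at [EMAIL] or [PHONE]
```

Redacting at write time keeps raw client details out of the trace store entirely, which is usually easier to reason about than filtering at read time.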

gentic.news Analysis

This developer's experience reflects a broader trend in the LLM infrastructure ecosystem: the gap between developer-friendly experimentation tools and production-ready systems. The knowledge graph shows that Llama models (frequently used with Ollama) have faced production scalability challenges: a benchmark last week showed Llama collapsing under a load of only 5 concurrent users. This context makes the tracing discussion particularly relevant, because without proper observability, diagnosing such failures becomes nearly impossible.

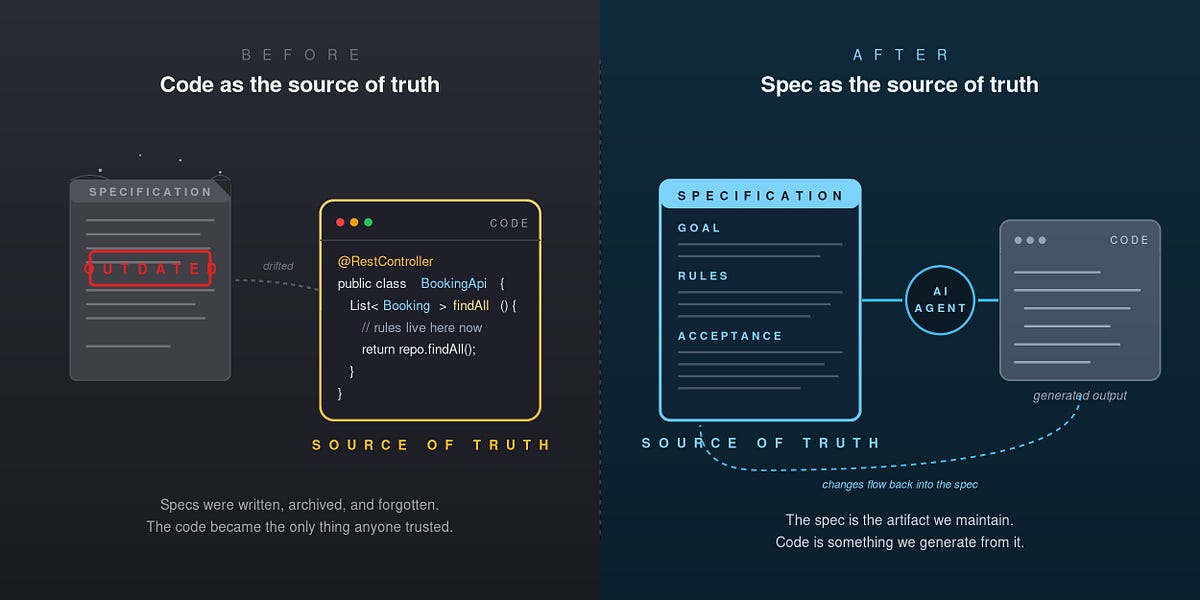

MLOps tools like MLflow are seeing increased adoption as organizations move from LLM prototypes to production systems. This aligns with our recent coverage of the shift "From MLOps to AgentOps" and the growing importance of enterprise feature stores like Redis Feature Form. The trend toward comprehensive AI operations platforms suggests that luxury retailers building serious AI capabilities will need to invest in these foundational systems rather than relying on custom-built solutions.

The relationship between Llama (Meta's model family) and tools like Ollama creates an accessible entry point for retailers experimenting with local LLMs. However, as our comparison of "Ollama vs. vLLM vs. llama.cpp" highlighted, different serving options have distinct performance characteristics. Tracing across these varied deployment options requires flexible systems that can handle diverse inference backends—a strength of platforms like MLflow.

For luxury brands, the key insight is that LLM observability isn't a luxury add-on but a necessity for responsible deployment. When AI systems interact with high-value customers, understanding every interaction's provenance, performance, and potential issues becomes part of delivering the premium experience these brands are known for.