The Innovation

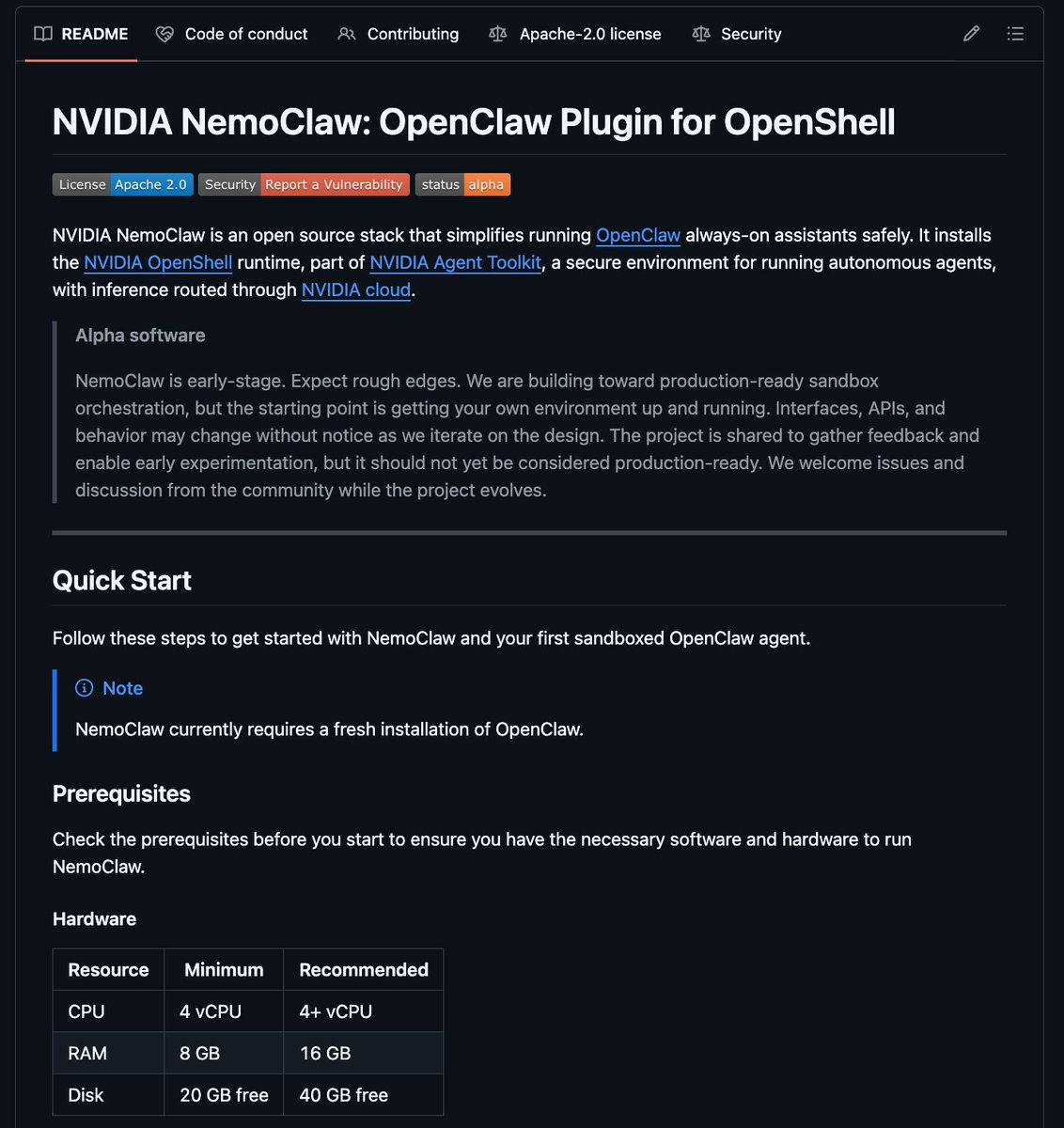

NVIDIA has introduced a new capability within its NeMo Evaluator framework called the "nel-assistant agent skill." It addresses a major bottleneck in deploying applications built on Large Language Models (LLMs): the complex, time-consuming process of evaluation and testing. Traditionally, developers have had to hand-write long, intricate YAML configuration files that define test criteria, expected outputs, and safety guardrails for conversational AI agents. With the new skill, developers configure, run, and monitor these evaluations through natural-language instructions directly within their coding environment, such as the Cursor IDE, or via API.

Built on the established NeMo Evaluator library, the tool automates the creation of production-ready evaluation pipelines. Developers can simply describe what they want to test (e.g., "assess the assistant's ability to handle product return requests politely and accurately") and the system generates the necessary evaluation logic. This significantly reduces configuration overhead from hours or days to minutes, enabling rapid iteration and continuous monitoring of AI agent performance in real-world scenarios.
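To make "evaluation logic" concrete, here is a minimal sketch of the kind of structured test definition that a natural-language request such as the product-return example stands in for. It is not the NeMo Evaluator schema or API: `EvalCase`, `run_case`, `pass_rate`, and `stub_agent` are hypothetical names, the keyword checks are a deliberately crude stand-in for real scoring, and the "30 days" return window is an invented placeholder.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EvalCase:
    """One test prompt plus the checks its reply must satisfy (hypothetical schema)."""
    name: str
    prompt: str
    must_include: list[str] = field(default_factory=list)  # phrases the reply needs
    must_exclude: list[str] = field(default_factory=list)  # phrases the reply must avoid


def run_case(agent: Callable[[str], str], case: EvalCase) -> bool:
    """Return True when the agent's reply passes every keyword check in the case."""
    reply = agent(case.prompt).lower()
    has_required = all(p.lower() in reply for p in case.must_include)
    has_banned = any(p.lower() in reply for p in case.must_exclude)
    return has_required and not has_banned


def pass_rate(agent: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Fraction of cases passed; 0.0 for an empty suite."""
    if not cases:
        return 0.0
    return sum(run_case(agent, c) for c in cases) / len(cases)


def stub_agent(prompt: str) -> str:
    """Stand-in for a real chatbot endpoint, used only so the sketch runs."""
    return "We are sorry to hear that. You may return the item within 30 days."


# Illustrative cases for "handle product return requests politely and accurately".
RETURN_CASES = [
    EvalCase(
        name="polite_return",
        prompt="I want to return the scarf I bought last week.",
        must_include=["return", "sorry"],
        must_exclude=["not our problem"],
    ),
    EvalCase(
        name="states_return_window",
        prompt="How long do I have to return an item?",
        must_include=["30 days"],  # placeholder policy, not a real brand rule
    ),
]

if __name__ == "__main__":
    print(f"pass rate: {pass_rate(stub_agent, RETURN_CASES):.0%}")
```

Even this toy version shows where the manual effort goes: every scenario needs a prompt, pass criteria, and exclusions written out by hand, which is the work the skill is described as generating from a plain-language request.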

Why This Matters for Retail & Luxury

For luxury brands, conversational AI is no longer a novelty but a critical component of the digital client experience. The primary applications are:

- AI-Powered Clienteling Assistants: Virtual stylists that provide personalized product recommendations, style advice, and brand storytelling through chat interfaces on websites, apps, or messaging platforms (WhatsApp, WeChat).

- High-Touch Customer Service Chatbots: Handling intricate inquiries about product care, store appointments, order status, and returns with the brand's signature tone of voice.

- Internal Knowledge Assistants: Helping store associates and call center agents quickly access information on inventory, client history, or product details.

The challenge has been ensuring these agents are consistently accurate, brand-aligned, and safe before and after launch. A hallucination about product materials or a tone-deaf response can damage brand equity. This NVIDIA tool directly empowers the Digital, E-commerce, and CRM teams to rigorously and continuously test these agents, ensuring they meet the high standards expected by luxury clientele.

Business Impact & Expected Uplift

Implementing robust, automated evaluation for conversational AI drives several key business metrics:

- Increased Conversion & AOV: A well-tested, reliable virtual stylist can effectively cross-sell and upsell. Industry benchmarks from retail AI deployments suggest that effective conversational assistants can drive a 5-15% increase in average order value (AOV) and improve conversion rates on assisted journeys by 10-30% (sources: Gartner, Retail TouchPoints).

- Reduced Operational Cost: Automating the evaluation cycle reduces the manual QA burden on engineering and product teams. More importantly, by catching errors and biases pre-launch, it minimizes the volume of customer service escalations and costly brand reputation incidents.

- Enhanced Client Loyalty: Consistent, high-quality AI interactions build trust and digital engagement, supporting client retention.

- Time to Value: The core promise of this tool is acceleration. By cutting evaluation setup time from days to minutes, brands can shorten the development cycle for new AI features from quarters to weeks, allowing for faster testing of new use cases (e.g., a chatbot for a new fragrance launch).

Implementation Approach

- Technical Requirements: The skill is part of the NVIDIA NeMo framework, which typically requires GPU-accelerated infrastructure (on-premise, cloud, or via NVIDIA DGX Cloud). Teams need familiarity with Python and LLMOps. The primary data needed to define test cases is a corpus of example dialogues, brand guidelines, and product catalogs.

- Complexity Level: Medium. While the evaluation configuration is simplified, integrating the NeMo Evaluator into a full MLOps pipeline and connecting it to live agent endpoints requires engineering effort. It is not a standalone SaaS product but a developer tool.

- Integration Points: The evaluation system must connect to:

  - The LLM Agent Endpoint (e.g., a custom GPT, the Claude API, or an in-house model powering the chatbot).

  - Data Sources such as the Product Information Management (PIM) system for factual checks and the Customer Data Platform (CDP) for personalized context (with appropriate privacy gates).

  - Monitoring/Dashboarding tools (e.g., Datadog, Grafana) that receive the streamed evaluation results.

- Estimated Effort: For a team with existing LLM applications, integrating a basic evaluation pipeline could take 4-8 weeks. Building a comprehensive, automated CI/CD pipeline for AI agents with rigorous safety and quality tests is a quarter-long initiative.
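The integration points listed above can be sketched as a single loop: call the agent endpoint, score each reply, and emit one structured record per result for a dashboard to ingest. This is an illustrative outline under stated assumptions, not the NeMo Evaluator interface: `evaluate_and_emit`, the `agent_eval_score` metric name, and the record fields are all hypothetical, and the agent and scorer are passed in as plain callables so any endpoint client can be substituted.

```python
import json
import time
from typing import Callable, Iterable


def evaluate_and_emit(
    agent: Callable[[str], str],
    prompts: Iterable[str],
    score: Callable[[str, str], float],
    sink: Callable[[str], None] = print,
) -> float:
    """Call the agent on each prompt, score the reply, and emit one JSON line per result.

    Returns the mean score, or 0.0 when no prompts are given.
    """
    scores = []
    for index, prompt in enumerate(prompts):
        started = time.perf_counter()
        reply = agent(prompt)  # in practice, an HTTP call to the agent endpoint
        latency_ms = (time.perf_counter() - started) * 1000.0
        value = score(prompt, reply)
        scores.append(value)
        # The record carries an index rather than the prompt text, so that
        # customer wording does not leak into monitoring tools.
        sink(json.dumps({
            "metric": "agent_eval_score",  # hypothetical metric name
            "case_index": index,
            "score": value,
            "latency_ms": round(latency_ms, 2),
        }))
    return sum(scores) / len(scores) if scores else 0.0


if __name__ == "__main__":
    mean = evaluate_and_emit(
        agent=lambda prompt: "Your appointment is confirmed.",
        prompts=["Book a store appointment", "Confirm my fitting"],
        score=lambda prompt, reply: float("confirmed" in reply),
    )
    print("mean score:", mean)
```

Passing `sink=some_list.append` instead of the default `print` collects the JSON lines in memory, which is convenient in CI; a log shipper or metrics agent would take the place of `print` in a live setup.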

Governance & Risk Assessment

- Data Privacy: Evaluation runs may process synthetic or anonymized customer interaction data. It is crucial to ensure no personally identifiable information (PII) is used in testing without consent and that all data governance policies (GDPR, CCPA) are strictly followed. Evaluations should run in secure, controlled environments.

- Model Bias & Brand Safety: This is the tool's greatest value for luxury. Evaluation criteria must be meticulously designed to detect:

  - Cultural & Tone Insensitivity: Ensuring language is appropriate across global markets.

  - Body Type & Diversity Bias: Preventing the assistant from making exclusionary recommendations.

  - Factual Hallucinations: Critical for product details (e.g., "this bag is calfskin" when it's lambskin).

  - Brand Voice Deviation: Maintaining a consistent, luxurious tone.

- Maturity Level: Production-ready for technical teams. The underlying NeMo framework is mature, and this skill is a productivity enhancement on top of it. It is proven within the AI/ML engineering community but requires skilled implementation.

- Honest Assessment: This is not a plug-and-play solution for business users. It is a powerful enabler for engineering teams already deploying LLM agents. For a luxury brand with no in-house LLMOps capability, partnering with a specialized AI vendor that uses such evaluation frameworks internally is the recommended path. The technology is ready; organizational readiness is the deciding factor.
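Two of the governance points above lend themselves to a small illustration: checking a reply's material claim against the catalog (the calfskin versus lambskin case) and stripping obvious PII before a transcript enters a test set. The sketch below is a simplified assumption-laden example, not a production safeguard: `CATALOG`, `MATERIALS`, the product ID `bag-001`, and the function names are hypothetical, the fact check only matches single-word material names, and the redaction covers email addresses only.

```python
import re

# Hypothetical catalog extract; a real pipeline would read this from the PIM.
CATALOG = {
    "bag-001": {"material": "lambskin"},
}

# Illustrative vocabulary of materials the checker knows how to spot.
MATERIALS = {"lambskin", "calfskin", "canvas", "suede"}

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def material_claims(reply: str) -> set[str]:
    """Materials named in the reply, matched as whole words, case-insensitively."""
    words = set(re.findall(r"[a-z]+", reply.lower()))
    return words & MATERIALS


def is_material_consistent(product_id: str, reply: str) -> bool:
    """True when the reply names no material other than the catalog's.

    A reply that mentions no material at all also passes this check.
    """
    expected = CATALOG[product_id]["material"]
    return material_claims(reply) <= {expected}


def redact_emails(text: str) -> str:
    """Replace email addresses with a placeholder before text enters a test set."""
    return EMAIL_RE.sub("[EMAIL]", text)


if __name__ == "__main__":
    print(is_material_consistent("bag-001", "This bag is calfskin."))
    print(is_material_consistent("bag-001", "This bag is crafted from lambskin."))
    print(redact_emails("Please write to anna@example.com about my order."))
```

Keyword matching of this kind catches only the most literal errors; names, phone numbers, addresses, and paraphrased product claims need dedicated tooling, which is part of why the evaluation criteria have to be designed carefully rather than assumed.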
