A single X post from @kimmonismus claims that an upcoming Gemini Flash model achieves 92% of GPT-5.5's coding performance at 15-20x lower inference cost. The rumor, if true, would represent a dramatic efficiency leap over OpenAI's current flagship.

Key facts

- 92% of GPT-5.5 coding/reasoning performance claimed

- 15-20x lower inference cost than GPT-5.5

- Sub-200ms latency for most queries

- Source: single X post from @kimmonismus

- No official confirmation from Google

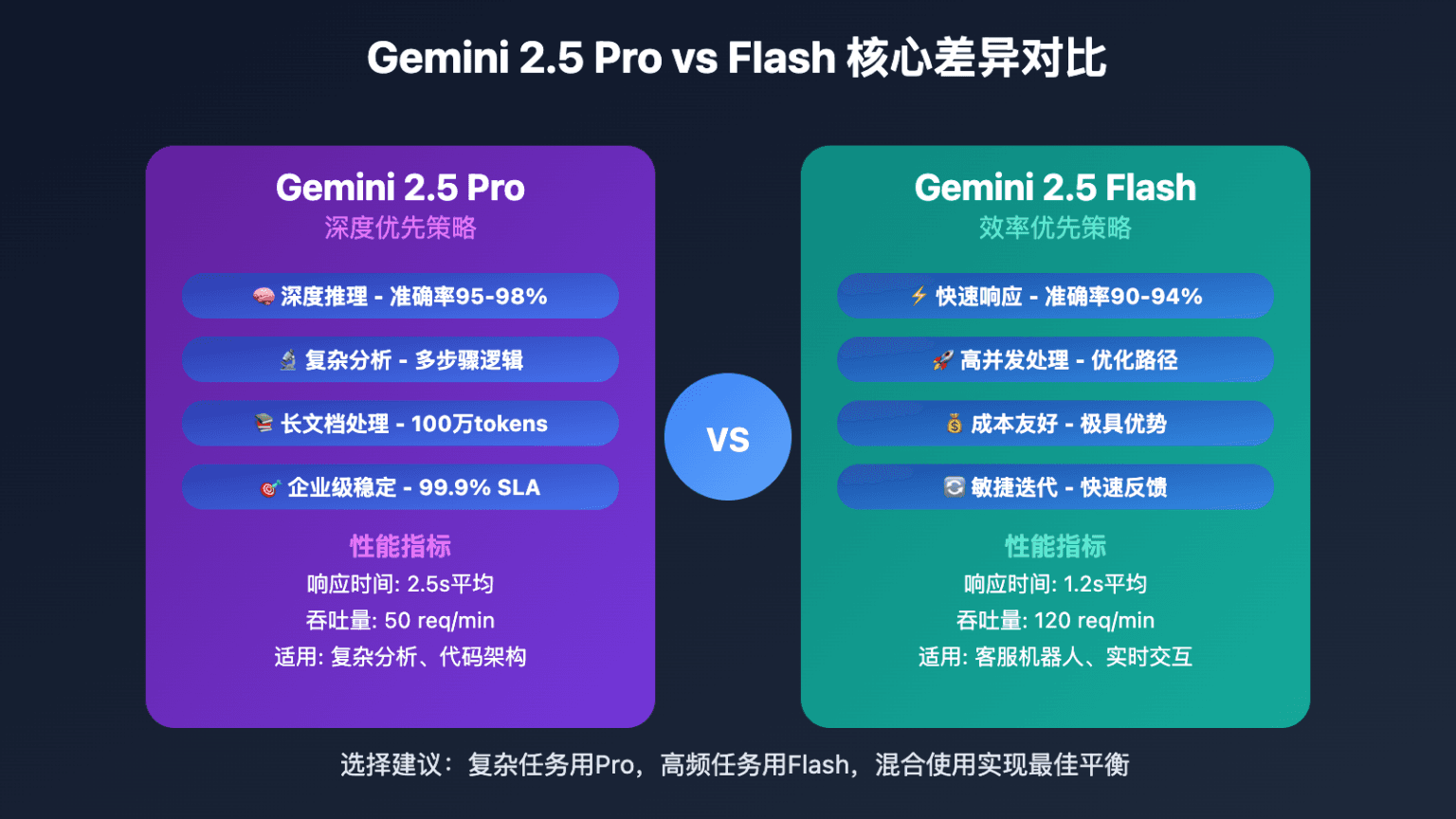

A single X post by @kimmonismus has ignited speculation about an upcoming Gemini Flash model that allegedly delivers 92% of GPT-5.5's coding and reasoning performance at 15-20x lower inference cost, with sub-200ms latency for most queries [According to @kimmonismus]. If accurate, the claim would mark a significant shift in the cost-performance frontier for leading models.

Google has not confirmed any details, and the source remains a single unverified post. However, the numbers align with broader industry trends: model compression techniques (e.g., distillation, quantization) have been narrowing the gap between large and small models, and Google's Gemini architecture has previously shown strong efficiency gains in smaller variants.

The rumored 92% figure is particularly striking because it suggests a near-competitive coding ability at a fraction of the cost. For comparison, GPT-5.5 is widely considered the current leader in coding benchmarks, with SWE-Bench scores near 80%. If Gemini Flash can match 92% of that capability, it would challenge the assumption that only massive models can handle complex reasoning tasks.
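As a rough illustration, if the 92% figure were read as a straight ratio of SWE-Bench scores (an assumption: the post names no benchmark) and GPT-5.5 scores about 80%, the implied Gemini Flash score would be:

$$
0.92 \times 80\% = 73.6\%
$$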

However, the lack of specific benchmark names or methodologies makes the claim difficult to evaluate. The 15-20x cost advantage is also vague: it could refer to API pricing, training cost, or inference compute, each of which has different implications.
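A minimal sketch of what the rumored ratios would imply, assuming the 15-20x figure is a ratio of per-token inference prices and the 92% figure is a simple ratio of benchmark scores. Neither reading is confirmed by the post, and the function names below are hypothetical helpers for illustration only.

```python
# Hypothetical sketch: converts the rumored ratios into relative figures.
# Assumes "92%" is a simple ratio of benchmark scores and "15-20x lower cost"
# is a ratio of per-token inference prices; the source confirms neither.


def cost_fraction(cost_multiple: float) -> float:
    """Fraction of the baseline cost remaining if a model is `cost_multiple`x cheaper."""
    return 1.0 / cost_multiple


def performance_per_cost_gain(relative_performance: float, cost_multiple: float) -> float:
    """How many times more benchmark performance per unit cost than the baseline."""
    return relative_performance * cost_multiple


if __name__ == "__main__":
    # Evaluate both ends of the rumored 15-20x range.
    for multiple in (15, 20):
        print(
            f"{multiple}x cheaper -> {cost_fraction(multiple):.1%} of baseline cost, "
            f"{performance_per_cost_gain(0.92, multiple):.1f}x performance per unit cost"
        )
```

Under these assumptions, a 15x reduction leaves about 6.7% of the baseline cost and a 20x reduction leaves 5%. Combined with 92% relative performance, that works out to roughly 13.8x to 18.4x more benchmark performance per unit cost.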

The efficiency race

This rumor fits a pattern: Anthropic's Claude 3.5 Haiku and OpenAI's GPT-4o mini have already demonstrated that smaller models can deliver competitive performance at lower cost. If Google can push that ratio further, it could reshape enterprise deployment decisions, where cost per token is a key constraint.

What's missing

The post does not specify which benchmarks were used, how the 92% figure was measured, or when the model would be released. Without official confirmation or independent validation, the claim should be treated with skepticism.

What to watch

Watch for an official announcement from Google, whether at the upcoming Google I/O or in a blog post. Also monitor independent benchmark evaluations (e.g., SWE-Bench, HumanEval) for Gemini Flash results. If the model appears on the LMSYS Chatbot Arena leaderboard, that would provide early validation or refutation.