GitHub has reportedly released a new developer tool, referred to as "Caveman," that is claimed to reduce AI inference costs by up to 75%. The claim comes from a tweet by developer Gurisingh, who said the tool is being "mass-released" and that many developers are "sleeping on it."

Key Takeaways

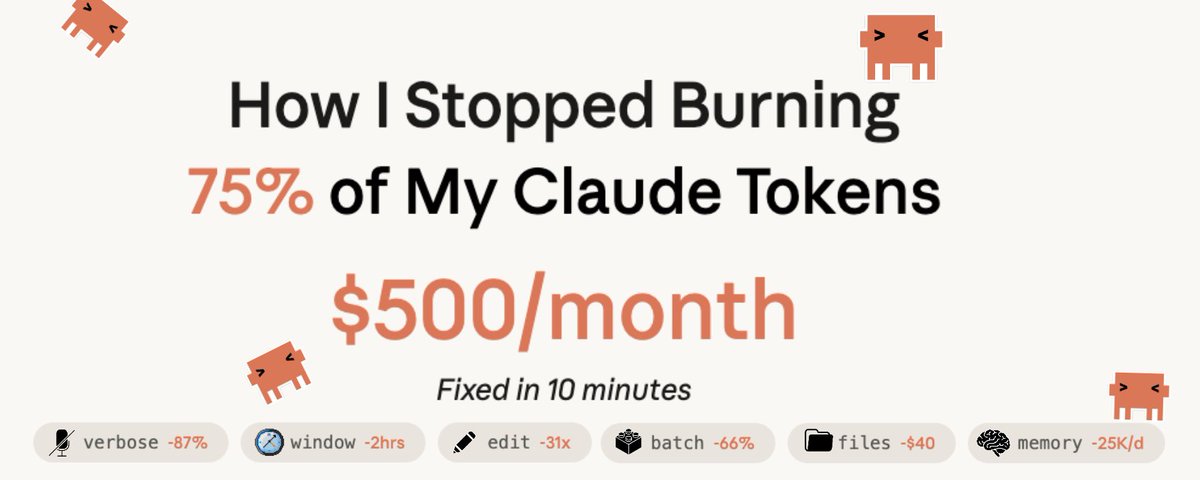

- GitHub has reportedly released a tool named 'Caveman' that is claimed to cut AI inference costs for developers by up to 75%.

- The announcement, made via a developer's tweet, suggests a focus on optimizing resource usage for AI-powered applications.

What Happened

On April 15, 2026, developer Gurisingh tweeted that GitHub had released a tool designed to significantly cut the cost of running AI models. The tweet gave the tool's codename, "Caveman," and claimed a cost reduction of up to 75%. Its tone suggests a substantial, under-the-radar release from GitHub's engineering teams aimed at the practical economics of AI development.

Context

As AI integration into software development becomes standard, the operational cost of inference (running trained models to generate code, text, or other outputs) has become a major bottleneck. Developers and companies building with large language models (LLMs) from providers like OpenAI and Anthropic, or with self-hosted open-source models, face escalating bills. Tools that optimize model inference, for example through quantization, caching, pruning, or request batching, have become critical for scaling AI applications profitably.
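Of the techniques listed above, caching is the simplest to illustrate. The following is a minimal sketch of an exact-match response cache, not a description of how "Caveman" works. `CachedClient` and `fake_model` are hypothetical names invented for this example, and `fake_model` stands in for a paid inference call.

```python
import hashlib
from typing import Callable, Dict


class CachedClient:
    """Wraps a model-calling function with an exact-match response cache."""

    def __init__(self, call_model: Callable[[str], str]) -> None:
        self._call_model = call_model
        self._cache: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def complete(self, prompt: str) -> str:
        # Normalise case and whitespace so trivially different prompts share a key.
        normalised = " ".join(prompt.lower().split())
        key = hashlib.sha256(normalised.encode("utf-8")).hexdigest()
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        # Cache miss: this is the only path that would incur inference cost.
        self.misses += 1
        result = self._call_model(prompt)
        self._cache[key] = result
        return result


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a paid inference call; not a real API.
    return f"echo: {prompt}"


client = CachedClient(fake_model)
for p in ["What is CI?", "what is  ci?", "Explain caching", "What is CI?"]:
    client.complete(p)

print(client.hits, client.misses)  # prints "2 2"
```

In this toy run, half of the requests never reach the model. Real savings depend entirely on how repetitive the traffic is, and lowercasing prompts is only safe when case does not change the meaning of the request.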

GitHub, owned by Microsoft, is a central platform for software development and has been deeply integrating AI through its Copilot suite. A cost-optimization tool aligns with its strategy to lower barriers for developers building AI-powered features. While official documentation or a product page for "Caveman" was not linked in the source tweet, such a release would fit into GitHub's existing ecosystem of developer tools.

What We Don't Know Yet

The source is a single tweet, so many technical and commercial details are absent:

- Official Name & Availability: Is "Caveman" the final product name or an internal codename? Is it a standalone product, a feature within GitHub Actions, or part of GitHub Copilot?

- Technical Mechanism: How does it achieve the claimed 75% reduction? Possible methods include model distillation, dynamic scaling, intelligent caching of common queries, or integration with cheaper hardware providers.

- Supported Models & Providers: Does it work with any AI API (OpenAI, Anthropic, Google, Mistral) or only with GitHub's own models? Does it optimize self-hosted models?

- Pricing: Is "Caveman" a free tool, a paid add-on, or included in existing GitHub subscription tiers?

gentic.news Analysis

If confirmed, this move would be a logical and aggressive play by GitHub to own the infrastructure layer of AI-powered development. If the 75% cost reduction holds in practice, it would dramatically alter the unit economics for startups and enterprises building on LLMs. GitHub, backed by Microsoft's Azure cloud and AI stack, is well positioned to offer such optimization, potentially by leveraging Azure's proprietary inference hardware (like Maia chips) or deep discounts on bulk model access.
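To make the unit-economics point concrete, here is a small worked calculation. Every figure in it (request volume, tokens per request, price per million tokens) is a hypothetical assumption chosen for round numbers, not data from GitHub or any provider. Only the 75% figure comes from the tweet.

```python
def monthly_cost(requests_per_day: int, tokens_per_request: int,
                 usd_per_million_tokens: float, days: int = 30) -> float:
    """Return the estimated monthly inference bill in USD."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical workload: 50,000 requests/day, 2,000 tokens each, $5 per 1M tokens.
baseline = monthly_cost(50_000, 2_000, 5.0)

# Apply the claimed (unverified) 75% reduction.
claimed_reduction = 0.75
reduced = baseline * (1 - claimed_reduction)

print(f"baseline: ${baseline:,.0f}/month")            # baseline: $15,000/month
print(f"reduced:  ${reduced:,.0f}/month")             # reduced:  $3,750/month
print(f"saved:    ${baseline - reduced:,.0f}/month")  # saved:    $11,250/month
```

Under these assumptions the bill drops from $15,000 to $3,750 a month. The same function can be rerun with a team's own traffic and pricing to see what a smaller, more realistic reduction would be worth.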

This follows a clear trend of platform providers moving to reduce the "runtime tax" of AI. In late 2025, we covered Vercel's launch of "AI SDK Optimize," which aimed to cut latency and cost for frontend AI features. GitHub's entry suggests a focus on the broader development lifecycle. By reducing the cost of running AI, GitHub would make its core product, Copilot, and the ecosystem built around it more defensible. It would also directly counter efforts from cloud rivals such as AWS with its Inferentia chips and Google with its Vertex AI optimization tools.

The success of "Caveman" will hinge on its ease of integration and transparency. Developers are wary of vendor lock-in; if this tool only works seamlessly within the GitHub/Microsoft ecosystem, it may see limited adoption from teams using multi-cloud or other CI/CD platforms. However, given GitHub's market dominance, even a partially effective tool could become a de facto standard, further cementing its role as the hub for modern, AI-assisted software engineering.

Frequently Asked Questions

What is GitHub Caveman?

GitHub Caveman appears to be a newly released developer tool from GitHub focused on optimizing AI inference costs. Based on the initial announcement, it claims to reduce these costs by up to 75%, though specific technical details and official documentation are not yet available from the primary source.

How does Caveman reduce AI costs?

The exact technical mechanism is not specified in the announcement. Typically, such cost reductions are achieved through methods like model quantization (reducing numerical precision), pruning (removing unnecessary model weights), efficient request batching, caching of frequent or similar queries, or leveraging cheaper, specialized hardware for inference.
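As an illustration of what "reducing numerical precision" means, the sketch below applies symmetric 8-bit quantization to a handful of weights using only the Python standard library. Nothing in the source says Caveman uses quantization. The function names and sample weights are assumptions made for this example, and production systems rely on dedicated libraries rather than code like this.

```python
from array import array


def quantize_int8(weights):
    """Map float weights to signed 8-bit integers plus one shared scale factor."""
    # Fall back to a scale of 1.0 if every weight is zero.
    scale = max(abs(w) for w in weights) / 127 or 1.0
    q = array("b", (max(-127, min(127, round(w / scale))) for w in weights))
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the quantized values."""
    return [v * scale for v in q]


# Hypothetical weights stored as 32-bit floats.
weights = array("f", [0.12, -0.5, 0.33, 0.9, -0.07])
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

bytes_fp32 = weights.itemsize * len(weights)
bytes_int8 = q.itemsize * len(q)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"storage: {bytes_fp32} bytes -> {bytes_int8} bytes")
print(f"largest rounding error: {max_error:.5f}")
```

Storing each weight in one byte instead of four cuts memory for the weights to a quarter, at the price of a small rounding error per weight. Whether that translates into a comparable drop in inference cost depends on the hardware and serving stack.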

Is GitHub Caveman free to use?

Pricing details were not included in the initial tweet. The tool could be a free feature to enhance the GitHub platform's value, a paid add-on service, or bundled into existing paid plans like GitHub Copilot for Business. Official pricing will need to be confirmed from GitHub's product announcements.

Which AI models does Caveman work with?

The compatibility list is unknown. It could be optimized specifically for GitHub Copilot's underlying models, support a range of popular third-party APIs (OpenAI GPT, Anthropic Claude), or work with open-source models deployed by developers. Its broad utility will depend on this compatibility.