Google has open-sourced TimesFM, a 200-million-parameter foundation model for time series forecasting that operates zero-shot: it requires no training or fine-tuning on a user's specific data. The model, which the company has also integrated as an official product feature within BigQuery, aims to eliminate the weeks of custom pipeline development, feature engineering, and hyperparameter tuning typically associated with building forecasting models.

What's New

TimesFM is a decoder-only foundation model pretrained on a massive, real-world corpus of time series data. Its core promise is plug-and-play forecasting: users provide historical sequential data with timestamps, and the model returns quantile forecasts without any additional training. The model is explicitly built for common business forecasting tasks like predicting sales trends, energy consumption, and demand signals.

Technical Details

The model architecture is relatively lean for a foundation model, with 200 million parameters and a 16,000-token context window. This context window determines how much historical data the model can consider when making a prediction. A key built-in feature is its ability to produce quantile forecasts, which provide a range of probable outcomes (e.g., the 10th, 50th, and 90th percentiles) rather than a single point estimate, giving practitioners a measure of prediction uncertainty.
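To make the idea of a quantile forecast concrete, here is a minimal Python sketch of how a practitioner might check whether the band between the 10th and 90th percentiles is well calibrated. The numbers are made up for illustration and are not TimesFM output; the helper name `interval_coverage` is hypothetical. If the quantiles are reliable, roughly 80% of observed values should land inside the P10 to P90 band over a long enough evaluation period.

```python
from typing import Sequence


def interval_coverage(actuals: Sequence[float],
                      lower: Sequence[float],
                      upper: Sequence[float]) -> float:
    """Return the fraction of actual values that fall inside [lower, upper]."""
    if not (len(actuals) == len(lower) == len(upper)):
        raise ValueError("all sequences must have the same length")
    if len(actuals) == 0:
        raise ValueError("need at least one observation")
    hits = sum(lo <= y <= hi for y, lo, hi in zip(actuals, lower, upper))
    return hits / len(actuals)


# Illustrative quantile forecast for four future steps (made-up values,
# keyed by quantile level; NOT produced by TimesFM).
forecast = {
    0.1: [90.0, 92.0, 95.0, 97.0],
    0.5: [100.0, 103.0, 106.0, 110.0],
    0.9: [110.0, 115.0, 119.0, 125.0],
}
actual = [104.0, 116.0, 101.0, 111.0]

# Share of observations inside the nominal 80% band (P10 to P90).
coverage = interval_coverage(actual, forecast[0.1], forecast[0.9])
print(f"P10-P90 coverage: {coverage:.2f}")
```

Four points are far too few to judge calibration; the same check only becomes meaningful over many forecast windows.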

The most significant technical claim is its zero-shot capability. According to the announcement, the model's performance stems from its pretraining on a vast and diverse dataset of real-world time series, allowing it to generalize to unseen data distributions without task-specific fine-tuning.

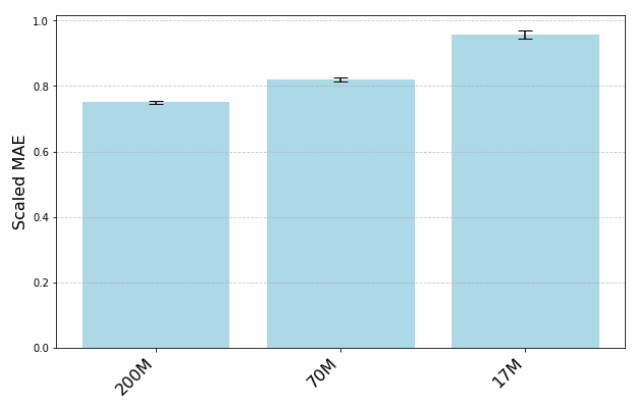

How It Compares

The approach positions TimesFM against two existing paradigms:

- Traditional Statistical Models (e.g., ARIMA, Prophet): These often require manual parameter tuning and struggle with complex, multivariate patterns.

- Custom Deep Learning Models: These can capture complex patterns but require significant labeled data, computational resources, and ML engineering expertise to train and maintain.

TimesFM attempts to offer the pattern-recognition power of deep learning with the accessibility of a pretrained, general-purpose tool. Its integration into BigQuery suggests Google is targeting an enterprise audience that already manages data within its cloud ecosystem, offering forecasting as a direct SQL function.

What to Watch

The primary unknown is benchmark performance. The source tweet does not cite specific metrics on standard time series datasets (e.g., M4, M5, or ETTh). The success of a zero-shot foundation model hinges on its out-of-the-box accuracy across diverse domains compared to fine-tuned baselines. Practitioners should test it against their existing pipelines to validate its "no pipeline drama" claim.
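One way to run that test is to score any candidate forecast against a simple baseline on held-out data. The sketch below uses a seasonal-naive baseline and mean absolute error; both are common choices, not something the announcement prescribes. The `candidate` list is a hypothetical stand-in for whatever a model such as TimesFM would return, and all values are invented for illustration.

```python
from typing import List, Sequence


def seasonal_naive(history: Sequence[float], horizon: int, season: int) -> List[float]:
    """Forecast by repeating the last full season of history."""
    if season <= 0:
        raise ValueError("season must be positive")
    if horizon < 0:
        raise ValueError("horizon must be non-negative")
    if len(history) < season:
        raise ValueError("history must contain at least one full season")
    last_season = list(history[-season:])
    return [last_season[i % season] for i in range(horizon)]


def mae(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute error between two equal-length sequences."""
    if len(actual) != len(predicted) or len(actual) == 0:
        raise ValueError("sequences must be non-empty and of equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


# Invented data: two seasons of history (season length 3), four held-out points.
history = [10.0, 20.0, 30.0, 12.0, 22.0, 32.0]
held_out = [13.0, 21.0, 35.0, 14.0]

baseline = seasonal_naive(history, horizon=len(held_out), season=3)

# Hypothetical stand-in for a model's point forecast (NOT real TimesFM output).
candidate = [13.0, 22.0, 33.0, 13.0]

baseline_mae = mae(held_out, baseline)
candidate_mae = mae(held_out, candidate)
print(f"baseline MAE={baseline_mae:.2f}, candidate MAE={candidate_mae:.2f}")
```

A zero-shot model that cannot beat a seasonal-naive baseline on a team's own held-out data is unlikely to replace a tuned pipeline, whatever its headline benchmarks turn out to be.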

Another consideration is the 16k context window. For very long-range forecasts or high-frequency data, this may be a limiting factor, requiring careful feature windowing or aggregation.
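A minimal sketch of the two obvious workarounds, truncation and aggregation, is shown below. It assumes the limit applies to raw data points (one point per token), which the announcement does not spell out; the function names and the 16,000 default are illustrative, not part of any TimesFM API.

```python
from typing import List, Sequence


def truncate_to_context(series: Sequence[float], max_len: int = 16_000) -> List[float]:
    """Keep only the most recent `max_len` points."""
    if max_len <= 0:
        raise ValueError("max_len must be positive")
    return list(series[-max_len:])


def downsample_mean(series: Sequence[float], factor: int) -> List[float]:
    """Average consecutive blocks of `factor` points.

    Leading points that do not fill a whole block are dropped so that the
    most recent observations always land in a complete block.
    """
    if factor <= 0:
        raise ValueError("factor must be positive")
    remainder = len(series) % factor
    trimmed = list(series[remainder:])
    return [
        sum(trimmed[i:i + factor]) / factor
        for i in range(0, len(trimmed), factor)
    ]


def fit_to_context(series: Sequence[float], max_len: int = 16_000) -> List[float]:
    """Downsample by the smallest integer factor that fits within `max_len`."""
    if max_len <= 0:
        raise ValueError("max_len must be positive")
    if len(series) <= max_len:
        return list(series)
    factor = -(-len(series) // max_len)  # ceiling division
    return downsample_mean(series, factor)


# Example: 40,000 synthetic points need a factor of 3 to fit in 16,000.
long_series = [float(i) for i in range(40_000)]
recent_only = truncate_to_context(long_series)
aggregated = fit_to_context(long_series)
print(len(recent_only), len(aggregated))
```

Truncation preserves the original sampling frequency but discards older history, while aggregation keeps the full time span at a coarser resolution, which also changes the meaning of the forecast horizon.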

gentic.news Analysis

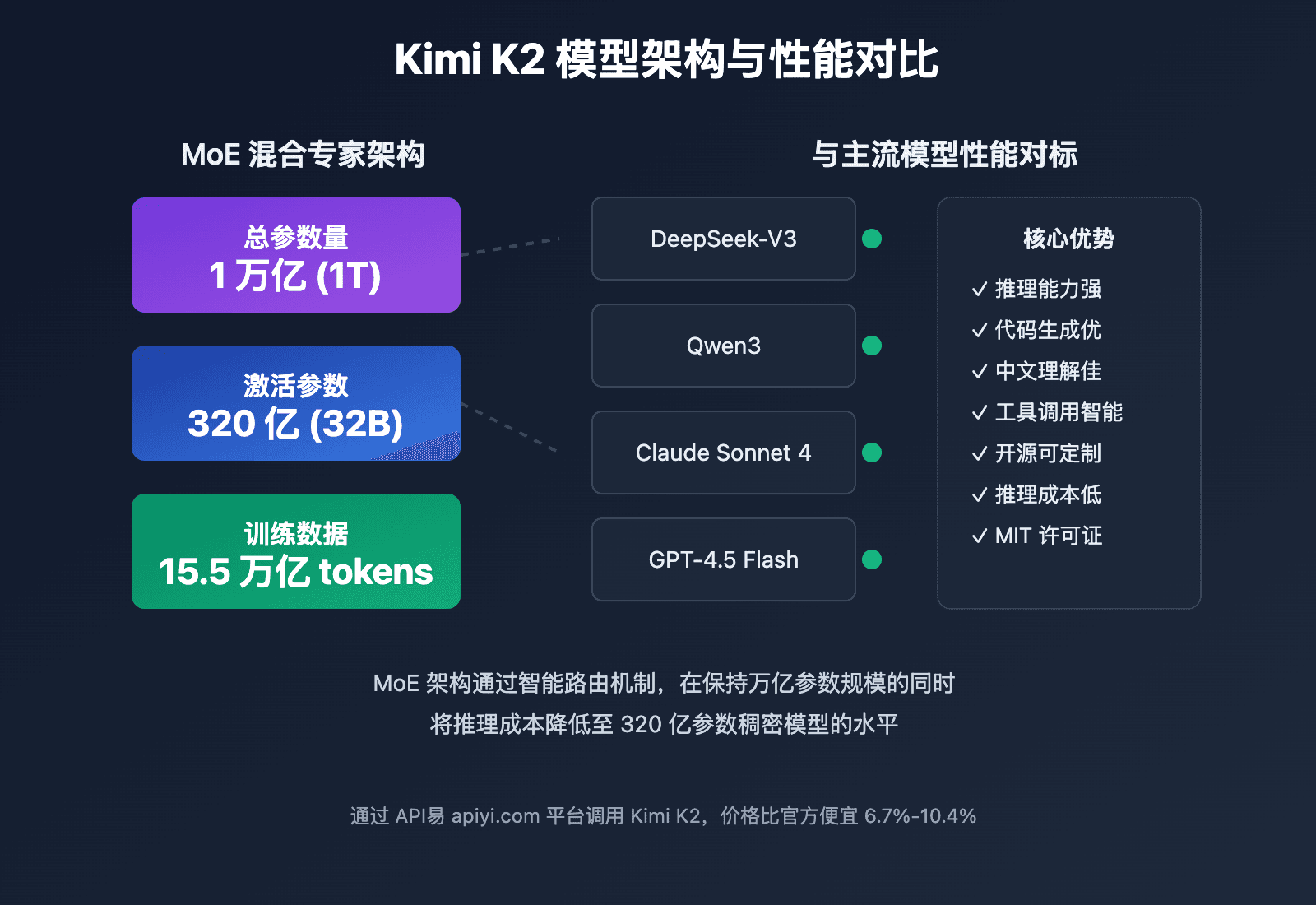

This release is a direct shot across the bow of the burgeoning MLOps-for-time-series startup ecosystem. Companies like Gretel.ai (synthetic data) and Nixtla (makers of StatsForecast) have built businesses around simplifying work with time series and other structured data. Google's move to offer a capable, zero-shot model for free commoditizes a significant portion of that custom pipeline work. It follows Google's broader pattern of using foundation models to vertically integrate AI capabilities into its cloud data products, as seen with Codey for BigQuery SQL generation and Duet AI across Workspace.

The strategy is clear: lower the barrier to advanced AI within BigQuery to increase platform lock-in. If an analyst can generate a reliable forecast with a SQL function call, the incentive to export data to a specialized service diminishes. This aligns with our previous coverage of Snowflake's acquisition of Myst AI in late 2024—a clear signal that cloud data platforms view native forecasting as a critical feature. The open-source release of TimesFM serves a dual purpose: it drives adoption and research while putting pressure on competitors who cannot match Google's scale in pretraining data aggregation.

For practitioners, the immediate takeaway is to experiment with TimesFM for prototyping and baseline generation. Its zero-shot nature makes it an excellent tool for rapid feasibility studies. However, for production systems with strict accuracy requirements, a head-to-head comparison with fine-tuned models remains essential. The true test will be its performance on the yet-to-be-published benchmarks.

Frequently Asked Questions

What is TimesFM?

TimesFM is a 200-million-parameter, decoder-only foundation model developed by Google for time series forecasting. It is pretrained on a large corpus of real-world sequential data and is designed to make zero-shot predictions, meaning it forecasts new data without any further training or fine-tuning.

How do I use Google TimesFM?

You can use TimesFM in two ways. First, it is available as an open-source model, which you can likely access via GitHub or a model repository to run independently. Second, it is integrated as a product feature within Google BigQuery, where it can presumably be invoked as a function on your data stored in the platform.

What is a zero-shot time series model?

A zero-shot time series model is one that has been pretrained on a broad dataset and can make forecasts on entirely new, unseen time series data without requiring any additional training or parameter updates. You provide the historical data, and it immediately returns a prediction based on patterns learned during its initial pretraining phase.

What is the context window for TimesFM?

TimesFM has a context window of 16,000 tokens. This means it can consider up to 16,000 data points (or aggregated tokens representing data points) from your historical sequence when generating a forecast. Input data longer than this window must be truncated or downsampled.