Google has publicly released TimesFM (Time Series Foundation Model), a model designed for zero-shot forecasting across diverse real-world datasets. Announced via a social media post from a Google Research account, the model is positioned as a drop-in solution that requires no further training on a user's specific data.

The core claim is that TimesFM, trained on a massive corpus of 100 billion real-world time points, can produce forecasts "out of the box" for applications ranging from traffic and weather to demand forecasting. The model is described as 100% open-source.

What Happened

Google Research has open-sourced TimesFM, a foundation model for time series data. The announcement highlights several key features:

- Zero Training Required: The model is presented as needing no dataset-specific training or fine-tuning. In principle, users can supply their time series data and receive predictions immediately.

- Massive Pretraining Corpus: The model was trained on 100 billion real-world time points, suggesting a broad and diverse dataset intended to capture universal temporal patterns.

- Broad Applicability: It is stated to work across domains including traffic, weather, and demand forecasting.

- Open-Source Release: The model, and presumably its associated code, is available under an open-source license.

Context & Technical Implications

Time series forecasting is a critical task in industries like finance, logistics, retail, and infrastructure management. Traditional approaches, including statistical models (e.g., ARIMA, Prophet) and machine learning models, typically require significant feature engineering and training on the target dataset. The promise of a foundation model is to bypass this need for per-dataset training, similar to how large language models (LLMs) can perform tasks without task-specific fine-tuning.

The claim of "zero training required" points to zero-shot inference: the model conditions only on the history supplied at prediction time, and none of its weights are updated. For this to work reliably, the pretraining corpus of 100B points must have covered a sufficiently wide distribution of time series types, frequencies (hourly, daily, etc.), and noise characteristics to generalize to unseen data.

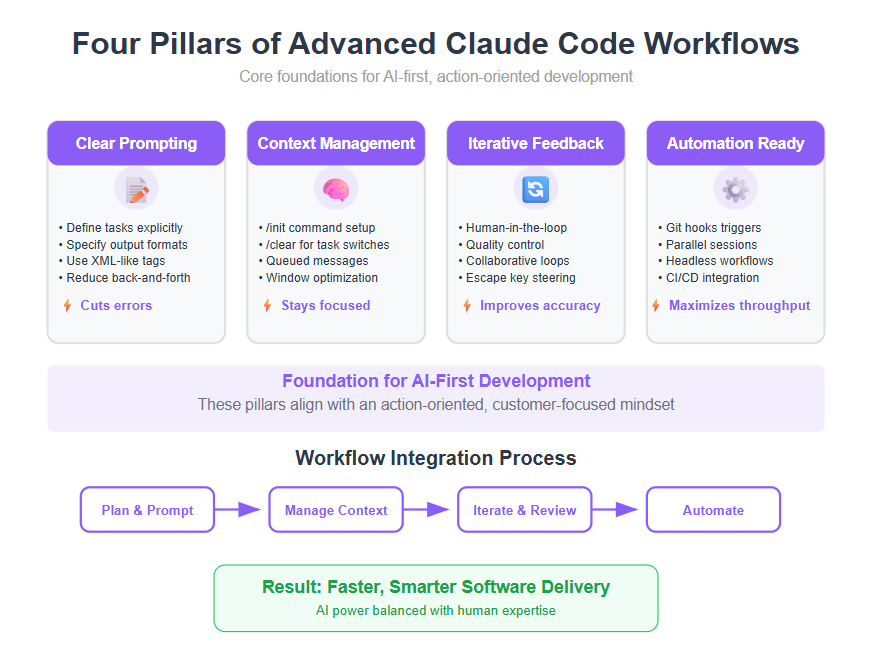

A key technical challenge for such a model is handling variable forecast horizons and context lengths. The announcement does not specify architectural details, but successful time series foundation models typically employ transformer or attention-based architectures adapted for sequential numerical data, possibly with patching or embedding strategies to handle long sequences.
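To make the patching idea concrete, here is a minimal sketch of how a variable-length history could be cut into fixed-length patches before being embedded. It is a generic illustration, not TimesFM's documented preprocessing: the function name `make_patches`, the left-padding choice, and the 0/1 mask are all assumptions made for this example.

```python
from typing import List, Tuple


def make_patches(
    series: List[float], patch_len: int, pad_value: float = 0.0
) -> Tuple[List[List[float]], List[List[int]]]:
    """Split a series into non-overlapping patches of length patch_len.

    The series is left-padded so that the most recent observations sit at
    the end of the last patch. The returned mask marks real values with 1
    and padded positions with 0. (Hypothetical helper for illustration.)
    """
    if patch_len <= 0:
        raise ValueError("patch_len must be positive")
    # Number of padding values needed to reach a multiple of patch_len.
    pad = (patch_len - len(series) % patch_len) % patch_len
    padded = [pad_value] * pad + list(series)
    mask = [0] * pad + [1] * len(series)
    patches = [padded[i:i + patch_len] for i in range(0, len(padded), patch_len)]
    masks = [mask[i:i + patch_len] for i in range(0, len(mask), patch_len)]
    return patches, masks


# Ten observations with a patch length of 4 become three patches,
# the first of which carries two padded positions.
series = [float(x) for x in range(1, 11)]
patches, masks = make_patches(series, patch_len=4)
```

Each patch would then be mapped to a single token embedding, so a sequence model attends over far fewer positions than there are raw time points. That is one common way to keep long contexts tractable.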

What's Missing & What to Look For

The social media announcement is a high-level summary. Practitioners will need to examine the open-source repository for critical details:

- Architecture: The specific model architecture (e.g., a decoder-only transformer or a T5-style encoder-decoder).

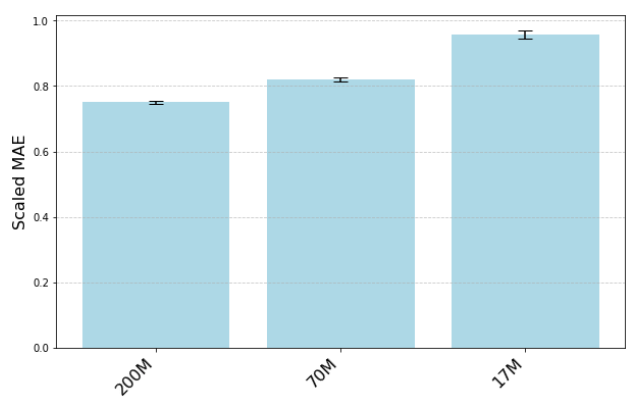

- Benchmarks: Quantitative performance on standard time series benchmarks (e.g., M4, M5, ETTh, Traffic) compared to established baselines and state-of-the-art models.

- Limitations: The effective context window length, supported data frequencies, and any constraints on input formatting.

- Inference Cost: The computational and memory requirements for running the model.
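When benchmark numbers do appear, they are often reported relative to a simple baseline. The sketch below shows one widely used scaled metric, MASE (mean absolute scaled error), computed against a seasonal-naive forecast. The toy data and the "candidate" forecast are invented for illustration and are not TimesFM outputs.

```python
from typing import List, Sequence


def seasonal_naive(history: Sequence[float], horizon: int, season: int) -> List[float]:
    """Forecast by repeating the last observed season across the horizon."""
    if len(history) < season:
        raise ValueError("history must contain at least one full season")
    last_season = history[-season:]
    return [last_season[i % season] for i in range(horizon)]


def mae(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def mase(
    history: Sequence[float],
    actual: Sequence[float],
    predicted: Sequence[float],
    season: int,
) -> float:
    """Forecast MAE divided by the in-sample MAE of the seasonal-naive method."""
    diffs = [abs(history[t] - history[t - season]) for t in range(season, len(history))]
    scale = sum(diffs) / len(diffs)
    return mae(actual, predicted) / scale


# Toy data: two seasons of history (season length 4) and one held-out season.
history = [10.0, 20.0, 30.0, 40.0, 12.0, 22.0, 32.0, 42.0]
actual = [14.0, 24.0, 34.0, 44.0]

baseline = seasonal_naive(history, horizon=4, season=4)
candidate = [14.0, 25.0, 33.0, 44.0]  # made-up forecast standing in for a model

baseline_mase = mase(history, actual, baseline, season=4)
candidate_mase = mase(history, actual, candidate, season=4)
```

A MASE below 1 means the forecast's error is smaller than the seasonal-naive method's in-sample error, which makes results comparable across series with different scales.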

If the model delivers on its promise, it could significantly lower the barrier to entry for high-quality forecasting, moving it from a specialized data science task to more of an API-like service. However, the real test will be its performance on niche or highly noisy datasets outside its pretraining distribution.

gentic.news Analysis

This move by Google follows a clear industry trend of applying the "foundation model" paradigm beyond language and images to structured, scientific, and temporal data. It represents a direct challenge to both academic time series research and commercial forecasting platforms. Historically, time series modeling has been fragmented, with models highly tailored to specific data characteristics. TimesFM attempts to unify this under a single, generalist model.

This release also aligns with Google's broader strategy of open-sourcing foundational AI research to establish standards and attract developer mindshare, a pattern seen with TensorFlow, BERT, and T5. By releasing TimesFM, Google is positioning itself at the center of the time series ecosystem. However, the success of this strategy depends entirely on the model's empirical performance. The time series community is notoriously benchmark-driven; TimesFM will need to post strong numbers on competitive leaderboards to be adopted over fine-tuned specialist models.

Furthermore, this development intersects with the growing enterprise demand for operational forecasting in supply chain, energy, and retail. If TimesFM works as advertised, it could disrupt a segment currently served by specialized SaaS platforms and consulting services. The key question for practitioners is whether the convenience of zero-shot inference outweighs any performance drop, measured on a critical business metric, relative to a model fine-tuned on their own data.

Frequently Asked Questions

What is TimesFM?

TimesFM is an open-source foundation model for time series forecasting developed by Google Research. It is a single model, trained on 100 billion real-world time points, that is designed to make predictions on new time series data without any additional training or fine-tuning on that specific dataset.

How do I use Google's TimesFM model?

Based on the announcement, you should be able to use TimesFM by providing your time series data to the model, which then generates forecasts. The exact method (likely a Python API or a command-line tool) should be documented in the open-source repository. The process is touted as simply "drop in your data and get predictions instantly."

What are the limitations of zero-shot time series forecasting?

Zero-shot forecasting models like TimesFM may struggle with data that is highly anomalous, follows patterns not well-represented in the 100B-point training corpus, or has very long-range dependencies that exceed the model's context window. Their performance must be validated on a case-by-case basis against traditional methods, especially for business-critical forecasts where accuracy margins are slim.
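One way to run that case-by-case validation is a rolling-origin backtest, sketched below. The `Forecaster` callable type, the `max_context` truncation parameter, and both baseline functions are assumptions made for this example; a pretrained model would be wrapped in a function with the same signature, whose exact form depends on the repository's actual API.

```python
from typing import Callable, List, Optional, Sequence

# Hypothetical interface: takes a context window and a horizon, returns forecasts.
Forecaster = Callable[[Sequence[float], int], List[float]]


def last_value_forecast(context: Sequence[float], horizon: int) -> List[float]:
    """Naive baseline: repeat the most recent observation."""
    return [context[-1]] * horizon


def drift_forecast(context: Sequence[float], horizon: int) -> List[float]:
    """Baseline that extrapolates the average slope of the context."""
    slope = (context[-1] - context[0]) / (len(context) - 1)
    return [context[-1] + slope * (h + 1) for h in range(horizon)]


def rolling_backtest(
    series: Sequence[float],
    forecaster: Forecaster,
    horizon: int,
    min_context: int,
    max_context: Optional[int] = None,
) -> float:
    """Average MAE over successive forecast origins.

    max_context truncates the history to mimic a model's limited context window.
    """
    errors = []
    for origin in range(min_context, len(series) - horizon + 1, horizon):
        context = series[:origin]
        if max_context is not None:
            context = context[-max_context:]
        actual = series[origin:origin + horizon]
        predicted = forecaster(context, horizon)
        errors.append(sum(abs(a - p) for a, p in zip(actual, predicted)) / horizon)
    if not errors:
        raise ValueError("series is too short for the requested backtest")
    return sum(errors) / len(errors)


# Toy linear series: the drift baseline should be exact, the last-value one should lag.
series = [float(x) for x in range(1, 13)]
naive_mae = rolling_backtest(series, last_value_forecast, horizon=2, min_context=4)
drift_mae = rolling_backtest(series, drift_forecast, horizon=2, min_context=4, max_context=2)
```

Running the same harness over a zero-shot model and over a fitted baseline gives a like-for-like error comparison on your own data before committing to either.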

How does TimesFM compare to other time series models like ARIMA or Prophet?

Traditional models like ARIMA or Facebook's Prophet are statistical methods that are fitted directly to your data. TimesFM, in contrast, is a large pretrained neural network that applies patterns learned from a vast corpus to your data without fitting any parameters to it. This makes TimesFM potentially much faster to deploy but raises questions about its ultimate accuracy and adaptability compared to a model trained exclusively on your specific dataset's history.