Nvidia's $20 billion Groq acquihire in December 2025 signaled that inference workloads are reshaping the AI chip market. For startups vying for a slice of Nvidia's pie, it's now or never.

Key facts

- Nvidia acquired Groq for $20 billion in December 2025.

- Lumai targets 1 exaOPS in a 10kW power budget by 2029.

- AWS uses Trainium for prefill, Cerebras for decode.

- Intel announced a reference design that pairs GPUs for prefill with SambaNova's RDUs for decode.

- Lumai runs Llama 3.1 8B and 70B models today.

AI adoption is reaching an inflection point as the focus shifts from training new models to serving them. Compared with training, inference is a much more diverse workload, which gives chip startups an opportunity to carve out a niche. Large-batch inference requires a different mix of compute, memory, and bandwidth than an AI assistant or coding agent. [According to The Register]

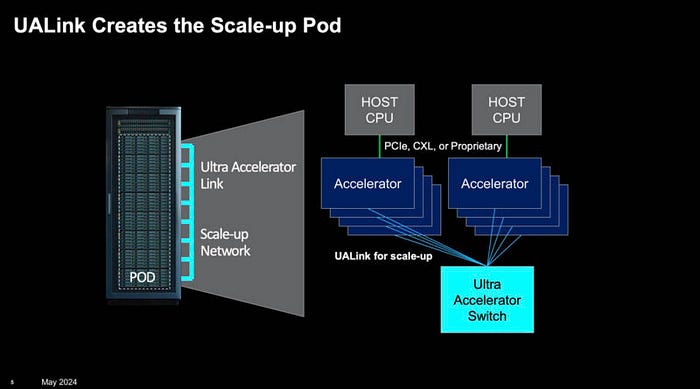

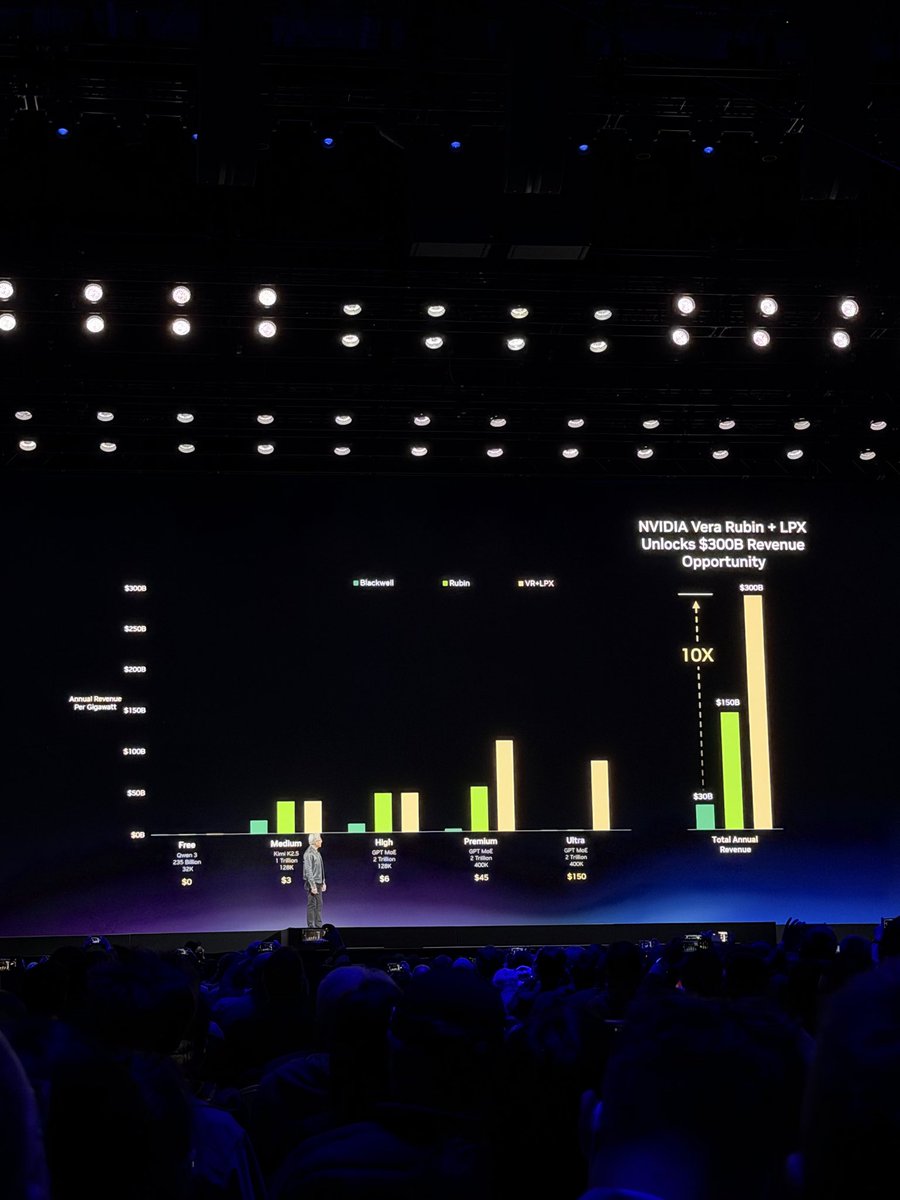

Because of this, inference has become increasingly heterogeneous, with some stages better suited to GPUs and others to more specialized hardware. Nvidia's $20 billion acquihire of Groq in December 2025 is a prime example. Groq's SRAM-heavy chip architecture could churn out tokens faster than any GPU, but limited compute capacity and aging chip technology meant it couldn't scale efficiently. Nvidia sidestepped this by moving compute-heavy prefill to GPUs while keeping bandwidth-constrained decode on Groq's LPUs. [Per the source]
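The split between compute-heavy prefill and bandwidth-constrained decode can be illustrated with a simplified roofline-style estimate. The sketch below is not drawn from the source: the `Accelerator` class, the peak throughput and bandwidth figures, and the rule of thumb of 2 FLOPs per parameter per token with weights read once per pass are all assumptions chosen for illustration, and it ignores KV-cache traffic and batching across requests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    """Hypothetical chip; the numbers used below are illustrative, not vendor specs."""
    peak_flops: float      # peak compute, FLOP/s
    mem_bandwidth: float   # memory bandwidth, bytes/s

    @property
    def balance(self) -> float:
        # FLOPs the chip can execute per byte it can fetch from memory.
        return self.peak_flops / self.mem_bandwidth


def arithmetic_intensity(tokens_per_pass: int, bytes_per_param: float = 2.0) -> float:
    # Assumption: ~2 FLOPs per parameter per token, weights read once per pass.
    return 2.0 * tokens_per_pass / bytes_per_param


def bottleneck(chip: Accelerator, tokens_per_pass: int) -> str:
    # A pass is compute-bound when its intensity meets or exceeds the chip's balance.
    if arithmetic_intensity(tokens_per_pass) >= chip.balance:
        return "compute"
    return "bandwidth"


# Assumed figures: 1 PFLOP/s of compute and 4 TB/s of memory bandwidth.
chip = Accelerator(peak_flops=1.0e15, mem_bandwidth=4.0e12)

print(bottleneck(chip, tokens_per_pass=2048))  # prefill over a 2,048-token prompt: compute
print(bottleneck(chip, tokens_per_pass=1))     # decode, one token per pass: bandwidth
```

Under these assumptions, prefill reuses each weight across thousands of prompt tokens and saturates the compute units, while decode reads every weight to produce a single token and waits on memory. That asymmetry is the reason for routing the two phases to different hardware.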

Disaggregated compute becomes the norm

This combination isn't unique to Nvidia. AWS announced a disaggregated compute platform using its Trainium accelerators for prefill and Cerebras Systems' wafer-scale accelerators for decode. Intel also announced a reference design using GPUs for prefill and SambaNova's new RDUs for decode. So far, most chip startups' wins have been on the decode side, where SRAM's speed advantage shines. [The Register reports]

Optical inference enters the fray

This week, UK-based startup Lumai detailed its optical inference accelerator, which uses light instead of electrons to perform matrix multiplication at a fraction of the power of digital architectures. Lumai expects its next-gen Iris Tetra systems to achieve an exaOPS of AI performance in a 10kW power budget by 2029. Initially, the chip is positioned as a standalone alternative to GPUs for compute-bound inference workloads like batch processing. Longer-term, the company plans to use its optical accelerators as prefill processors. The architecture currently runs multi-billion-parameter models such as Llama 3.1 8B and 70B. [According to the source]
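For scale, the stated target works out to about 100 TOPS per watt at the system level. The short calculation below only divides the two figures quoted above. The helper name is hypothetical, and the source does not specify the numeric precision behind "OPS", so the result should not be compared directly with GPU figures quoted at a particular precision.

```python
# Figures quoted in the article: 1 exaOPS in a 10 kW power budget.
TARGET_OPS_PER_SECOND = 1e18   # 1 exaOPS
POWER_BUDGET_WATTS = 10e3      # 10 kW


def tera_ops_per_watt(ops_per_second: float, watts: float) -> float:
    # Convert raw operations per second per watt into TOPS/W.
    return ops_per_second / watts / 1e12


print(tera_ops_per_watt(TARGET_OPS_PER_SECOND, POWER_BUDGET_WATTS))  # 100.0
```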

Key takeaways

- The shift in focus from training models to serving them creates opportunities for AI chip startups.

- Nvidia's $20B Groq acquihire validates disaggregated compute strategies.

What to watch

Watch for Nvidia's next-generation Rubin architecture and whether it integrates disaggregated inference natively, potentially closing the window for startups. Also track Lumai's Iris Tetra tape-out timeline and customer adoption in 2027.