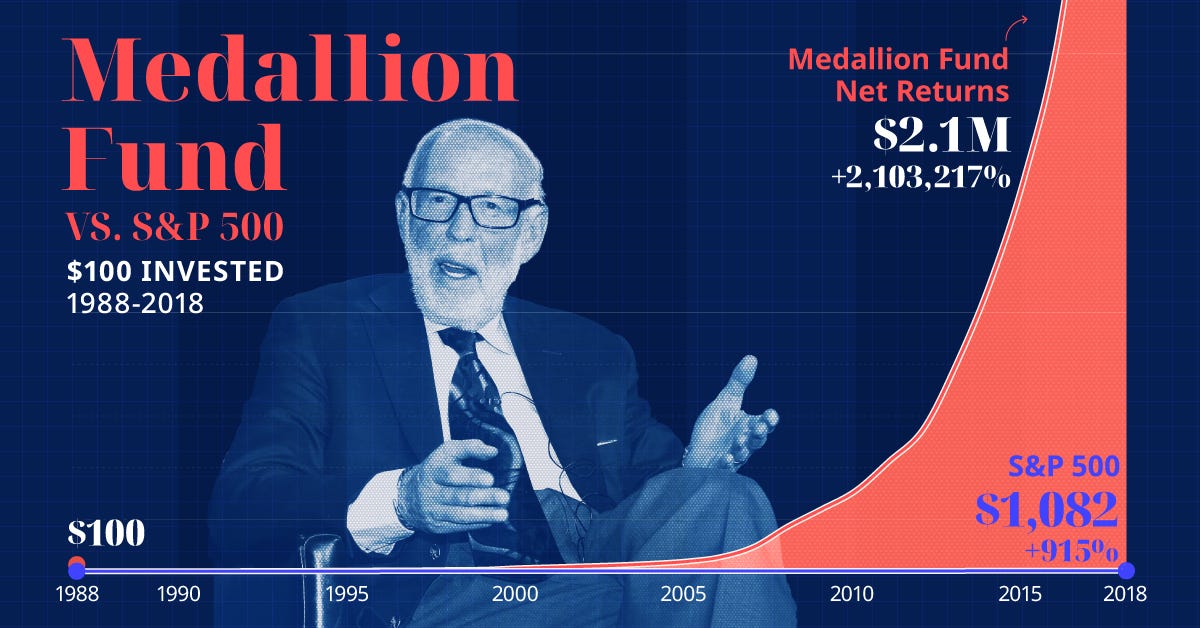

A prompt engineer, Navtoor, has published a framework claiming to distill the core quantitative methodology of Jim Simons' Renaissance Technologies, specifically its famed Medallion Fund, into 12 prompts for large language models. The fund, launched in 1988, is legendary in finance for its performance, reportedly averaging 66% annual returns before fees over three decades, a track record widely regarded as surpassing those of Warren Buffett, George Soros, and other major investors.

Simons, a mathematician and former NSA codebreaker, founded Renaissance on a principle radically different from traditional Wall Street: hiring scientists—mathematicians, physicists, and cryptographers—instead of financiers. The firm's success was built on discovering subtle, non-intuitive statistical patterns in market data using complex mathematical models, a field known as quantitative or "quant" trading. The specific algorithms and signals used by Medallion have remained one of the most closely guarded secrets in finance.

Key Takeaways

- A prompt engineer claims to have translated the legendary, math-driven investment strategy of Jim Simons' Medallion Fund into a set of 12 AI prompts.

- The framework attempts to codify the philosophy behind a historically opaque, 30-year algorithmic trading operation into a reproducible set of instructions for large language models.

What's in the Prompts?

The published prompt set is an attempt to reverse-engineer and encode the philosophical and methodological pillars of Simons' approach into instructions an LLM can follow. While the prompts themselves are not detailed in the source tweet, the implication is that they guide the AI to think like a Renaissance quant. This would involve tasks such as:

- Identifying non-obvious, statistical patterns in time-series data instead of relying on traditional financial news or fundamental analysis.

- Prioritizing mathematical rigor and backtesting over narrative or intuitive market calls.

- Embracing a scientific, hypothesis-driven approach to market prediction, where models are constantly tested and refined.

- Ignoring conventional Wall Street wisdom and sentiment, a reflection of Simons' policy of not hiring from traditional finance.

In essence, the prompts aim to shift an LLM's "reasoning" from a qualitative, narrative-based analysis of markets to a quantitative, signal-detection mode.
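To make the hypothesis-driven idea concrete, here is a minimal Python sketch of one such statistical check. It is not taken from the prompt set, whose contents the source does not detail. It asks whether a return series shows lag-1 autocorrelation beyond what randomly shuffled data would produce. The function names, the synthetic AR(1) series, and the 0.3 coefficient are all illustrative assumptions.

```python
import random


def lag1_autocorr(xs):
    """Sample lag-1 autocorrelation of a sequence of numbers."""
    m = sum(xs) / len(xs)
    num = sum((a - m) * (b - m) for a, b in zip(xs, xs[1:]))
    den = sum((x - m) ** 2 for x in xs)
    return num / den if den else 0.0


def permutation_p_value(xs, n_perm=1000, seed=0):
    """Two-sided permutation test.

    Null hypothesis: the ordering of the series carries no information.
    Returns the share of shuffles whose |autocorrelation| is at least
    as large as the observed one (with the usual +1 correction).
    """
    rng = random.Random(seed)
    observed = abs(lag1_autocorr(xs))
    shuffled = list(xs)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if abs(lag1_autocorr(shuffled)) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)


# Synthetic data only (assumption): pure noise vs. a series with
# deliberately injected persistence, r_t = 0.3 * r_{t-1} + e_t.
rng = random.Random(42)
noise = [rng.gauss(0, 1) for _ in range(500)]
ar = [noise[0]]
for e in noise[1:]:
    ar.append(0.3 * ar[-1] + e)

p_noise = permutation_p_value(noise)
p_ar = permutation_p_value(ar)
print(f"white noise p-value: {p_noise:.3f}")
print(f"AR(1) series p-value: {p_ar:.3f}")
```

A small p-value here only says the pattern is unlikely under shuffling. It says nothing about whether the pattern survives transaction costs or persists out of sample, which is where the backtesting emphasis comes in.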

The Technical Translation Challenge

The core challenge this project tackles is translating a proprietary, mathematically intensive trading system (likely involving custom statistical models, high-frequency data processing, and advanced signal processing) into the natural language processing domain of LLMs. Traditional quant models are built on precise equations and probability theory; LLMs operate on token prediction and semantic understanding.

The prompts likely act as a meta-framework, instructing the AI to, for example, frame financial data analysis as a code-breaking problem (nodding to Simons' NSA background) or to generate and evaluate trading hypotheses as if they were scientific experiments. The goal isn't to replicate the Medallion Fund's exact signals—an impossible task without its data and models—but to emulate its foundational process.

What This Means in Practice

For an AI practitioner, this represents an experiment in methodology transfer. It's an attempt to use prompt engineering to impose a specific, highly successful analytical discipline onto a general-purpose reasoning engine. Success would be measured not by achieving 66% returns, but by whether the prompted LLM generates analysis that is measurably different from, and more statistically grounded than, its default output.

Key Limitations:

- The Black Box Remains: The true "secret sauce" of Medallion was its specific alpha signals, data sources, and execution algorithms. Prompts cannot conjure this proprietary knowledge.

- Data is Key: Renaissance's edge came from analyzing unique and expansive datasets. An LLM working on publicly available data is operating with a fundamentally different, and likely inferior, information set.

- Backtesting vs. Live Trading: The prompts may guide analysis, but they do not constitute a full, executable trading system with risk management and execution logic.

gentic.news Analysis

This development sits at a fascinating intersection of three major trends we track: the democratization of advanced financial strategies, the encapsulation of expert knowledge into LLM prompts, and the ongoing fusion of mathematical rigor with generative AI.

First, it continues the trend of deconstructing elite, institutional knowledge for broader consumption, similar to how open-source libraries have democratized machine learning techniques once confined to tech giants. The attempt to prompt-engineer Simons' philosophy is a direct parallel to efforts to make AI reason like an expert physicist or biologist through careful instruction.

Second, it highlights a significant shift in quant finance itself. The original Renaissance era was defined by custom C++ and Fortran code, statistical libraries, and in-house data pipelines. The new frontier, as seen here, involves abstracting those principles into a layer that can guide foundation models. This aligns with our previous coverage on firms like Aidyia or Qraft Technologies, which are exploring how LLMs can generate, explain, or enhance quantitative trading factors, moving beyond pure numerical analysis to incorporate semantic understanding of market events.

However, a critical caveat is necessary. Renaissance's success was built on discovering signals that were not just complex, but non-intuitive and often fleeting. There's a fundamental tension between encoding a methodology into a publicly shared prompt and maintaining an edge. If the prompt set successfully captures a viable analytical approach, its widespread use could theoretically arbitrage away the very inefficiencies it seeks to exploit. The real value for a practitioner may not be in using these exact prompts, but in studying them as a blueprint for how to impose rigorous, scientific, and anti-narrative discipline onto an LLM's financial reasoning—a valuable skill as AI agents move deeper into algorithmic trading and portfolio analysis.

Frequently Asked Questions

Can I use these prompts to get rich like Jim Simons?

Almost certainly not. The prompts are a framework for an analytical philosophy, not a plug-and-play trading system that replicates Medallion's 66% returns. That performance was the result of unique talent, proprietary data, advanced infrastructure, and specific mathematical models developed over decades. The prompts might improve how an LLM analyzes financial data, but they do not contain the secret alpha signals themselves.

How would I actually use these AI prompts for trading?

You would integrate them into a larger pipeline. For example, you could use a prompted LLM to analyze market data summaries, generate and rank quantitative hypotheses for backtesting, or write code for statistical analysis. The LLM becomes a reasoning engine guided by the Medallion methodology, but its outputs would need to be rigorously validated, backtested, and integrated into a full trading system with risk controls—a complex engineering task far beyond just running a prompt.
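As a rough illustration of that pipeline shape, the Python sketch below stubs out the LLM step and shows the validation steps around it. Everything here is an assumption made for illustration: `propose_hypotheses` is a hypothetical stand-in that returns hard-coded candidates rather than calling any model, the return series is synthetic, and the per-period Sharpe ratio is just one possible ranking metric. It is not the published prompt set and not a trading system.

```python
import math
import random
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple


@dataclass
class Hypothesis:
    name: str
    # Maps the returns seen so far to a position: -1 (short), 0, or +1 (long).
    signal: Callable[[Sequence[float]], int]


def propose_hypotheses() -> List[Hypothesis]:
    """Hypothetical stand-in for the prompted LLM: hard-coded candidates."""
    def sign(x: float) -> int:
        return (x > 0) - (x < 0)
    return [
        Hypothesis("momentum_1", lambda h: sign(h[-1])),
        Hypothesis("reversal_1", lambda h: -sign(h[-1])),
        Hypothesis("always_long", lambda h: 1),
    ]


def backtest(h: Hypothesis, returns: Sequence[float], start: int, end: int) -> List[float]:
    """Strategy returns over [start, end); the position at t sees only returns[:t]."""
    return [h.signal(returns[:t]) * returns[t] for t in range(max(start, 1), end)]


def sharpe(rs: Sequence[float]) -> float:
    """Per-period Sharpe ratio (mean / sample std), not annualized."""
    if len(rs) < 2:
        return 0.0
    m = sum(rs) / len(rs)
    var = sum((r - m) ** 2 for r in rs) / (len(rs) - 1)
    return m / math.sqrt(var) if var > 0 else 0.0


def rank(hyps: List[Hypothesis], returns: Sequence[float], split: int) -> List[Tuple[str, float, float]]:
    """Rank by in-sample Sharpe; keep out-of-sample Sharpe as a sanity check."""
    rows = []
    for h in hyps:
        in_sample = sharpe(backtest(h, returns, 1, split))
        out_sample = sharpe(backtest(h, returns, split, len(returns)))
        rows.append((h.name, in_sample, out_sample))
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Synthetic returns with mild persistence (assumption, not market data).
rng = random.Random(7)
returns = [rng.gauss(0, 0.01)]
for _ in range(999):
    returns.append(0.3 * returns[-1] + rng.gauss(0, 0.01))

ranking = rank(propose_hypotheses(), returns, split=500)
for name, in_sample, out_sample in ranking:
    print(f"{name:12s} in-sample={in_sample:+.3f} out-of-sample={out_sample:+.3f}")
```

Ranking on the first half and checking the second is a deliberate choice: a hypothesis that only looks good in sample is a common failure mode. The sketch ignores transaction costs, position sizing, and execution, which are the risk controls a real system would still need.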

Is this related to AI-powered quantitative hedge funds today?

Yes, thematically. Modern quant funds are increasingly incorporating machine learning and AI. However, they typically rely on techniques like reinforcement learning, gradient boosting, and neural networks applied to structured numerical data. This prompt-based approach is different; it attempts to use a large language model's reasoning and code-generation capabilities within a quant framework. It's a more nascent, exploratory approach compared to the established use of non-LLM AI in high finance.

Did Jim Simons' fund use AI?

Not in the modern sense of deep learning or LLMs. Medallion's track record was largely established before the current AI revolution. Its "AI" was the collective intelligence of its PhD scientists building statistical models. However, its core premise of exploiting mathematical patterns too subtle for human intuition is the philosophical precursor to today's AI-driven quant finance. This prompt set is an attempt to bridge that old-school mathematical philosophy with new-school generative AI tools.