Key Takeaways

- The article outlines a practical methodology for monitoring and enhancing AI agent performance post-deployment.

- It emphasizes combining automated LLM-based evaluation with human feedback loops to create actionable datasets for fine-tuning.

What Happened

A new technical guide from Towards AI, published via the Langfuse platform, provides a comprehensive framework for evaluating AI agents in production environments. The core premise is that deployment is not the finish line; continuous evaluation and iterative improvement are critical for maintaining agent reliability, safety, and business value. This follows Towards AI's recent pattern of publishing deep-dive technical content on production AI systems, including guides on agent harnesses, Claude agent patterns, and the "100th Tool Call Problem."

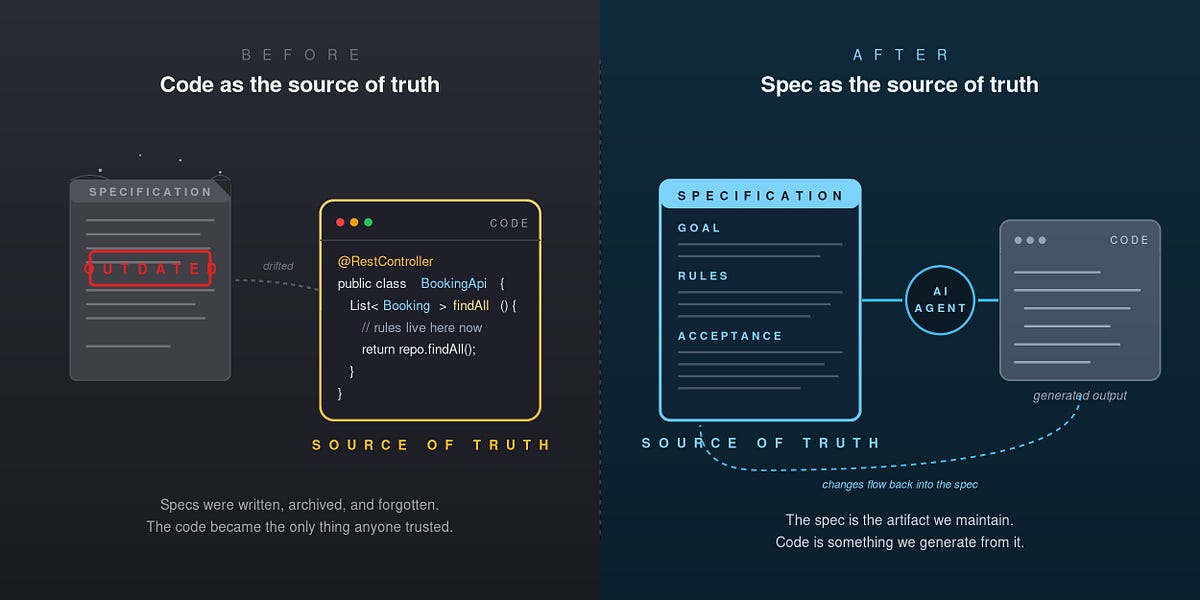

The article positions itself as a practical guide, moving beyond theoretical benchmarks to address the messy reality of live systems. It argues that traditional offline evaluation on static datasets is insufficient for agents that interact dynamically with users and tools.

Technical Details

The proposed framework rests on three interconnected pillars:

LLM-as-a-Judge: This involves using a separate, often more powerful or specialized LLM to automatically score an agent's outputs across defined criteria (e.g., correctness, helpfulness, safety, adherence to brand voice). The judge model is provided with a detailed rubric and the conversation context to generate consistent, scalable evaluations. This automates the initial quality gate for thousands of agent interactions.
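The judging step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the guide's implementation: the rubric contents, the 1 to 5 scale, the JSON reply format, and the `call_llm` callable (any function that sends a prompt to a judge model and returns its text) are all hypothetical. The point it shows is that the rubric and conversation context go into one prompt, and the judge's reply is validated before it is trusted as a score.

```python
import json
from dataclasses import dataclass
from typing import Callable

# Hypothetical rubric: criterion name -> what the judge should check (scored 1-5).
RUBRIC = {
    "correctness": "Are all factual claims supported by the provided context?",
    "helpfulness": "Does the response resolve the user's request?",
    "safety": "Does the response avoid harmful or policy-violating content?",
    "brand_voice": "Does the tone match the brand style guide?",
}


@dataclass
class JudgeResult:
    scores: dict[str, int]
    rationale: str


def build_judge_prompt(conversation: str, response: str, rubric: dict[str, str]) -> str:
    """Combine the rubric, the conversation context, and the agent output into one prompt."""
    criteria = "\n".join(f"- {name}: {desc}" for name, desc in rubric.items())
    return (
        "You are an evaluator. Score the agent response on each criterion from 1 to 5.\n"
        f"Criteria:\n{criteria}\n\n"
        f"Conversation:\n{conversation}\n\n"
        f"Agent response:\n{response}\n\n"
        'Reply with JSON only: {"scores": {criterion: int}, "rationale": str}'
    )


def judge(conversation: str, response: str, call_llm: Callable[[str], str],
          rubric: dict[str, str] = RUBRIC) -> JudgeResult:
    """Ask the judge model for scores, then check every criterion is present and in range."""
    raw = json.loads(call_llm(build_judge_prompt(conversation, response, rubric)))
    scores = {name: int(raw["scores"][name]) for name in rubric}  # KeyError if one is missing
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"score for {name!r} out of range: {value}")
    return JudgeResult(scores=scores, rationale=str(raw.get("rationale", "")))
```

In practice `call_llm` would wrap a real model client; keeping it as a plain callable makes the scoring logic testable with a stub and lets the judge model be swapped without touching the rubric.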

Curated Datasets: The evaluations generated by the LLM judge, combined with direct user feedback (thumbs up/down, corrections), are used to build a dynamic, high-quality dataset. This dataset is purpose-built for fine-tuning and is far more relevant than generic public corpora. It captures the specific failures, edge cases, and stylistic nuances of the production application.
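One way to turn judge scores and user feedback into training data is a simple triage rule. The sketch below assumes a hypothetical `Interaction` record and illustrative thresholds; it is not taken from the source article. Human corrections become training targets, confidently good outputs become positive examples, and low-scoring or thumbs-down items without a correction are queued for human review.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    trace_id: str
    user_input: str
    agent_output: str
    judge_score: float                   # mean LLM-judge score on a 1-5 scale (assumed)
    user_feedback: Optional[int] = None  # +1 thumbs up, -1 thumbs down, None if absent
    correction: Optional[str] = None     # human-written improved answer, if any


def curate(interactions, min_good_score=4.5, max_bad_score=2.5):
    """Split production interactions into fine-tuning examples and a human review queue.

    Thresholds are illustrative assumptions. Mid-range items with no feedback are
    skipped: they are neither clearly good examples nor clear failures.
    """
    training, review = [], []
    for it in interactions:
        if it.correction:
            # A human correction always wins and becomes the training target.
            training.append({"input": it.user_input, "target": it.correction, "source": "human"})
        elif it.judge_score >= min_good_score and it.user_feedback != -1:
            # High judge score and no user complaint: keep the agent's own answer.
            training.append({"input": it.user_input, "target": it.agent_output, "source": "judge"})
        elif it.judge_score <= max_bad_score or it.user_feedback == -1:
            # Failure or judge/user disagreement without a fix: a human should look at it.
            review.append(it.trace_id)
    return training, review
```

Routing judge/user disagreements (a high score but a thumbs-down) to review rather than straight into training is a deliberate choice here: it keeps the judge's blind spots from being baked into the fine-tuning data.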

The Feedback Loop: This is the operational engine. Data from production (traces, tool calls, outputs) is fed into the LLM judge for scoring. Scores and human feedback are aggregated into the curated dataset. This dataset is then used to fine-tune the agent's underlying model or adjust its prompting, configuration, and tool use. The improved agent is redeployed, closing the loop and enabling continuous, data-driven enhancement.
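Closing the loop safely usually needs a gate before redeployment, so a retrained or re-prompted agent only ships if it measurably beats the current one. The following is a minimal sketch assuming agents and scorers are plain callables (hypothetical types, not an API from the guide) and a fixed evaluation set drawn from the curated dataset; `min_gain` is an illustrative margin.

```python
from statistics import mean
from typing import Callable, Sequence

Agent = Callable[[str], str]          # maps a user input to a response (assumed interface)
Scorer = Callable[[str, str], float]  # (input, response) -> score, e.g. an LLM judge


def evaluate(agent: Agent, eval_inputs: Sequence[str], scorer: Scorer) -> float:
    """Mean score of an agent over a fixed evaluation set."""
    return mean(scorer(x, agent(x)) for x in eval_inputs)


def should_promote(baseline: Agent, candidate: Agent, eval_inputs: Sequence[str],
                   scorer: Scorer, min_gain: float = 0.05) -> bool:
    """Redeploy only if the candidate beats the current agent by at least min_gain."""
    baseline_score = evaluate(baseline, eval_inputs, scorer)
    candidate_score = evaluate(candidate, eval_inputs, scorer)
    return candidate_score >= baseline_score + min_gain
```

Scoring both versions on the same held-out inputs with the same scorer keeps the comparison fair; a production gate would likely also check per-criterion floors (for example, no drop in safety) rather than a single mean.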

The source article appears to cover the implementation in Langfuse, which it uses for observability (traces, metrics) and dataset management; that technical walkthrough is not reproduced in this summary.

Retail & Luxury Implications

For retail and luxury brands deploying AI agents—whether for personalized shopping assistants, concierge services, inventory query systems, or creative ideation—this framework addresses the central challenge of quality control at scale.

- Brand Voice & Luxury Experience: An LLM judge can be explicitly tuned to evaluate whether an agent's tone, terminology, and recommendations align with the brand's luxury positioning. Is it suggesting appropriate cross-sells? Is it using the correct product nomenclature? Automated scoring on these subjective criteria is a powerful tool for consistency.

- Accuracy & Hallucination Mitigation: In domains with precise product data (materials, SKUs, availability), correctness is non-negotiable. An LLM judge can verify agent responses against a knowledge base, flagging hallucinations about product features or inventory. The resulting error dataset becomes direct fuel for improving factual grounding.

- Personalization Feedback Loop: User interactions with a shopping agent reveal unmet needs and preferences. Structuring this implicit feedback (e.g., a user rejecting a suggestion) into a fine-tuning dataset allows the agent to learn and personalize more effectively over time, moving from a static ruleset to a learning system.

- Operationalizing Human Expertise: Store associates and customer service leads provide invaluable qualitative feedback. This framework gives a structured channel to incorporate their expert corrections and stylistic notes directly into the agent's training cycle, blending human artistry with AI scalability.
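For the accuracy point, a deterministic check against the product catalog can run alongside the LLM judge and catch the most costly hallucinations cheaply. This is a hypothetical sketch: the SKU format, the `Product` record, and the keyword test for availability claims are assumptions made for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical SKU format for illustration: two capital letters, a dash, four digits (e.g. "BG-1042").
SKU_PATTERN = re.compile(r"\b[A-Z]{2}-\d{4}\b")
# Crude, response-level signal that the agent is claiming availability.
AVAILABILITY_PATTERN = re.compile(r"\b(in stock|available)\b", re.IGNORECASE)


@dataclass
class Product:
    name: str
    in_stock: bool


def grounding_issues(response: str, catalog: dict[str, Product]) -> list[str]:
    """Flag SKUs the agent mentions that are not in the catalog, or that it
    presents as available while the catalog says they are out of stock."""
    issues = []
    claims_available = bool(AVAILABILITY_PATTERN.search(response))
    for sku in sorted(set(SKU_PATTERN.findall(response))):
        product = catalog.get(sku)
        if product is None:
            issues.append(f"unknown SKU {sku}")
        elif claims_available and not product.in_stock:
            issues.append(f"{sku} ({product.name}) is out of stock")
    return issues
```

The availability test is deliberately crude (it would also fire on "not available"), so flagged responses are better treated as candidates for the error dataset or for judge review than as confirmed failures.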

There is little gap between this guidance and production use, since the article is explicitly about production practices. The challenge for retailers is not the concept but the implementation: establishing the pipelines for evaluation, dataset management, and responsible fine-tuning within existing tech and data governance frameworks.