A new research paper proposes improving large language model (LLM) reasoning by decomposing complex tasks across multiple specialized agents that collaborate through a shared workspace. The work, highlighted by AI researcher Rohan Paul (@rohanpaul_ai), targets a known weakness of single LLM agents: they struggle with long-horizon planning, tool use, and consistency across multi-step reasoning.

What the Paper Proposes

The central hypothesis is that a single LLM agent, acting as a monolithic "generalist," is suboptimal for tasks requiring sequential planning, external tool invocation, and state tracking. Instead, the researchers propose a framework where a complex task is broken down and assigned to a team of LLM-based agents. Each agent has a defined role or specialization (e.g., a planner, a tool executor, a verifier). Critically, these agents do not operate in isolation. They coordinate by reading from and writing to a shared workspace—a structured memory or state representation that contains the task context, intermediate results, execution history, and current goals.

This architecture is designed to mimic a software engineering team or a structured problem-solving process, where different experts handle different aspects of a project while referring to a common document or whiteboard. The shared workspace prevents agents from working at cross-purposes and allows subsequent agents to build directly on the outputs of their predecessors.
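To make the "common whiteboard" idea concrete, here is a minimal sketch of what such a workspace could look like as a data structure. The class name `Workspace`, its field names, and the `read`/`write` methods are all assumptions chosen for illustration; the source does not describe the paper's actual schema.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Workspace:
    """Hypothetical shared state that every agent reads from and writes to."""
    task_context: str
    current_goals: list[str] = field(default_factory=list)
    intermediate_results: dict[str, Any] = field(default_factory=dict)
    # Execution history: one (agent, key) pair per write, in order.
    history: list[tuple[str, str]] = field(default_factory=list)

    def write(self, agent: str, key: str, value: Any) -> None:
        # Store the result and record who wrote it, so later agents can trace it.
        self.intermediate_results[key] = value
        self.history.append((agent, key))

    def read(self, key: str, default: Any = None) -> Any:
        # Return a stored result, or the default if nothing was written yet.
        return self.intermediate_results.get(key, default)


ws = Workspace(task_context="Summarize Q3 sales", current_goals=["draft a plan"])
ws.write("planner", "plan", ["load data", "aggregate", "summarize"])
ws.write("executor", "row_count", 1200)
```

Keeping the history as a separate, append-only list is one way to let a later agent (or a human) see which role produced each entry.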

The Core Problem: Limitations of Single-Agent LLMs

The paper identifies specific failure modes of current single-agent LLM systems:

- Planning Breakdowns: Difficulty in creating and adhering to a long, coherent plan.

- Tool Misuse: Incorrectly sequencing API calls or forgetting the state returned by previous tools.

- Error Propagation: A mistake in an early step cascades through later steps with no mechanism for correction.

- Context Dilution: The core instruction and goal can get "lost" in a long conversation history filled with intermediate reasoning steps.

By decomposing the task, the framework aims to contain errors within a specific agent's domain and allow other agents (like a verifier) to identify and rectify them before proceeding.
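A toy sketch of that containment idea follows. The `executor` and `verifier` functions are hypothetical stand-ins (plain arithmetic instead of LLM calls), and the fault is injected on purpose; the point is only that an independent check can stop a bad result before later steps consume it.

```python
def executor(step: str) -> dict:
    # Stand-in for a tool-calling agent; deliberately returns a wrong sum for one step.
    a, b = (int(x) for x in step.split("+"))
    result = a + b + (1 if step == "2+2" else 0)  # injected fault for illustration
    return {"step": step, "result": result}


def verifier(entry: dict) -> bool:
    # Independent re-check of the executor's output.
    a, b = (int(x) for x in entry["step"].split("+"))
    return entry["result"] == a + b


def run_with_verification(steps: list[str]) -> tuple[list[dict], list[dict]]:
    # Only verified entries are accepted; the rest are set aside for correction.
    accepted, rejected = [], []
    for step in steps:
        entry = executor(step)
        (accepted if verifier(entry) else rejected).append(entry)
    return accepted, rejected


accepted, rejected = run_with_verification(["1+1", "2+2", "3+4"])
```

In a real system the rejected entries would presumably be sent back for another attempt rather than just collected.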

How the Shared Workspace Framework Would Work

The full technical details and implementation are in the paper itself, but the proposed workflow likely follows this pattern:

- Task Decomposition & Assignment: A master or router agent analyzes the initial user query and decomposes it into sub-tasks. It assigns these sub-tasks to the appropriate specialized agents.

- Agent Specialization: Each agent is prompted or fine-tuned for its specific role. A Planner Agent focuses on high-level strategy. An Executor Agent is optimized for correctly formatting and calling external tools/APIs. A Critic/Verifier Agent evaluates the correctness and completeness of results.

- Collaboration via Shared Workspace: Agents do not pass messages directly to each other. Instead, they post their outputs (a plan, a code snippet, a result, a critique) to the shared workspace. The next agent consults the workspace to understand the current state and performs its own task, updating the workspace in turn.

- Orchestration: A lightweight orchestration layer manages the flow, determining which agent should act next based on the state of the workspace, until a final answer is produced and validated.
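The steps above can be sketched as a small state-driven loop. Everything here is an assumption made for illustration: a plain `dict` stands in for the workspace, the three agents are trivial functions rather than LLM calls, and `next_agent` is a hypothetical routing rule, not the paper's.

```python
from typing import Callable, Optional

Workspace = dict  # hypothetical: a plain dict stands in for the shared workspace
Agent = Callable[[Workspace], None]


def planner(ws: Workspace) -> None:
    ws["plan"] = ["double the input"]


def executor(ws: Workspace) -> None:
    ws["result"] = ws["input"] * 2


def verifier(ws: Workspace) -> None:
    ws["validated"] = ws["result"] == ws["input"] * 2


AGENTS: dict[str, Agent] = {"planner": planner, "executor": executor, "verifier": verifier}


def next_agent(ws: Workspace) -> Optional[str]:
    # Routing depends only on what is already in the workspace.
    if "plan" not in ws:
        return "planner"
    if "result" not in ws:
        return "executor"
    if "validated" not in ws:
        return "verifier"
    return None  # nothing left to do


def orchestrate(ws: Workspace, max_steps: int = 10) -> list[str]:
    # Run agents one at a time until the router reports completion.
    trace = []
    for _ in range(max_steps):
        name = next_agent(ws)
        if name is None:
            break
        AGENTS[name](ws)
        trace.append(name)
    return trace


ws = {"input": 21}
trace = orchestrate(ws)
```

Note that no agent calls another directly; each one only touches the workspace, and the orchestrator decides the order from its contents.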

This design separates concerns, making the system more modular, interpretable (you can inspect the workspace), and potentially more robust. It also allows for non-LLM components, like code interpreters or database clients, to be cleanly integrated as tools whose I/O is logged in the workspace.
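One way a non-LLM tool's I/O might be logged is a thin wrapper around the tool function. This is a sketch under assumptions: `logged_tool` and `tool_log` are hypothetical names, and the log is a plain list standing in for the tool-call section of a workspace.

```python
import json
from typing import Any, Callable


def logged_tool(name: str, fn: Callable[..., Any], log: list[dict]) -> Callable[..., Any]:
    """Wrap a plain function so each call's inputs and output land in `log`."""
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        output = fn(*args, **kwargs)
        log.append({"tool": name, "args": list(args), "kwargs": kwargs, "output": output})
        return output
    return wrapper


tool_log: list[dict] = []  # hypothetical: the tool-call section of the workspace
parse_json = logged_tool("json_loads", json.loads, tool_log)
add = logged_tool("add", lambda a, b: a + b, tool_log)

record = parse_json('{"units": 3, "bonus": 4}')
total = add(record["units"], record["bonus"])
```

Because every call is recorded with its arguments and output, a verifier agent or a human reviewer can inspect exactly what each tool returned.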

Potential Implications and Required Validation

If successfully implemented and validated, this framework could lead to more reliable LLM systems for:

- Complex Code Generation: Where planning, writing, testing, and debugging are separate phases.

- Sophisticated Research & Analysis: Requiring iterative search, synthesis, and citation.

- Business Process Automation: Involving multiple decision points and external system checks.

The critical next step is empirical validation. The paper's claims need to be tested against established benchmarks for agentic reasoning and tool use (e.g., SWE-bench, WebArena, AgentBench). Key metrics would include task success rate, robustness to ambiguity, and efficiency, measured as total token cost across all agents. The overhead of managing multiple agents and the workspace is justified only if it buys a significant improvement in task performance.
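As a rough illustration of how success rate and token cost could be reported together, the sketch below computes both from per-run records. The `Run` record and the numbers in `runs` are made up for the example and are not results from the paper.

```python
from dataclasses import dataclass


@dataclass
class Run:
    succeeded: bool
    tokens_by_agent: dict[str, int]  # tokens consumed by each agent in this run


def success_rate(runs: list[Run]) -> float:
    return sum(r.succeeded for r in runs) / len(runs)


def mean_total_tokens(runs: list[Run]) -> float:
    # Efficiency is counted across all agents, not per agent.
    return sum(sum(r.tokens_by_agent.values()) for r in runs) / len(runs)


def tokens_per_success(runs: list[Run]) -> float:
    # Total spend divided by solved tasks: failed runs still cost tokens.
    total = sum(sum(r.tokens_by_agent.values()) for r in runs)
    return total / sum(r.succeeded for r in runs)


# Illustrative numbers only.
runs = [
    Run(True, {"planner": 100, "executor": 300, "verifier": 100}),
    Run(False, {"planner": 120, "executor": 280, "verifier": 100}),
    Run(True, {"planner": 80, "executor": 320, "verifier": 100}),
    Run(True, {"planner": 100, "executor": 300, "verifier": 100}),
]
rate = success_rate(runs)
mean_tokens = mean_total_tokens(runs)
cost_per_success = tokens_per_success(runs)
```

Reporting tokens per solved task, rather than tokens per run, makes the trade-off explicit: a multi-agent system that spends more per run can still come out ahead if it solves enough additional tasks.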

gentic.news Analysis

This research direction aligns strongly with the industry-wide pivot from evaluating standalone LLM chat performance to building reliable, compound AI systems. As we covered in our analysis of Meta's Chameleon, the frontier is no longer just about model scale but about novel architectures that chain models and tools effectively. The "shared workspace" concept is a formalization of patterns emerging in practice, such as the CrewAI and AutoGen frameworks, which also employ multi-agent designs but often rely on direct agent-to-agent messaging.

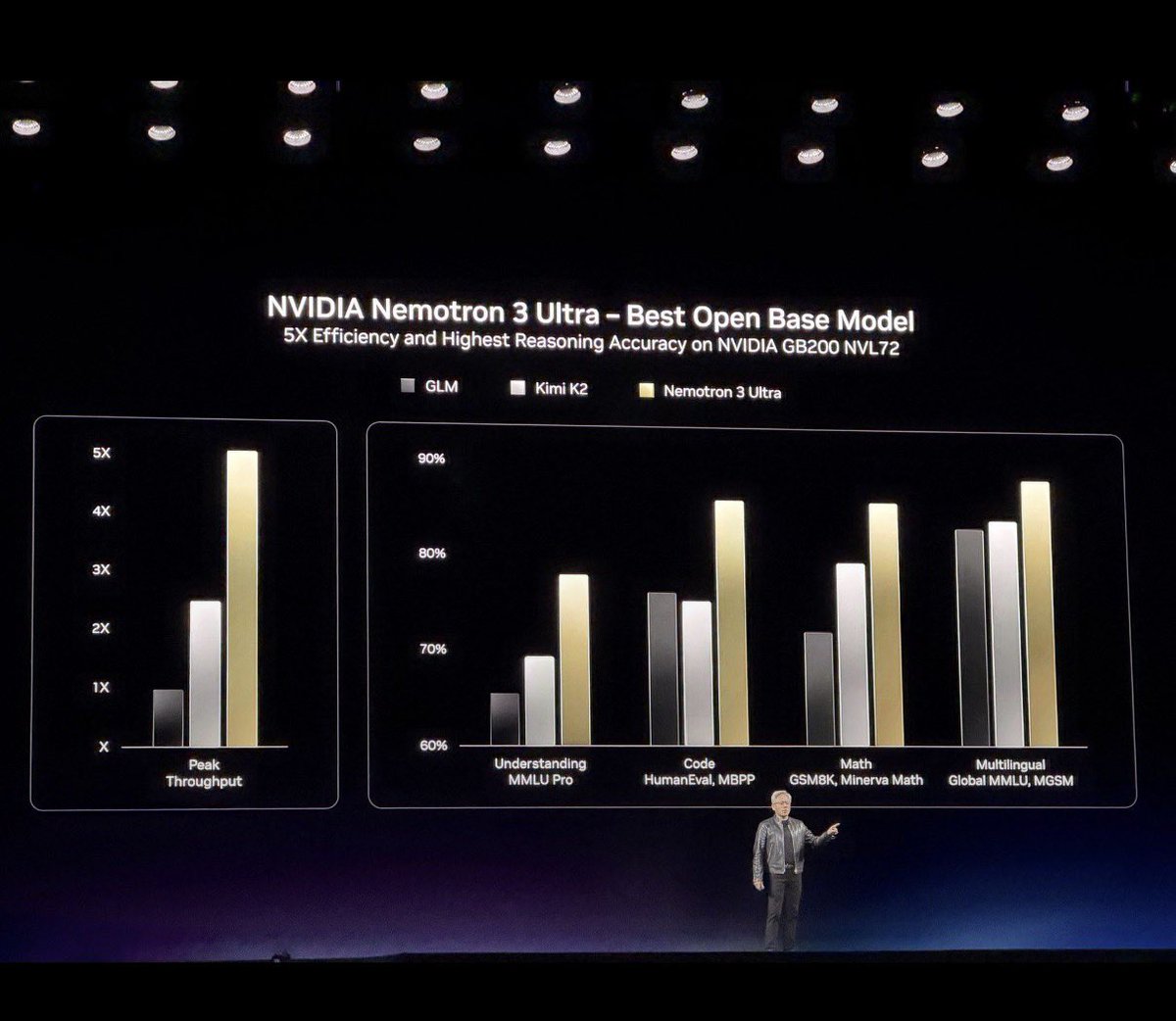

The proposed architecture contrasts with, and could complement, another major trend: the development of massive Mixture-of-Experts (MoE) models such as Mistral AI's Mixtral and xAI's Grok-1. An MoE model routes tokens among expert sub-networks inside a single model, whereas this framework routes sub-tasks between separate model instances or calls. The former optimizes for computational efficiency; the latter, as proposed here, optimizes for reasoning structure and reliability. A hybrid approach, using smaller, specialized MoE models as the agents, is a logical future step.

This work also connects to Google DeepMind's recent efforts on "System 2" reasoning for LLMs, which emphasize slow, deliberate, multi-step thought. The shared workspace provides a concrete mechanism to implement such a system, moving beyond a simple chain-of-thought prompt. The success of this framework will hinge on the design of the workspace itself (its data structure, its update rules, and how effectively it prevents state confusion), which will likely be the focus of significant follow-on research.

Frequently Asked Questions

What is a multi-agent LLM framework?

A multi-agent LLM framework is a system architecture where multiple instances or specializations of large language models work together to solve a single task. Instead of one model trying to do everything, different agents take on specific roles (like planning, execution, or verification), similar to a team of specialists. This is designed to improve performance on complex, multi-step problems.

How does a 'shared workspace' differ from agents just talking to each other?

In a direct messaging approach, agents pass their entire output to the next agent, which can bury critical information in long chat histories and make state tracking difficult. A shared workspace acts as a central, structured database or "whiteboard." Agents read the current state from and write their results to this workspace. This provides a single source of truth for the task's progress, making the system's reasoning more transparent and less prone to context loss.
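The difference can be illustrated with a toy comparison of what the next agent receives in each design. The two helper functions and the sample data are hypothetical, and the sketch assumes that a workspace reader selects only the fields it needs.

```python
def transcript_context(outputs: list[str]) -> str:
    # Direct messaging: the next agent receives everything said so far.
    return "\n".join(outputs)


def workspace_context(workspace: dict[str, str], needed: list[str]) -> str:
    # Shared workspace: the next agent reads only the named fields it needs.
    return "\n".join(f"{k}: {workspace[k]}" for k in needed)


goal = "Report total revenue for March"
reasoning = ["scratch reasoning step %d ..." % i for i in range(50)]
outputs = [goal] + reasoning + ["revenue_total=1234"]

workspace = {"goal": goal, "revenue_total": "1234", "scratch": " ".join(reasoning)}

long_ctx = transcript_context(outputs)
short_ctx = workspace_context(workspace, ["goal", "revenue_total"])
```

Here the goal and the key result sit at opposite ends of the transcript, separated by fifty lines of scratch work, while the workspace read returns just those two fields.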

What are the main challenges with this multi-agent approach?

The primary challenges are increased complexity and cost. Orchestrating multiple agents requires careful design to avoid infinite loops or deadlocks. Each agent consumes tokens, so the total computational cost (latency and API expense) can be significantly higher than a single-agent approach. The framework must demonstrate that the performance gains outweigh this added cost and complexity.
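A common guard against runaway loops is a hard cap on steps and tokens. The sketch below is one assumed way to do that; `run_guarded`, `BudgetExceeded`, and the contract that each step returns its token spend are all hypothetical.

```python
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when a run hits its step or token cap without finishing."""


def run_guarded(step: Callable[[dict], int], state: dict,
                max_steps: int = 8, max_tokens: int = 1000) -> dict:
    # `step` mutates the state and returns the tokens it spent (hypothetical contract).
    spent = 0
    for i in range(max_steps):
        if state.get("done"):
            return state
        spent += step(state)
        state["steps"] = i + 1
        state["tokens"] = spent
        if spent > max_tokens:
            raise BudgetExceeded(f"token cap exceeded after {i + 1} steps")
    if state.get("done"):
        return state
    raise BudgetExceeded(f"no final answer after {max_steps} steps")


def stubborn_critic_loop(state: dict) -> int:
    # Executor and critic keep bouncing the same draft; "done" is never set.
    state["revisions"] = state.get("revisions", 0) + 1
    return 50


state = {}
try:
    run_guarded(stubborn_critic_loop, state, max_steps=5)
    stopped = False
except BudgetExceeded:
    stopped = True
```

The cap does not fix the underlying disagreement between agents, but it turns an unbounded loop into a bounded, observable failure with a known maximum cost.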

Has this been implemented yet, and where can I find the paper?

The source is a tweet highlighting a new research paper. The full paper, which contains the detailed methodology, experiments, and results, would typically be published on arXiv. To evaluate the claims, one must review the paper's empirical benchmarks comparing the multi-agent shared workspace framework against strong single-agent and other multi-agent baselines on standard reasoning tasks.