What Happened

A new command-line tool called llmfit aims to solve a common frustration for developers running large language models (LLMs) locally: downloading models only to find they won't run on available hardware due to memory constraints.

The tool scans the system's RAM, CPU, and GPU resources, then cross-references that information against a database of 497 models from 133 providers, including the Llama, Mistral, DeepSeek, and Qwen families. For each model, llmfit provides scores across four dimensions: quality, speed, fit (to your system), and context length.
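To make the idea of a "fit" check concrete, here is a minimal sketch of how a model's weight footprint could be compared against available memory. It is not llmfit's actual scoring method, which the announcement does not describe. The `ModelSpec` type, the 20% overhead factor, and the "comfortable"/"tight"/"too large" thresholds are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ModelSpec:
    name: str
    params_billion: float   # total parameter count, in billions
    bits_per_weight: float  # e.g. 16 for FP16, 4 for 4-bit quantization


def estimate_weights_gb(spec: ModelSpec, overhead: float = 1.2) -> float:
    """Rough memory estimate: weight bytes times a flat overhead factor.

    The 1.2 overhead (KV cache, activations, runtime buffers) is an
    assumed placeholder, not a measured value.
    """
    weight_bytes = spec.params_billion * 1e9 * spec.bits_per_weight / 8
    return weight_bytes * overhead / 1e9


def classify_fit(spec: ModelSpec, available_gb: float) -> str:
    """Bucket a model by how much of the available memory it would use."""
    need = estimate_weights_gb(spec)
    if need <= 0.8 * available_gb:   # assumed headroom threshold
        return "comfortable"
    if need <= available_gb:
        return "tight"
    return "too large"


if __name__ == "__main__":
    candidates = [
        ModelSpec("7B @ 4-bit", 7, 4),
        ModelSpec("13B @ 8-bit", 13, 8),
        ModelSpec("7B @ FP16", 7, 16),
    ]
    for spec in candidates:
        print(spec.name, round(estimate_weights_gb(spec), 1), classify_fit(spec, 16.0))
```

A real tool would also need to detect memory at runtime and distinguish GPU VRAM from system RAM; this sketch takes the available figure as an input to stay self-contained.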

A key technical feature is its awareness of Mixture-of-Experts (MoE) architectures like Mixtral and DeepSeek-V3. Because only a subset of experts is active per token, these models can have lower effective memory requirements than their total parameter counts suggest. llmfit accounts for this, so users are less likely to rule out capable models they wrongly assume won't fit.
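The arithmetic behind the MoE point can be sketched with Mixtral 8x7B, which Mistral AI describes as having 46.7B total parameters with about 12.9B used per token. The helper below is illustrative and not taken from llmfit. It assumes 4-bit weights and ignores KV cache and runtime overhead. It also assumes the inference runtime can keep inactive experts outside GPU memory (for example, offloaded to system RAM); without that, all weights still have to be resident.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weight storage in GB: billions of parameters times bytes per parameter."""
    return params_billion * bits_per_weight / 8


# Published figures for Mixtral 8x7B (total vs. active per token).
TOTAL_PARAMS_B = 46.7
ACTIVE_PARAMS_B = 12.9

if __name__ == "__main__":
    bits = 4  # assumed 4-bit quantization
    all_resident = weights_gb(TOTAL_PARAMS_B, bits)
    active_only = weights_gb(ACTIVE_PARAMS_B, bits)
    print(f"all experts resident: {all_resident:.2f} GB")
    print(f"active experts only:  {active_only:.2f} GB")
```

Under these assumptions the gap is roughly 23 GB versus 6.5 GB, which is why a tool that only looks at total parameter count could wrongly exclude an MoE model.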

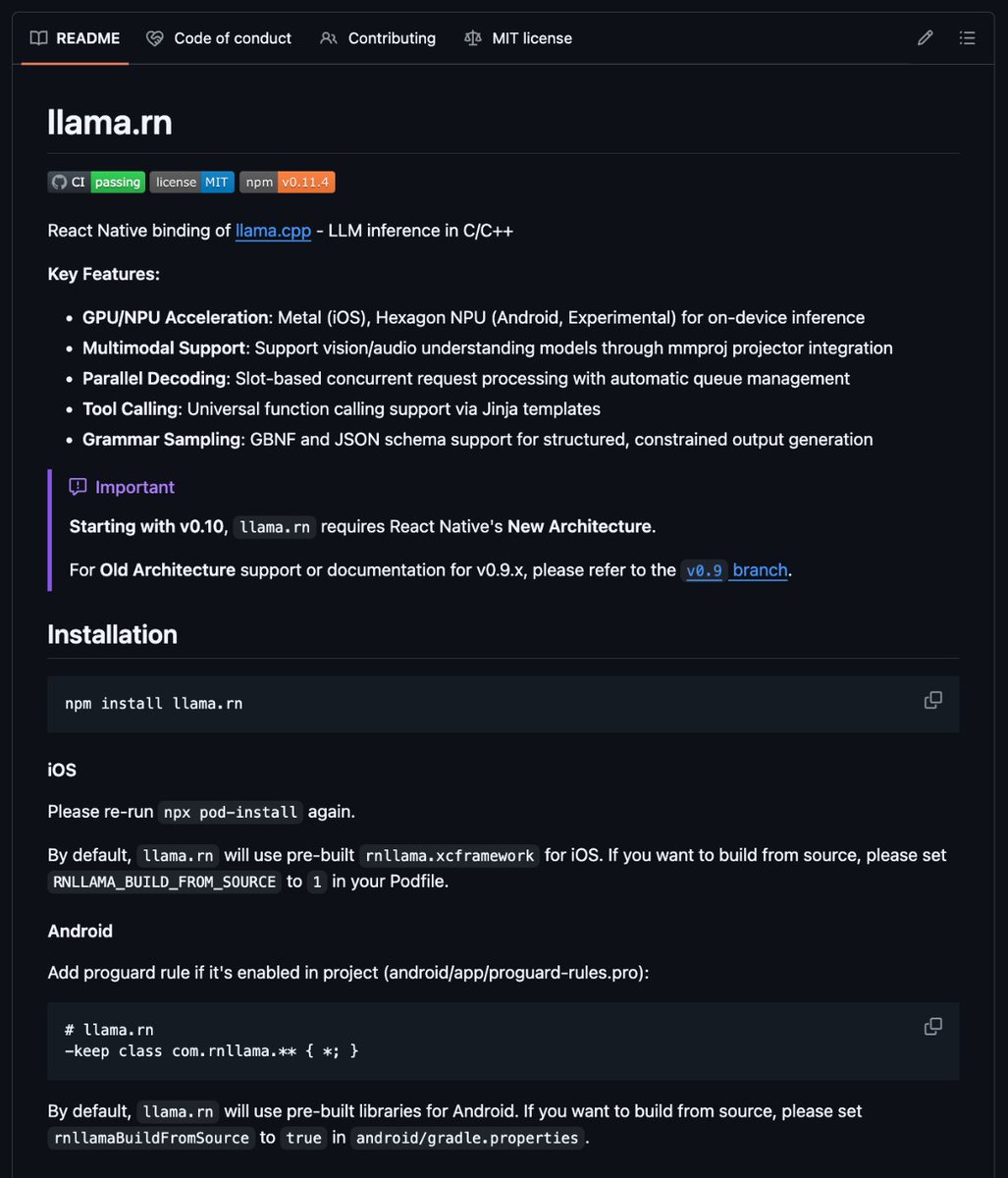

Once a suitable model is identified, the tool can pull it directly via Ollama from its Terminal User Interface (TUI), streamlining the workflow from discovery to deployment.
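The announcement does not show how llmfit invokes Ollama internally, but the equivalent manual step is Ollama's `ollama pull <model>` command. The sketch below wraps that command from a script. The function names and the `dry_run` flag are hypothetical, and `mistral` is used only as an example model tag.

```python
import shutil
import subprocess


def build_pull_command(model: str) -> list[str]:
    """Return the argv for pulling a model with the Ollama CLI."""
    return ["ollama", "pull", model]


def pull_model(model: str, dry_run: bool = False) -> int:
    """Pull a model via Ollama and return the process exit code.

    With dry_run=True the command is only printed, which is useful for
    checking what would run without downloading anything.
    """
    cmd = build_pull_command(model)
    if dry_run:
        print(" ".join(cmd))
        return 0
    if shutil.which("ollama") is None:
        raise FileNotFoundError("ollama executable not found on PATH")
    return subprocess.run(cmd, check=False).returncode


if __name__ == "__main__":
    pull_model("mistral", dry_run=True)
```

Passing the command as a list rather than a shell string avoids quoting problems if a model tag contains unusual characters.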

Context

The proliferation of open-weight LLMs has created a practical problem for developers and researchers: model selection is often a tedious process of checking published hardware requirements, estimating memory overhead, and trial-and-error downloads. This frequently leads to out-of-memory (OOM) crashes, wasted bandwidth, and frustration.

Tools like Ollama and LM Studio have simplified local model management and serving, but the initial step of choosing a model that fits a specific system's constraints has remained manual. llmfit attempts to automate this step with a system-aware recommendation engine.

Its database of nearly 500 models suggests a focus on breadth, covering major open-weight families and their variants (different sizes and quantizations). The "quality" and "speed" scores likely incorporate known benchmark results (such as MMLU or MT-Bench) and published inference performance data, though the exact methodology isn't detailed in the announcement.

The direct integration with Ollama positions llmfit as a front-end discovery layer for an existing, popular local inference ecosystem.