What Happened

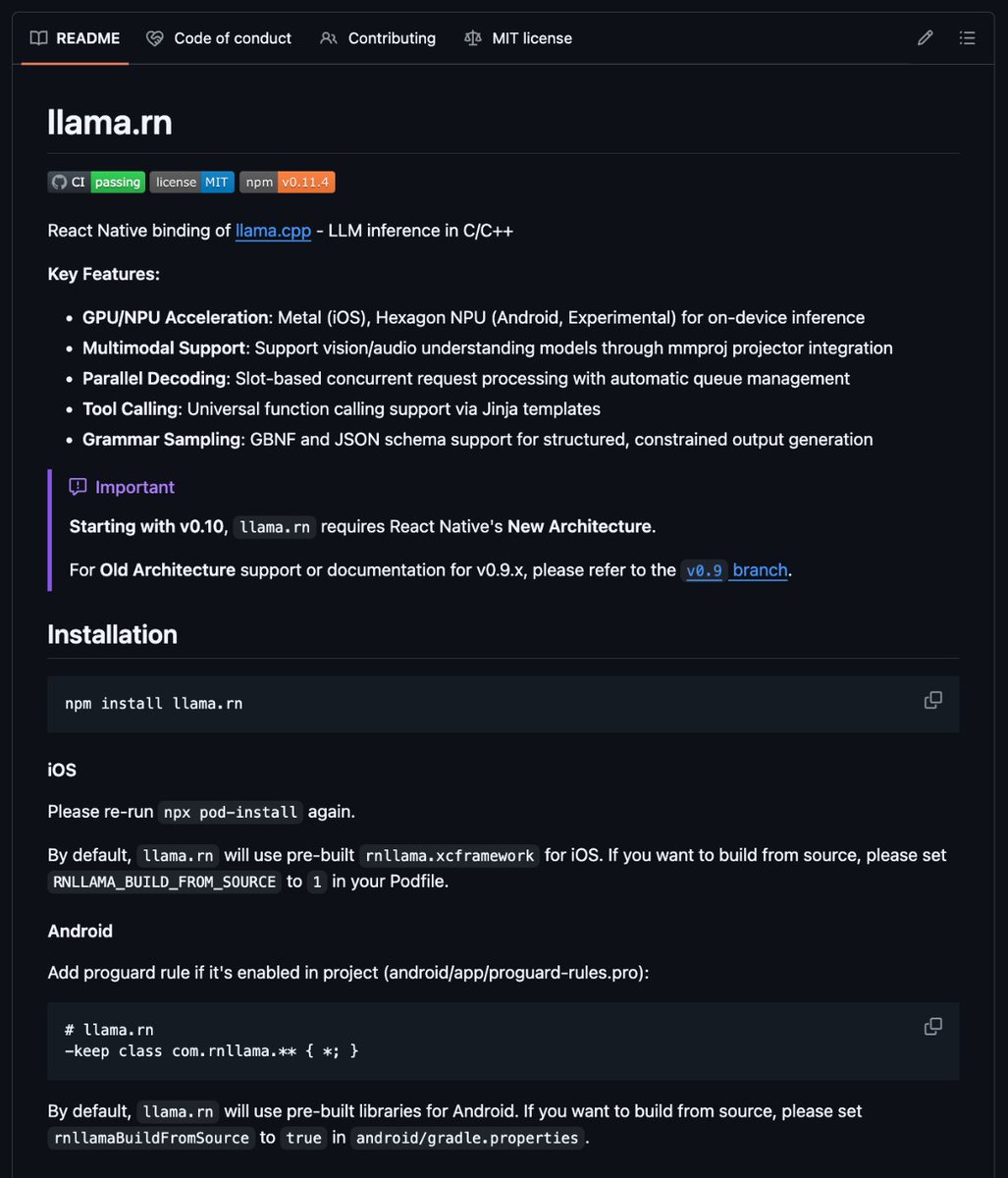

The LlamaFactory project has released a tool that replaces the traditional, code-intensive process of fine-tuning large language models (LLMs) with a visual, no-code interface. According to the announcement, the platform supports fine-tuning for over 100 models, including newly added support for Llama 4, Qwen, DeepSeek, and Mistral.

The tool directly addresses a common pain point in machine learning engineering: the significant overhead required to prepare and launch a fine-tuning job. The source characterizes the old workflow as writing roughly 300 lines of boilerplate code and spending hours debugging CUDA-related issues, a process it describes as frustrating.

Context

Fine-tuning is a critical technique for adapting pre-trained foundation models (like Llama or Mistral) to specific tasks, domains, or datasets. Historically, this process required deep technical expertise in frameworks like PyTorch or Hugging Face Transformers, along with proficiency in managing GPU memory and dependencies. This created a high barrier to entry for practitioners who wanted to customize models without becoming experts in low-level ML engineering.
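In practice, adapting a model to a task usually starts with expressing that task as training records. The sketch below converts raw (input, output) pairs into the Alpaca-style instruction layout (`instruction`, `input`, `output` fields), one of the dataset formats described in LlamaFactory's data documentation. The helper `to_alpaca_records` and the example tickets are hypothetical, and the exact fields a given setup expects should be checked against the project's docs.

```python
import json
from typing import Iterable


def to_alpaca_records(
    pairs: Iterable[tuple[str, str]], instruction: str
) -> list[dict[str, str]]:
    """Convert (input, output) pairs into Alpaca-style instruction records.

    Hypothetical helper for illustration; not part of LlamaFactory.
    """
    records = []
    for source_text, target_text in pairs:
        source_text, target_text = source_text.strip(), target_text.strip()
        # Drop pairs with an empty side: they carry no useful training signal.
        if not source_text or not target_text:
            continue
        records.append(
            {"instruction": instruction, "input": source_text, "output": target_text}
        )
    return records


raw_pairs = [
    ("The invoice is overdue by 30 days.", "billing"),
    ("My password reset link expired.", "account"),
    ("   ", "orphaned label"),
]
records = to_alpaca_records(raw_pairs, "Classify the support ticket into a category.")

# A JSON array of records; this text is what would be saved as the dataset file.
print(json.dumps(records, ensure_ascii=False, indent=2))
print(len(records))  # 2
```

Keeping the conversion in a small, testable function means the same cleaning rules apply whether the data later goes into a no-code interface or a scripted run.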

Projects like LlamaFactory aim to democratize this capability by abstracting away the underlying code. Through a point-and-click web interface (the project's LLaMA Board, launched with `llamafactory-cli webui`), users select a model, choose a dataset, configure training parameters, and launch a job without writing training loops or device-placement logic.
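Behind a form like this, the interface ultimately has to produce a training configuration. The following is a minimal sketch, assuming the form fields map onto keys like those in LlamaFactory's example YAML configs (`model_name_or_path`, `stage`, `finetuning_type`, `dataset`, `template`, and so on). The `FineTuneJob` class, its defaults, and the dataset name `my_tickets` are hypothetical, and key names should be verified against the repository before use.

```python
from dataclasses import asdict, dataclass


@dataclass
class FineTuneJob:
    """Hypothetical model of the choices a no-code form collects.

    Field names are assumed to mirror keys in LlamaFactory's example YAML configs.
    """

    model_name_or_path: str
    dataset: str
    template: str
    stage: str = "sft"
    do_train: bool = True
    finetuning_type: str = "lora"
    lora_target: str = "all"
    cutoff_len: int = 1024
    learning_rate: float = 1.0e-4
    num_train_epochs: float = 3.0
    per_device_train_batch_size: int = 1
    gradient_accumulation_steps: int = 8
    output_dir: str = "saves/demo-lora"

    def validate(self) -> None:
        # Catch the mistakes a form would normally block before a job is launched.
        if self.finetuning_type not in {"lora", "freeze", "full"}:
            raise ValueError(f"unsupported finetuning_type: {self.finetuning_type!r}")
        if self.learning_rate <= 0:
            raise ValueError("learning_rate must be positive")
        if self.per_device_train_batch_size < 1 or self.gradient_accumulation_steps < 1:
            raise ValueError("batch size and accumulation steps must be at least 1")

    def effective_batch_size(self, num_gpus: int = 1) -> int:
        # Samples consumed per optimizer step across all devices.
        return self.per_device_train_batch_size * self.gradient_accumulation_steps * num_gpus

    def to_yaml(self) -> str:
        # Flat "key: value" YAML; every field is a scalar, so no YAML library is needed.
        self.validate()
        lines = []
        for key, value in asdict(self).items():
            rendered = str(value).lower() if isinstance(value, bool) else str(value)
            lines.append(f"{key}: {rendered}")
        return "\n".join(lines) + "\n"


job = FineTuneJob(
    model_name_or_path="Qwen/Qwen2.5-7B-Instruct",
    dataset="my_tickets",  # hypothetical dataset name
    template="qwen",
)
print(job.to_yaml())
print(job.effective_batch_size(num_gpus=2))  # 16
```

Saved to a file, a config of roughly this shape is what the project's command-line path consumes (`llamafactory-cli train <config>.yaml`); the point of the no-code interface is that users never have to write it by hand.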

Supporting more than 100 models implies a unified layer over many model families rather than separate training code for each one; LlamaFactory builds on Hugging Face Transformers and PEFT and loads models from repositories such as the Hugging Face Hub. The specific mention of Llama 4, Qwen, DeepSeek, and Mistral suggests ongoing updates to include the latest model releases from major AI labs.
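One way such a layer can scale to many families is a registry that maps each family to its prompt format, so adding a model means registering one entry rather than writing a new pipeline. The sketch below is illustrative only: `TEMPLATES`, `register`, and `format_prompt` are hypothetical names, not LlamaFactory's internals, and the two formats are simplified (no BOS tokens, system prompts, or multi-turn history).

```python
from typing import Callable

PromptFormatter = Callable[[str], str]

# Hypothetical registry: model family name -> function that renders one user turn.
TEMPLATES: dict[str, PromptFormatter] = {}


def register(family: str) -> Callable[[PromptFormatter], PromptFormatter]:
    # Decorator that records a formatter under a family name.
    def decorator(fn: PromptFormatter) -> PromptFormatter:
        TEMPLATES[family] = fn
        return fn

    return decorator


@register("qwen")
def chatml(user_msg: str) -> str:
    # ChatML-style turn markers, as used by Qwen chat models (simplified).
    return f"<|im_start|>user\n{user_msg}<|im_end|>\n<|im_start|>assistant\n"


@register("mistral")
def mistral_inst(user_msg: str) -> str:
    # [INST] ... [/INST] wrapper used by Mistral instruct models (simplified).
    return f"[INST] {user_msg} [/INST]"


def format_prompt(family: str, user_msg: str) -> str:
    # Dispatch to the registered formatter; unknown families fail loudly.
    try:
        formatter = TEMPLATES[family]
    except KeyError:
        raise ValueError(f"no template registered for {family!r}") from None
    return formatter(user_msg)


print(format_prompt("mistral", "Summarize this ticket."))
```

The training code downstream only ever calls `format_prompt`, which is what keeps per-family differences contained in one place.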

Implications

The primary value proposition is a drastic reduction in setup time and complexity. Engineers and researchers can potentially shift focus from infrastructure debugging to experiment design and evaluation. For small teams or individual developers, this lowers the cost of prototyping custom model variants.

However, the announcement is light on technical specifics. It omits key details for practitioners: supported fine-tuning methods (e.g., LoRA, QLoRA, full-parameter), the largest model sizes that can be trained, GPU requirements, supported dataset formats, and whether the tool is open source or a hosted service. The accompanying link likely leads to a GitHub repository or documentation with these details; the open-source LLaMA-Factory repository on GitHub, for example, documents LoRA, QLoRA, freeze, and full-parameter tuning, though readers should confirm which of these the no-code interface exposes.
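The choice of method largely determines GPU requirements. As a back-of-the-envelope sketch: LoRA freezes a weight matrix W of shape d_out × d_in and trains two low-rank factors B (d_out × r) and A (r × d_in), while QLoRA additionally stores the frozen base weights in 4-bit precision. The helper functions below are hypothetical, and the memory figure covers weights only, ignoring activations, gradients, and optimizer state.

```python
def full_params(d_out: int, d_in: int) -> int:
    # Full-parameter tuning updates every entry of W (d_out x d_in).
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    # LoRA trains B (d_out x rank) and A (rank x d_in); the weight update is B @ A.
    return rank * (d_out + d_in)


def weight_gb(num_params: float, bits_per_param: float) -> float:
    # Storage for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes).
    return num_params * bits_per_param / 8 / 1e9


# One 4096 x 4096 projection matrix, LoRA rank 8.
full = full_params(4096, 4096)
lora = lora_params(4096, 4096, rank=8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%

# Frozen weights of a 7B-parameter model: 16-bit vs 4-bit (as in QLoRA).
print(weight_gb(7e9, 16), weight_gb(7e9, 4))  # 14.0 3.5
```

At rank 8 the adapter for this matrix is under 0.4% of its size, and 4-bit storage cuts the frozen weights to a quarter of their 16-bit footprint; together these are the main reasons LoRA and QLoRA lower the hardware bar relative to full-parameter tuning.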

The trend toward no-code and low-code ML tooling appears to be accelerating, and LlamaFactory positions itself in a niche focused specifically on LLM fine-tuning. Its direct competitors include other open-source projects such as Axolotl, as well as commercial platforms from cloud providers (AWS SageMaker, Google Vertex AI) and startups.