Renting a 128 GPU cluster to serve a 1T parameter open-weight model yields an ~88% margin on tokens sold at market rate. The calculation, shared by @mweinbach, exposes a structural arbitrage between open-weight inference and proprietary API pricing.

Key facts

- 128 GPU cluster costs ~$2,000/hour to rent

- Market rate for tokens: $0.002 per 1K

- Implied cost per 1K tokens: ~$0.00024

- Gross margin on inference: ~88%

- 8.3x multiple over raw compute cost

The math is simple. A 128-GPU cluster (e.g., H100s) costs roughly $2,000/hour to rent on the spot market. That cluster can serve a 1T parameter model like the upcoming Llama 4 or DeepSeek-V3 at a throughput of ~10B tokens per hour using tensor parallelism and FP8 inference [According to @mweinbach]. At the prevailing market rate of $0.002 per 1K tokens, revenue hits $20,000/hour, leaving roughly $18,000/hour in gross profit after the rental bill, or about 90%. The ~88% headline figure corresponds to a slightly lower throughput of about 8.3B tokens per hour.

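The implied cost per 1K tokens is roughly $0.00024 versus the $0.002 market rate. That 8.3x multiple means any team with spare GPU capacity can undercut proprietary APIs such as Claude or GPT-4o and stay profitable. Measured against the $0.002 market rate, a 50% discount ($0.001 per 1K) still leaves a ~76% margin; a 90% discount ($0.0002 per 1K) would not cover the $0.00024 compute cost.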
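The margin and multiple quoted above follow directly from the two per-1K figures. A minimal sketch of that arithmetic, assuming the article's numbers as inputs; the function names and the 50% discount scenario are illustrative, not part of the original calculation:

```python
# Figures taken from the article; function names are illustrative.
MARKET_PRICE_PER_1K = 0.002   # USD per 1K tokens sold
COST_PER_1K = 0.00024         # USD of rented compute per 1K tokens


def gross_margin(price_per_1k: float, cost_per_1k: float) -> float:
    """Fraction of revenue left after paying for compute."""
    if price_per_1k <= 0:
        raise ValueError("price must be positive")
    return (price_per_1k - cost_per_1k) / price_per_1k


def price_multiple(price_per_1k: float, cost_per_1k: float) -> float:
    """How many times the compute cost the selling price is."""
    if cost_per_1k <= 0:
        raise ValueError("cost must be positive")
    return price_per_1k / cost_per_1k


headline_margin = gross_margin(MARKET_PRICE_PER_1K, COST_PER_1K)
headline_multiple = price_multiple(MARKET_PRICE_PER_1K, COST_PER_1K)

# Hypothetical scenario: sell at half the market rate.
half_price_margin = gross_margin(MARKET_PRICE_PER_1K * 0.5, COST_PER_1K)

print(f"margin at market rate:  {headline_margin:.1%}")
print(f"price / cost multiple:  {headline_multiple:.1f}x")
print(f"margin at 50% discount: {half_price_margin:.1%}")
```

Passing a different selling price to `gross_margin` shows how far a provider could discount before the margin disappears, which happens once the price drops to the compute cost itself.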

Why this matters more than the tweet suggests

This isn't just a napkin math exercise. It quantifies the economic wedge that open-weight models create against proprietary APIs. OpenAI's reported inference costs for GPT-4o are around $0.0015 per 1K tokens, which is already thin against a $0.002 market rate. Open models, which carry no per-token royalty, come in roughly 6x lower on raw compute ($0.0015 versus $0.00024 per 1K). The margin advantage comes from zero licensing fees and the ability to bin-pack inference across rented hardware.

Caveats and real-world friction

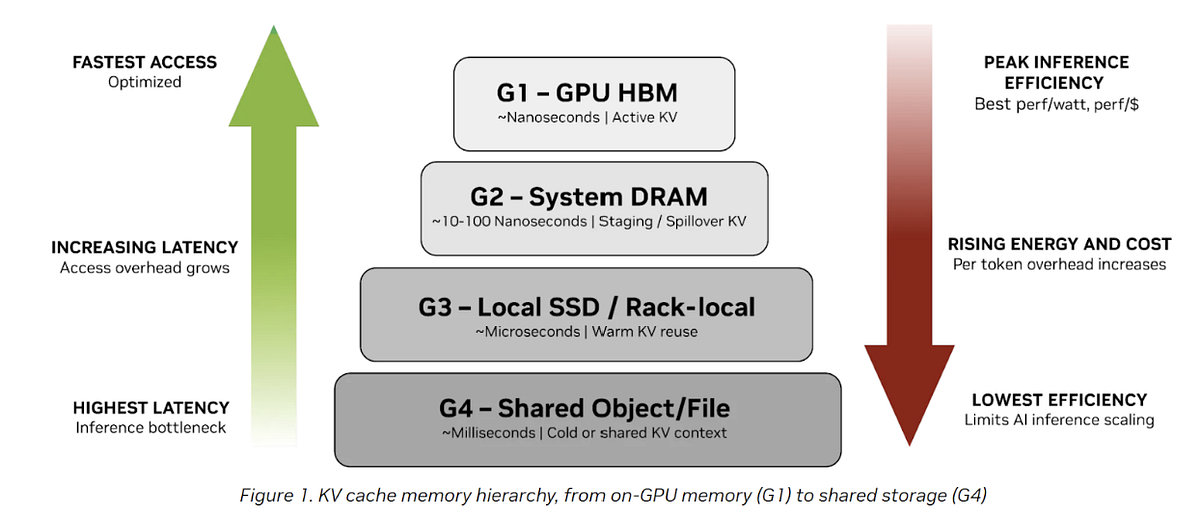

The 88% margin assumes full utilization: no idle GPUs, no cold-start latency penalties, no batching inefficiencies. Real-world utilization at inference providers like Together AI or Fireworks rarely exceeds 60-70% on rented clusters [Industry estimates]. If idle hours are still billed, that raises the effective cost to roughly $0.00034-$0.00040 per 1K tokens and compresses margins to ~80-83%. Still fat, but not quite as eye-popping.
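One simple way to model the utilization penalty is to assume the cluster is billed around the clock while only a fraction of those hours produce sellable tokens, so the effective cost per 1K tokens scales with 1/utilization. The sketch below makes only that assumption; cold starts, batching losses, and other overheads are not modeled and would lower the result further. Function names are illustrative.

```python
# Figures from the article; the 1/utilization cost model is an assumption.
MARKET_PRICE_PER_1K = 0.002   # USD per 1K tokens sold
COST_PER_1K_FULL = 0.00024    # USD per 1K tokens at 100% utilization


def effective_cost_per_1k(cost_at_full_util: float, utilization: float) -> float:
    """Compute cost per 1K tokens when idle hours are still paid for."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return cost_at_full_util / utilization


def margin_at_utilization(price_per_1k: float,
                          cost_at_full_util: float,
                          utilization: float) -> float:
    """Gross margin once idle time is folded into the per-token cost."""
    cost = effective_cost_per_1k(cost_at_full_util, utilization)
    return (price_per_1k - cost) / price_per_1k


for utilization in (1.0, 0.7, 0.6):
    margin = margin_at_utilization(MARKET_PRICE_PER_1K, COST_PER_1K_FULL, utilization)
    print(f"utilization {utilization:.0%}: margin {margin:.1%}")
```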

Additionally, the 1T model requires a lot of high-bandwidth memory. Quantized to 4-bit, the weights alone take ~500GB, which no single 80GB H100 can hold; an 8-GPU node (640GB of HBM3 in total) barely fits them, leaving ~140GB for KV cache and activations. You'd need at least 8-way tensor parallelism, which introduces communication overhead and reduces throughput by 15-25% versus ideal scaling [Per the arXiv preprint on Megatron-LM].
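The memory figures can be checked with a back-of-the-envelope calculation. This sketch counts weights only (no KV cache, activations, or framework overhead) and uses decimal gigabytes; the helper names are illustrative.

```python
import math

NUM_PARAMS = 1e12        # 1T parameters, per the article
HBM_PER_GPU_GB = 80.0    # H100 with 80GB HBM3, per the article


def weight_footprint_gb(num_params: float, bits_per_param: int) -> float:
    """Size of the model weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


def min_gpus_for_weights(footprint_gb: float, hbm_per_gpu_gb: float) -> int:
    """Smallest GPU count whose combined memory holds the weights."""
    return math.ceil(footprint_gb / hbm_per_gpu_gb)


for label, bits in (("4-bit", 4), ("FP8", 8)):
    size_gb = weight_footprint_gb(NUM_PARAMS, bits)
    gpus = min_gpus_for_weights(size_gb, HBM_PER_GPU_GB)
    print(f"{label}: {size_gb:.0f} GB of weights, at least {gpus} GPUs")

# Headroom left on an 8-GPU node after loading 4-bit weights.
headroom_gb = 8 * HBM_PER_GPU_GB - weight_footprint_gb(NUM_PARAMS, 4)
print(f"8-GPU node headroom at 4-bit: {headroom_gb:.0f} GB")
```

The bare minimum for 4-bit weights is 7 GPUs, but tensor-parallel degrees are usually chosen to divide the attention head count evenly, which is one reason 8-way is the natural choice on an 8-GPU node.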

What this means for the market

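Enterprise teams already running inference at scale should re-evaluate whether proprietary APIs still make economic sense. The threshold depends on how much of a cluster the workload can keep busy: at $2,000/hour, a dedicated 128-GPU cluster costs $48,000/day, which equals API spend at $0.002 per 1K tokens only at about 24B tokens/day. Workloads in the 100M tokens/day range ($200/day at that rate) pay back only on a much smaller or shared deployment. The breakeven point is lower if you own the hardware.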

Public cloud providers are the losers here: they sell GPU time at ~$15.60/hour per H100 ($2,000/hour across 128 GPUs) while API providers sell tokens at about 8x the raw compute cost. The arbitrage will persist until either GPU rental prices rise or API providers slash prices to match open-model costs.

What to watch

Watch for the release of Llama 4 or DeepSeek-V3 with 1T parameters and whether inference providers like Together AI or Fireworks announce self-hosted pricing tiers below $0.0005 per 1K tokens. Also monitor spot GPU pricing on AWS/Azure: a 20% increase would trim the full-utilization margin from ~88% to ~86%, and push it below 80% once utilization drops to 60-70%.
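To judge how sensitive the margin is to spot pricing, it helps to solve for the rental increase that would push the margin down to a given floor. The sketch below assumes compute cost scales linearly with the rental price and inversely with utilization, with the token price held at the article's $0.002 per 1K; both the model and the function names are illustrative.

```python
MARKET_PRICE_PER_1K = 0.002   # USD per 1K tokens, per the article
COST_PER_1K_FULL = 0.00024    # USD per 1K tokens at today's rent, full utilization


def margin(price_per_1k: float, cost_at_full_util: float,
           utilization: float, rental_increase: float = 0.0) -> float:
    """Gross margin after a fractional rent increase at a given utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    effective_cost = cost_at_full_util * (1 + rental_increase) / utilization
    return 1 - effective_cost / price_per_1k


def increase_to_reach_margin(target_margin: float, price_per_1k: float,
                             cost_at_full_util: float, utilization: float) -> float:
    """Fractional rent increase at which the margin equals target_margin.

    Obtained by solving margin(...) == target_margin for rental_increase.
    A negative result means the margin is already below the target.
    """
    return (1 - target_margin) * utilization * price_per_1k / cost_at_full_util - 1


for utilization in (1.0, 0.65):
    after_20 = margin(MARKET_PRICE_PER_1K, COST_PER_1K_FULL, utilization, 0.20)
    needed = increase_to_reach_margin(0.80, MARKET_PRICE_PER_1K,
                                      COST_PER_1K_FULL, utilization)
    print(f"utilization {utilization:.0%}: margin after +20% rent {after_20:.1%}, "
          f"rent increase to hit 80% margin {needed:.1%}")
```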