In the rapidly evolving landscape of artificial intelligence, one challenge has persisted: large language model (LLM) agents excel at complex tasks, yet they struggle to form meaningful, lasting relationships with individual users. Current AI assistants might remember your last question but fail to learn your preferences, communication style, or unique needs over time. This limitation is more than a technical hurdle; it is a fundamental barrier to building genuinely helpful, personalized AI companions.

A recent paper titled "MAPLE: A Sub-Agent Architecture for Memory, Learning, and Personalization in Agentic AI Systems" proposes an architectural solution to this problem. Published on arXiv in February 2026, the research introduces a framework that rethinks how AI systems handle adaptation. It moves beyond today's monolithic designs toward agents that can learn and evolve alongside their users.

The Core Problem: Architectural Conflation

The MAPLE researchers identify what they call a "critical architectural conflation" in current systems. Most LLM agents treat memory, learning, and personalization as a single, unified capability rather than three distinct mechanisms requiring different infrastructure, operating on different timescales, and benefiting from independent optimization.

Think of it this way: human cognition distinguishes several things. There is short-term memory (what you just heard) and long-term memory (your life experiences). There is learning (extracting patterns from those experiences). And there is the ability to apply what you've learned to new situations. Current AI systems blend these together. The result is agents that might remember facts but neither truly learn from them nor apply that learning in personalized ways.

The MAPLE Solution: A Tripartite Architecture

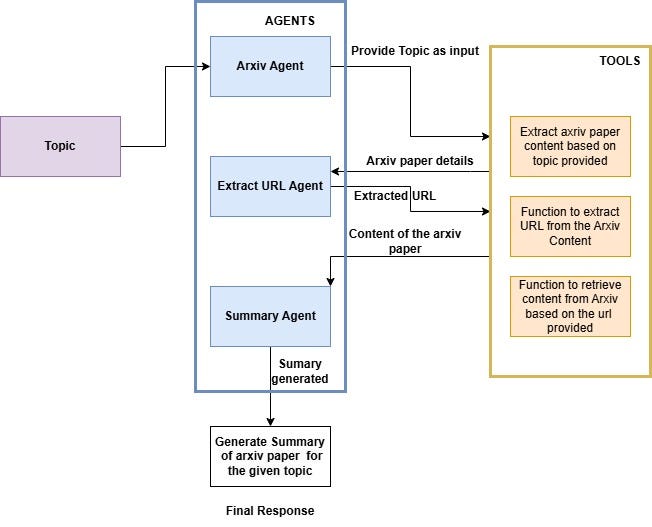

MAPLE—which stands for Memory-Adaptive Personalized LEarning—proposes a principled decomposition where:

Memory handles the storage and retrieval infrastructure, serving as the system's foundational knowledge base. Its role is not just storing conversations but organizing user interactions into structured, accessible repositories.

Learning operates asynchronously to extract intelligence from accumulated interactions. This component analyzes patterns, identifies preferences, and builds models of user behavior without slowing down real-time interactions.

Personalization applies learned knowledge in real-time within finite context budgets, ensuring that each interaction feels tailored to the individual user while maintaining computational efficiency.

Each component operates as a dedicated sub-agent with specialized tooling and well-defined interfaces, creating a modular system where improvements to one component don't require overhauling the entire architecture.
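A small Python sketch can make the decomposition concrete. Everything in it is an illustrative assumption, not MAPLE's actual interfaces or algorithms:

- The class names, the `Interaction` record and the `handle` function are hypothetical.
- The keyword-counting "learner" is a toy stand-in.
- For brevity the learner is called inline on each request. In the architecture described above, learning would run asynchronously.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Interaction:
    user_id: str
    text: str


# Interfaces for the three hypothetical sub-agents.
class MemoryAgent(Protocol):
    def store(self, interaction: Interaction) -> None: ...
    def history(self, user_id: str) -> list[Interaction]: ...


class LearningAgent(Protocol):
    def learn(self, history: list[Interaction]) -> dict[str, float]: ...


class PersonalizationAgent(Protocol):
    def build_context(self, traits: dict[str, float], query: str) -> str: ...


class InMemoryStore:
    """Memory: stores and retrieves interactions, nothing more."""

    def __init__(self) -> None:
        self._log: dict[str, list[Interaction]] = {}

    def store(self, interaction: Interaction) -> None:
        self._log.setdefault(interaction.user_id, []).append(interaction)

    def history(self, user_id: str) -> list[Interaction]:
        return list(self._log.get(user_id, []))


class KeywordLearner:
    """Learning: turns raw history into normalized trait weights (toy keyword counts)."""

    def __init__(self, vocabulary: set[str]) -> None:
        self.vocabulary = vocabulary

    def learn(self, history: list[Interaction]) -> dict[str, float]:
        counts = Counter(
            word
            for item in history
            for word in item.text.lower().split()
            if word in self.vocabulary
        )
        total = sum(counts.values())
        return {word: n / total for word, n in counts.items()} if total else {}


class TopTraitPersonalizer:
    """Personalization: prepends the strongest learned traits to the query."""

    def __init__(self, max_traits: int = 2) -> None:
        self.max_traits = max_traits

    def build_context(self, traits: dict[str, float], query: str) -> str:
        top = sorted(traits, key=lambda t: traits[t], reverse=True)[: self.max_traits]
        return f"[user traits: {', '.join(top)}]\n{query}"


def handle(memory: MemoryAgent, learner: LearningAgent,
           personalizer: PersonalizationAgent, user_id: str, query: str) -> str:
    # Each sub-agent is reached only through its interface, so any one can be swapped.
    traits = learner.learn(memory.history(user_id))
    return personalizer.build_context(traits, query)


memory = InMemoryStore()
for text in ["Please be concise", "Python examples please", "More python"]:
    memory.store(Interaction("u1", text))

prompt = handle(memory, KeywordLearner({"python", "concise", "examples"}),
                TopTraitPersonalizer(), "u1", "How do I read a file?")
print(prompt)
```

`handle` depends only on the three `Protocol` interfaces. That means a vector-backed store or a model-based learner could replace either toy class without changes to the other components.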

Experimental Results: Quantifying Improvement

The research team developed the MAPLE-Personas benchmark to evaluate their architecture, comparing it against stateless baselines and existing approaches. The results were striking:

- 14.6% improvement in personalization score compared to stateless baselines (p < 0.01, Cohen's d = 0.95)

- Trait incorporation rate increased from 45% to 75%

- Significant improvements in consistency and adaptation over extended interactions
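Two kinds of change are easy to conflate in figures like these:

- **Trait incorporation.** A rise from 45% to 75% is 30 percentage points in absolute terms, but roughly a 67% relative increase.
- **Effect size.** Cohen's d expresses a difference in means in units of pooled standard deviation. So d = 0.95 means the compared groups differ by a little under one standard deviation.

The sketch below uses the standard pooled-variance form of d. Whether the paper computed its effect size exactly this way is an assumption, and the function names are hypothetical.

```python
from __future__ import annotations

from statistics import mean, variance


def rate_change(before: float, after: float) -> tuple[float, float]:
    """Return (absolute change in percentage points, relative change in percent)."""
    absolute_pp = (after - before) * 100
    relative_pct = (after - before) / before * 100
    return absolute_pp, relative_pct


def cohens_d(group_a: list[float], group_b: list[float]) -> float:
    """Difference in means divided by the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / (
        n_a + n_b - 2
    )
    return (mean(group_a) - mean(group_b)) / pooled_var ** 0.5


# Trait incorporation rate reported in the article: 45% -> 75%.
abs_pp, rel_pct = rate_change(0.45, 0.75)
print(f"{abs_pp:.1f} percentage points, {rel_pct:.1f}% relative")
```

The 14.6% personalization figure is not labeled as absolute or relative in the summary above, so it is not recomputed here.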

In practical terms, these results point toward AI agents that not only remember your preferences but learn from them and apply that knowledge consistently across different types of interactions.

Technical Implementation: How It Works

The MAPLE architecture implements several innovative technical approaches:

Asynchronous Learning Cycles: Unlike systems that attempt to learn during real-time interactions, MAPLE's learning component operates on separate cycles, analyzing accumulated data to extract patterns without impacting response times.
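One common way to keep learning off the request path is a producer/consumer queue. The response handler only enqueues the interaction, and a background task drains the queue and updates learned state. The sketch below uses Python's `asyncio.Queue` for this. Its assumptions:

- The class and function names are hypothetical.
- Word counting stands in for real pattern extraction.
- MAPLE's actual scheduling (batch jobs, separate processes, or something else) is not specified here.

```python
from __future__ import annotations

import asyncio
import contextlib
from collections import Counter


class BackgroundLearner:
    """Hypothetical learner that processes interactions outside the response path."""

    def __init__(self) -> None:
        self.queue: asyncio.Queue[str] = asyncio.Queue()
        self.trait_counts: Counter[str] = Counter()

    async def run(self) -> None:
        # Consume queued interactions indefinitely, updating learned state as we go.
        while True:
            text = await self.queue.get()
            self.trait_counts.update(text.lower().split())
            self.queue.task_done()


async def respond(learner: BackgroundLearner, message: str) -> str:
    # Real-time path: hand the interaction to the learner and reply immediately.
    learner.queue.put_nowait(message)
    return f"ack: {message}"


async def main() -> tuple[list[str], Counter[str]]:
    learner = BackgroundLearner()
    worker = asyncio.create_task(learner.run())
    replies = [await respond(learner, m) for m in ["prefers python", "python again"]]
    await learner.queue.join()  # demo only: wait until the backlog has been learned
    worker.cancel()
    with contextlib.suppress(asyncio.CancelledError):
        await worker
    return replies, learner.trait_counts


replies, traits = asyncio.run(main())
print(replies, traits["python"])
```

The `queue.join()` call exists only so the demo can inspect the learned counts. In a running service the responder would never wait on the learner.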

Structured Memory Organization: The memory system goes beyond simple vector databases to create organized, hierarchical storage that reflects different types of knowledge and their relationships.
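The article does not spell out MAPLE's storage schema. The following is only one illustration of what "hierarchical" can mean: a tree of named categories, where retrieving a category also returns everything stored beneath it. `MemoryNode` and the category paths are hypothetical. A real system would likely combine such structure with vector search rather than replace it.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MemoryNode:
    """One category in a hypothetical hierarchical memory tree."""
    entries: list[str] = field(default_factory=list)
    children: dict[str, MemoryNode] = field(default_factory=dict)

    def insert(self, path: tuple[str, ...], entry: str) -> None:
        # Walk (and create as needed) the category path, then store the entry.
        node = self
        for key in path:
            node = node.children.setdefault(key, MemoryNode())
        node.entries.append(entry)

    def retrieve(self, path: tuple[str, ...]) -> list[str]:
        """Return every entry stored at `path` or anywhere beneath it."""
        node = self
        for key in path:
            if key not in node.children:
                return []
            node = node.children[key]
        return node.collect()

    def collect(self) -> list[str]:
        # Depth-first gather of this node's entries and all descendants' entries.
        found = list(self.entries)
        for child in self.children.values():
            found.extend(child.collect())
        return found


memory = MemoryNode()
memory.insert(("preferences", "communication"), "prefers short answers")
memory.insert(("preferences", "tools"), "uses Python 3.12")
memory.insert(("episodes", "project-x"), "asked about async I/O")
print(memory.retrieve(("preferences",)))
```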

Context-Aware Personalization: The personalization component intelligently selects which learned traits to apply based on current context, conversation history, and user goals.

Modular Interface Design: Well-defined APIs between components allow for independent development and optimization, creating a flexible system that can evolve as new techniques emerge.

Practical Applications: Beyond Better Chatbots

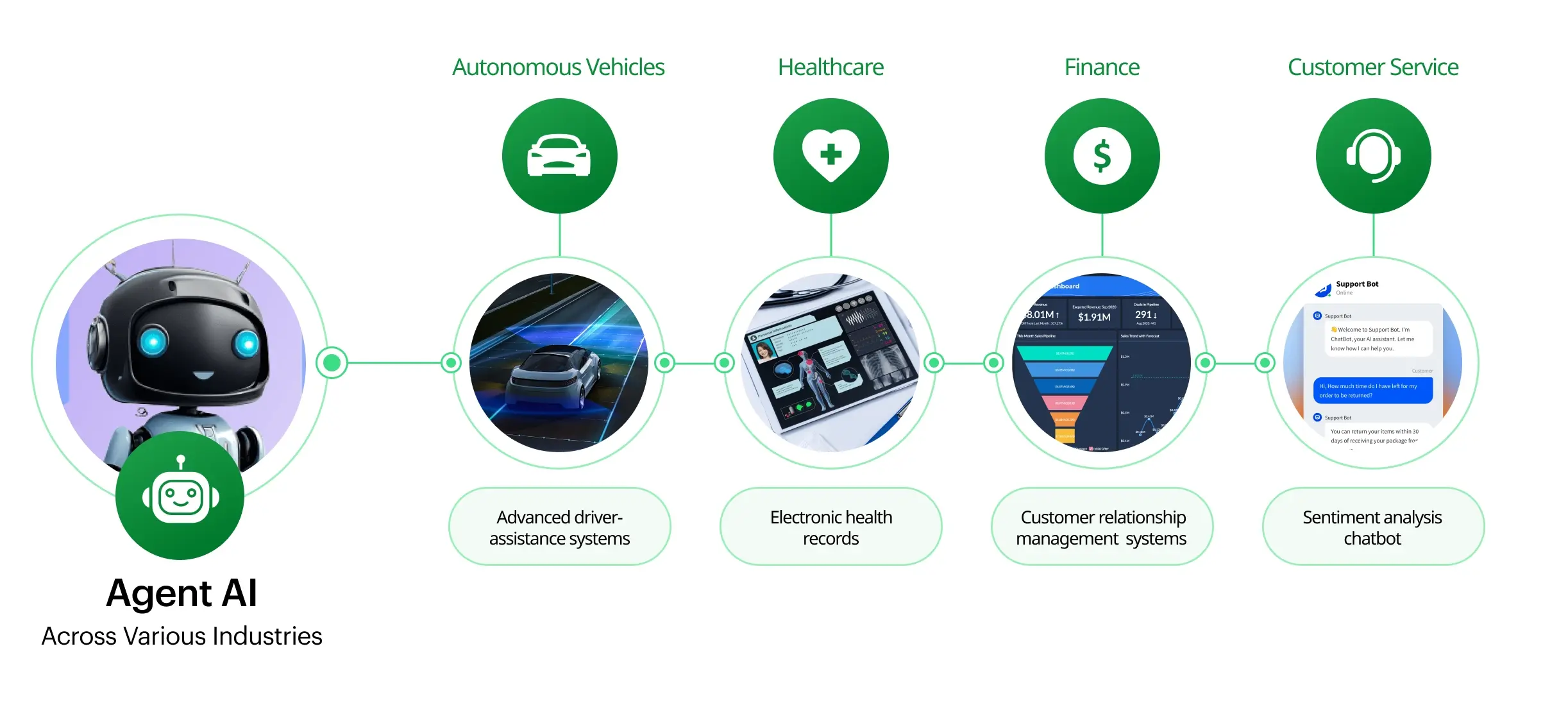

The implications of MAPLE extend far beyond creating more pleasant conversational agents:

Healthcare: AI assistants that genuinely learn patient histories, preferences, and response patterns could provide more personalized care recommendations and follow-up.

Education: Learning systems that adapt not just to student performance but to individual learning styles, motivation patterns, and knowledge gaps.

Enterprise Tools: Business applications that learn user workflows, anticipate needs, and personalize interfaces based on individual working styles.

Accessibility: Systems that adapt to users with different abilities, learning how to best support each individual's unique needs.

Challenges and Future Directions

While MAPLE represents significant progress, the researchers acknowledge several challenges:

Privacy Considerations: Systems that learn deeply about individuals require robust privacy protections and user control over what's learned and remembered.

Computational Efficiency: Maintaining three specialized components requires careful optimization to remain practical for widespread deployment.

Evaluation Complexity: Measuring true personalization and learning remains challenging, requiring new benchmarks and evaluation methodologies.

Integration Complexity: Deploying such systems in real-world applications requires solving significant integration challenges with existing infrastructure.

The research team suggests several promising directions for future work, including exploring different learning algorithms within the framework, developing more sophisticated memory organization schemes, and creating standardized interfaces for sub-agent communication.

The Broader Context: A Shift in AI Architecture

MAPLE arrives at a crucial moment in AI development. As systems become more capable, the focus is shifting from raw capability to usability, reliability, and personal connection. The architecture moves toward more human-like cognitive organization in AI systems. It does so not by mimicking brain biology but through a functional decomposition that reflects how different cognitive processes naturally separate.

This approach aligns with broader trends in AI toward modular, interpretable systems. By separating concerns, MAPLE improves performance and can also make systems easier to understand and debug. That matters more and more as AI is integrated into sensitive applications.

Conclusion: Toward Truly Adaptive AI

The MAPLE architecture represents more than just another technical improvement; it signals a fundamental shift in how we think about AI agents. By recognizing that memory, learning, and personalization require distinct approaches and treating them as separate but interconnected components, researchers have created a framework for building AI that doesn't just perform tasks but genuinely adapts to individual users.

As this approach develops and matures, we may see a new generation of AI systems that feel less like tools and more like partners. Such systems would remember our past interactions, learn from them, and apply that learning to help us more effectively. The 14.6% improvement demonstrated in the research may be only a starting point. The larger potential lies in AI that grows with its users, understanding their needs and preferences in ways current systems cannot.

Source: "MAPLE: A Sub-Agent Architecture for Memory, Learning, and Personalization in Agentic AI Systems" (arXiv:2602.13258v1, February 2026)