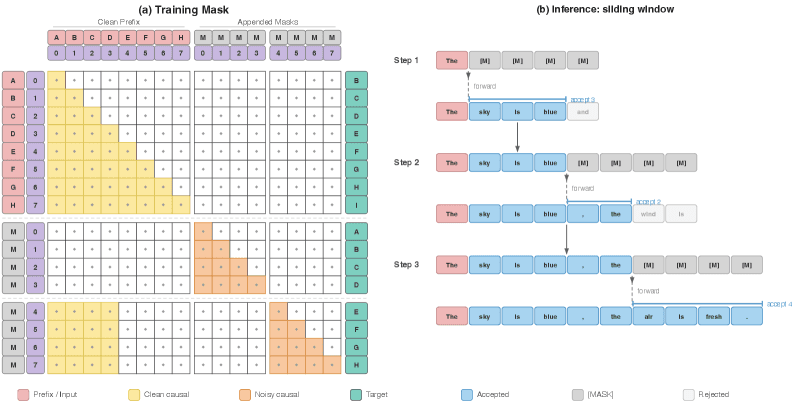

A new method called MARS (Multi-token generation for Autoregressive models) enables standard transformer-based language models to generate multiple tokens per forward pass without any architectural modifications, with reported throughput improvements of 1.5-1.7x at baseline accuracy. The approach uses confidence thresholding to adjust generation speed dynamically in real time, offering a practical acceleration technique that requires no retraining or model changes.

What the Method Does

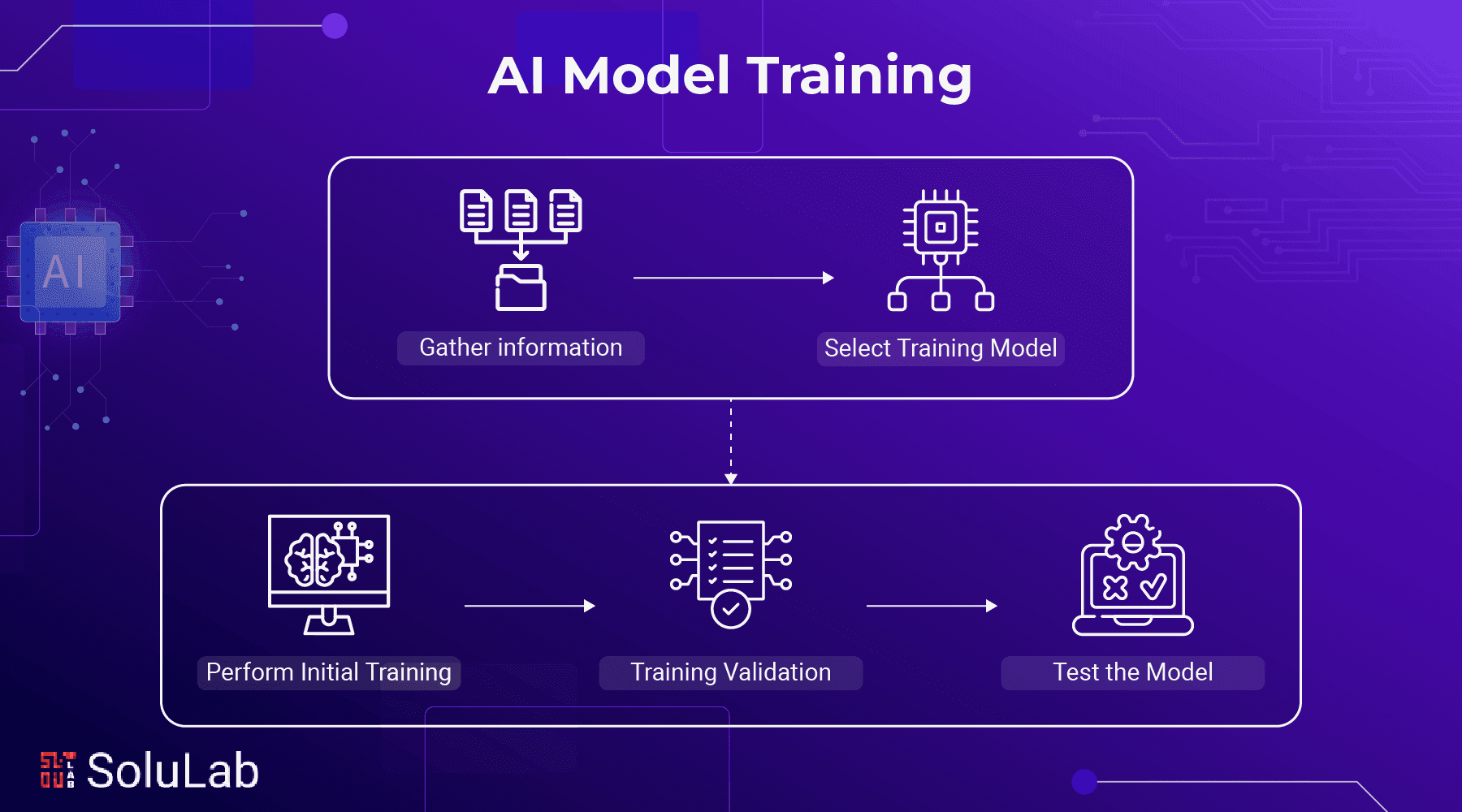

MARS addresses the fundamental bottleneck of autoregressive generation: models typically produce just one token per forward pass, creating sequential dependencies that limit throughput. The core insight is that during generation, some next-token predictions are highly confident, making them suitable candidates for parallel generation without sacrificing quality.

The method works by having the model predict multiple future tokens in a single forward pass when confidence exceeds a threshold. When confidence is lower, it falls back to standard single-token generation. This adjustment happens in real time during inference, so the system can preserve output quality while accelerating generation where possible.
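The source gives no implementation details, so the following is only a minimal sketch of what confidence-gated acceptance could look like, not MARS itself. It assumes a hypothetical forward pass that returns one probability distribution per lookahead position. The function name `accept_confident_prefix`, the `>=` comparison, and the rule that the first token is always emitted are illustrative assumptions.

```python
from typing import List, Sequence


def accept_confident_prefix(
    candidate_probs: Sequence[Sequence[float]], threshold: float
) -> List[int]:
    """Pick the tokens to emit from one (hypothetical) multi-token forward pass.

    candidate_probs[i] is the probability distribution over the vocabulary
    for lookahead position i. The first position is always emitted, which
    corresponds to ordinary single-token decoding. Later positions are
    emitted only while their top probability stays at or above the threshold.
    """
    accepted: List[int] = []
    for position, probs in enumerate(candidate_probs):
        # Greedy choice: index of the most probable token at this position.
        top_token = max(range(len(probs)), key=probs.__getitem__)
        if position > 0 and probs[top_token] < threshold:
            break  # confidence too low: stop and fall back to one-at-a-time
        accepted.append(top_token)
    return accepted


# Toy distributions over a two-token vocabulary, invented for illustration.
candidate_probs = [
    [0.10, 0.90],
    [0.95, 0.05],
    [0.60, 0.40],
    [0.99, 0.01],
]
emitted = accept_confident_prefix(candidate_probs, threshold=0.9)
```

In this toy input a threshold of 0.9 emits two tokens: the third position falls below the threshold, and the fourth is discarded even though it is confident, because its prediction depends on the rejected one.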

Technical Implementation

While the source tweet doesn't provide implementation details, the key innovation appears to be in the decoding strategy rather than model architecture. The "zero architectural changes" claim suggests MARS operates at the inference layer, likely modifying the sampling process rather than the transformer blocks themselves.

The confidence thresholding mechanism is particularly noteworthy because it lets users trade speed against quality dynamically. Higher thresholds would produce more conservative, slower generation that closely matches baseline quality, while lower thresholds would enable more aggressive parallelization at the potential cost of occasional quality degradation.
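To make the threshold tradeoff concrete, here is a small sketch that sweeps a threshold over synthetic confidence values and reports the average number of tokens emitted per forward pass. The numbers are invented for illustration and `mean_tokens_per_pass` is a hypothetical helper; nothing here comes from the MARS source.

```python
from typing import Sequence


def mean_tokens_per_pass(
    passes: Sequence[Sequence[float]], threshold: float
) -> float:
    """Average tokens emitted per forward pass at a given threshold.

    Each inner sequence holds the top-token confidence at successive
    lookahead positions for one hypothetical forward pass.
    """
    total = 0
    for confidences in passes:
        accepted = 1  # the first token is always emitted
        for confidence in confidences[1:]:
            if confidence < threshold:
                break
            accepted += 1
        total += accepted
    return total / len(passes)


# Synthetic confidences, invented purely for illustration.
passes = [
    [0.99, 0.97, 0.91],
    [0.80, 0.60, 0.95],
    [0.95, 0.92, 0.40],
    [0.70, 0.98, 0.99],
]

# Higher thresholds accept fewer lookahead tokens per pass.
sweep = {t: mean_tokens_per_pass(passes, t) for t in (0.5, 0.9, 0.95, 0.99)}
```

On this toy data the average drops from 2.75 tokens per pass at a threshold of 0.5 to exactly 1.0 at 0.99, which is plain single-token decoding.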

Performance Characteristics

According to the source, MARS achieves:

- 1.5-1.7x throughput improvement over standard autoregressive generation

- Maintained baseline accuracy when using appropriate confidence thresholds

- Real-time speed adjustment via tunable confidence parameters

These improvements reportedly come without the overhead of a separate draft model, as in classic speculative decoding, or of architectural additions such as extra prediction heads. That makes MARS particularly attractive for production deployments where model changes are costly.
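As a back-of-the-envelope reading of the throughput figure, the sketch below relates speedup to the average number of tokens emitted per forward pass. It rests on a simplifying assumption that is not from the source: generation time is dominated by forward passes, and a multi-token pass costs `relative_pass_cost` times a baseline pass. Both function names are hypothetical.

```python
def estimated_speedup(
    mean_tokens_per_pass: float, relative_pass_cost: float = 1.0
) -> float:
    """Throughput relative to one-token-per-pass decoding.

    relative_pass_cost is the cost of one multi-token pass divided by the
    cost of one baseline pass (1.0 means no extra cost per pass).
    """
    if mean_tokens_per_pass < 1.0 or relative_pass_cost <= 0.0:
        raise ValueError("need at least one token per pass and a positive cost")
    return mean_tokens_per_pass / relative_pass_cost


def required_tokens_per_pass(
    target_speedup: float, relative_pass_cost: float = 1.0
) -> float:
    """Average tokens per pass needed to reach a target speedup."""
    return target_speedup * relative_pass_cost


# If passes cost the same as baseline, 1.5-1.7x throughput corresponds to
# an average of 1.5-1.7 tokens emitted per pass under this simple model.
low = required_tokens_per_pass(1.5)
high = required_tokens_per_pass(1.7)
```

Under this model, any per-pass overhead raises the number of tokens that must be accepted on average: with a 10% more expensive pass, a 1.5x speedup needs about 1.65 tokens per pass.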

Practical Implications

For AI engineers and ML practitioners, MARS represents a low-friction optimization technique. Since it reportedly requires no model retraining or architectural changes, it could in principle be applied to already deployed models without a retraining cycle. The real-time adjustability means the same model can serve different use cases: conservative thresholds for high-stakes applications, aggressive thresholds for low-latency requirements.

The method appears most valuable in scenarios where:

- Inference throughput is bottlenecked by sequential generation

- Model architecture changes are prohibited (e.g., proprietary models)

- Dynamic quality/speed tradeoffs are desirable

- Minimal deployment overhead is required

Limitations and Considerations

Without access to the full paper, several questions remain:

- How does confidence thresholding affect output diversity and creativity?

- What's the computational overhead of the confidence evaluation?

- Does the method work equally well across different model sizes and architectures?

- How does it compare to other acceleration techniques like speculative decoding or quantization?

gentic.news Analysis

MARS arrives during a period of intense focus on LLM inference optimization. This follows NVIDIA's recent TensorRT-LLM updates in March 2026 that improved throughput by 2-3x for certain models, and Google's November 2025 publication on Medusa speculative decoding frameworks. What makes MARS distinctive is its claim of requiring zero architectural changes—a significant advantage over methods that require modifying model architecture or adding draft models.

The timing is particularly relevant given the industry's shift toward making large models more practical for real-time applications. As we covered in our February 2026 analysis of the inference optimization landscape, most acceleration techniques require either model modifications (like Medusa heads) or additional computational resources (like draft models). MARS's approach of working with existing model weights could make it immediately applicable to proprietary models where architectural changes aren't possible.

This development aligns with the broader trend we've observed since late 2025: inference optimization is becoming as important as training efficiency. With companies like Anthropic, OpenAI, and Google deploying increasingly large models, techniques that improve throughput without quality loss are becoming critical competitive advantages. MARS's confidence-based approach also echoes recent work in adaptive computation, where models dynamically allocate resources based on input complexity.

Frequently Asked Questions

How does MARS differ from speculative decoding?

While both aim to accelerate autoregressive generation, speculative decoding typically uses a smaller draft model to predict multiple tokens ahead, which are then verified by the main model. MARS appears to use the same model for both prediction and verification, eliminating the need for a separate draft model and potentially reducing implementation complexity.
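For contrast, here is a minimal sketch of one step of greedy speculative decoding with a separate draft model. It illustrates the general technique, not MARS; the toy "models" are plain functions invented for the example, and a real system would verify all drafted positions in a single batched pass of the target model rather than in a Python loop.

```python
from typing import Callable, List

NextToken = Callable[[List[int]], int]  # maps a token prefix to the next token


def speculative_step(
    prefix: List[int], draft: NextToken, target: NextToken, k: int
) -> List[int]:
    """Run one greedy speculative-decoding step and return the new tokens."""
    # 1. The cheap draft model proposes k tokens autoregressively.
    proposed: List[int] = []
    for _ in range(k):
        proposed.append(draft(prefix + proposed))

    # 2. The target model checks each proposal in order.
    accepted: List[int] = []
    for token in proposed:
        expected = target(prefix + accepted)
        if token != expected:
            # First mismatch: keep the target's token and stop.
            accepted.append(expected)
            return accepted
        accepted.append(token)

    # 3. Every proposal matched, so the target also supplies a bonus token.
    accepted.append(target(prefix + accepted))
    return accepted


def toy_target(tokens: List[int]) -> int:
    """Toy target model: counts upward modulo 10."""
    return (tokens[-1] + 1) % 10


def toy_draft(tokens: List[int]) -> int:
    """Toy draft model: agrees with the target except after token 3."""
    return 0 if tokens[-1] == 3 else (tokens[-1] + 1) % 10


partial = speculative_step([1], toy_draft, toy_target, k=4)
full = speculative_step([5], toy_draft, toy_target, k=3)
```

In the first call the draft goes wrong after token 3, so the step yields `[2, 3, 4]`; in the second call all three drafts are accepted and the target adds a bonus token, yielding `[6, 7, 8, 9]`.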

Can MARS be applied to any autoregressive model?

The source claims "zero architectural changes," suggesting it should work with standard transformer architectures. However, performance likely depends on the model's confidence calibration—models with well-calibrated confidence scores would enable more aggressive parallelization without quality loss.
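One common way to check whether a model's confidence scores are trustworthy enough for thresholding is expected calibration error (ECE), which compares average confidence with observed accuracy inside confidence bins. The sketch below is a generic ECE computation, not part of MARS; the function name, the ten equal-width bins, and the sample data are assumptions made for illustration.

```python
from typing import List, Sequence, Tuple


def expected_calibration_error(
    predictions: Sequence[Tuple[float, bool]], num_bins: int = 10
) -> float:
    """Compute ECE from (confidence, was_correct) pairs.

    Predictions are grouped into equal-width confidence bins; ECE is the
    size-weighted average gap between accuracy and mean confidence per bin.
    """
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(num_bins)]
    for confidence, correct in predictions:
        # A confidence of exactly 1.0 goes into the last bin.
        index = min(int(confidence * num_bins), num_bins - 1)
        bins[index].append((confidence, correct))

    total = len(predictions)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_confidence = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(accuracy - mean_confidence)
    return ece


# Invented data: both "models" report 0.95 confidence on every token.
well_calibrated = [(0.95, True)] * 19 + [(0.95, False)]       # 95% correct
overconfident = [(0.95, True)] * 10 + [(0.95, False)] * 10    # 50% correct

ece_good = expected_calibration_error(well_calibrated)
ece_bad = expected_calibration_error(overconfident)
```

A value near zero, as for the first data set, means a stated confidence of 0.95 really does correspond to about 95% accuracy, so a threshold set at that level is meaningful. The second data set has an ECE of about 0.45, a sign that a confidence threshold would accept many wrong tokens.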

What's the trade-off between speed and quality with MARS?

The method uses confidence thresholding to dynamically adjust generation speed. Higher confidence thresholds maintain quality closer to baseline but offer less speedup, while lower thresholds enable greater acceleration at the potential cost of occasional quality degradation. Users can adjust this parameter based on their specific requirements.

Does MARS require additional training or fine-tuning?

Based on the "zero architectural changes" claim and the description as a "training-free method," MARS appears to work with existing model weights without additional training. This makes it particularly attractive for production systems where retraining is costly or impractical.