Meta AI has announced research proposing a significant shift in how small language models (SLMs) are trained on knowledge distilled from larger, more capable "expert" models. The core finding challenges a common practice: instead of learning from many expert models simultaneously, a small model should be trained sequentially, focusing on one expert at a time.

What Happened

A research team at Meta has detailed a new training methodology in a forthcoming paper. The work addresses the process of distilling knowledge from large, high-performing models (like Llama 3.1 405B or GPT-4) into much smaller, more efficient models (e.g., 1-3B parameters).

The conventional approach, often called multi-teacher distillation, involves training the small student model on data labeled or generated by several large teacher models at once. The new Meta recipe argues this is suboptimal. Their proposed method, which we'll call Sequential Expert Specialization Training, involves training the small model on outputs from a single large expert model for an entire training phase before moving on to the next expert.

The Proposed Method: Sequential Specialization

The intuition behind the method is that learning from multiple, potentially conflicting, sources of expertise simultaneously can confuse a small model with limited capacity. By dedicating a full training phase to mastering the patterns and knowledge of one expert, the small model can build a more stable and coherent foundation.

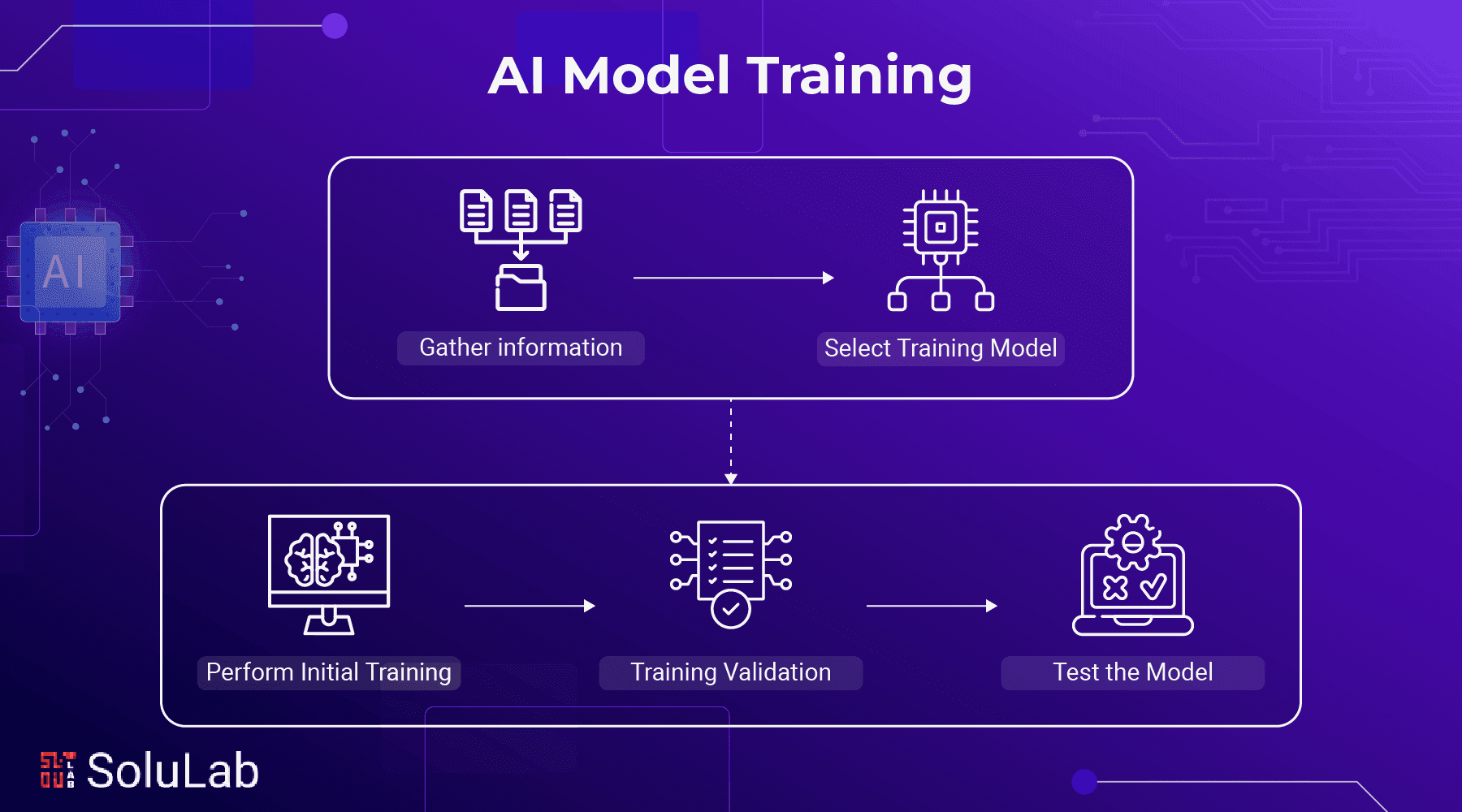

The training recipe likely follows these steps:

- Phase 1 - Expert A: Train the small model exclusively on high-quality data generated or labeled by a single large model (e.g., a coding expert like Code Llama).

- Phase 2 - Expert B: Once Phase 1 training has stabilized, take its checkpoint and continue training exclusively on data from a different expert model (e.g., a reasoning expert like GPT-4).

- Repeat for additional domains or capabilities.
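The phased recipe above can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not Meta's implementation: the names `train_sequentially`, `toy_train_step`, `coding_expert`, and `reasoning_expert` are hypothetical, and the "model" is a dictionary of counters standing in for real weights. The only point it shows is that each phase uses one expert's data and hands its checkpoint to the next phase.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-ins: a "model" here is just a dict of counters, and
# train_step is whatever single-update function a real pipeline would supply.
Model = Dict[str, int]
TrainStep = Callable[[Model, str, str], Model]


def train_sequentially(
    model: Model,
    phases: List[Tuple[str, List[str]]],
    train_step: TrainStep,
) -> Tuple[Model, List[str]]:
    """Run one full phase per expert, carrying the checkpoint into the next phase."""
    completed: List[str] = []
    for expert_name, examples in phases:
        # Within a phase, the student only ever sees this expert's data.
        for example in examples:
            model = train_step(model, expert_name, example)
        # The updated model is the starting checkpoint for the next phase.
        completed.append(expert_name)
    return model, completed


def toy_train_step(model: Model, expert_name: str, example: str) -> Model:
    """Toy update: count examples seen per expert instead of running gradient descent."""
    updated = dict(model)
    updated[expert_name] = updated.get(expert_name, 0) + 1
    return updated


# Assumed example data; a real pipeline would stream expert-generated text.
phases = [
    ("coding_expert", ["code sample 1", "code sample 2", "code sample 3"]),
    ("reasoning_expert", ["reasoning trace 1", "reasoning trace 2"]),
]
final_model, completed = train_sequentially({}, phases, toy_train_step)
print(final_model)
print(completed)
```

In a real setup, `train_step` would wrap an optimizer update, and a checkpoint would probably be saved to disk at each phase boundary. Neither detail is specified in the reporting.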

The paper suggests this sequential approach leads to better final performance across the evaluated benchmarks than training on a mixture of all experts' data from the start. It may also simplify training dynamics and improve compute efficiency.

Why It Matters

This research has direct implications for the burgeoning field of creating capable small language models. As organizations seek to deploy efficient, specialized models on-device or in cost-sensitive environments, effective distillation from frontier models is critical.

- Efficiency: A clearer, more stable training path could reduce the total compute required to achieve a target performance level for an SLM.

- Performance: The claimed improvements in benchmark scores suggest we can extract more capability from a given model size using smarter training protocols.

- Practical Training: This provides a concrete, actionable recipe for other teams building SLMs, moving beyond the intuition that "more expert data is always better."

The work aligns with Meta's broader strategy of open-sourcing foundational models and training methodologies, as seen with the Llama family, to advance the field while establishing its research leadership.

gentic.news Analysis

This research is a tactical refinement in the high-stakes race for efficient AI. It follows Meta's established pattern of investing in fundamental training research, as seen with their previous work on Llama 2, Llama 3, and the recent Llama 3.1 release. The focus on distillation efficiency is particularly timely. As the cost of training frontier models soars, extracting their value into smaller, cheaper-to-run models becomes a key economic lever. This isn't about setting a new state-of-the-art benchmark score; it's about improving the cost/performance curve for the vast majority of real-world deployments.

The method also implicitly acknowledges a core constraint of current SLMs: they have little capacity to spare. The sequential approach is essentially a form of curriculum learning, preventing the model's representational space from becoming cluttered with conflicting signals. This connects to broader trends in modular and mixture-of-experts (MoE) architectures, where the goal is to manage capacity intelligently. Here, the management is temporal rather than architectural.

For practitioners, this paper is likely to be a key reference when designing distillation pipelines. It suggests that the order of specialization matters and that patience pays off: train thoroughly on one domain before adding another. The next logical step is for the community to test this recipe across more model families and domains to see how broadly it applies.

Frequently Asked Questions

What is knowledge distillation in AI?

Knowledge distillation is a training technique where a smaller "student" model learns to mimic the behavior of a larger, more complex "teacher" model. Instead of learning from raw data, the student is trained on the teacher's outputs (like its answers or internal representations), aiming to achieve similar performance with far fewer parameters and lower computational cost.
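To make "learning from the teacher's outputs" concrete, here is a minimal sketch of the classic temperature-scaled soft-label distillation loss, written in plain Python. It is an assumption that this formulation is relevant here: the reporting does not say which loss Meta uses, and distillation for language models is often done by training on teacher-generated text instead. The function names and the default temperature of 2.0 are illustrative choices.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Convert logits to probabilities; a higher temperature flattens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    student_logits: List[float],
    teacher_logits: List[float],
    temperature: float = 2.0,
) -> float:
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        pt * (math.log(pt) - math.log(ps))
        for pt, ps in zip(p_teacher, p_student)
        if pt > 0.0
    )
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return kl * temperature ** 2


teacher = [1.0, 2.0, 3.0]
print(distillation_loss([1.0, 2.0, 3.0], teacher))  # student matches the teacher
print(distillation_loss([3.0, 2.0, 1.0], teacher))  # student disagrees with the teacher
```

The loss is zero when the student's distribution matches the teacher's and grows as they diverge, which is the signal the student is trained to minimize.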

How is this new method different from standard distillation?

Standard multi-teacher distillation typically involves training the student model on a blended dataset containing outputs from several expert teachers simultaneously. Meta's new method changes this by having the student model focus on learning from only one teacher model at a time, completing a full phase of training before being exposed to the next teacher. This sequential approach is argued to reduce confusion and improve learning efficiency for the small model.
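The difference comes down to the order in which the student sees each teacher's examples. The sketch below contrasts the two orderings on toy data; the teacher names, example strings, and helper functions are all hypothetical, and the uniform shuffle is only one assumed way of blending teachers.

```python
import random
from typing import Dict, List, Tuple

Stream = List[Tuple[str, str]]  # (teacher name, training example)


def mixed_order(data: Dict[str, List[str]], seed: int = 0) -> Stream:
    """Blend every teacher's examples into one shuffled stream."""
    stream = [(teacher, ex) for teacher, examples in data.items() for ex in examples]
    random.Random(seed).shuffle(stream)
    return stream


def sequential_order(data: Dict[str, List[str]], teacher_order: List[str]) -> Stream:
    """Exhaust one teacher's examples before moving on to the next teacher."""
    return [(teacher, ex) for teacher in teacher_order for ex in data[teacher]]


def count_teacher_switches(stream: Stream) -> int:
    """Count how often consecutive examples come from different teachers."""
    return sum(1 for prev, cur in zip(stream, stream[1:]) if prev[0] != cur[0])


data = {
    "teacher_a": ["a1", "a2", "a3"],
    "teacher_b": ["b1", "b2", "b3"],
}
mixed = mixed_order(data)
sequential = sequential_order(data, ["teacher_a", "teacher_b"])

print("mixed switches:", count_teacher_switches(mixed))
print("sequential switches:", count_teacher_switches(sequential))
```

Both streams contain exactly the same examples. The sequential one switches teachers only once per phase boundary, which is the property the article's method relies on.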

Why are small language models important?

Small language models (typically under 10 billion parameters) are crucial for deploying AI in resource-constrained environments like mobile phones, edge devices, or applications where latency and cost are primary concerns. They are cheaper to run, faster to respond, and easier to fine-tune for specific tasks compared to massive frontier models, making them essential for scalable, real-world AI integration.

Has Meta released the code for this training recipe?

No release has been confirmed. As of this reporting, the full research paper detailing the method has been announced but may not yet be publicly available. Based on Meta's historical practice with foundational research (like the Llama series), it is likely that the paper will be published on arXiv, and accompanying code or detailed configurations may be released to facilitate replication and further research by the community.