What Happened

A technical discussion on X (formerly Twitter) highlighted an emerging architecture called Memory Sparse Attention (MSA). According to the source, MSA lets AI models store and reason over very large long-term memories directly within their attention mechanism, rather than relying on external retrieval or lossy compression. The key claimed benefit is that this makes models "far more accurate and scalable" on long-context tasks.

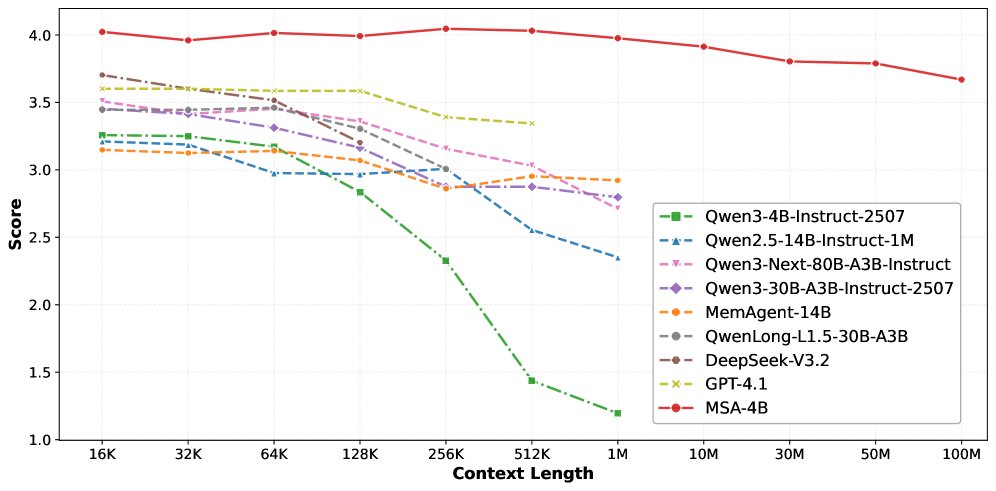

The most concrete technical claim is that MSA supports a 100 million token context window with minimal performance loss. If accurate, that would be a jump of roughly two to three orders of magnitude over current state-of-the-art long-context models, which typically offer windows of 128K to 1M tokens and often degrade noticeably toward the upper end of that range.
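To put the claimed scale in perspective, the snippet below compares 100M tokens with the 128K and 1M windows mentioned above and estimates the size of a dense attention score matrix at each length. It assumes naive full attention that materializes one n x n score matrix per head per layer at 2 bytes per score; production kernels (FlashAttention-style) avoid materializing this matrix, so the figures illustrate quadratic scaling rather than real memory use. The function and variable names are illustrative.

```python
# Rough scale comparison for the context lengths discussed above.
# Assumption: naive dense attention storing every score as a 2-byte
# (fp16/bf16) value, for a single head in a single layer.

BYTES_PER_SCORE = 2  # assumed fp16/bf16 storage per attention score


def dense_score_bytes(n_tokens: int) -> int:
    """Bytes needed to hold one full n x n attention score matrix."""
    return n_tokens * n_tokens * BYTES_PER_SCORE


# Context lengths from the text, using decimal K/M for simplicity.
contexts = {"128K": 128_000, "1M": 1_000_000, "100M": 100_000_000}
target = contexts["100M"]

for name, n in contexts.items():
    ratio = target / n                          # how many times longer 100M is
    terabytes = dense_score_bytes(n) / 1e12     # decimal terabytes
    print(f"{name:>5}: 100M is {ratio:,.0f}x this length; "
          f"dense scores ~{terabytes:,.3f} TB")
```

Going from 1M to 100M tokens multiplies the dense score matrix by 10,000 (about 2 TB versus 20,000 TB, roughly 20 PB, per head per layer under these assumptions), which is why any architecture at this scale has to avoid full attention, whatever mechanism MSA actually uses.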

Context

Current approaches to long-context AI face fundamental trade-offs:

- External Retrieval-Augmented Generation (RAG): Models query external vector databases or document stores, introducing latency, potential retrieval errors, and architectural complexity.

- Lossy Compression: Methods like summarization, hierarchical attention, or token compression discard information to fit context into limited windows.

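- Sparse Attention Variants: Existing techniques replace full O(n²) attention with fixed patterns. Longformer combines a sliding local window with a few global tokens, BigBird adds random connections to window and global attention, and StreamingLLM keeps a rolling window of recent tokens plus a handful of initial "attention sink" tokens. These patterns bring cost much closer to linear in sequence length, but tokens outside the pattern are never attended directly, and memory and quality constraints remain at extreme scales.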
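The fixed-pattern idea behind these sparse variants can be sketched in a few lines. The example below builds a Longformer-style pattern (a sliding local window plus designated global tokens) and counts how many query-key pairs it scores compared with full attention. The function names, window size, and global-token choice are illustrative assumptions; real implementations compute such patterns inside fused GPU kernels rather than with Python sets.

```python
# Illustrative Longformer-style sparse pattern: each token attends to a
# local window, plus a few "global" tokens that attend to, and are attended
# by, every position. Window size and global positions are arbitrary
# choices for this sketch, not values from any specific model.


def sparse_pattern(n: int, window: int, global_idx: set[int]) -> list[set[int]]:
    """Return, for each query position, the set of key positions it may attend to."""
    pattern = []
    for q in range(n):
        if q in global_idx:
            keys = set(range(n))  # global tokens see the whole sequence
        else:
            lo, hi = max(0, q - window), min(n, q + window + 1)
            keys = set(range(lo, hi)) | global_idx  # local window + global tokens
        pattern.append(keys)
    return pattern


def attended_pairs(pattern: list[set[int]]) -> int:
    """Total number of query-key scores the pattern requires."""
    return sum(len(keys) for keys in pattern)


n, window, global_tokens = 1024, 64, {0}
pairs = attended_pairs(sparse_pattern(n, window, global_tokens))
print(f"dense pairs: {n * n:,}; sparse pairs: {pairs:,} ({pairs / (n * n):.1%})")
```

Cost grows roughly in proportion to n times the window width instead of n², but a token outside a query's window (and not marked global) is never attended directly, which is the limitation that bites at extreme context lengths.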

MSA appears to be positioned as a different paradigm: memory stays inside the attention mechanism, while sparsity keeps the computation tractable. The "memory" component suggests persistent storage across sequences or sessions, while "sparse attention" suggests efficiency from attending selectively to a subset of stored positions.
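As a purely hypothetical illustration of "selective attention over an internal memory," the sketch below uses generic top-k attention: it scores a query against every stored key, keeps only the k best matches, and applies softmax over those slots alone. This is a well-known technique chosen for illustration, not MSA's mechanism, which the source does not describe; all names are invented for this example.

```python
import heapq
import math

# Hypothetical sketch of content-based sparse attention over a memory bank:
# score every stored key, keep only the top-k matches, softmax over those.
# Generic top-k attention for illustration only; not MSA's actual method.

Vector = list[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def topk_memory_attention(query: Vector, keys: list[Vector],
                          values: list[Vector], k: int) -> Vector:
    """Attend only to the k memory slots whose keys best match the query."""
    scale = 1.0 / math.sqrt(len(query))           # standard dot-product scaling
    scores = [dot(query, key) * scale for key in keys]
    top = heapq.nlargest(k, range(len(keys)), key=scores.__getitem__)
    # Numerically stable softmax restricted to the selected slots.
    m = max(scores[i] for i in top)
    weights = [math.exp(scores[i] - m) for i in top]
    total = sum(weights)
    out = [0.0] * len(values[0])
    for w, i in zip(weights, top):
        for d, v in enumerate(values[i]):
            out[d] += (w / total) * v             # weighted sum of selected values
    return out


memory_keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
memory_values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
print(topk_memory_attention([1.0, 0.0], memory_keys, memory_values, k=1))  # → [10.0, 0.0]
```

As written, the sketch still scores every stored key, so it saves work only in the softmax and value aggregation. A system targeting 100M tokens would presumably need a cheaper selection step (for example, block-level summaries or an approximate nearest-neighbor index), which is exactly the kind of detail the source leaves unstated.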

What We Don't Know (Based on Available Information)

The source provides no technical details about:

- The specific sparse attention pattern or memory addressing mechanism

- Training methodology or datasets used

- Published benchmarks or peer-reviewed evaluations

- Computational requirements (FLOPs, memory footprint)

- Comparison to existing long-context architectures

- Whether this is a research paper, corporate project, or conceptual proposal

Without these details, practitioners should treat the 100M-token claim as an unverified architectural possibility rather than a demonstrated capability.