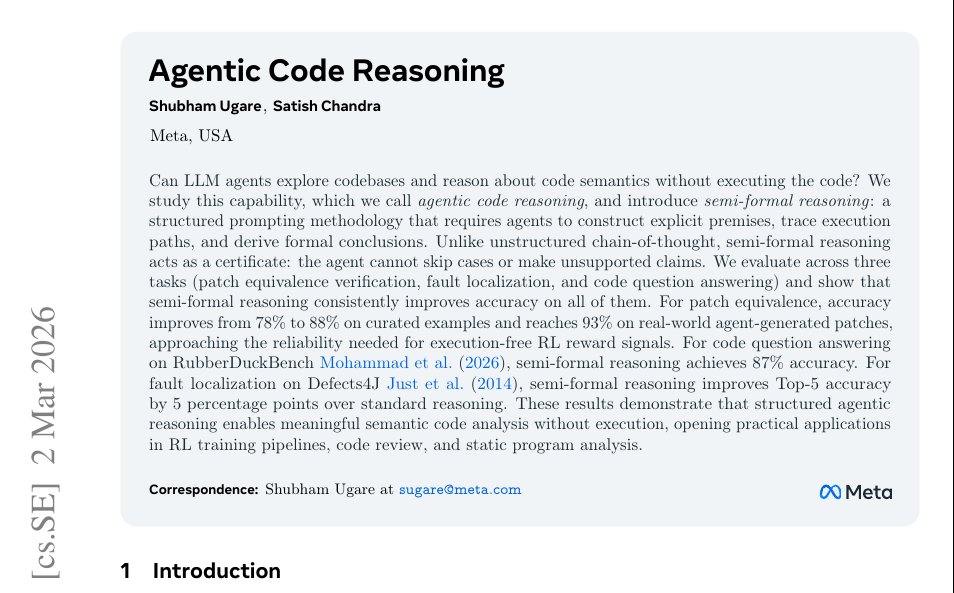

According to findings shared by AI researcher Rohan Paul, Meta AI researchers have found a way to make large language models (LLMs) more reliable when generating code patches. When the models are required to show their reasoning step by step, with proof verification for each step, their code patch error rate reportedly drops by approximately 90%.

The Discovery: Reasoning Transparency as a Quality Control Mechanism

The research sheds light on how LLMs handle complex programming tasks. When the models generate code patches without showing intermediate reasoning, they tend to produce more errors. When researchers instead required the model to articulate each logical step and provide proof for its decisions, output quality improved substantially.

This approach essentially forces the AI to "think aloud" rather than jumping directly to conclusions. The step-by-step reasoning process appears to create a form of self-checking mechanism where the model must verify each logical progression before moving to the next step.

How the Method Works

While the original source doesn't provide exhaustive technical details, the core principle involves modifying how LLMs approach code generation tasks. Instead of generating a complete code patch in one pass, the model is constrained to:

- Break down the problem into discrete logical steps

- Articulate the reasoning behind each step

- Provide proof or verification for each decision

- Synthesize these verified steps into a final solution
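The four constraints above can be sketched as a structured reasoning trace that is checked before a patch is accepted. This is a minimal illustration, not Meta's implementation: the `Step` record, the `validate_trace` and `synthesize` functions, and the rule that every step needs non-empty reasoning and evidence are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One step of a hypothetical reasoning trace (illustrative format only)."""
    claim: str      # what the model asserts at this step
    reasoning: str  # why the claim follows
    evidence: str   # proof or verification offered for the claim


def validate_trace(steps: list[Step]) -> list[str]:
    """Return a list of problems; an empty list means every step is justified."""
    problems = []
    if not steps:
        problems.append("trace has no steps")
    for i, step in enumerate(steps, start=1):
        if not step.reasoning.strip():
            problems.append(f"step {i}: missing reasoning")
        if not step.evidence.strip():
            problems.append(f"step {i}: missing evidence")
    return problems


def synthesize(steps: list[Step]) -> str:
    """Combine verified steps into a final summary, refusing unjustified traces."""
    problems = validate_trace(steps)
    if problems:
        raise ValueError("; ".join(problems))
    return "\n".join(f"{i}. {s.claim}" for i, s in enumerate(steps, start=1))


trace = [
    Step("The crash occurs when `items` is empty",
         "max() is called on the list without a guard",
         "Calling the function with [] raises ValueError"),
    Step("Returning None for an empty list fixes the crash",
         "The guard runs before max() is reached",
         "The patched function returns None for []"),
]
print(synthesize(trace))
```

The design choice here is that synthesis is gated: a trace with any unsupported step is rejected outright rather than partially used, mirroring the idea that each step must be verified before the final solution is assembled.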

This methodology appears to work particularly well for code patching, the process of identifying and fixing bugs or vulnerabilities in existing code. Code patching requires more than syntactically correct code: the model must understand the underlying logic, identify edge cases, and ensure the fix doesn't introduce new problems.
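The requirement that a fix must not introduce new problems can be made concrete with a small check: a candidate patch should handle the input that exposed the bug and still agree with the original code on inputs that already worked. The functions `average_original`, `average_patched`, and `check_patch` below are hypothetical and only illustrate the idea; they are not taken from the reported research.

```python
from typing import Callable, Iterable, Sequence


def average_original(xs: Sequence[float]) -> float:
    # Buggy: raises ZeroDivisionError on an empty sequence.
    return sum(xs) / len(xs)


def average_patched(xs: Sequence[float]) -> float:
    # Candidate patch: treat the empty sequence as an explicit edge case.
    if not xs:
        return 0.0
    return sum(xs) / len(xs)


def check_patch(
    original: Callable[[Sequence[float]], float],
    patched: Callable[[Sequence[float]], float],
    failing_input: Sequence[float],
    expected: float,
    regression_inputs: Iterable[Sequence[float]],
) -> bool:
    """Accept the patch only if it fixes the bug and preserves existing behavior."""
    if patched(failing_input) != expected:
        return False
    return all(patched(x) == original(x) for x in regression_inputs)


ok = check_patch(
    average_original,
    average_patched,
    failing_input=[],
    expected=0.0,
    regression_inputs=[[1.0], [1.0, 2.0, 3.0], [-4.0, 4.0]],
)
print(ok)  # True
```

Returning 0.0 for an empty sequence is itself a design decision; raising a clearer error could equally be the right fix, which is exactly the kind of choice a step-by-step justification should make explicit.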

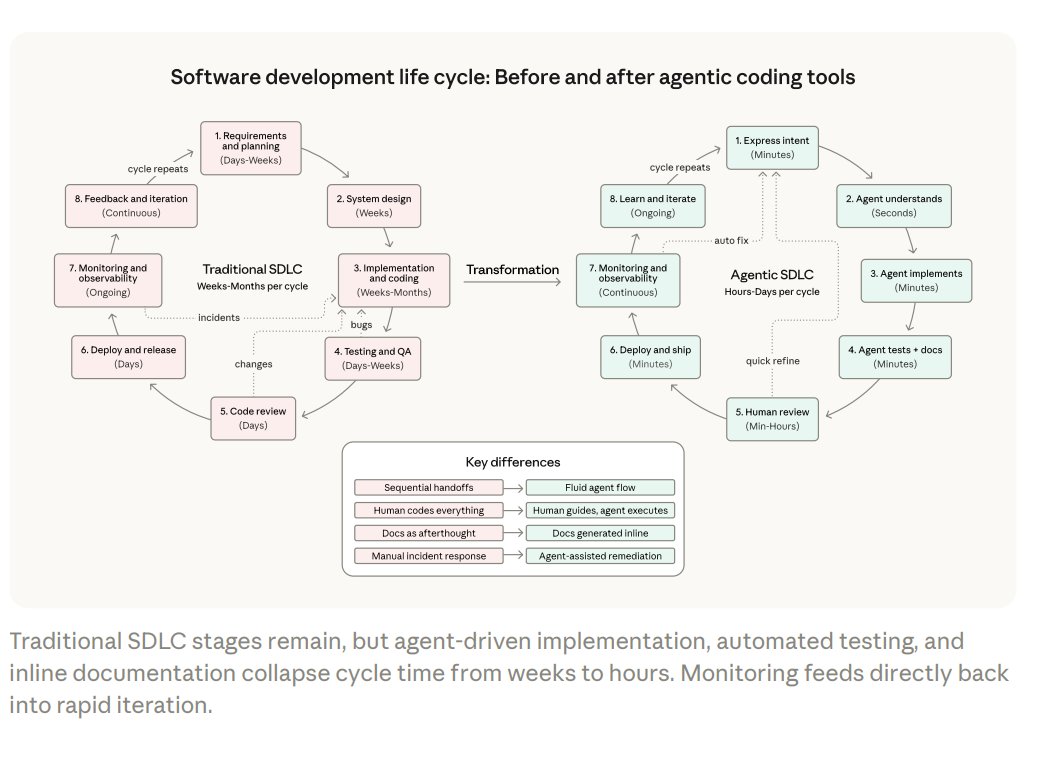

Implications for AI-Assisted Programming

If the reported result generalizes, the implications for AI-assisted software development are substantial:

Enhanced Code Quality: A 90% reduction in error rates could make AI-generated code patches significantly more reliable, potentially making them production-ready with less human oversight.

Debugging Transparency: When AI systems show their reasoning, human developers can better understand why certain fixes were proposed, making collaboration between humans and AI more effective.

Training Improvements: This approach could inform how future LLMs are trained, potentially incorporating reasoning transparency as a fundamental component rather than an afterthought.

Trust and Adoption: More transparent reasoning could increase developer trust in AI coding assistants, accelerating adoption in professional software development environments.
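The 90% figure cited under Enhanced Code Quality is a relative reduction, so its practical effect depends on the baseline error rate, which the source does not state. The sketch below uses made-up baseline rates purely to show the arithmetic; the `remaining_error_rate` helper is hypothetical.

```python
def remaining_error_rate(baseline: float, relative_reduction: float) -> float:
    """Error rate left after a relative reduction, e.g. 0.9 for a 90% drop."""
    if not (0.0 <= baseline <= 1.0 and 0.0 <= relative_reduction <= 1.0):
        raise ValueError("rates must be fractions between 0 and 1")
    return baseline * (1.0 - relative_reduction)


# Hypothetical baselines, not figures from the research.
for baseline in (0.30, 0.10, 0.02):
    after = remaining_error_rate(baseline, 0.90)
    print(f"baseline {baseline:.0%} -> about {after:.1%} after a 90% reduction")
```

A 90% reduction matters most when the baseline is high: a 30% baseline falls to about 3%, while a 2% baseline falls to about 0.2%.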

Broader Applications Beyond Coding

While the initial discovery focuses on code generation, the same principle of forcing step-by-step reasoning with proof verification may also apply to other complex domains where LLMs are used:

- Mathematical problem-solving

- Scientific reasoning and hypothesis generation

- Legal analysis and contract review

- Medical diagnosis support systems

- Complex decision-making in business contexts

In each of these domains, the ability to trace the AI's reasoning process could significantly improve accuracy and reliability while making the systems more interpretable to human experts.

Challenges and Limitations

Despite the promising results, several challenges remain:

Computational Overhead: The step-by-step approach likely increases computational requirements and response times compared to direct answer generation.

Implementation Complexity: Integrating this reasoning framework into existing LLM systems may require significant engineering changes, for example to prompts, output parsing, and verification tooling.

Domain Specificity: The effectiveness of this approach may vary across different types of tasks and problem domains.

Human Verification Burden: While the AI shows its work, humans still need to verify the reasoning chain, which could offset some efficiency gains.
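The computational overhead noted above can be roughly estimated from output length, since generation time for autoregressive models grows approximately with the number of tokens produced. The token counts and throughput below are hypothetical placeholders, not measurements from the research, and this linear model ignores prompt processing and batching.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Rough decode time, assuming a constant generation throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Hypothetical numbers chosen only to illustrate the calculation.
direct_patch_tokens = 300   # patch emitted directly
reasoning_tokens = 1_500    # extra step-by-step reasoning and evidence
throughput = 50.0           # tokens per second

direct = generation_seconds(direct_patch_tokens, throughput)
with_reasoning = generation_seconds(direct_patch_tokens + reasoning_tokens, throughput)
print(f"direct: {direct:.0f}s, with reasoning: {with_reasoning:.0f}s "
      f"({with_reasoning / direct:.0f}x)")
```

Under these assumed numbers the reasoning trace multiplies generation time sixfold, which is why the overhead has to be weighed against the reported drop in errors.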

The Future of Transparent AI Reasoning

Meta's discovery points toward a future where AI systems are designed not just to produce correct answers, but to demonstrate how they arrived at those answers. This aligns with growing demands for explainable AI (XAI) across industries where understanding the "why" behind decisions is as important as the decisions themselves.

As LLMs become more integrated into critical systems, from healthcare to finance to infrastructure, methods that improve their reliability and transparency will become increasingly valuable. If the reported results hold up, Meta's approach would be a significant step toward making AI systems more trustworthy partners in complex problem-solving.

Source: Rohan Paul's report on Meta AI research findings shared via social media.