A new architectural framework called the Memory Intelligence Agent (MIA) reportedly enables 7-billion-parameter language models to outperform significantly larger models such as GPT-5.4 on complex research tasks. Developed by researchers aiming to evolve AI agents beyond passive tools, MIA introduces a three-component system that turns smaller models into active strategists capable of planning, executing, and learning from research workflows in real time.

Key Takeaways

- Researchers introduced MIA, a Manager-Planner-Executor framework that turns 7B-parameter models into active research strategists.

- The system reportedly outperforms GPT-5.4 through continual learning during task execution.

What the Researchers Built: From Passive Tools to Active Strategists

The core innovation of MIA is its shift from treating language models as static question-answerers to viewing them as dynamic participants in a research process. Traditional AI research agents typically follow pre-defined scripts or retrieve information without strategic adaptation. MIA rearchitects this approach around three specialized components:

- Manager: Oversees the entire research process, breaks down high-level goals into sub-tasks, and allocates resources.

- Planner: Develops step-by-step strategies for each sub-task, considering available tools and knowledge.

- Executor: Carries out the planned actions using available tools (web search, code execution, document analysis).

What makes MIA distinctive is its "continual test-time learning" capability—the system doesn't just execute a plan but learns from its own performance during task execution, updating its strategies and knowledge in real time based on what works and what doesn't.

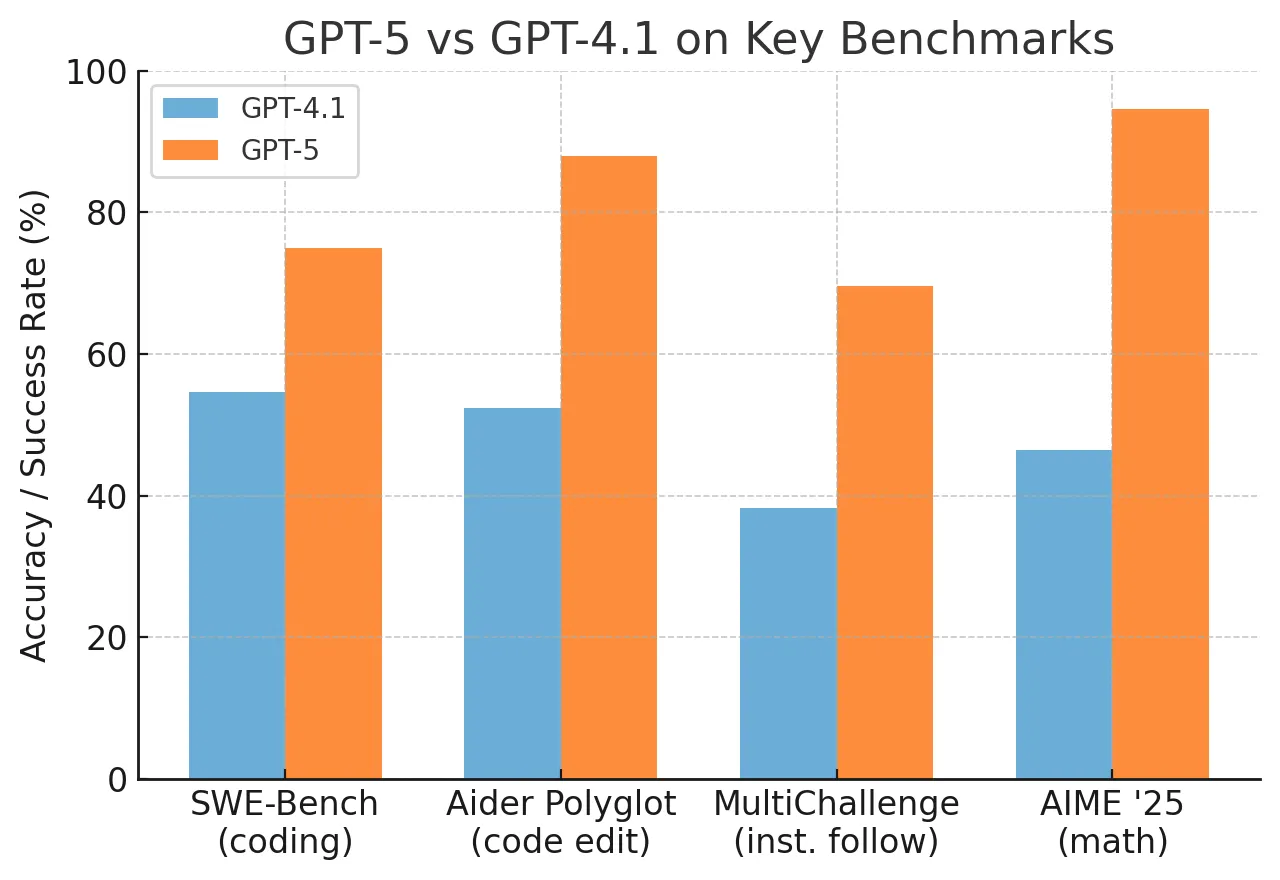

Key Results: Small Models Beating Giants

According to the research shared via HuggingPapers, the most striking finding is that MIA enables 7B-parameter models to "outperform GPT-5.4" on research-oriented benchmarks. The tweet announcement does not provide specific benchmark numbers, but the claim suggests dramatic efficiency gains: superior performance from models approximately 30-50x smaller than frontier models. That ratio assumes frontier models have roughly 210B to 350B parameters, a figure the announcement does not confirm.

This performance breakthrough appears to come from MIA's architectural specialization rather than raw scale. By dividing cognitive labor among specialized components and incorporating continual learning during task execution, the system compensates for the smaller base model's limitations in reasoning breadth and knowledge depth.

How It Works: The Manager-Planner-Executor Architecture

The MIA framework operates through a tightly integrated workflow:

Task Reception & Decomposition: The Manager receives a research query and decomposes it into manageable sub-problems with dependencies mapped between them.
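As a rough illustration of what "sub-problems with dependencies mapped between them" could look like, the sketch below orders a few sub-tasks with Python's standard `graphlib` module. The sub-task names and the dependency map are hypothetical; the announcement does not describe how MIA's Manager represents or orders its sub-tasks.

```python
from graphlib import TopologicalSorter

# Hypothetical sub-tasks for one research query. Each key maps to the
# set of sub-tasks that must finish before it can start.
dependencies = {
    "collect_sources": set(),
    "extract_claims": {"collect_sources"},
    "check_benchmarks": {"collect_sources"},
    "write_summary": {"extract_claims", "check_benchmarks"},
}


def execution_order(deps):
    """Return sub-task names in an order that respects every dependency."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

Any order the function returns starts with `collect_sources` and ends with `write_summary`; the two middle sub-tasks do not depend on each other, so their relative order is not guaranteed.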

Strategic Planning: For each sub-problem, the Planner generates not just a single action but multiple potential approaches, evaluating each against success criteria and resource constraints.
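One simple way to picture "evaluating each against success criteria and resource constraints" is a scoring function over candidate plans. The sketch below is an assumption made for illustration: the `CandidatePlan` fields, the `cost_weight` penalty, and the example numbers are invented, not taken from the MIA research.

```python
from dataclasses import dataclass


@dataclass
class CandidatePlan:
    name: str
    expected_success: float  # estimated chance of meeting the success criteria (0 to 1)
    cost: float              # estimated resource use, e.g. number of tool calls


def select_plan(candidates, budget, cost_weight=0.02):
    """Pick the best-scoring plan that fits the budget, or None if none fits."""
    feasible = [c for c in candidates if c.cost <= budget]
    if not feasible:
        return None
    # Score = expected success minus a linear penalty for resource use.
    return max(feasible, key=lambda c: c.expected_success - cost_weight * c.cost)


# Hypothetical candidates for a single sub-problem.
candidates = [
    CandidatePlan("broad_web_search", expected_success=0.6, cost=5),
    CandidatePlan("targeted_paper_lookup", expected_success=0.8, cost=12),
    CandidatePlan("full_code_reproduction", expected_success=0.9, cost=40),
]

best = select_plan(candidates, budget=20)
print(best.name)  # targeted_paper_lookup
```

With a budget of 20, the most expensive plan is excluded outright, and the targeted lookup wins because its higher expected success outweighs its extra cost under this particular penalty.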

Adaptive Execution: The Executor carries out the highest-rated plan while monitoring performance metrics. If execution reveals flaws in the plan or new information emerges, the Executor sends that feedback to the Planner, which adjusts the strategy.
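The execute-then-adjust loop described here can be sketched in a few lines. Everything in this example is a stand-in: `adaptive_execute`, the `execute` and `replan` callables, and the stub behavior are hypothetical, since the announcement gives no implementation details.

```python
def adaptive_execute(initial_plan, execute, replan, max_rounds=3):
    """Run a plan; on failure, hand the observation back for a revised plan.

    `execute(plan)` returns (success, observation).
    `replan(plan, observation)` returns the next plan to try.
    Returns (successful_plan_or_None, history of attempts).
    """
    plan = initial_plan
    history = []
    for _ in range(max_rounds):
        success, observation = execute(plan)
        history.append((plan, success, observation))
        if success:
            return plan, history
        # Feedback step: the failed attempt informs the next strategy.
        plan = replan(plan, observation)
    return None, history


# Stubs standing in for the Executor and the Planner.
def fake_execute(plan):
    if plan == "broad_web_search":
        return False, "sources too shallow"
    return True, "found primary source"


def fake_replan(plan, observation):
    return "targeted_paper_lookup"


chosen, history = adaptive_execute("broad_web_search", fake_execute, fake_replan)
print(chosen)        # targeted_paper_lookup
print(len(history))  # 2
```

The `max_rounds` cap is a design choice in this sketch so that a plan that never succeeds cannot loop forever.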

Memory Integration: Throughout this process, MIA maintains a structured memory of what strategies worked, what knowledge was discovered, and how different approaches performed. This memory informs future planning cycles, creating a self-improving system.
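A minimal sketch of such a memory, assuming it only needs to track how often each strategy succeeded per task type, might look like the following. The `StrategyMemory` class and its methods are hypothetical, and the sketch covers strategy outcomes only, not the discovered knowledge the article also mentions.

```python
class StrategyMemory:
    """Tracks successes and attempts per (task_type, strategy) pair."""

    def __init__(self):
        self._stats = {}  # (task_type, strategy) -> [successes, attempts]

    def record(self, task_type, strategy, success):
        entry = self._stats.setdefault((task_type, strategy), [0, 0])
        entry[0] += int(success)
        entry[1] += 1

    def success_rate(self, task_type, strategy):
        entry = self._stats.get((task_type, strategy))
        return entry[0] / entry[1] if entry else None

    def best_strategy(self, task_type):
        """Return the strategy with the highest observed success rate, if any."""
        rates = {
            strategy: successes / attempts
            for (kind, strategy), (successes, attempts) in self._stats.items()
            if kind == task_type
        }
        return max(rates, key=rates.get) if rates else None


memory = StrategyMemory()
memory.record("literature_review", "broad_web_search", False)
memory.record("literature_review", "broad_web_search", True)
memory.record("literature_review", "targeted_paper_lookup", True)
memory.record("literature_review", "targeted_paper_lookup", True)

print(memory.success_rate("literature_review", "broad_web_search"))  # 0.5
print(memory.best_strategy("literature_review"))  # targeted_paper_lookup
```

A planner could consult `best_strategy` before generating new candidates, which is one way recorded outcomes might inform future planning cycles.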

The "continual test-time learning" component is particularly noteworthy. Unlike traditional fine-tuning that happens before deployment, MIA learns during task execution—adjusting its strategies based on real-time feedback about what's working. This creates agents that become more effective the longer they work on a problem domain.
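The announcement does not say how MIA implements this learning. As one generic illustration of learning during execution without retraining the model, the sketch below uses an epsilon-greedy bandit, a standard technique substituted here for explanation only. The `StrategyBandit` class, the strategy names, and the success rates are all invented.

```python
import random


class StrategyBandit:
    """Epsilon-greedy choice over strategies, updated after every attempt."""

    def __init__(self, strategies, epsilon=0.1, seed=0):
        self.values = {s: 0.0 for s in strategies}  # running mean reward
        self.counts = {s: 0 for s in strategies}
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def choose(self):
        # Explore a random strategy with probability epsilon, else exploit.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.values))
        return max(self.values, key=self.values.get)

    def update(self, strategy, reward):
        # Incremental mean: new_mean = old_mean + (reward - old_mean) / n
        self.counts[strategy] += 1
        n = self.counts[strategy]
        self.values[strategy] += (reward - self.values[strategy]) / n


# Made-up success rates that the agent does not know in advance.
true_rates = {"broad_web_search": 0.3, "targeted_paper_lookup": 0.7}
bandit = StrategyBandit(list(true_rates), epsilon=0.2, seed=0)
environment = random.Random(1)

for _ in range(200):
    strategy = bandit.choose()
    reward = 1.0 if environment.random() < true_rates[strategy] else 0.0
    bandit.update(strategy, reward)

print(bandit.values)  # learned estimates of each strategy's success rate
```

The underlying model's weights never change in this sketch. Only the small table of estimates is updated, which is one way an agent could adapt during a task at negligible cost.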

Why It Matters: Efficiency and Specialization Over Scale

The MIA research points toward an alternative path in AI development that doesn't rely exclusively on scaling model parameters. If validated through peer review and independent benchmarking, this approach could have several implications:

- Cost Reduction: Running 7B models requires dramatically less computational power than 100B+ models, potentially making sophisticated AI research assistants accessible to individual researchers and smaller organizations.

- Specialization Potential: The modular architecture could be adapted for specific domains (scientific literature review, competitive intelligence, legal research) with domain-specific planners and executors.

- Transparency Advantage: The explicit separation of planning, management, and execution makes the agent's reasoning process more interpretable than monolithic models where decisions emerge from billions of parameters.
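To put the cost-reduction point in rough numbers, the weights alone of a model take about (parameter count) x (bytes per parameter) of memory. The sketch below applies that arithmetic to a 7B and a 100B model. It is a back-of-the-envelope estimate that ignores activations, the KV cache, and runtime overhead, and the `weight_memory_gb` helper is a name chosen for this example.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params, dtype="fp16"):
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


print(weight_memory_gb(7e9))            # 14.0
print(weight_memory_gb(100e9))          # 200.0
print(weight_memory_gb(7e9, "int4"))    # 3.5
```

At 16-bit precision, a 7B model's weights fit on a single high-end consumer GPU, while a 100B model's weights need multiple data-center accelerators. An agent framework makes several model calls per task, so the end-to-end saving would be smaller than this per-model ratio suggests.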

However, the claims require careful scrutiny. "Outperforming GPT-5.4" on research tasks needs precise definition—which benchmarks, under what conditions, and with what success metrics. The research community will need to see detailed evaluations comparing MIA-powered 7B models against frontier models on standardized research agent benchmarks.

gentic.news Analysis

This development aligns with a growing trend we've tracked since early 2025: the shift from monolithic models to specialized agent architectures. In January 2026, we covered OpenAI's "AgentOS" framework that similarly separates planning from execution, though targeting much larger base models. MIA represents the logical extension of this trend toward efficiency—applying architectural specialization to make smaller models competitive.

The claim of 7B models outperforming GPT-5.4 deserves particular attention given the timeline. GPT-5.4, released in Q4 2025, represented a significant leap in reasoning capabilities over its predecessors. If MIA genuinely enables such dramatic efficiency gains, it could disrupt the prevailing "bigger is better" paradigm that has dominated foundation model development since GPT-3's release in 2020.

This research also connects to another trend we've documented: the rise of test-time adaptation techniques. In November 2025, Meta's NLLB-3 introduced similar continual learning during translation tasks. MIA appears to apply this concept to the research agent domain, suggesting test-time learning may become a standard component of future AI systems rather than an optional enhancement.

Practitioners should watch for two developments: first, whether the MIA architecture can be generalized beyond research tasks to other complex reasoning domains; second, whether the efficiency gains hold across different 7B model families or are specific to certain architectures. The open question remains whether this represents a fundamental breakthrough in efficient reasoning or a highly optimized solution for a specific benchmark suite.

Frequently Asked Questions

How does MIA enable small models to beat much larger ones?

MIA compensates for the smaller base model's limitations through architectural specialization and continual learning. By dividing cognitive labor among Manager, Planner, and Executor components, and by learning from real-time feedback during task execution, the system achieves strategic depth that would normally require a much larger monolithic model. Think of it as a small but highly specialized team outperforming a single generalist with more raw knowledge.

What is "continual test-time learning" and why is it important?

Continual test-time learning refers to a system's ability to learn and adapt while performing tasks, rather than only learning during a separate training phase. For MIA, this means the agent improves its research strategies based on what works during actual execution—discovering which approaches yield better information, which sources are more reliable, and how to adjust plans when encountering obstacles. This creates agents that become more effective with experience on specific problems.

Has the MIA research been peer-reviewed or independently verified?

As of this reporting (April 2026), the research has been announced via social media but not yet published in a peer-reviewed venue or accompanied by detailed benchmark results. The claims are promising but require independent verification through standardized evaluations. The AI research community typically waits for published papers with full methodology and reproducible results before accepting such dramatic performance claims.

Could MIA work with model sizes other than 7B parameters?

The architecture is theoretically model-agnostic, though the researchers specifically highlight results with 7B models. The framework would likely work with both smaller and larger base models, with different trade-offs. With smaller models, you might hit fundamental capability limits; with larger models, you might achieve even better performance but with less dramatic efficiency gains relative to the baseline.