In a move that runs counter to the industry's push toward ever-larger models, Microsoft has released Phi-4-reasoning-vision-15B, a 15-billion-parameter open-weight multimodal reasoning model built for tasks that demand both visual perception and selective reasoning. The compact model is Microsoft's latest effort to make advanced AI easier to access and deploy, particularly for scientific, mathematical, and user-interface understanding tasks.

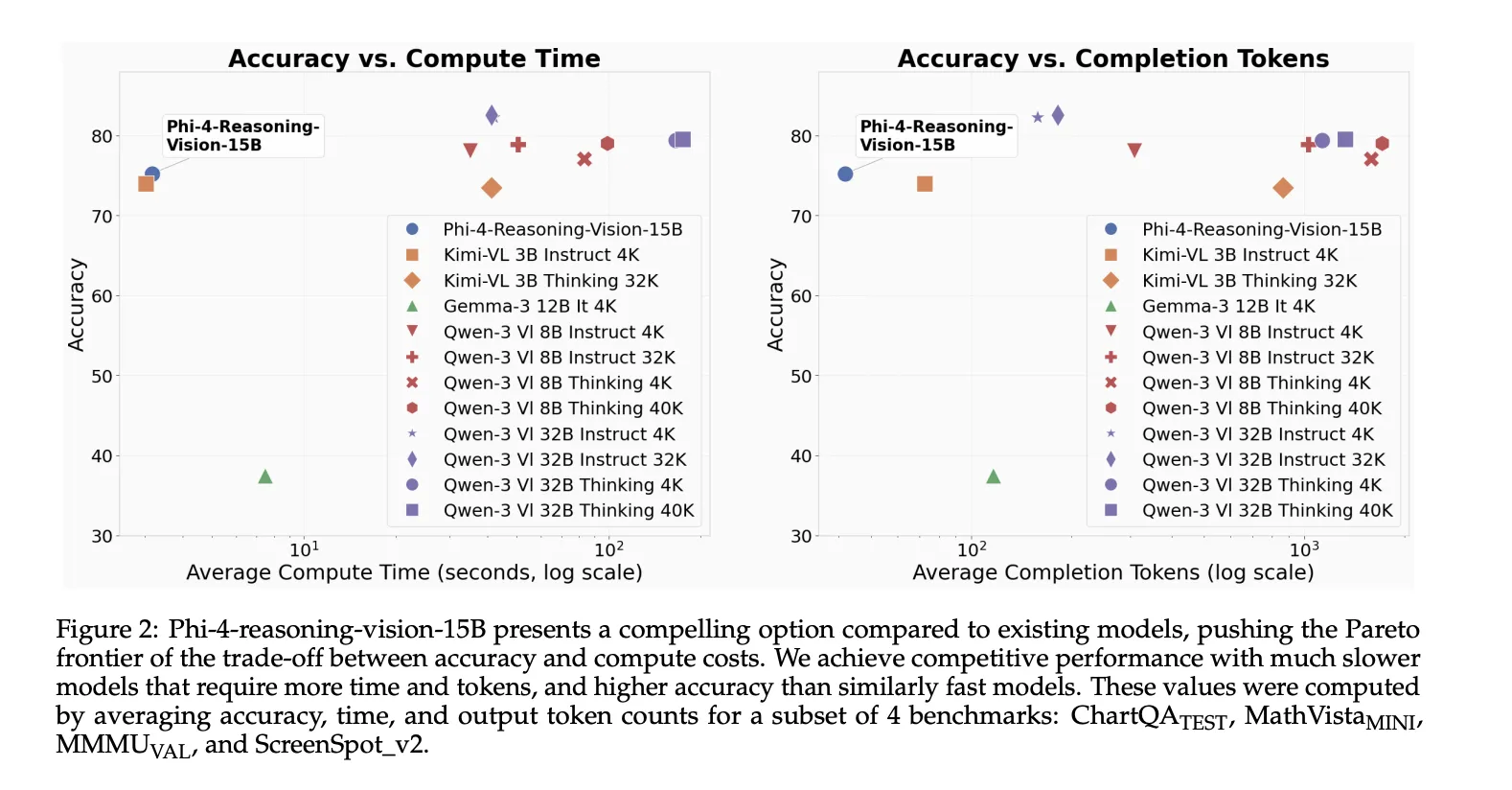

According to the announcement, Phi-4-reasoning-vision-15B is designed to balance reasoning quality against computational cost and training-data requirements. That is a deliberate departure from the trend toward increasingly massive vision-language models, which carry significant latency and deployment costs.

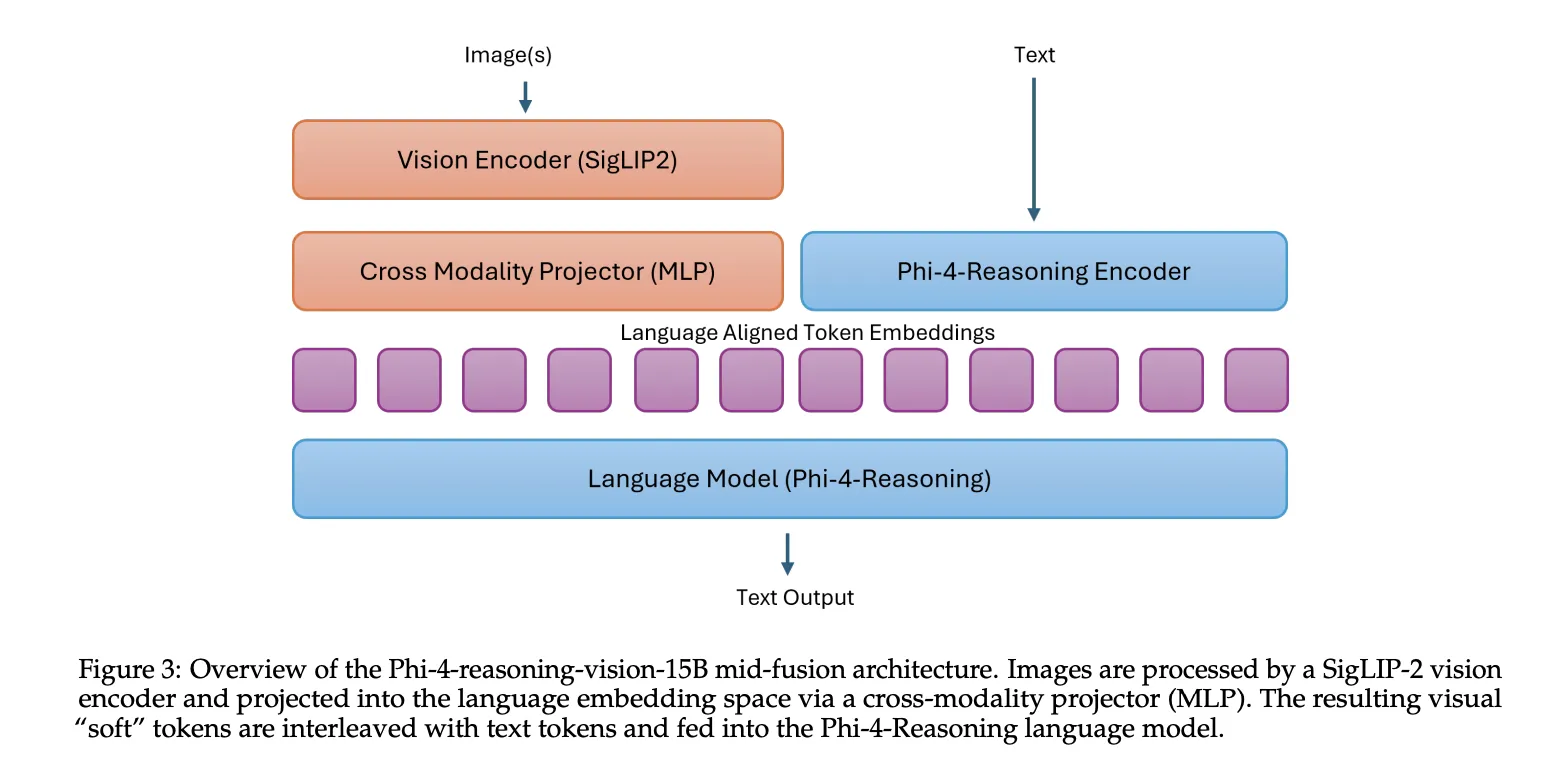

Architectural Innovation: The Mid-Fusion Approach

At its core, Phi-4-reasoning-vision-15B combines Microsoft's Phi-4-Reasoning language backbone with the SigLIP-2 vision encoder in what its developers describe as a mid-fusion architecture. The design is a practical compromise between performance and efficiency.

In this setup, the vision encoder first converts images into visual tokens, which are then projected into the language model's embedding space and processed by the pretrained language model. This approach preserves strong cross-modal reasoning capabilities while keeping both training and inference costs manageable compared to heavier early-fusion designs that might integrate visual processing more deeply into the model's architecture.
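The data flow described above can be illustrated with a toy sketch. This is not Microsoft's implementation: the dimensions, the random "encoder", the single linear projector, and every function name below are hypothetical stand-ins chosen only to show the order of operations (encode the image, project visual tokens into the text embedding space, hand one combined sequence to the language model).

```python
import random

VISION_DIM = 4  # hypothetical width of the vision encoder's output vectors
TEXT_DIM = 6    # hypothetical width of the language model's embeddings


def encode_image(num_patches, rng):
    """Stand-in for a vision encoder: one VISION_DIM vector per image patch."""
    return [[rng.uniform(-1, 1) for _ in range(VISION_DIM)]
            for _ in range(num_patches)]


def make_projector(rng):
    """Random TEXT_DIM x VISION_DIM matrix standing in for a learned projector."""
    return [[rng.uniform(-1, 1) for _ in range(VISION_DIM)]
            for _ in range(TEXT_DIM)]


def project(visual_tokens, weights):
    """Map each visual token into the language model's embedding space."""
    return [[sum(w * x for w, x in zip(row, token)) for row in weights]
            for token in visual_tokens]


def build_input_sequence(projected_image, text_embeddings):
    """Place projected visual tokens ahead of the text embeddings.

    The pretrained language model would then process this single sequence.
    """
    return projected_image + text_embeddings


rng = random.Random(0)
visual_tokens = encode_image(num_patches=9, rng=rng)
projected = project(visual_tokens, make_projector(rng))
text_embeddings = [[0.0] * TEXT_DIM for _ in range(5)]  # placeholder prompt embeddings
sequence = build_input_sequence(projected, text_embeddings)
print(len(sequence), "tokens of width", len(sequence[0]))
```

In this sketch the language model itself is untouched; only the projector sits between the two pretrained components, which is the property the article credits for keeping training and inference costs manageable.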

The mid-fusion architecture allows the model to maintain the reasoning strengths of the Phi-4 language model while adding robust visual understanding capabilities without requiring a complete architectural overhaul or massive additional parameters.

Why Smaller Models Matter

Microsoft's decision to pursue a smaller-model route comes at a time when many vision-language models have grown substantially in both parameter count and token usage, creating practical barriers to deployment. Larger models typically require more computational resources, respond with higher latency, and cost more to run. Those factors limit their real-world applicability, especially for organizations without massive computing infrastructure.
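A rough back-of-envelope calculation shows why parameter count matters for deployment. The sketch below estimates the memory needed just to hold a model's weights at several common numeric precisions. The precision list is an assumption for illustration (the article does not say which formats the model is distributed in), and the figures exclude activations, the key-value cache, and runtime overhead.

```python
# Bytes needed to store one parameter at each precision (illustrative choices).
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, dtype):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


PHI4_VISION_PARAMS = 15e9  # 15 billion parameters, as stated in the article

for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: {weight_memory_gb(PHI4_VISION_PARAMS, dtype):.1f} GB")
```

Under these assumptions, a 15-billion-parameter model needs about 30 GB for its weights at 16-bit precision, and the requirement scales linearly with parameter count.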

Phi-4-reasoning-vision-15B was specifically built as a more practical alternative that can handle common multimodal workloads without relying on extremely large training datasets or excessive inference-time token generation. The model was trained on approximately 200 billion multimodal tokens, a substantial but not astronomical dataset by today's standards.

This efficiency-focused approach aligns with Microsoft's broader strategy of making AI more accessible and deployable across different environments, from cloud servers to potentially edge devices.

Specialized Capabilities: Math, Science, and GUI Understanding

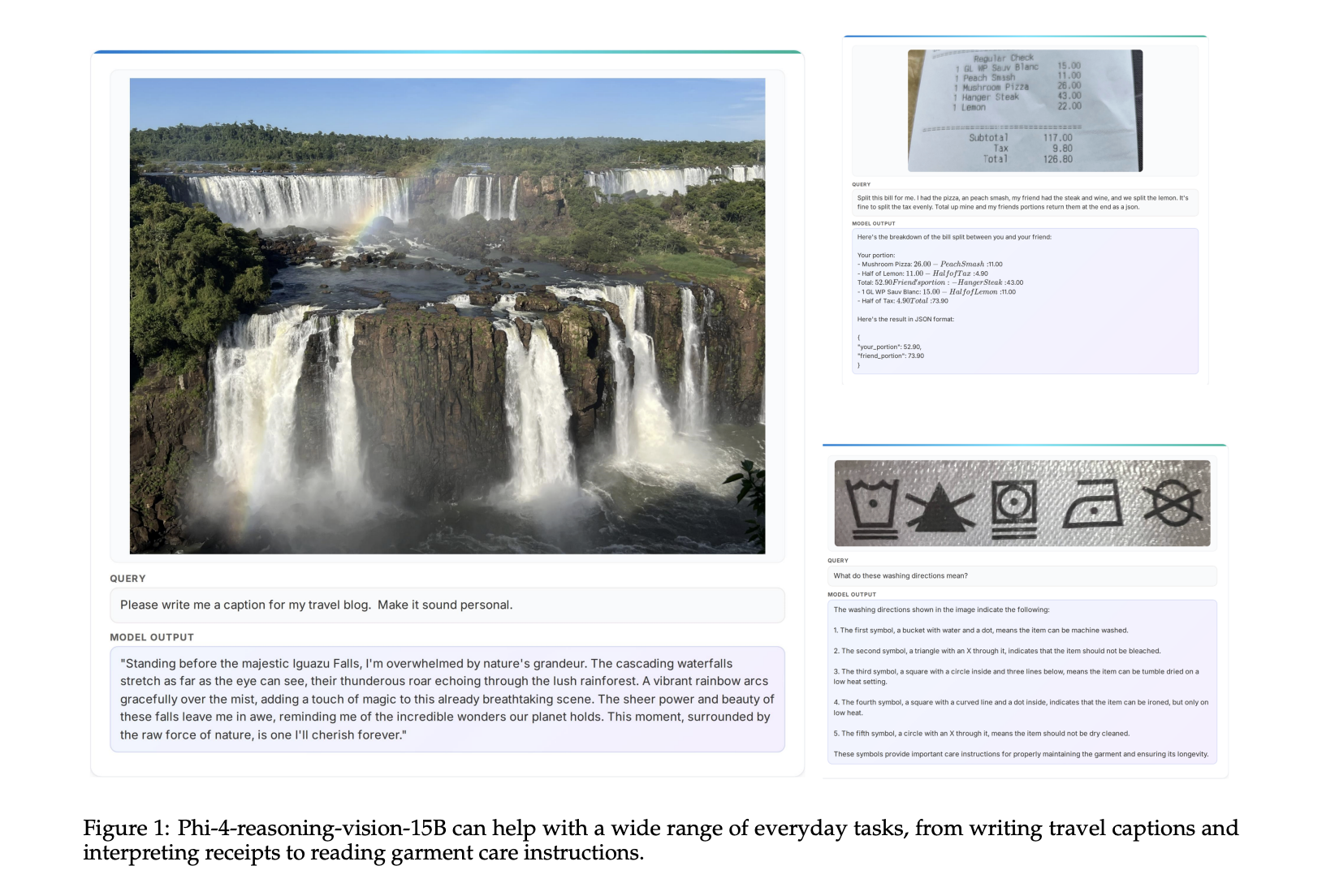

What sets Phi-4-reasoning-vision-15B apart is its specialized focus on scientific and mathematical reasoning and user interface understanding. These capabilities position the model for practical applications in education, research, and software development.

For mathematical and scientific tasks, the model can presumably interpret diagrams, charts, equations, and scientific notation while providing reasoned explanations or solutions. This makes it potentially valuable for educational technology, research assistance, and data analysis applications.

The GUI understanding capability is particularly noteworthy, as it suggests the model can interpret screenshots of software interfaces, potentially enabling applications in software testing, accessibility technology, and automated workflow assistance. This aligns with Microsoft's recent focus on AI integration across its software ecosystem, including Windows and productivity applications.

Context Within Microsoft's AI Strategy

This release comes amid a flurry of AI-related announcements from Microsoft in early 2026. Just days before Phi-4-reasoning-vision-15B's release, Microsoft launched Codex for Windows, bringing OpenAI's code-generation AI to the Windows desktop operating system. Additionally, leaked details suggest Windows 12 will be an AI-first operating system with heavy Copilot integration.

Microsoft has also recently released a complete, open-source AI degree curriculum on GitHub, making professional machine learning education more accessible worldwide. These moves collectively demonstrate Microsoft's comprehensive approach to AI: developing the models, integrating them into products, and educating the next generation of practitioners.

The company's legal team also recently determined that the EU's gatekeeper designation of Anthropic does not require blocking Claude on Microsoft platforms, suggesting a pragmatic approach to regulatory compliance while maintaining access to diverse AI technologies.

Open-Weight Philosophy and Industry Implications

By releasing Phi-4-reasoning-vision-15B as an open-weight model, Microsoft continues its pattern of contributing to the open AI ecosystem while maintaining proprietary control over its largest commercial offerings. This approach allows researchers, developers, and organizations to build upon the technology while Microsoft potentially benefits from community improvements and broader adoption.

The model's release comes at a time when AI is beginning to appear in official productivity statistics, potentially resolving what economists have called the "productivity paradox": the disconnect between massive technology investment and measured productivity gains. Efficient, specialized models like Phi-4-reasoning-vision-15B could accelerate this trend by making AI capabilities easier to deploy across industries.

Future Applications and Development

While the initial announcement focuses on the model's capabilities in math, science, and GUI understanding, the underlying architecture suggests potential for broader applications. The combination of visual understanding with selective reasoning could prove valuable in fields ranging from medical imaging analysis to technical documentation processing.

The model's efficiency characteristics make it particularly suitable for applications where latency matters, such as real-time assistance tools, educational applications, or embedded systems. As Microsoft continues to develop its AI ecosystem, models like Phi-4-reasoning-vision-15B may serve as building blocks for more complex, specialized applications.

Microsoft's continued investment in both large-scale and efficient AI models reflects a nuanced understanding of the technology landscape: while massive models push the boundaries of what's possible, practical, efficient models drive real-world adoption and create tangible value.

Source: MarkTechPost, March 6, 2026