A developer has open-sourced a solution to one of the most persistent frustrations of working with AI coding assistants: they retain no memory between sessions. The tool, called Mind, creates a shared, persistent memory layer that lets AI coding agents retain project context, architectural decisions, constraints, and bug fixes across different tools and sessions.

What Mind Solves: The Amnesia Problem

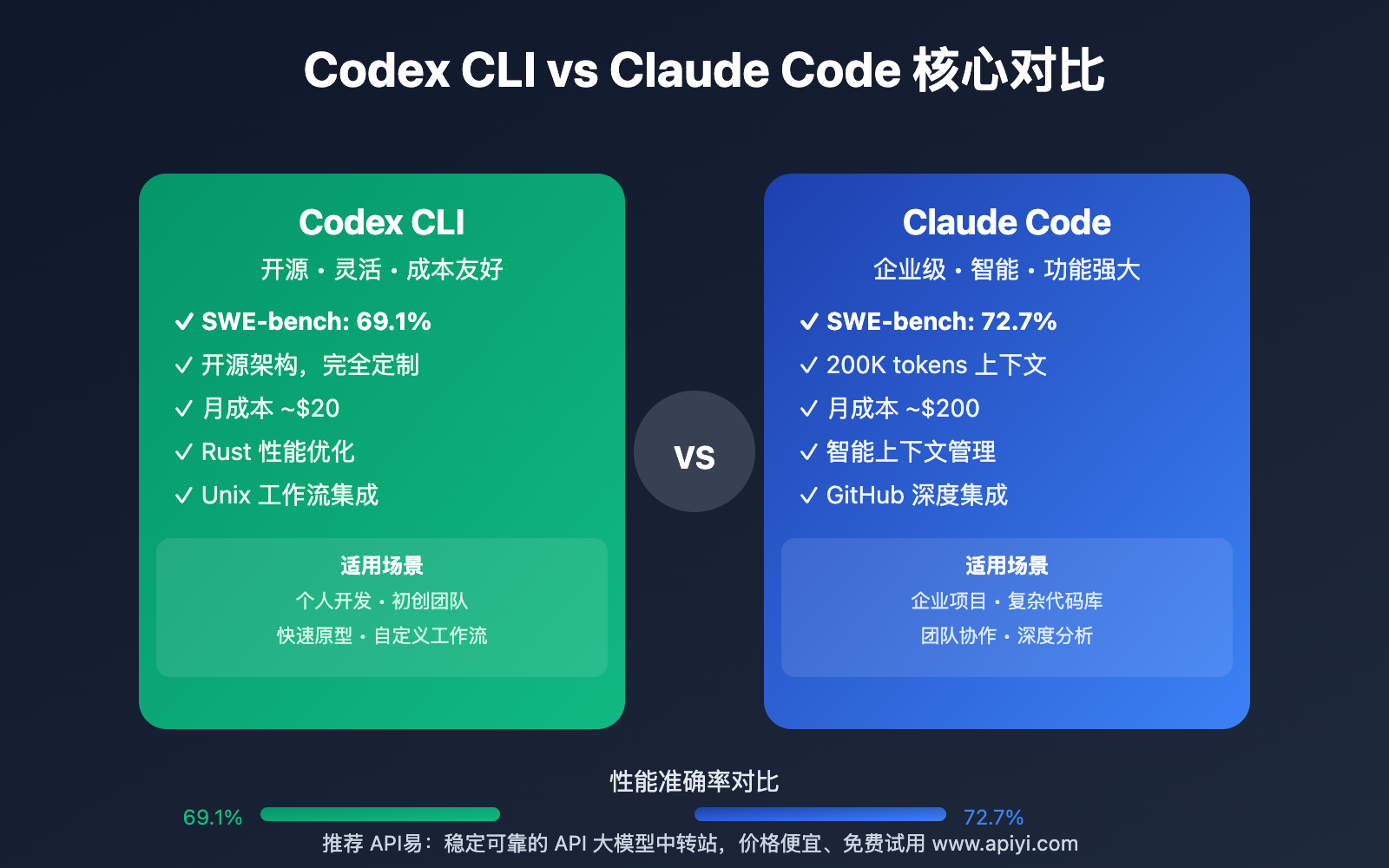

Currently, when developers use AI coding assistants like Claude Code, Cursor, Codex, OpenCode, Gemini CLI, or Windsurf, each new session starts from scratch. The AI has no memory of:

- Your project's architecture decisions

- Constraints you've explained previously

- Bugs you fixed in previous sessions

- Design patterns you've established

- API documentation you've provided

This means developers waste significant time re-explaining context that should persist. As the source tweet notes: "Every new session = re-explaining your architecture, your constraints, your decisions, the bug you fixed last Tuesday."

How Mind Works: A Shared Memory Layer

Mind operates as a persistent memory system that sits between the developer and various AI coding tools. According to the announcement:

- Cross-Tool Compatibility: Mind works with Claude Code, Cursor, Codex, OpenCode, Gemini CLI, and Windsurf

- Session Persistence: Memory persists across different coding sessions

- Environment Flexibility: Developers can start in the terminal and continue in their IDE with the same context

- Shared Brain: All tools access the same persistent memory store

The implementation appears to create a standardized memory format that different AI coding tools can read from and write to, effectively giving these tools a "long-term memory" for each project.
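
One way to picture such a shared format is an append-only JSON Lines file that every tool reads from and writes to. The sketch below is purely illustrative: the `MemoryEntry` fields, the helper names, and the idea of a single per-project file are assumptions, not Mind's documented schema or API.

```python
import json
from dataclasses import dataclass, asdict
from pathlib import Path


@dataclass
class MemoryEntry:
    # Hypothetical fields; the announcement does not document Mind's real schema.
    kind: str    # e.g. "decision", "constraint", "bugfix"
    text: str    # the fact to remember
    source: str  # which tool recorded it


def append_entry(path: Path, entry: MemoryEntry) -> None:
    """Append one entry as a single JSON line, creating the file if needed."""
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(entry)) + "\n")


def load_entries(path: Path) -> list[MemoryEntry]:
    """Read every entry back, regardless of which tool wrote it."""
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as f:
        return [MemoryEntry(**json.loads(line)) for line in f if line.strip()]
```

Because each line is an independent JSON object, a terminal agent and an IDE agent could both append to the same file without needing to understand each other's internals.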

Technical Implementation Approach

The brief announcement gave no specific implementation details, but a tool like Mind could plausibly rely on one or more of the following approaches:

- Context Injection: Automatically injecting relevant project history into each new AI prompt

- Vector Database Storage: Storing project context in embeddings that can be retrieved based on similarity

- Project-Specific Memory Files: Creating persistent memory files that AI tools can reference

- API Integration: Providing a standardized API that different coding tools can implement

The key innovation is creating a memory system that works across different tools rather than being locked into a single vendor's ecosystem.
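
To make the context injection idea concrete, here is a minimal sketch of prepending remembered notes to a new request while staying within a size budget. The function name `build_prompt`, the character-based budget, and the prompt layout are all assumptions chosen for illustration; the announcement does not say how Mind assembles prompts.

```python
def build_prompt(memories: list[str], request: str, max_chars: int = 2000) -> str:
    """Prepend remembered notes to a request, preferring the newest, within a budget."""
    kept: list[str] = []
    used = 0
    # Assumption: the newest notes sit at the end of the list and matter most.
    for note in reversed(memories):
        cost = len(note) + 3  # "- " prefix plus a newline
        if used + cost > max_chars:
            break
        kept.append(f"- {note}")
        used += cost
    if not kept:
        return request
    kept.reverse()  # restore chronological order for readability
    return "Project memory:\n" + "\n".join(kept) + "\n\nTask:\n" + request


notes = ["Use PostgreSQL", "No ORM", "Fixed cache race"]
print(build_prompt(notes, "Add a /users endpoint"))
```

A real system would more likely budget in tokens than characters and rank notes by relevance rather than recency, but the trade-off is the same: every injected note consumes prompt space.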

Practical Implications for Developers

For developers using AI coding assistants, Mind could significantly reduce the friction of context switching:

- Reduced Repetition: No more re-explaining project architecture each session

- Consistent Context: The AI remembers decisions made days or weeks earlier

- Tool Flexibility: Switch between different AI coding tools without losing context

- Project Onboarding: New team members could benefit from accumulated project knowledge stored in Mind

Current Limitations and Unknowns

Based on the brief announcement, several questions remain:

- Security: How is sensitive code and project information stored and protected?

- Performance: Does adding memory context increase prompt sizes significantly?

- Integration Depth: How deeply integrated is Mind with each supported tool?

- Setup Complexity: What's required to get Mind working across multiple tools?

- Open Source Status: What license is it released under, and where is the repository?

gentic.news Analysis

This development addresses a fundamental limitation in current AI coding assistants that has become increasingly apparent as developers rely on them for larger, more complex projects. The lack of persistent memory forces developers to either work in marathon sessions or accept significant context-rebuilding overhead.

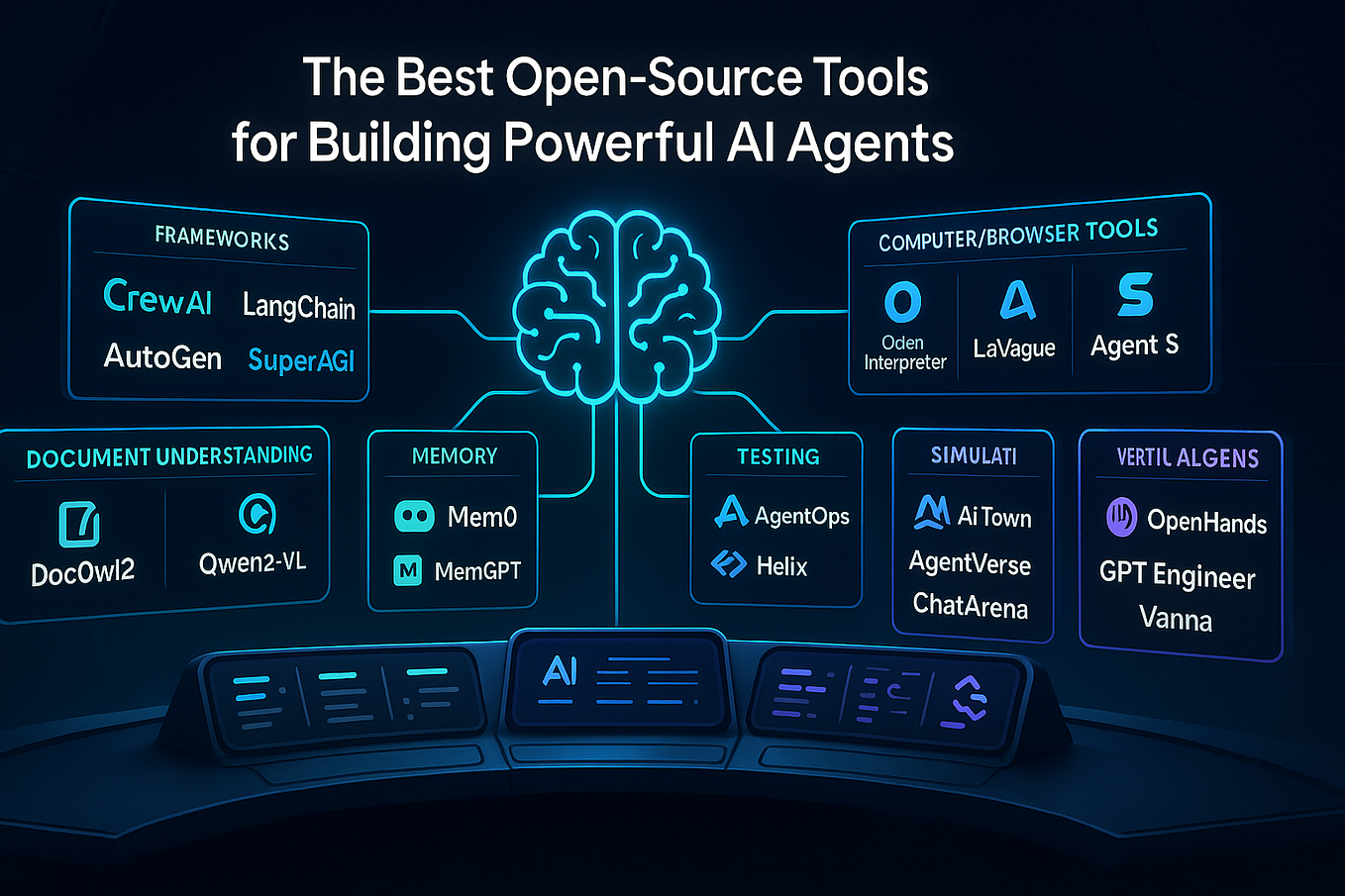

Technically, Mind represents an interesting approach to the context window problem. Rather than trying to fit everything into a single massive context window (which brings diminishing returns and rising costs), it appears to rely on selectively pulling relevant historical context into each session, although the announcement does not confirm the mechanism. That would align with broader trends in AI agent architecture, where retrieval-augmented generation (RAG) systems are becoming standard for handling large knowledge bases.

Competitively, this open-source approach could pressure proprietary AI coding tools to either adopt similar memory features or risk being seen as inferior. We've seen this pattern before with open-source tools like Continue.dev, where open alternatives push commercial players to accelerate their feature development. If Mind gains traction, expect companies like GitHub (Copilot), Amazon (Q Developer, formerly CodeWhisperer), and JetBrains (AI Assistant) to either implement similar features or face developer complaints about their tools' "amnesia."

Practically, the success of Mind will depend on three factors: ease of integration with existing tools, the quality of its memory retrieval (does it pull the right context at the right time?), and whether it can handle the complexity of real-world software projects with multiple interconnected components. The multi-tool support is particularly smart: developers often use different AI assistants for different tasks, and a memory system that only works with one tool would have limited utility.

Frequently Asked Questions

How does Mind store and retrieve project memory?

The announcement doesn't provide specific implementation details, but tools of this kind typically combine vector embeddings with semantic search. In that design, project context (architecture decisions, constraints, bug fixes) is converted into embeddings and stored in a vector database. When a developer starts a new session, the system retrieves the most relevant context for the current task and injects it into the AI's prompt.
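
The retrieval step can be sketched in a few lines. This example is not Mind's implementation: it swaps a real embedding model for a toy bag-of-words vector so that it runs with the standard library alone, and the names `embed`, `cosine`, and `retrieve` are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, notes: list[str], k: int = 2) -> list[str]:
    """Return the k stored notes most similar to the query."""
    q = embed(query)
    ranked = sorted(notes, key=lambda n: cosine(q, embed(n)), reverse=True)
    return ranked[:k]


notes = [
    "Auth uses JWT tokens with 15 minute expiry",
    "Database is PostgreSQL 15, no ORM",
    "Fixed race condition in cache invalidation",
]
print(retrieve("why is the cache invalidation failing", notes, k=1))
```

A production system would replace `embed` with a learned embedding model and the linear scan with an indexed vector store, but the ranking logic has the same shape.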

Is Mind secure for proprietary codebases?

Security considerations are crucial for any tool that stores project context. The open-source nature of Mind means developers can inspect the code and deployment options. For sensitive projects, Mind would likely need to run entirely locally, storing embeddings in a local vector database rather than cloud services. The announcement doesn't specify security measures, so early adopters should carefully evaluate the implementation.
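
As an illustration of what "entirely local" storage could look like, the sketch below keeps notes in a SQLite database on the developer's machine using Python's standard `sqlite3` module. The table layout and the `open_store`, `remember`, and `recall` helpers are assumptions made for this example; the announcement does not describe how Mind stores data.

```python
import sqlite3


def open_store(db_path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a local store; pass a file path to persist across sessions."""
    conn = sqlite3.connect(db_path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS memory ("
        "id INTEGER PRIMARY KEY, project TEXT NOT NULL, note TEXT NOT NULL)"
    )
    return conn


def remember(conn: sqlite3.Connection, project: str, note: str) -> None:
    """Insert one note; the connection context manager commits the transaction."""
    with conn:
        conn.execute(
            "INSERT INTO memory (project, note) VALUES (?, ?)", (project, note)
        )


def recall(conn: sqlite3.Connection, project: str) -> list[str]:
    """Return a project's notes in insertion order."""
    rows = conn.execute(
        "SELECT note FROM memory WHERE project = ? ORDER BY id", (project,)
    )
    return [row[0] for row in rows]
```

Nothing in this design leaves the machine, which is the property a team with a proprietary codebase would want to verify in any memory tool before adopting it.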

Can Mind work with custom or proprietary AI coding tools?

The announcement lists several popular AI coding tools, but the open-source nature suggests Mind could be extended to work with other tools. Most AI coding assistants have some form of plugin or extension system, and Mind would need to provide integration points for different tools. The success of this approach depends on whether Mind uses a standardized API that different tools can implement.

How does Mind compare to built-in memory features in tools like Cursor?

Some AI coding tools are beginning to add limited memory features. For example, Cursor has experimented with remembering project context across sessions. Mind's advantage is its cross-tool compatibility: it creates a unified memory layer that works regardless of which AI assistant you're using. This is particularly valuable for developers who use multiple tools for different purposes or who switch between tools as their needs change.