What Happened

MIT researchers have developed a new AI architecture called Recursive Language Models (RLMs) that, according to a tweet from @HowToAI_, removes the need for Retrieval-Augmented Generation (RAG) and handles inputs of 10 million tokens or more. The method treats a long document as an external environment: the text is stored in a Python variable, and the AI writes code to search, slice, and filter it. It then recursively spawns smaller "sub-AIs" that read specific snippets in parallel, preserving all original context without summarization.

The reported results show a dramatic improvement: on the hardest long-context reasoning benchmarks, a standard model scored 0.04, while the RLM architecture achieved 58.00. The system reportedly scales to 10 million+ tokens and costs less than sending the same input as a single massive prompt.

How It Works

RLMs replace the traditional approaches of stuffing all data into a giant context window or using RAG to split documents into chunks and retrieve only a few of them. Instead, the AI is placed in a sandbox where the data is stored as a Python variable. When a question is asked, the AI writes code to actively search, slice, and filter the document. It then recursively spawns smaller "sub-AIs" to read specific snippets in parallel, never summarizing or deleting data.
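The loop described above can be illustrated with a toy sketch. This is not the paper's implementation: `read_snippet` and `recursive_query` are hypothetical names, the "sub-model" is a plain keyword filter standing in for a real model call, and the halving strategy is an assumption chosen only to show the recursive, parallel shape of the idea.

```python
from concurrent.futures import ThreadPoolExecutor


def read_snippet(lines, keyword):
    """Hypothetical stand-in for a sub-model call.

    A real system would send the snippet to a language model; here we
    simply return the lines that mention the keyword.
    """
    return [line for line in lines if keyword.lower() in line.lower()]


def recursive_query(lines, keyword, max_lines=4):
    """Return matching lines; the original `lines` list is never modified."""
    # Base case: the snippet is small enough for one sub-model read.
    if len(lines) <= max_lines:
        return read_snippet(lines, keyword)

    # "Code as search": split the document and keep only the halves
    # that a cheap programmatic check says could be relevant.
    mid = len(lines) // 2
    halves = [lines[:mid], lines[mid:]]
    relevant = [h for h in halves
                if any(keyword.lower() in line.lower() for line in h)]

    # Recurse into the relevant halves in parallel; map() preserves order.
    with ThreadPoolExecutor() as pool:
        parts = list(pool.map(
            lambda h: recursive_query(h, keyword, max_lines), relevant))
    return [hit for part in parts for hit in part]


# The full document lives in an ordinary Python variable.
document = [f"Clause {i}: standard terms apply." for i in range(1, 41)]
document[6] = "Clause 7: the supplier accepts indemnity for data loss."
document[33] = "Clause 34: indemnity is capped at the contract value."

hits = recursive_query(document, "indemnity")
print(hits)
```

The point of the sketch is that the document is filtered by code rather than compressed: every clause stays in `document`, and only the snippets that pass the programmatic check are handed to a reader.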

This approach preserves every single piece of original context, avoiding the "context rot" that occurs when models process giant prompts in one pass. The method is inspired by how humans read: we don't memorize entire books; we search, skim, and focus on relevant sections.

Key Results

The benchmark results are stark. On the hardest long-context reasoning benchmarks, the standard model scored 0.04, while the RLM architecture hit 58.00 — a 1,450x improvement. The system handles inputs up to two orders of magnitude beyond normal context windows, scaling easily to 10 million+ tokens.
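The two headline figures can be checked with a few lines of arithmetic. The scores and the 10 million token figure come from the article; the ~128K baseline is the typical context window the article compares against, used here as an assumption for the ratio.

```python
import math

standard_score = 0.04
rlm_score = 58.00

# Ratio of the two reported benchmark scores.
improvement = rlm_score / standard_score
print(f"Improvement factor: {round(improvement)}x")

# How many orders of magnitude separate 10M tokens from a ~128K window?
baseline_tokens = 128_000      # assumed typical context window
rlm_tokens = 10_000_000
orders_of_magnitude = math.log10(rlm_tokens / baseline_tokens)
print(f"Orders of magnitude: {orders_of_magnitude:.2f}")
```

The score ratio works out to 1,450, and 10 million tokens is a little under two orders of magnitude above a 128K window, which is consistent with the "two orders of magnitude" phrasing as a rounded figure.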

| Metric | Standard model | RLM |
| --- | --- | --- |
| Long-context reasoning score | 0.04 | 58.00 |
| Maximum context length | ~128K tokens | 10M+ tokens |
| Cost | High for large prompts | Lower than standard massive prompt |

Why It Matters

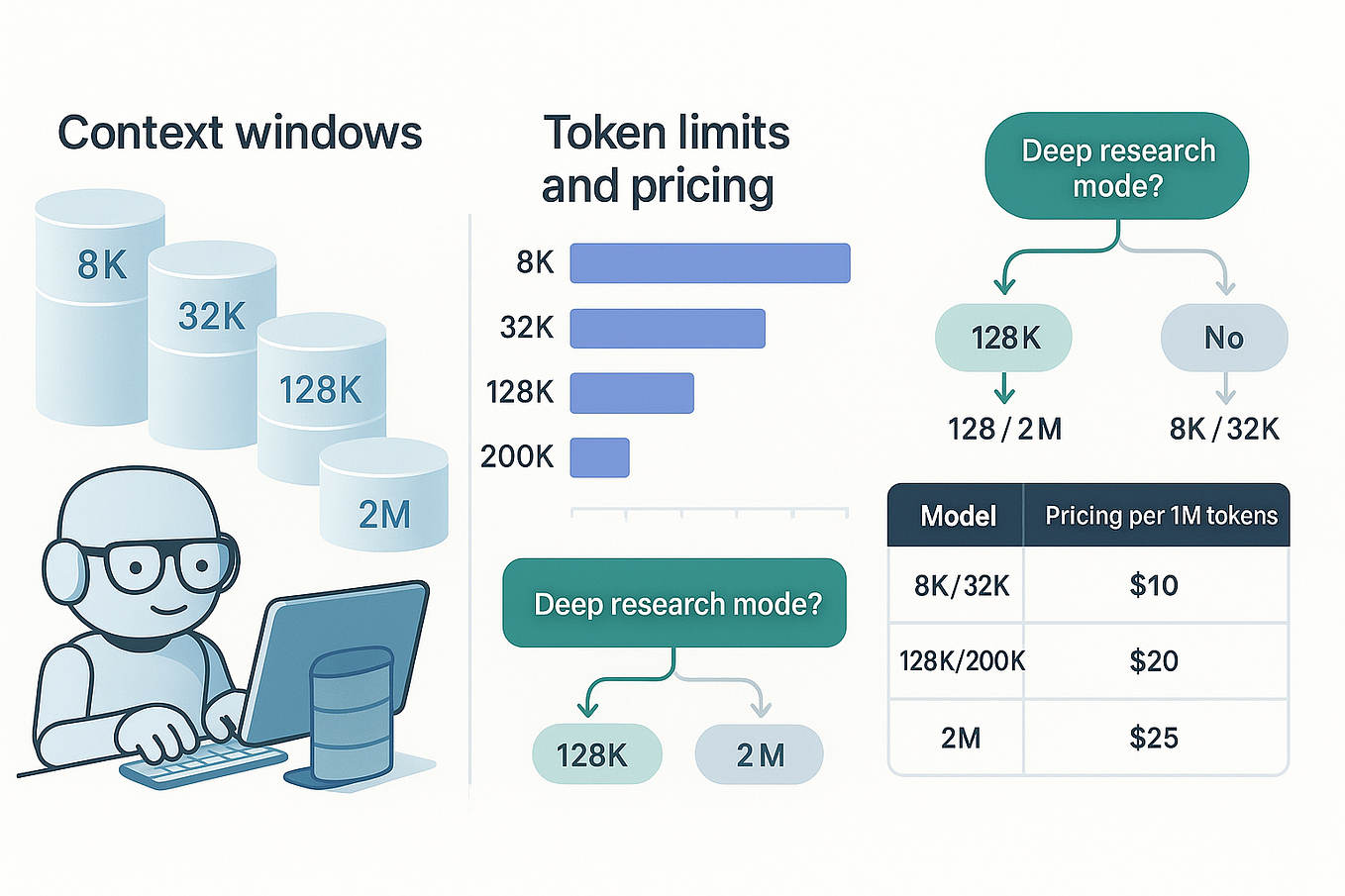

This development challenges the prevailing approach of building ever-larger context windows. Over the past two years, companies have spent heavily on compute to scale context windows, with models such as GPT-4 Turbo (128K tokens) and Claude 3 (200K tokens) pushing boundaries. But MIT's approach suggests that the future is less about forcing a model to swallow a giant wall of text and more about teaching it how to read.

RLMs could dramatically reduce compute costs for long-document analysis, legal document review, scientific literature analysis, and any application requiring understanding of large datasets. The ability to preserve all original context without summarization is particularly important for domains where nuance matters, such as legal contracts, medical records, and research papers.

What This Means in Practice

For practitioners, RLMs could eliminate the need for complex RAG pipelines for many use cases. Instead of building vector databases, chunking strategies, and retrieval systems, developers could use a single model that knows how to search and read documents. This could simplify architectures and reduce latency for applications that require deep understanding of large documents.

Limitations and Caveats

The source is a tweet from @HowToAI_, which summarizes the paper. The original paper's details — exact model sizes, training data, and reproducibility — are not yet fully available. The benchmark comparison (0.04 vs 58.00) may be on a specific test set, and generalizing to all long-context tasks requires further validation.

gentic.news Analysis

This development arrives at a critical inflection point in the AI industry's approach to context windows. For the past two years, the dominant narrative has been that bigger context windows are the path to better long-document understanding. Companies like Anthropic (Claude 3.5 with 200K tokens) and Google (Gemini 1.5 Pro with 1M tokens) have invested heavily in scaling context. MIT's RLM flips this assumption on its head, suggesting that architectural innovation — not brute-force scaling — may be the more efficient path.

The recursive approach is reminiscent of other recent trends in AI, such as chain-of-thought reasoning and tool use. By treating the document as an external environment and using code to interact with it, RLMs effectively give the model a "search engine" for its own context. This is a fundamentally different paradigm from both RAG (which requires external retrieval) and long-context windows (which require expensive attention mechanisms).

If RLMs prove reproducible and scalable, they could reshape the economics of AI applications. Currently, RAG systems require significant infrastructure: vector databases, embedding models, and retrieval logic. RLMs could collapse this stack into a single model, reducing complexity and cost. However, the recursive spawning of sub-models could introduce latency challenges, and the approach may be less effective for tasks that require holistic understanding of an entire document rather than targeted search.
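The latency trade-off can be made concrete with a back-of-envelope estimate. Every number below is an assumption for illustration, not a figure from the paper: an 8,000-token snippet per sub-model call, 32 calls running in parallel, and a `fraction_read` parameter describing how much of the document the search step fails to rule out.

```python
import math


def estimate_calls(total_tokens, snippet_tokens, parallelism, fraction_read):
    """Estimate sub-model calls and sequential waves for one query.

    fraction_read is the share of snippets that still has to be read
    after the code-based search step (1.0 means the whole document).
    """
    snippets = math.ceil(total_tokens / snippet_tokens)
    calls = math.ceil(snippets * fraction_read)
    waves = math.ceil(calls / parallelism)  # batches that run back to back
    return calls, waves


# Holistic task: nothing can be filtered out, every snippet is read.
holistic = estimate_calls(10_000_000, 8_000, 32, fraction_read=1.0)

# Targeted search: code narrows the document to 5% of its snippets.
targeted = estimate_calls(10_000_000, 8_000, 32, fraction_read=0.05)

print(holistic)  # (1250, 40)
print(targeted)  # (63, 2)
```

Under these assumptions a targeted query finishes in 2 waves of sub-model calls, while a task that needs the whole document takes 40, which is why holistic tasks are the harder case for this design.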

Frequently Asked Questions

What is a Recursive Language Model (RLM)?

An RLM is a new AI architecture from MIT that treats long documents as an external environment. Instead of processing all text in one pass, it writes code to search, slice, and filter the document, then spawns smaller sub-models to read specific snippets in parallel. This preserves all original context without summarization.

How does RLM compare to RAG?

RAG (Retrieval-Augmented Generation) typically splits documents into chunks, embeds them, and stores the embeddings in a vector database, so only the few chunks retrieved for a query reach the model. RLM eliminates this by keeping the full document as a Python variable and letting the AI search it directly. This preserves nuance and context that chunked retrieval can miss.

What benchmarks does RLM outperform?

On the hardest long-context reasoning benchmarks, a standard model scored 0.04, while the RLM architecture scored 58.00 — a 1,450x improvement. The system handles inputs up to 10 million+ tokens, compared to typical context windows of 128K-200K tokens.

Is RLM available for public use?

The source is a tweet summarizing a research paper. The paper's code and model weights are not yet publicly available. Developers should monitor MIT's publications and GitHub for releases.