What Happened

Cisco Blogs has published a technical guide titled "Fine-Tuning Embedding Models for Enterprise Retrieval: A Practical Guide with NVIDIA Nemotron Recipe." The article is a practitioner-focused walkthrough of adapting general-purpose embedding models to specific enterprise domains. The full text is available through the Google News link. Its title indicates that it centers on a fine-tuning recipe built on NVIDIA's Nemotron models.

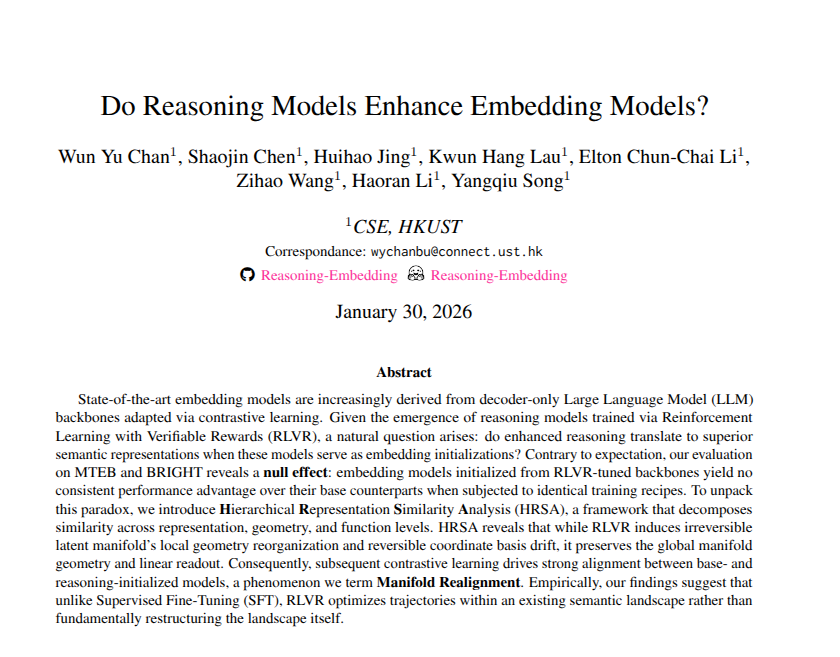

This guide arrives amid a surge of enterprise interest in Retrieval-Augmented Generation (RAG), where answer quality depends heavily on the quality of the retrieved information. Embedding models are the AI components that convert text into numerical vectors for similarity search. Fine-tuning them is a well-established way to improve retrieval accuracy for specialized vocabularies and use cases, such as legal documents, technical support, or proprietary product catalogs.
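To make "numerical vectors for similarity search" concrete, here is a minimal sketch of the retrieval step that an embedding model feeds. It assumes the query and documents have already been embedded. The 3-dimensional vectors, document IDs, and the helper names `cosine_similarity` and `top_k` are hypothetical stand-ins, not output from any Nemotron model or part of any vendor API. Real systems delegate this ranking to a vector database.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], doc_vecs: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Score every document against the query and return the k most similar."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical 3-dimensional vectors standing in for real embedding-model output
# (production models emit hundreds or thousands of dimensions).
docs = {
    "returns-policy": [0.9, 0.1, 0.0],
    "leather-care-guide": [0.1, 0.8, 0.3],
    "store-hours": [0.0, 0.2, 0.9],
}
query = [0.85, 0.2, 0.05]  # imagined embedding of "how do I send an item back?"

for doc_id, score in top_k(query, docs, k=2):
    print(f"{doc_id}: {score:.3f}")
```

With these toy vectors, `returns-policy` ranks first. Fine-tuning changes the vectors the model produces so that this ranking comes out right for domain-specific queries. The search code itself stays the same.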

Technical Details: The "Nemotron Recipe"

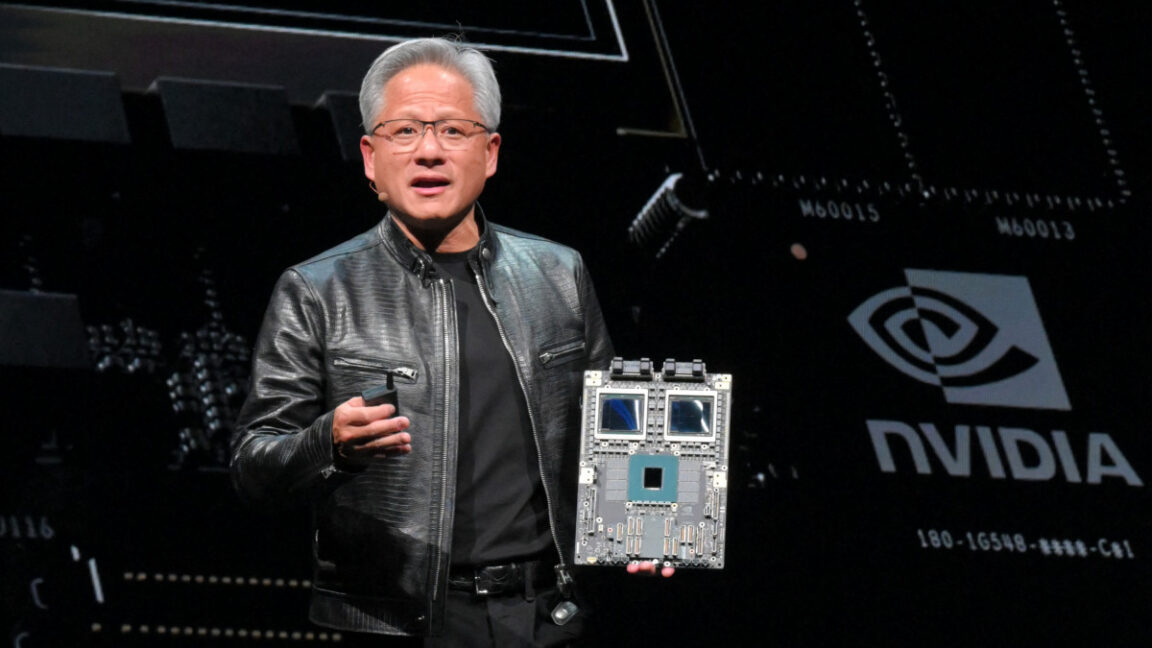

The specific steps are in the source guide, but the core idea is to start from a model in NVIDIA's Nemotron family and adapt it to enterprise data. NVIDIA has been expanding the Nemotron lineup with models such as Nemotron-Cascade 2 and Nemotron 3 Super. These are generative language models rather than embedding models, however. An embedding recipe would more plausibly start from one of NVIDIA's dedicated retrieval embedding models.

The typical "recipe" for fine-tuning an embedding model involves:

- Domain-Specific Data Curation: Gathering high-quality, representative pairs of queries and relevant documents from the enterprise's own data (e.g., customer service logs, product manuals, internal knowledge bases).

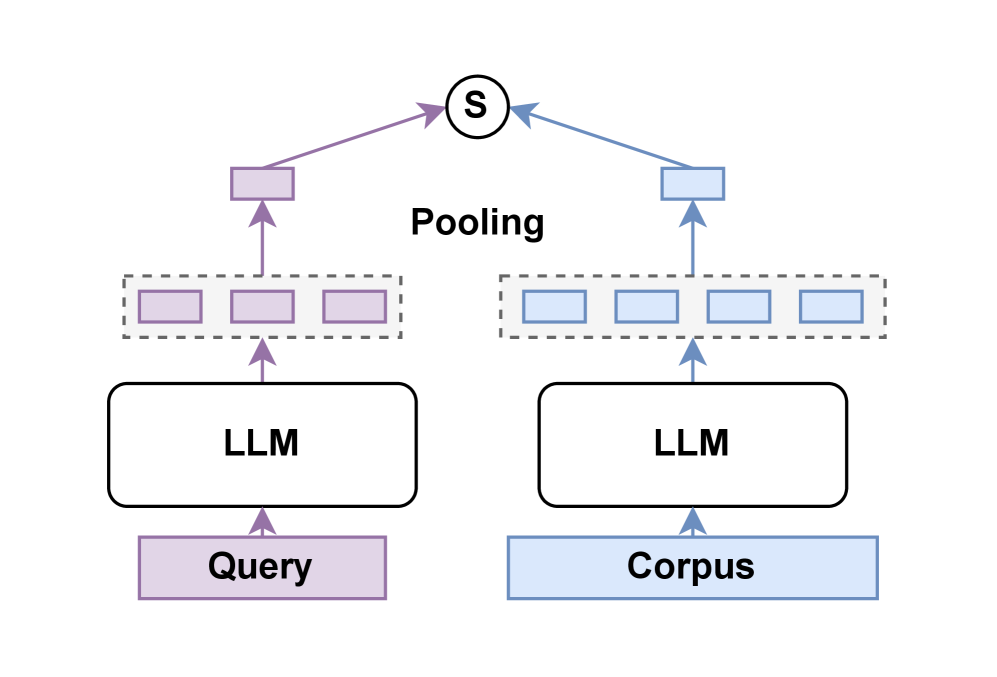

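- Contrastive Learning: Training the model to pull the vector representations of relevant query-document pairs closer together in the vector space while pushing irrelevant pairs apart. The irrelevant examples are typically the other documents in the same training batch, often supplemented with mined "hard negatives" that look similar to the right answer but are wrong. NVIDIA's tools likely simplify the infrastructure needed for this computationally intensive task.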

- Evaluation and Deployment: Measuring the improved retrieval performance on a held-out dataset before deploying the fine-tuned model into a production vector database ecosystem.
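The contrastive-learning step can be illustrated with the standard in-batch-negatives objective (often called InfoNCE or multiple-negatives ranking loss). This is a minimal sketch under assumptions. The source does not confirm that the Nemotron recipe uses exactly this loss, a temperature of 0.05, or this normalization, and the function names are hypothetical. The sketch computes only the loss value. A real training run would use a framework such as PyTorch or NVIDIA NeMo to backpropagate it through the encoder.

```python
import math


def l2_normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def in_batch_contrastive_loss(
    queries: list[list[float]],
    positives: list[list[float]],
    temperature: float = 0.05,
) -> float:
    """InfoNCE-style loss with in-batch negatives.

    For query i, positives[i] is the relevant document and every other
    positives[j] in the same batch is treated as an irrelevant one.
    """
    q = [l2_normalize(v) for v in queries]
    p = [l2_normalize(v) for v in positives]
    total = 0.0
    for i, qi in enumerate(q):
        # Similarity of query i to every document in the batch, sharpened by the temperature.
        logits = [sum(a * b for a, b in zip(qi, pj)) / temperature for pj in p]
        # Numerically stable log-sum-exp over the batch.
        m = max(logits)
        log_denominator = m + math.log(sum(math.exp(z - m) for z in logits))
        # Negative log-probability that query i picks its own positive.
        total += log_denominator - logits[i]
    return total / len(q)


# Toy 2-D "embeddings": matched pairs point the same way, mismatched pairs do not.
queries = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[1.0, 0.0], [0.0, 1.0]]
swapped = [[0.0, 1.0], [1.0, 0.0]]
print(in_batch_contrastive_loss(queries, aligned))  # near zero: pairs already close
print(in_batch_contrastive_loss(queries, swapped))  # large: pairs are far apart
```

Minimizing this loss is what "pulls" matched pairs together and "pushes" mismatched ones apart. Larger batches supply more negatives per query, which is one reason this step is GPU-hungry.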

This process moves enterprises beyond generic, off-the-shelf embeddings (such as OpenAI's embedding models or Google's Gemini Embedding 2) toward models that capture company-specific jargon, product names, and internal processes.

Retail & Luxury Implications

For retail and luxury brands, the ability to create hyper-accurate, domain-specific search is a cornerstone of next-generation digital experiences. A fine-tuned embedding model is the engine that could power:

- Precision Product Discovery: A search for "evening bag with chain strap" could reliably retrieve relevant products even when catalog descriptions rely on internal SKU codes or specific material names like "grain de poudre leather," provided the training pairs connect that vocabulary to the way customers actually search. Better discovery can lift conversion rates and reduce customer frustration.

- Enhanced Customer Service AI: A customer chatbot or internal agent assistant could quickly retrieve the exact policy document, care instruction, or inventory record relevant to a conversational query, improving resolution time and accuracy.

- Personalized Styling & Recommendations: By understanding the nuanced relationships between items in a lookbook or across seasonal collections, a fine-tuned model can power more sophisticated "complete the look" or archival product recommendation systems.

- Internal Knowledge Management: Enabling designers, buyers, and retail staff to quickly find past trend reports, supplier information, or visual references (via their text descriptions and metadata) in large internal archives.

The guide is significant because it demystifies a technically complex process. It offers a concrete starting point for AI teams at luxury groups and brands such as LVMH, Kering, or Burberry that want to move from experimental RAG prototypes to robust, production-grade systems that understand the language of luxury.

Implementation Approach & Considerations

Adopting this approach requires a mature data and MLOps foundation. Key steps include:

- Data Pipeline: Establishing a secure pipeline to curate, clean, and label thousands of high-quality query-document pairs from internal systems.

- Technical Stack: Access to NVIDIA GPU infrastructure (like the H100, referenced in our recent coverage of Google's TurboQuant) and familiarity with frameworks like NVIDIA NeMo for model training.

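- Evaluation Framework: Measuring retrieval quality with standard metrics such as mean reciprocal rank (MRR) and recall@k on a held-out set of real in-domain queries, comparing the fine-tuned model against the baseline, and tying the gains to business outcomes such as search conversion or support resolution time.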

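- Integration: Deploying the new model into existing vector search platforms (e.g., Pinecone, Weaviate, or proprietary solutions) and updating inference pipelines. Vectors from the fine-tuned model are not comparable with those from the old one, so the full document corpus must be re-embedded and re-indexed, and queries must be encoded with the same new model.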
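The two metrics named under Evaluation Framework can be computed in a few lines. This is a minimal sketch. The query and document IDs are hypothetical, and the helper names `recall_at_k`, `reciprocal_rank`, and `evaluate` are illustrative rather than part of any library. Two definitional choices are assumptions. Recall@k here divides by the number of relevant documents, whereas some tools report "hit rate" instead. MRR here is not truncated at k, whereas variants like MRR@10 are. The same `qrels` would be scored once with the baseline model's rankings and once with the fine-tuned model's.

```python
def recall_at_k(ranked_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of a query's relevant documents that appear in its top-k results."""
    if not relevant_ids:
        return 0.0
    hits = len(set(ranked_ids[:k]) & relevant_ids)
    return hits / len(relevant_ids)


def reciprocal_rank(ranked_ids: list[str], relevant_ids: set[str]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(ranked_ids, start=1):
        if doc_id in relevant_ids:
            return 1.0 / rank
    return 0.0


def evaluate(run: dict[str, list[str]], qrels: dict[str, set[str]], k: int = 10) -> dict[str, float]:
    """Average recall@k and MRR over every query that has relevance judgments.

    run:   query id -> document ids ranked by the retriever (best first)
    qrels: query id -> set of document ids judged relevant
    """
    recalls = [recall_at_k(run.get(qid, []), rel, k) for qid, rel in qrels.items()]
    rrs = [reciprocal_rank(run.get(qid, []), rel) for qid, rel in qrels.items()]
    n = len(qrels)
    return {f"recall@{k}": sum(recalls) / n, "mrr": sum(rrs) / n}


# Hypothetical held-out judgments and retriever output.
qrels = {"q1": {"d3"}, "q2": {"d7", "d9"}}
run = {"q1": ["d5", "d3", "d1"], "q2": ["d9", "d2", "d8"]}
print(evaluate(run, qrels, k=2))  # → {'recall@2': 0.75, 'mrr': 0.75}
```

Keeping the held-out queries separate from the training pairs matters. Otherwise the fine-tuned model is scored on examples it has already seen, which inflates both metrics.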

The effort is non-trivial but offers a clear path to competitive advantage through superior AI-driven search and knowledge retrieval.