What the Research Found

A new study titled "Do Reasoning Models Enhance Embedding Models?" (arXiv:2601.21192) delivers a counterintuitive finding: training large language models (LLMs) to excel at complex reasoning tasks, such as math and logic puzzles, does not improve their performance when they are adapted into embedding models for general-purpose semantic search or question answering.

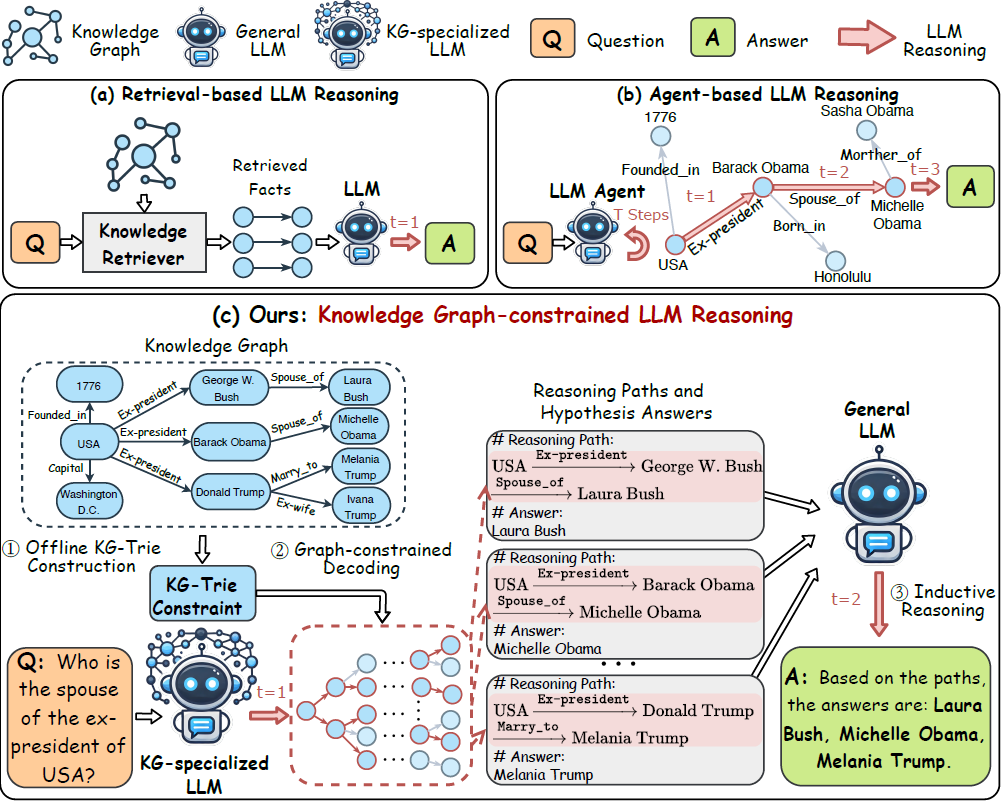

Researchers took base LLMs and their "reasoning-enhanced" counterparts (models fine-tuned with chain-of-thought or similar reasoning datasets) and converted both into embedding models. These models transform text into dense vector representations (embeddings) in which semantic similarity is measured by vector distance. This is the core technology behind modern search and retrieval-augmented generation (RAG) systems.
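To make "semantic similarity is measured by vector distance" concrete, here is a minimal sketch of ranking documents against a query by cosine similarity. The three-dimensional vectors are made-up toy values rather than outputs of any real model, and the function names are illustrative only.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def rank_documents(query_vec, doc_vecs):
    """Return document indices sorted from most to least similar to the query."""
    scores = [cosine_similarity(query_vec, d) for d in doc_vecs]
    return sorted(range(len(doc_vecs)), key=lambda i: scores[i], reverse=True)

# Toy embeddings (hypothetical values, not produced by a real model).
query = [1.0, 0.0, 1.0]
docs = [
    [0.9, 0.1, 0.8],  # points in nearly the same direction as the query
    [0.0, 1.0, 0.0],  # orthogonal to the query
    [0.5, 0.5, 0.5],  # partially aligned
]
ranking = rank_documents(query, docs)
print(ranking)
```

In a real retrieval system the vectors would have hundreds or thousands of dimensions, and the ranking would typically be computed with an approximate nearest-neighbor index rather than a full scan.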

Key Results: No Performance Gain

When evaluated on standard benchmarks for retrieval and general question answering, the reasoning-enhanced models showed no measurable improvement over their base counterparts. Although the reasoning-enhanced models performed better on dedicated reasoning tasks, that capability did not transfer to producing better general-purpose embeddings.

The researchers employed a novel diagnostic framework called Hierarchical Representation Similarity Analysis (HRSA) to analyze the internal representations of both model types. This technique allowed them to compare the models' "thought maps" (how each model organizes information internally) across different layers and abstraction levels.

How They Tested It

The experimental setup was straightforward:

- Base vs. Reasoning Models: Start with identical base LLMs (e.g., LLaMA or Mistral architectures). Create reasoning variants through additional training on datasets requiring step-by-step reasoning.

- Embedding Conversion: Apply the same adaptation procedure (typically adding a pooling layer and fine-tuning with a contrastive loss) to convert both the base and reasoning models into embedding models.

- Benchmark Evaluation: Test both embedding models on standard retrieval benchmarks (like MTEB) and general QA tasks.

- Internal Analysis: Use HRSA to compare the internal representations of both models at different layers.
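The embedding conversion step in the list above can be sketched in a few lines. This is a minimal NumPy illustration of two common ingredients, mean pooling over token hidden states and an in-batch contrastive (InfoNCE-style) loss. It is an assumption about what a "typical" adaptation looks like, not the paper's exact recipe; the function names and the temperature value are hypothetical.

```python
import numpy as np

def mean_pool(token_states, attention_mask):
    """Average token hidden states into one vector per text, ignoring padding.

    token_states: array of shape (batch, seq_len, dim)
    attention_mask: array of shape (batch, seq_len), 1 for real tokens, 0 for padding
    """
    mask = attention_mask[..., None].astype(float)
    summed = (token_states * mask).sum(axis=1)
    counts = np.clip(mask.sum(axis=1), 1e-9, None)  # avoid division by zero
    return summed / counts

def info_nce_loss(queries, positives, temperature=0.05):
    """In-batch contrastive loss: query i should match positive i.

    The other positives in the batch serve as negatives for query i.
    """
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    logits = q @ p.T / temperature
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))

# Pooling example: the third token is padding and must not affect the result.
states = np.array([[[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]])
mask = np.array([[1, 1, 0]])
pooled = mean_pool(states, mask)

# Loss example: correctly paired embeddings should score lower than mispaired ones.
aligned_loss = info_nce_loss(np.eye(3), np.eye(3))
shuffled_loss = info_nce_loss(np.eye(3), np.eye(3)[[1, 2, 0]])
```

In practice the loss would be minimized with a deep learning framework so that gradients flow back into the LLM's weights; the NumPy version only shows what quantity is being optimized.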

HRSA revealed why the transfer fails: reasoning training reorganizes local neighborhoods in the representation space (how specific concepts relate to each other), but it preserves the global semantic structure. The overall "map" of how language concepts are organized remains nearly identical to that of the base model.
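The distinction between local neighborhoods and global structure can be illustrated with two generic measurements. The sketch below is not the paper's HRSA implementation: it uses k-nearest-neighbor overlap as a stand-in for local structure and the correlation between pairwise-distance matrices (a classic representational similarity measure) as a stand-in for global structure. The data is synthetic, and all names and parameters are assumptions chosen for illustration.

```python
import numpy as np

def pairwise_distances(X):
    """Euclidean distance between every pair of rows in X."""
    sq = (X ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    return np.sqrt(np.clip(d2, 0.0, None))

def knn_overlap(X, Y, k=5):
    """Local measure: average fraction of shared k nearest neighbors per item."""
    dx, dy = pairwise_distances(X), pairwise_distances(Y)
    np.fill_diagonal(dx, np.inf)  # an item is not its own neighbor
    np.fill_diagonal(dy, np.inf)
    nx = np.argsort(dx, axis=1)[:, :k]
    ny = np.argsort(dy, axis=1)[:, :k]
    return float(np.mean([len(set(a) & set(b)) / k for a, b in zip(nx, ny)]))

def rdm_correlation(X, Y):
    """Global measure: Pearson correlation between the two pairwise-distance matrices."""
    iu = np.triu_indices(X.shape[0], k=1)  # each unordered pair once
    return float(np.corrcoef(pairwise_distances(X)[iu], pairwise_distances(Y)[iu])[0, 1])

# Synthetic stand-ins for two models' embeddings of the same 100 texts:
# both share the same 5 topic clusters (global structure) but place items
# differently inside each cluster (local structure).
rng = np.random.default_rng(0)
centers = rng.normal(scale=5.0, size=(5, 16))
labels = np.repeat(np.arange(5), 20)
base = centers[labels] + rng.normal(scale=0.5, size=(100, 16))
variant = centers[labels] + rng.normal(scale=0.5, size=(100, 16))

local_score = knn_overlap(base, variant, k=5)
global_score = rdm_correlation(base, variant)
print(f"local kNN overlap: {local_score:.2f}, global RDM correlation: {global_score:.2f}")
```

On this toy data the global score comes out high while the local score stays low, which mirrors the qualitative pattern the article describes: neighborhoods can be rearranged while the overall layout stays put.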

Why This Matters

This finding has immediate practical implications for AI engineering:

- Resource Allocation: Organizations investing heavily in reasoning training for models intended for retrieval or general QA may be wasting compute. The study suggests that reasoning ability and embedding quality are largely orthogonal.

- Model Selection: When building embedding models for search or RAG systems, this study found no evidence that starting with a reasoning-enhanced base model provides an advantage.

- Understanding Capability Transfer: The research challenges the assumption that improvements in one cognitive capability (reasoning) automatically enhance others (semantic understanding). It suggests these may be more modular than previously thought.

The paper concludes that the "massive effort spent teaching models to think through problems step-by-step does not automatically give them a better 'gut feeling' for general language similarity."