Key Takeaways

- Epoch AI estimates Nvidia's B200 GPU costs $5,700–$7,300 to produce, with HBM memory and advanced packaging accounting for two-thirds of the cost.

- At a $30k–$40k sale price, chip-level gross margins reach ~82%, though rack-scale margins may be lower.

What the Analysis Reveals

Epoch AI published a detailed bill-of-materials (BOM) analysis estimating that Nvidia's B200 GPU—the flagship chip of the Blackwell microarchitecture—costs between $5,700 and $7,300 to manufacture. At a reported sale price of $30,000 to $40,000 per chip, this implies a chip-level gross margin of approximately 82% for Nvidia.

The analysis, released on April 26, 2026, models variable costs only—excluding fixed costs like R&D—and uses probability distributions for uncertain inputs such as die yield, HBM pricing, and packaging costs.
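
Because the model covers variable costs only, the chip-level margin is a simple ratio of price minus unit cost over price. The minimal sketch below reruns that arithmetic on the ranges quoted above. Using simple midpoints as a "typical" case is an assumption of this sketch, not necessarily how Epoch derives its headline figure.

```python
def gross_margin(price: float, unit_cost: float) -> float:
    """Gross margin as a fraction of the sale price."""
    if price <= 0:
        raise ValueError("price must be positive")
    return (price - unit_cost) / price

# Figures quoted in the article (USD per chip).
COST_LOW, COST_HIGH = 5_700, 7_300
PRICE_LOW, PRICE_HIGH = 30_000, 40_000

# Assumption: simple midpoints stand in for a "typical" cost and price.
cost_mid = (COST_LOW + COST_HIGH) / 2
price_mid = (PRICE_LOW + PRICE_HIGH) / 2

worst_case = gross_margin(PRICE_LOW, COST_HIGH)   # lowest price, highest cost
best_case = gross_margin(PRICE_HIGH, COST_LOW)    # highest price, lowest cost
central = gross_margin(price_mid, cost_mid)

print(f"worst case: {worst_case:.1%}")
print(f"central:    {central:.1%}")
print(f"best case:  {best_case:.1%}")
```

Under these inputs the margin spans roughly 76% to 86%, and the midpoint case lands close to the ~82% quoted above.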

Key Numbers

| Component | Estimated cost | Share of total |
| --- | --- | --- |
| Logic die fabrication (two ~800 mm² chiplets on TSMC 4NP) | ~$1,700–$2,200 | ~30% |
| HBM3E memory (192 GB) | ~$2,700–$3,300 | ~45% |
| Advanced packaging (CoWoS-L) | ~$1,100–$1,500 | ~20% |
| Auxiliary module components | ~$200–$300 | ~5% |
| Total variable cost | $5,700–$7,300 | 100% |

How the Cost Breaks Down

Logic Die Fabrication

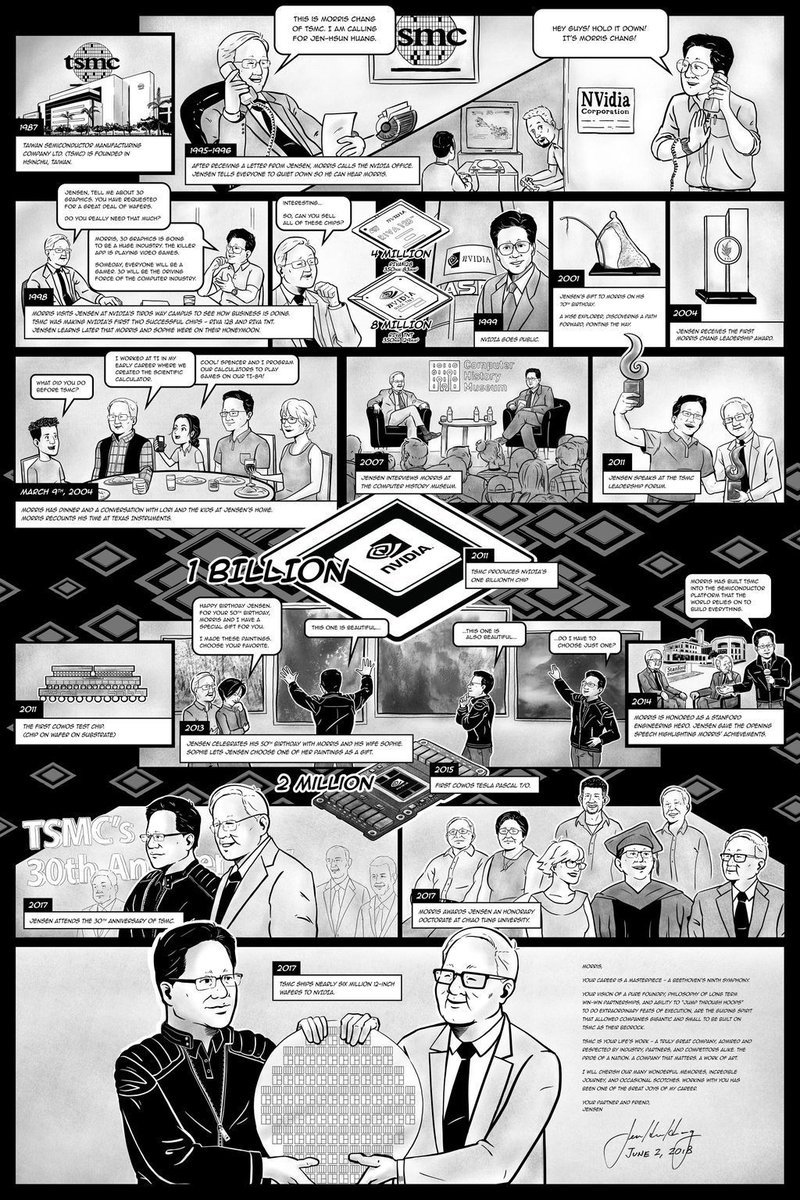

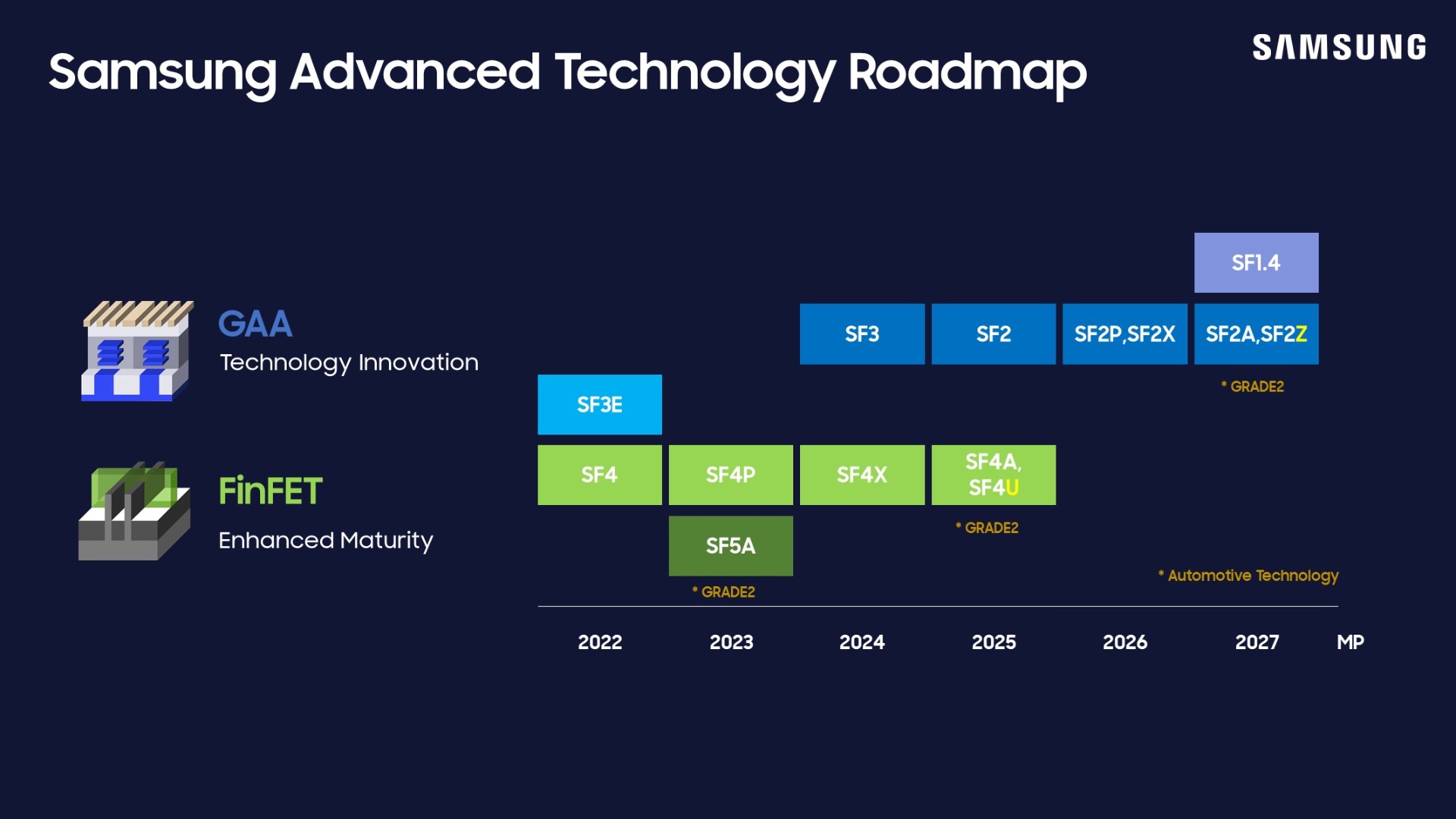

The B200 uses two compute chiplets, each approximately 800 mm², fabricated on TSMC's 4NP process node. Epoch models a 12-inch wafer cost of $17,000 at 4NP and die yield as a triangular distribution between 40% and 70%, centered at 60%. This yield range reflects the difficulty of manufacturing large chiplets at advanced nodes.
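
To make the yield modeling concrete, the sketch below draws die yields from the triangular distribution described above and converts each draw into a cost per good die. The wafer cost and yield parameters come from the article. The gross die count per wafer is a hypothetical placeholder, so the output illustrates the mechanics only and is not calibrated to reproduce Epoch's logic-die figures.

```python
import random
import statistics

WAFER_COST_USD = 17_000                                # from the article
YIELD_LOW, YIELD_MODE, YIELD_HIGH = 0.40, 0.60, 0.70   # from the article

# Assumption (not from the article): gross die candidates per wafer for an
# ~800 mm^2 die. The real count depends on die aspect ratio, edge exclusion,
# and scribe lines.
GROSS_DIES_PER_WAFER = 60

def cost_per_good_die(die_yield: float) -> float:
    """Wafer cost spread over the dies that pass test."""
    return WAFER_COST_USD / (GROSS_DIES_PER_WAFER * die_yield)

def sample_costs(n: int, seed: int = 0) -> list[float]:
    """Draw n die yields from the triangular distribution and cost each one."""
    rng = random.Random(seed)
    return [
        cost_per_good_die(rng.triangular(YIELD_LOW, YIELD_HIGH, YIELD_MODE))
        for _ in range(n)
    ]

costs = sample_costs(10_000)
print(f"median cost per good die: ${statistics.median(costs):,.0f}")
```

Because cost scales with the inverse of yield, the low-yield tail of the distribution pushes cost up faster than the high-yield tail pulls it down.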

HBM Memory

The B200 integrates 192 GB of HBM3E memory, which Epoch prices at $14–$17 per GB, centered at $15. HBM is the single largest cost driver, accounting for roughly 45% of the total. This aligns with industry trends: HBM has become a bottleneck for both cost and supply in high-end AI accelerators.
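
The HBM line item is the most direct one to check, since it is just capacity times price per GB. The short sketch below reproduces it from the figures above. The share calculation uses the $6,400 per-chip cost figure cited elsewhere in this article as its denominator, which is a choice made for this sketch.

```python
HBM_CAPACITY_GB = 192                                       # from the article
PRICE_PER_GB_USD = {"low": 14, "central": 15, "high": 17}   # from the article
TOTAL_COST_USD = 6_400   # per-chip cost figure cited in the article

# Memory cost is capacity multiplied by the per-GB price.
hbm_cost = {
    label: HBM_CAPACITY_GB * price
    for label, price in PRICE_PER_GB_USD.items()
}
share = hbm_cost["central"] / TOTAL_COST_USD

for label, cost in hbm_cost.items():
    print(f"{label:>7}: ${cost:,}")
print(f"central share of total: {share:.0%}")
```

This gives $2,688, $2,880, and $3,264 for the three price points, in line with the ~$2,700–$3,300 range, and a central share of 45%.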

Advanced Packaging

Rather than assigning a direct component-level price, Epoch uses a top-down revenue allocation approach based on TSMC's advanced packaging revenue, CoWoS share, Nvidia's share of CoWoS capacity, and projected Blackwell unit shipments (4.5–5.0 million units). The CoWoS-L packaging process yield is modeled between 65% and 95%. When a package fails, the logic dies are lost, adding to effective cost.
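
The effect of packaging yield on cost can be sketched as follows. This is an illustrative simplification, not Epoch's actual formula: it assumes every packaging attempt consumes both the logic dies and the packaging spend, and that only a fraction of attempts (the yield) produces a sellable package. The dollar inputs are hypothetical placeholders. Only the 65% and 95% yield bounds come from the article.

```python
def cost_per_good_package(logic_dies_usd: float,
                          packaging_usd: float,
                          packaging_yield: float) -> float:
    """Spend per attempt divided by the fraction of attempts that succeed."""
    if not 0 < packaging_yield <= 1:
        raise ValueError("packaging_yield must be in (0, 1]")
    return (logic_dies_usd + packaging_usd) / packaging_yield

# Placeholder inputs for illustration only; these are not Epoch's figures.
LOGIC_DIES_USD = 1_000.0
PACKAGING_USD = 1_000.0

# 0.65 and 0.95 are the article's yield bounds; 0.80 is an arbitrary midpoint.
for y in (0.65, 0.80, 0.95):
    total = cost_per_good_package(LOGIC_DIES_USD, PACKAGING_USD, y)
    scrap = total - (LOGIC_DIES_USD + PACKAGING_USD)
    print(f"yield {y:.0%}: ${total:,.0f} per good package "
          f"(${scrap:,.0f} from scrapped attempts)")
```

The scrap term grows quickly as yield falls, which is why the wide 65%–95% range matters for the overall estimate.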

What This Means in Practice

At an 82% chip-level gross margin, Nvidia's Blackwell generation is highly profitable on a per-chip basis. However, most Blackwell revenue comes from servers and rack-scale systems (e.g., the GB200 NVL72), which include additional components like CPUs, networking, power, and cooling. These systems likely carry lower margins, meaning Nvidia's realized margins on overall Blackwell sales may be below the chip-level estimate.

Context: Nvidia's Pricing Power

This analysis follows a pattern of Nvidia maintaining strong pricing power in the AI accelerator market. The H100, from the preceding Hopper generation, also commanded high margins. The B200's 82% chip-level margin is consistent with Nvidia's historical GPU margins, though the absolute dollar cost per chip is significantly higher due to larger die sizes, more HBM, and more complex packaging.

Epoch's estimate also highlights the growing share of cost attributable to memory and packaging. Together, HBM and advanced packaging account for roughly two-thirds of the B200's production cost. This concentration creates supply chain risk: any disruption in HBM supply (dominated by SK Hynix, Samsung, and Micron) or CoWoS capacity (controlled by TSMC) directly impacts Nvidia's ability to ship Blackwell units.

Limitations of the Analysis

The BOM model is based on publicly available data, analyst estimates, and teardown-based pricing. Many inputs, such as exact die yield, HBM contract pricing, and packaging costs, are not publicly disclosed. Epoch models these as probability distributions, so the $5,700–$7,300 range reflects plausible variability rather than a single point estimate. The analysis also excludes fixed costs (R&D, software, sales) and assumes no losses beyond the modeled die and packaging yields.

Frequently Asked Questions

What is Nvidia's B200 GPU?

The B200 is Nvidia's flagship AI accelerator built on the Blackwell microarchitecture. It uses two compute chiplets fabricated on TSMC's 4NP process and integrates 192 GB of HBM3E memory, targeting training and inference for large-scale AI models.

How much does it cost to produce a B200?

Epoch AI estimates the variable manufacturing cost at $5,700 to $7,300 per chip, with HBM memory and advanced packaging accounting for roughly two-thirds of the total.

What is the gross margin on the B200?

At a reported sale price of $30,000 to $40,000 per chip, the chip-level gross margin is approximately 82%. However, realized margins on full server systems may be lower.

Why is HBM memory so expensive in the B200?

The B200 uses 192 GB of HBM3E, which costs an estimated $14–$17 per GB. HBM is a premium memory technology with complex manufacturing and limited supply, making it the single largest cost component in the chip.

gentic.news Analysis

This BOM analysis from Epoch AI provides rare granularity into Nvidia's cost structure for Blackwell, a product we've covered extensively. Our knowledge graph shows Nvidia has appeared in 23 articles this week alone (191 total), with recent coverage spanning silicon photonics research, partnerships with Google Cloud, and open-sourcing robot motion models. The B200 cost breakdown fits into a larger narrative: Nvidia's AI hardware dominance is sustained by TSMC's advanced packaging capacity and HBM supply, both of which are constrained.

Notably, the 82% chip-level margin is impressive but masks a key risk. As we reported on April 22, SemiAnalysis noted that customer feedback is driving Nvidia toward disaggregated inference architectures, which could shift demand away from monolithic high-margin chips like the B200 toward more modular, potentially lower-margin systems. The B200's high absolute price ($30k–$40k) also invites competition: AMD's MI300X and Cerebras's wafer-scale chips target similar workloads at potentially different price points.

The concentration of cost in HBM (45%) and packaging (20%) also explains recent industry moves. Nvidia's investment in silicon photonics, which we covered on April 22, aims to reduce data center interconnect costs. Meanwhile, TSMC's CoWoS capacity expansion is critical to Nvidia's ability to ship the 4.5–5.0 million Blackwell units Epoch projects. Any delay in CoWoS ramp would directly cap Nvidia's revenue, regardless of chip-level margins.

Finally, the roughly $6,400 central cost estimate, while high in absolute terms, is a fraction of the chip's selling price. This margin structure gives Nvidia substantial room to adjust pricing if competition intensifies, while still funding the R&D for next-generation architectures like Vera Rubin, which our knowledge graph shows Nvidia has already developed.