In a significant development for the AI hardware landscape, Groq, recently acquired by industry giant Nvidia, has sharply expanded its manufacturing partnership with Samsung. According to a report from AI commentator Rohan Paul, the company has raised its wafer order with Samsung from 9,000 to 30,000 wafers, a more than threefold increase (roughly 3.3x) in ordered volume.

The Partnership Expansion Details

The original report indicates that Groq, now operating under Nvidia's corporate umbrella, is "massively expanding its partnership with Samsung" through this substantial order increase. While specific technical details about the chips weren't provided in the brief report, the scale of the production ramp-up suggests confidence in both current demand and future market positioning.

This expansion comes shortly after Nvidia's acquisition of Groq, a company known for developing specialized AI inference processors that compete in the same space as Nvidia's own GPUs. The timing suggests Nvidia is moving quickly to integrate and scale Groq's technology following the acquisition.

Groq's Technology and Market Position

Groq has distinguished itself in the AI hardware market with its tensor streaming processors (TSPs), which the company now markets as Language Processing Units (LPUs) and which are designed specifically for high-performance AI inference workloads. Unlike general-purpose GPUs, Groq's architecture focuses on deterministic performance and low latency, making it particularly suitable for real-time AI applications.

Prior to the Nvidia acquisition, Groq had positioned itself as an alternative to traditional GPU providers for inference tasks. The company's technology has been particularly noted for its efficiency in running large language models and other transformer-based architectures that dominate contemporary AI applications.

Strategic Implications for Nvidia

Nvidia's acquisition of Groq and the subsequent manufacturing expansion represent a strategic move to consolidate its position in the AI hardware market. While Nvidia's GPUs dominate AI training, the inference market has seen increased competition from specialized processors like those developed by Groq.

By acquiring Groq rather than competing directly, Nvidia gains immediate access to specialized inference technology and expertise. The rapid production scale-up suggests Nvidia sees immediate market opportunities for Groq's technology, potentially as part of a diversified AI hardware portfolio.

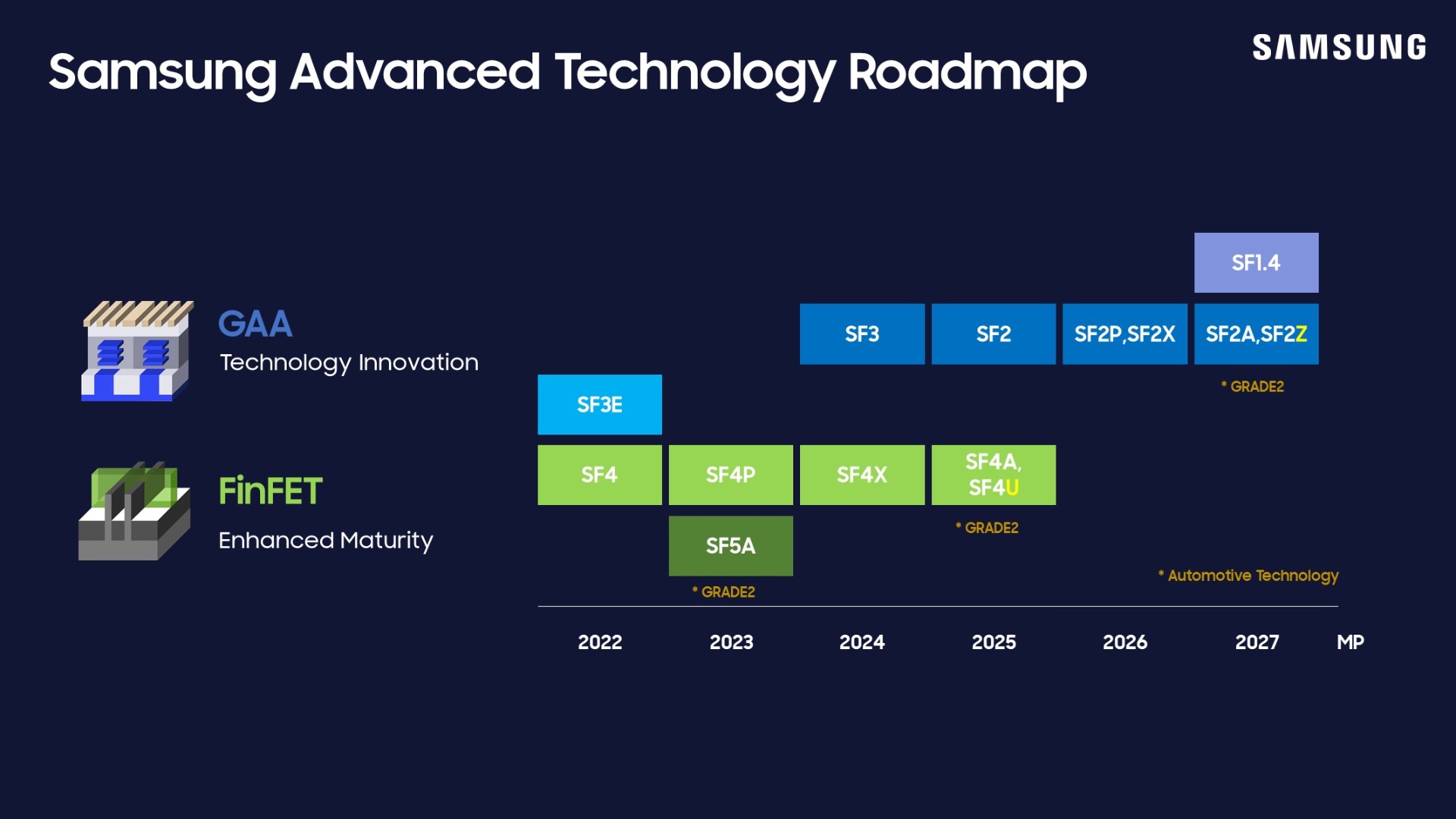

The Samsung Manufacturing Relationship

Samsung's role as the manufacturing partner for Groq's chips is significant in the context of global semiconductor production. Samsung Foundry competes directly with TSMC in advanced semiconductor manufacturing, and securing large orders from AI hardware companies is an important validation of its process technology.

The increased order volume—from 9,000 to 30,000 wafers—represents not just increased production but potentially a longer-term commitment to Samsung's manufacturing capabilities. This could have implications for the balance of power in semiconductor manufacturing, particularly as AI chip demand continues to grow.
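The figures quoted in the report can be checked with a few lines of arithmetic. The sketch below is illustrative only: the variable names are hypothetical, and the two wafer counts are the only values taken from the report. It says nothing about chips per wafer or yield, which the report does not give.

```python
# Wafer counts as quoted in the report (wafers, not finished chips).
previous_order = 9_000
expanded_order = 30_000

# Absolute and relative size of the increase.
additional_wafers = expanded_order - previous_order
growth_multiple = expanded_order / previous_order
percent_increase = additional_wafers / previous_order * 100

print(f"Additional wafers: {additional_wafers:,}")    # 21,000
print(f"Growth multiple: {growth_multiple:.2f}x")     # 3.33x
print(f"Percent increase: {percent_increase:.0f}%")   # 233%
```

In other words, the new order is about 3.3 times the old one, which matches the "more than threefold" description, and the increase alone (21,000 wafers) is more than twice the size of the original order.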

Market Context and Competitive Landscape

The AI hardware market has become increasingly competitive, with multiple companies developing specialized processors for different aspects of AI workloads. While Nvidia maintains dominance in training, companies like AMD, Intel, and various startups have been targeting the inference market where Groq operates.

This production expansion comes amid reports of supply constraints for AI chips across the industry. The ability to secure manufacturing capacity at this scale suggests Nvidia is leveraging its market position and resources to ensure Groq's technology reaches the market quickly.

Potential Applications and Impact

Groq's processors are particularly suited for applications requiring real-time inference with predictable latency. These include:

- Large language model deployment and serving

- Autonomous vehicle systems

- Real-time recommendation engines

- Edge AI applications

- Scientific computing and simulation

The increased production capacity could accelerate adoption of these specialized processors in production environments, potentially offering performance advantages over more general-purpose hardware for specific use cases.

Future Outlook and Industry Implications

The scale of this manufacturing expansion suggests Nvidia has significant plans for Groq's technology within its broader AI hardware ecosystem. Possible developments could include:

- Integration of Groq technology into Nvidia's existing product lines

- Development of hybrid systems combining training and inference optimization

- Expansion into new market segments where inference performance is critical

- Potential licensing or partnership arrangements with other hardware manufacturers

As AI models continue to grow in size and complexity, specialized hardware for efficient inference becomes increasingly important. Nvidia's move to scale Groq's production indicates recognition of this trend and a strategic response to capture value across the entire AI workflow.

Source: Rohan Paul on X (formerly Twitter) reporting on Groq's partnership expansion with Samsung.