AI researcher Omar Sar has shared his current "favorite way to consume podcasts"—a fully automated pipeline that transforms YouTube podcast content into interactive knowledge artifacts using AI agents. The system employs Claude Opus 4.7 for insight extraction and analysis, ElevenLabs Scribe for speaker diarization, and generates plain HTML/JS artifacts that form a self-improving wiki for agentic research.

Key Takeaways

- AI researcher Omar Sar automated podcast consumption using an Opus 4.7 agent that extracts insights, generates analysis, and builds interactive HTML/JS artifacts.

- The system creates a self-improving knowledge wiki for agentic research workflows.

What the System Does

Sar's workflow begins with a YouTube podcast as input. The system uses ElevenLabs Scribe to handle speaker diarization—identifying who says what throughout the conversation. The core analysis is performed by a Claude Opus 4.7 agent that:

- Spots important insights from the conversation

- Performs deep analysis of the content

- Generates thought-provoking observations that prompt further research
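Diarization is what lets the agent attribute each insight to the right speaker. Below is a minimal sketch of turning word-level diarized output into a speaker-labeled transcript the agent can read. It assumes each word arrives as a dict with `text`, `start`, `end`, `type`, and `speaker_id` fields, loosely modeled on a diarized speech-to-text response; check the Scribe documentation for the actual schema. `Turn`, `group_into_turns`, and `format_transcript` are hypothetical names, not part of Sar's published code.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    """One uninterrupted stretch of speech by a single speaker."""
    speaker: str
    start: float
    end: float
    words: list[str] = field(default_factory=list)

    @property
    def text(self) -> str:
        return " ".join(self.words)


def group_into_turns(words: list[dict]) -> list[Turn]:
    """Merge consecutive word entries that share a speaker label into turns.

    ASSUMPTION: entries look like {"text", "start", "end", "type", "speaker_id"}.
    """
    turns: list[Turn] = []
    for w in words:
        if w.get("type", "word") != "word":
            continue  # skip spacing and audio-event entries
        speaker = w.get("speaker_id") or "unknown"
        if turns and turns[-1].speaker == speaker:
            turns[-1].words.append(w["text"])
            turns[-1].end = w["end"]
        else:
            turns.append(Turn(speaker, w["start"], w["end"], [w["text"]]))
    return turns


def format_transcript(turns: list[Turn]) -> str:
    """Render turns as '[mm:ss] speaker: text' lines for the analysis agent."""
    lines = []
    for t in turns:
        minutes, seconds = divmod(int(t.start), 60)
        lines.append(f"[{minutes:02d}:{seconds:02d}] {t.speaker}: {t.text}")
    return "\n".join(lines)
```

Grouping into turns keeps the prompt compact and makes "who said what" explicit, which the agent needs to attribute claims correctly.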

"The agent spots important insights, does deep analysis, and generates thought-provoking observations that really get me curious to research further," Sar explains in his post.

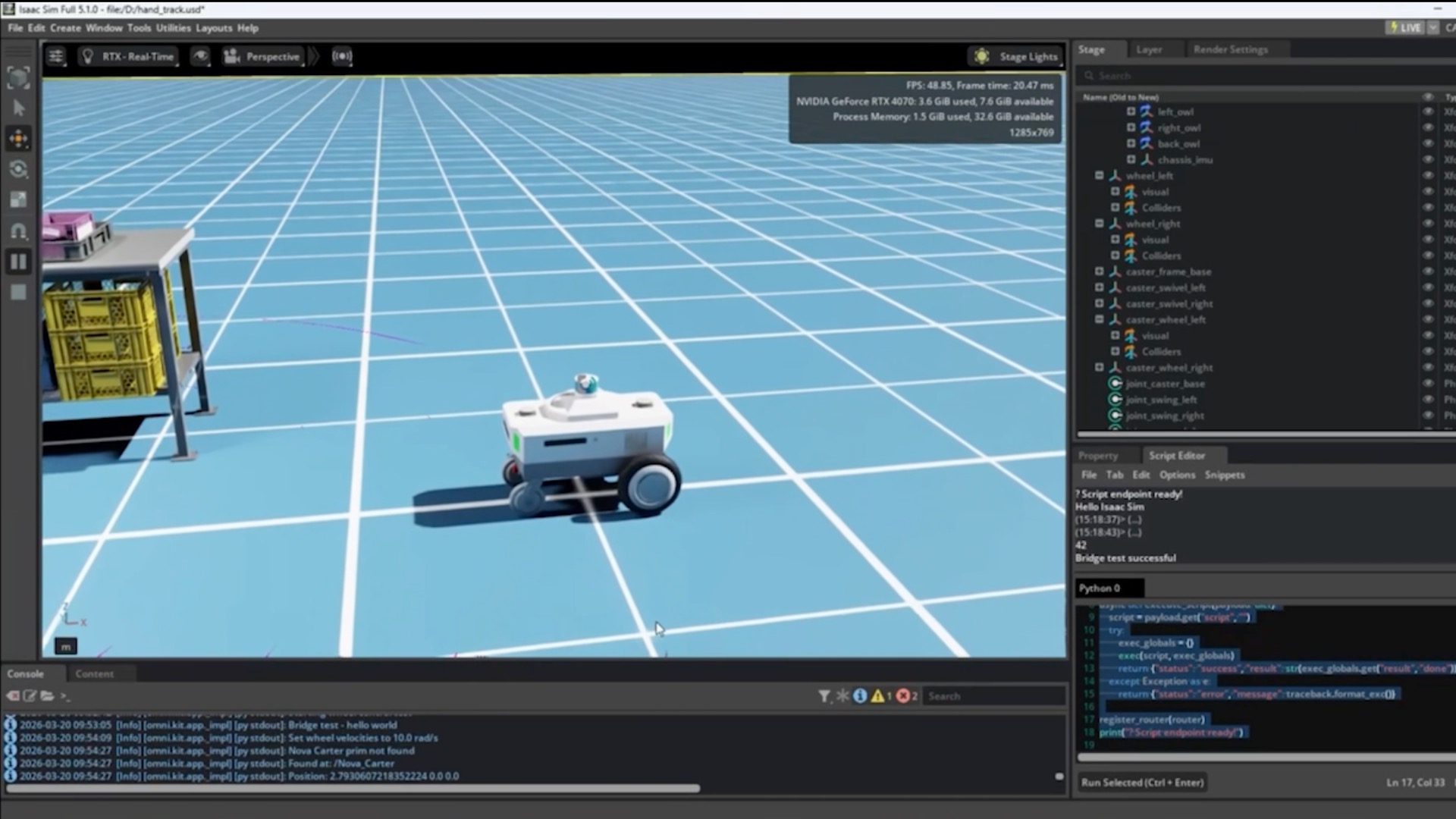

The Knowledge Artifact Output

The system generates what Sar calls "knowledge artifacts"—interactive documents built with plain HTML and JavaScript. These artifacts can include:

- Text analysis with highlighted insights

- Interactive charts using libraries like Chart.js

- Research prompts that encourage deeper investigation
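To make the HTML/JS output concrete, here is a hedged sketch of how a script might render one artifact as a single standalone page: escaped insight text in a highlighted list, plus a Chart.js bar chart loaded from the jsDelivr CDN. `render_artifact` and the shape of its `chart` dict are illustrative assumptions; Sar has not published his templates.

```python
import html
import json


def render_artifact(title: str, insights: list[str], chart: dict) -> str:
    """Render a standalone HTML/JS knowledge artifact.

    ASSUMPTION: `chart` is a hypothetical {"label", "labels", "values"} dict
    that becomes a Chart.js bar chart config.
    """
    items = "\n".join(
        f'    <li class="insight">{html.escape(s)}</li>' for s in insights
    )
    config = {
        "type": "bar",
        "data": {
            "labels": chart["labels"],
            "datasets": [{"label": chart["label"], "data": chart["values"]}],
        },
    }
    # JSON is valid JavaScript; escape "</" so no label can close the <script> tag
    config_js = json.dumps(config).replace("</", "<\\/")
    return f"""<!DOCTYPE html>
<html>
<head>
  <meta charset="utf-8">
  <title>{html.escape(title)}</title>
  <script src="https://cdn.jsdelivr.net/npm/chart.js"></script>
  <style>.insight {{ background: #fff3b0; }}</style>
</head>
<body>
  <h1>{html.escape(title)}</h1>
  <ul>
{items}
  </ul>
  <canvas id="chart"></canvas>
  <script>
    new Chart(document.getElementById("chart"), {config_js});
  </script>
</body>
</html>
"""
```

Because the page is one self-contained file, it can be opened directly in a browser or dropped into any static host.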

All generated research feeds into what Sar describes as "a self-improving wiki for later use by any of my agents." This creates a compounding knowledge base where each analysis builds upon previous work.
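One simple way to get this compounding behavior is a file-based store. The sketch below assumes a hypothetical layout, with one HTML file per artifact plus an `index.json` of summaries, and uses naive keyword overlap for retrieval. A real setup might use embeddings or an agent-maintained index instead. Nothing here reflects how Sar's wiki is actually stored.

```python
from __future__ import annotations

import json
import re
from pathlib import Path


class Wiki:
    """Hypothetical file-based wiki: one HTML file per artifact plus index.json."""

    def __init__(self, root: str | Path):
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)
        self.index_path = self.root / "index.json"

    def _load_index(self) -> list[dict]:
        if self.index_path.exists():
            return json.loads(self.index_path.read_text(encoding="utf-8"))
        return []

    def add(self, slug: str, html_text: str, summary: str,
            parent: str | None = None) -> None:
        """Store or replace an artifact; `parent` links follow-ups to their source."""
        (self.root / f"{slug}.html").write_text(html_text, encoding="utf-8")
        index = [e for e in self._load_index() if e["slug"] != slug]
        index.append({"slug": slug, "summary": summary, "parent": parent})
        self.index_path.write_text(json.dumps(index, indent=2), encoding="utf-8")

    def search(self, query: str, limit: int = 3) -> list[dict]:
        """Rank entries by how many query words appear in their summary."""
        terms = set(re.findall(r"\w+", query.lower()))
        scored = []
        for entry in self._load_index():
            words = set(re.findall(r"\w+", entry["summary"].lower()))
            score = len(terms & words)
            if score:
                scored.append((score, entry))
        # Stable sort: ties keep insertion order
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [entry for _, entry in scored[:limit]]
```

Plain files and JSON keep the store readable by any later agent or script without a database dependency.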

Interactive Research Capabilities

A key feature demonstrated in Sar's accompanying clip is the ability to "go further on anything by selecting text and components (charts) and doing deeper research." This creates a feedback loop where:

- The initial agent analysis identifies areas worth exploring

- Users can select any element for deeper investigation

- Subsequent research enriches the knowledge base

- Future agents have more context for better analysis
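These four steps amount to a small loop. The sketch below assumes the wiki is an in-memory list of `{slug, summary, parent}` dicts and that `run_agent` is any callable that sends a prompt to a model and returns a summary. Both `deepen` and `build_followup_prompt` are hypothetical helpers that illustrate the pattern, not Sar's implementation.

```python
from typing import Callable


def build_followup_prompt(selection: str, source_slug: str,
                          related: list[dict]) -> str:
    """Turn a user's highlighted text into a follow-up research task."""
    context = "\n".join(f"- {e['slug']}: {e['summary']}" for e in related) or "- (none yet)"
    return (
        f"The user selected this passage from artifact '{source_slug}':\n"
        f'"""{selection}"""\n\n'
        f"Related wiki entries:\n{context}\n\n"
        "Research this passage in more depth, avoid repeating what the related "
        "entries already cover, and reply with a short summary for the wiki."
    )


def deepen(selection: str, source_slug: str, wiki: list[dict],
           run_agent: Callable[[str], str]) -> dict:
    """One pass of the loop: select, research, then store back into the wiki."""
    # Context for the agent: the source artifact plus earlier follow-ups on it
    related = [e for e in wiki
               if e["slug"] == source_slug or e.get("parent") == source_slug]
    summary = run_agent(build_followup_prompt(selection, source_slug, related))
    entry = {
        "slug": f"{source_slug}-followup-{len(related)}",
        "summary": summary,
        "parent": source_slug,
    }
    wiki.append(entry)  # later agents now see this research as context
    return entry
```

Each call both reads from and writes to the wiki, so the second follow-up on the same artifact already sees the first one in its prompt.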

Technical Implementation

The pipeline combines four components:

- Claude Opus 4.7: Anthropic's most capable model for complex reasoning and analysis tasks

- ElevenLabs Scribe: Speech-to-text transcription with speaker diarization

- Custom scripts: Sar mentions using "skills and scripts to guide the artifact generation"

- HTML/JS output: Plain web technologies for maximum compatibility and interactivity
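For the analysis step itself, here is a minimal sketch using the Anthropic Python SDK's Messages API (`client.messages.create`). The model ID `claude-opus-4-7`, the system prompt, and the `skill_notes` parameter (a stand-in for Sar's "skills and scripts") are assumptions for illustration. The client is passed in so the function can be exercised without network access.

```python
MODEL = "claude-opus-4-7"  # ASSUMPTION: placeholder model ID; verify against Anthropic's model list

SYSTEM_PROMPT = (
    "You analyze podcast transcripts with speaker labels. Identify the most "
    "important insights, analyze them in depth, and end with open questions "
    "worth researching further."
)


def build_request(transcript: str, skill_notes: str = "") -> dict:
    """Assemble keyword arguments for a Messages API call.

    `skill_notes` is a hypothetical stand-in for guidance files; here it is
    simply appended to the system prompt.
    """
    system = SYSTEM_PROMPT + (f"\n\n{skill_notes}" if skill_notes else "")
    return {
        "model": MODEL,
        "max_tokens": 4096,
        "system": system,
        "messages": [
            {"role": "user", "content": f"<transcript>\n{transcript}\n</transcript>"}
        ],
    }


def analyze(client, transcript: str, skill_notes: str = "") -> str:
    """Run one analysis pass; `client` is expected to be anthropic.Anthropic()."""
    response = client.messages.create(**build_request(transcript, skill_notes))
    # The reply is a list of content blocks; keep only the text ones
    return "".join(block.text for block in response.content if block.type == "text")
```

In practice you would call `analyze(anthropic.Anthropic(), transcript)` with an API key in the `ANTHROPIC_API_KEY` environment variable, passing the speaker-labeled transcript produced by the diarization step.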

Sar notes that he expects "more people to use agents in this way" as AI capabilities improve and multimodal understanding becomes more sophisticated.

gentic.news Analysis

This development represents a natural evolution in the agentic AI workflow space that we've been tracking closely. Sar's approach builds directly on trends we identified in our December 2025 coverage of "AI Research Assistants That Actually Finish the Job," where we noted the shift from passive information retrieval to active knowledge synthesis.

The use of Claude Opus 4.7 for this task is particularly noteworthy. This follows Anthropic's February 2026 release of Opus 4.7, which specifically improved on complex document analysis and multi-step reasoning capabilities. Sar's implementation puts these claimed improvements to a real-world test in a pipeline that spans audio transcription and text analysis.

What makes this workflow significant isn't any single component, but the integration of diarization (ElevenLabs), analysis (Claude), and interactive output (HTML/JS) into a cohesive system. This aligns with the broader industry trend toward "AI operating systems" that we discussed in our January 2026 analysis of Devin and other autonomous coding agents. The self-improving wiki aspect is especially clever—it turns what could be a one-off analysis into a compounding knowledge asset.

Practitioners should pay attention to two aspects: First, the choice of plain HTML/JS for artifacts ensures maximum longevity and portability—no proprietary formats that might become obsolete. Second, the interactive research loop (select text → deeper research) addresses a key limitation of current AI systems: they can analyze but often don't know what questions to ask next. By letting the human guide follow-up research while the AI handles the initial synthesis, Sar has created a practical human-AI collaboration pattern that could be adapted to many domains beyond podcast analysis.

Frequently Asked Questions

What AI models does Omar Sar use for his podcast analysis pipeline?

Sar uses Claude Opus 4.7 for insight extraction and deep analysis, and ElevenLabs Scribe for speaker diarization (identifying who is speaking when). The system generates output in plain HTML and JavaScript, making the knowledge artifacts portable and interactive.

How does the "self-improving wiki" feature work?

All research generated by the AI agents gets stored in a knowledge base that subsequent agents can access and build upon. When a user selects text or charts for deeper research, those investigations also feed back into the wiki. This creates a compounding effect where the system's understanding improves over time as more content is processed.

Can this system work with podcasts from platforms other than YouTube?

While Sar specifically mentions YouTube podcasts, the underlying architecture likely works with any audio or video source that can be transcribed. ElevenLabs Scribe handles the audio processing, and the Claude agent works from the transcribed text, so the source platform matters less than audio quality and how clearly the speakers can be told apart.

What are the main advantages of generating HTML/JS artifacts instead of using a proprietary format?

Plain HTML and JavaScript ensure maximum compatibility—the artifacts can be viewed in any web browser, hosted anywhere, and will remain accessible even if specific tools or platforms change. The interactive elements (like Chart.js visualizations) also allow users to engage with the analysis rather than just reading static text.

Is this system fully automated or does it require human guidance?

The initial analysis is automated, but Sar emphasizes the interactive research capability where users can "go further on anything by selecting text and components." This creates a collaborative workflow where the AI identifies interesting areas and the human decides which threads to pull for deeper investigation, with the AI then assisting in that follow-up research.