A new open-source project, Perspective Intelligence Web, is a browser-based client that lets any device on a local network use Apple's on-device AI models running on a Mac. Released under the MIT license, it addresses a specific gap for privacy-conscious users: Apple's local models keep data on the device, but only Apple hardware can run them.

The Privacy Paradox in Modern AI

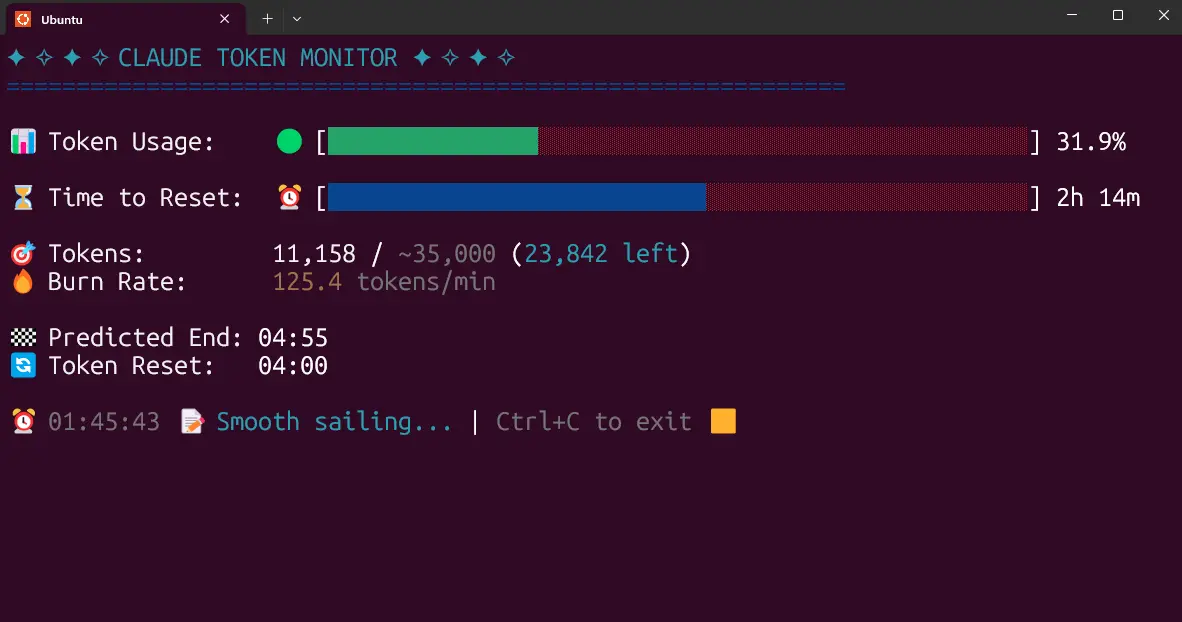

The project's creator, Taylor Arndt, described a common dilemma facing AI users today: "I use Claude every day but there are things I will not type into a cloud service." The sentiment reflects growing concerns about data privacy, corporate surveillance, and the security implications of sending sensitive information to third-party AI services. Apple has positioned itself as a privacy leader through on-device processing in Apple Intelligence and Apple Foundation Models, but that capability has been largely confined to Apple devices themselves.

The project grew out of a practical limitation. Arndt's Apple Silicon Mac could run Apple Foundation Models locally and privately, but that was no help when Arndt was away from it: "I have a Mac with Apple Silicon running Apple Foundation Models locally and privately. But I was not always at my Mac."

Technical Architecture: One Mac, Many Devices

Perspective Intelligence Web uses a simple hub-and-spoke architecture. A single Mac on the network runs Perspective Server, which interfaces with Apple's local AI models. Any device with a web browser, whether a Windows laptop, Android phone, Linux machine, or Chromebook, can then connect to that server through the web client. Responses stream back token by token, preserving the responsive feel of cloud-based AI services while all processing stays on the local network.

The client is built with Next.js, TypeScript, and Tailwind CSS, a widely used web stack that makes the project approachable for developers who want to contribute or customize it. The full technical writeup is available on Arndt's Substack, which traces the project from concept to implementation.
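To make the token-by-token streaming more concrete, here is a minimal TypeScript sketch of what the browser side of such a client might do. It is not taken from the Perspective Intelligence Web codebase: it assumes, hypothetically, that the server answers a prompt with a plain UTF-8 text body that grows as tokens are generated. `ChunkReader`, `TokenAccumulator`, and `consumeTokenStream` are illustrative names. The detail that carries over regardless of the real protocol is decoding with `{ stream: true }`, so a multi-byte character split across two network chunks is not garbled.

```typescript
// Minimal client-side sketch for a token-streaming chat server.
// ASSUMPTION (hypothetical, not from the Perspective Intelligence Web code):
// the server replies with a plain UTF-8 text body that grows token by token.

/** Structural type compatible with ReadableStreamDefaultReader<Uint8Array>. */
interface ChunkReader {
  read(): Promise<{ done: boolean; value?: Uint8Array }>;
}

/** Decodes UTF-8 byte chunks incrementally and keeps the running transcript. */
class TokenAccumulator {
  private readonly decoder = new TextDecoder("utf-8");
  private text = "";

  /** Feeds one network chunk and returns only the newly decoded text. */
  push(chunk: Uint8Array): string {
    // { stream: true } holds back a multi-byte character that is split
    // across chunks until its remaining bytes arrive.
    const piece = this.decoder.decode(chunk, { stream: true });
    this.text += piece;
    return piece;
  }

  /** Flushes any bytes still buffered in the decoder and returns their text. */
  finish(): string {
    const tail = this.decoder.decode();
    this.text += tail;
    return tail;
  }

  get transcript(): string {
    return this.text;
  }
}

/** Reads a streamed response to the end, calling onText as each piece arrives. */
async function consumeTokenStream(
  reader: ChunkReader,
  onText: (piece: string) => void,
): Promise<string> {
  const acc = new TokenAccumulator();
  for (;;) {
    const { done, value } = await reader.read();
    if (done) break;
    if (value) {
      const piece = acc.push(value);
      // Render immediately, e.g. by appending to the visible chat message.
      if (piece.length > 0) onText(piece);
    }
  }
  const tail = acc.finish();
  if (tail.length > 0) onText(tail);
  return acc.transcript;
}
```

In a browser, the reader would come from `response.body.getReader()` on a `fetch` response; the actual endpoint and request format depend on Perspective Server's API, which is not described here. If the server instead uses Server-Sent Events or JSON lines, each decoded piece would need an extra parsing step before it is rendered.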

The Broader Context: Small Models and On-Device AI

This development arrives alongside significant industry movement toward smaller, more efficient AI models. As noted in coverage from MarkTechPost, Alibaba's Qwen team recently released its Qwen3.5 Small Model Series, with models ranging from 0.8B to 9B parameters. This represents a deliberate shift in industry thinking, from the relentless pursuit of larger parameter counts to a philosophy of "More Intelligence, Less Compute."

These parallel developments highlight a growing recognition that the future of practical AI deployment lies not in massive cloud-based models alone, but in optimized, efficient models that can run locally on consumer hardware. Apple's approach with its Foundation Models aligns with this trend, offering capable AI processing that respects user privacy by default.
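A back-of-envelope calculation shows why parameter counts in this range matter for consumer hardware. Holding the weights alone takes roughly parameters × bits per parameter ÷ 8 bytes. The sketch below (`weightMemoryGB` is a hypothetical helper name) ignores the KV cache, activations, and runtime overhead, so real memory use is higher; the 16-bit and 4-bit precisions are illustrative choices, not figures reported for the Qwen3.5 release.

```typescript
// Back-of-envelope memory estimate for model WEIGHTS only.
// Deliberately ignores KV cache, activations, and runtime overhead,
// so actual memory use during inference will be higher.
function weightMemoryGB(paramsBillions: number, bitsPerParam: number): number {
  // 1e9 params × (bits / 8) bytes each = (bits / 8) decimal GB per billion params
  return (paramsBillions * bitsPerParam) / 8;
}

// Illustrative precisions: 16-bit (fp16/bf16) and 4-bit quantization.
for (const params of [0.8, 9]) {
  for (const bits of [16, 4]) {
    const gb = weightMemoryGB(params, bits).toFixed(1);
    console.log(`${params}B params at ${bits}-bit: about ${gb} GB of weights`);
  }
}
```

At 16 bits per parameter, the 0.8B model needs about 1.6 GB for its weights and the 9B model about 18 GB; quantized to 4 bits, the 9B model drops to about 4.5 GB, which fits comfortably in the memory of many current laptops.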

Implications for the AI Ecosystem

Perspective Intelligence Web carries several significant implications:

1. Democratizing Access to Private AI

By extending Apple's on-device AI capabilities to non-Apple devices, the project broadens access to privacy-preserving AI. Users who value their privacy but work across multiple platforms no longer have to choose between convenience and security, provided a suitable Mac is available on the same network.

2. Challenging the Cloud-First Paradigm

The project is a tangible challenge to the prevailing cloud-first approach to AI deployment. Services like Anthropic's Claude (including the latest Claude Opus 4.6 model) offer impressive capabilities, but they require sending data to external servers. Perspective Intelligence Web demonstrates that local processing can be both practical and accessible from everyday devices.

3. Extending Apple's Ecosystem Without Lock-in

This open-source approach also extends Apple's AI capabilities beyond Apple's traditional ecosystem: only the server has to be a Mac, so the other devices on the network do not need to be Apple hardware. This could influence how platform companies think about ecosystem boundaries in the AI era.

Practical Applications and Use Cases

Several use cases stand out:

- Remote Work Environments: Employees using company-issued Windows laptops could access secure, private AI assistance powered by a Mac server in their home office.

- Educational Settings: Schools with mixed device environments could provide students with AI assistance while keeping prompts on school-controlled hardware, which may simplify compliance with privacy regulations.

- Development Teams: Cross-platform development teams could standardize on a single AI assistant while using their preferred operating systems.

- Privacy-Sensitive Professions: Lawyers, healthcare professionals, and journalists could use AI tools without sending confidential client material or source information to a third-party service.

Technical Considerations and Limitations

While promising, the approach has limitations. The system requires at least one Apple Silicon Mac on the network, running a macOS version that supports Apple Foundation Models with Apple Intelligence enabled, which is a significant hardware investment for users who do not already own one. Performance depends on that Mac's capabilities, and because a single machine serves every request, long or complex queries, or several users at once, may add noticeable latency. The project also currently focuses on a chat interface rather than the full suite of Apple Intelligence features integrated into macOS and iOS.

The Open-Source Advantage

The MIT license is a strategic choice that could accelerate adoption and improvement. Making the code freely available invites community contributions, security audits, and customization for specific use cases. The client is fully open, although the Apple models it calls remain proprietary; even so, this approach contrasts with the closed nature of most AI platforms and aligns with growing interest in transparent, auditable AI systems.

Looking Forward: The Future of Distributed AI

Perspective Intelligence Web points toward a future where AI processing is distributed across personal networks rather than centralized in corporate data centers. As noted earlier, the trend toward smaller, more efficient models (exemplified by Alibaba's Qwen3.5 series) suggests that local AI processing will become increasingly capable on consumer hardware.

This development also raises interesting questions about business models in the AI space. If users can effectively "self-host" capable AI assistants using open-source interfaces to manufacturer-provided models, how will this impact subscription-based cloud AI services?

Conclusion: A Step Toward User-Controlled AI

Perspective Intelligence Web is more than a clever technical solution: it embodies a philosophical stance about who should control AI processing and where user data should reside. By bridging the gap between Apple's privacy-focused on-device AI and the multi-device reality of modern computing, the project offers a tangible path toward user-controlled, privacy-preserving artificial intelligence.

As AI becomes increasingly integrated into daily life, solutions that prioritize user agency while remaining accessible will likely gain importance. Releasing the project under the MIT license allows the approach to evolve through community collaboration, potentially influencing how both users and companies think about the balance between AI capability and data sovereignty.

The convergence of efficient small models, powerful local hardware, and open-source interfaces suggests we may be approaching an inflection point where privacy-preserving AI becomes not just possible, but practical for everyday use across all our devices.