An anonymous developer, identified only by the social media handle @aiwithjainam, has announced the open-source release of a "plug-and-play skill pack." The claim, posted on X (formerly Twitter), states this package can give "any AI agent the full capabilities of a professional software engineer."

The announcement is sparse on verifiable technical details. The source material consists solely of a social media post containing the claim and a link to a GitHub repository. No accompanying research paper, benchmark results, architectural diagrams, or detailed documentation was provided in the initial announcement.

What Was Released

Based on the social media post, the project is framed as a modular component—a "skill pack"—that can be integrated into existing AI agent frameworks. The core promise is to bootstrap a generic AI agent with a comprehensive suite of software engineering skills, potentially including code generation, debugging, system design, and repository navigation.

The term "plug-and-play" suggests the package is designed for ease of integration, abstracting away the complexity of training or fine-tuning a model for coding tasks. The repository is now publicly accessible, allowing developers to inspect, use, and modify the code.

Critical Unknowns and Immediate Questions

Without published benchmarks or a technical report, the community must evaluate the claims empirically. Key questions remain unanswered:

- Architecture: Is this a fine-tuned model checkpoint, a set of sophisticated prompts and chain-of-thought templates, a collection of tools and APIs, or an entirely new agentic framework?

- Performance: What metrics define "full capabilities of a professional software engineer"? How does it perform on established benchmarks like SWE-bench, HumanEval, or MBPP?

- Scope: Which programming languages, frameworks, and software development lifecycle stages does it support?

- Dependencies: What are the computational requirements and underlying model dependencies (e.g., does it require GPT-4, Claude 3, or a local open-weight model)?

The value of this release will be determined by the developer community's ability to validate its performance and utility through hands-on testing.
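One way to start on the architecture question is to look at what kinds of files a local clone actually contains. The sketch below is a rough heuristic, not a description of this repository: the extension-to-category mapping and the `summarize_repo` helper are assumptions made for illustration, and the path passed in is whatever directory you cloned into.

```python
from collections import Counter
from pathlib import Path

# Hypothetical mapping from file extension to a rough category.
# Adjust it after looking at the real repository contents.
CATEGORIES = {
    ".safetensors": "model weights",
    ".pt": "model weights",
    ".gguf": "model weights",
    ".md": "prompts or docs",
    ".txt": "prompts or docs",
    ".py": "code",
    ".ts": "code",
    ".json": "config or data",
    ".yaml": "config or data",
}


def summarize_repo(root: str) -> Counter:
    """Count files under `root` by rough category, skipping .git internals."""
    counts: Counter = Counter()
    for path in Path(root).rglob("*"):
        if path.is_file() and ".git" not in path.parts:
            category = CATEGORIES.get(path.suffix.lower(), "other")
            counts[category] += 1
    return counts
```

A clone dominated by Markdown files would point toward a prompt-and-template pack, while large weight files would point toward a fine-tuned checkpoint. The counts are only a first hint and do not replace reading the code.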

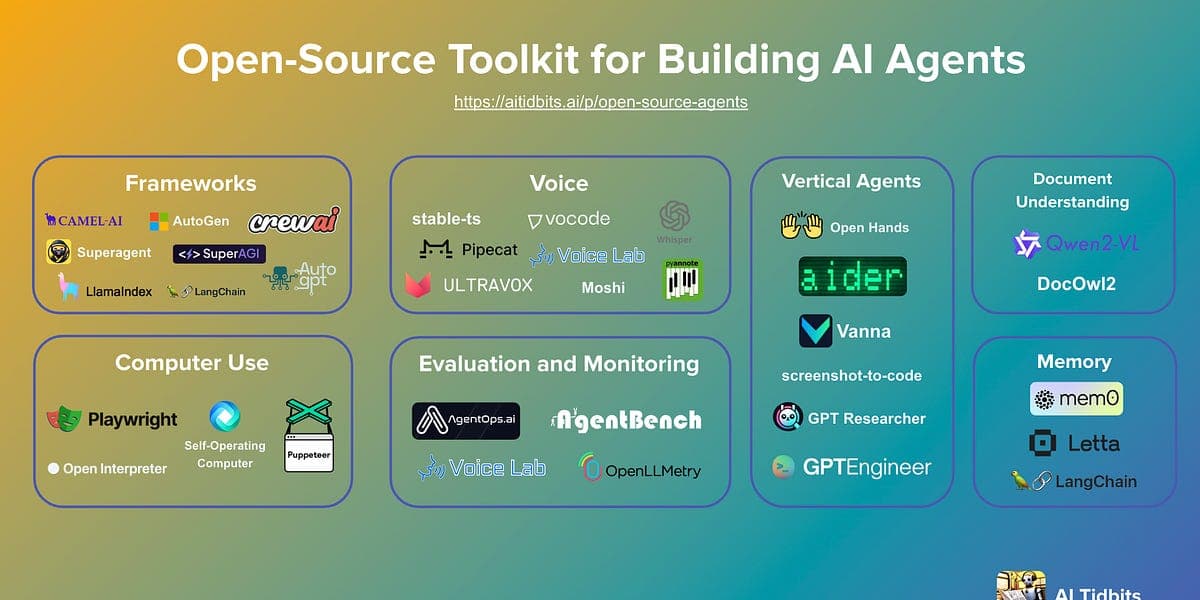

The Open-Source AI Agent Ecosystem

This release fits into a rapidly growing trend of open-source projects aimed at creating capable AI agents. Recent months have seen significant activity in this space, with projects like OpenDevin, Devika, and smolagents gaining traction. These projects typically provide frameworks for building, orchestrating, and equipping agents with tools for specific tasks, with software engineering being a primary focus.

The promise of a pre-packaged, high-performance "skill pack" could, if validated, accelerate development in this ecosystem by giving teams a ready-made coding component instead of requiring each of them to build its own coding specialization from scratch.

gentic.news Analysis

This announcement, while light on details, is a direct artifact of two powerful and converging trends we track closely: the democratization of AI agent development and the intense focus on AI for software engineering. The release follows a pattern of rapid, community-driven innovation in the agent space, often announced via social media before any formal academic publication. This mirrors the release cycle of projects like OpenDevin, which we covered at its launch and which has since spawned a large ecosystem of forks and extensions.

The claim of delivering "full professional capabilities" is an ambitious one. Even the most advanced closed-source models from Anthropic (Claude 3.5 Sonnet) and OpenAI (o1-preview) approach that level without unequivocally claiming to have reached it, so an open-source, plug-and-play component making this claim will invite immediate scrutiny. If the skill pack delivers even a subset of its promise, it could significantly lower the barrier to creating competitive coding agents, potentially affecting the market position of startups building in this space and putting pressure on closed-source API providers to further differentiate their offerings.

However, the lack of immediate technical substantiation requires a heavy dose of skepticism. The history of AI hype is littered with social media announcements that outpace reality. The true test will occur in the GitHub repository's issues and pull requests over the coming days, as developers stress-test the code. Its integration and performance within established agent frameworks like CrewAI or AutoGen will be the ultimate measure of its utility.

Frequently Asked Questions

What is an AI agent 'skill pack'?

A skill pack is a modular software component designed to give an AI agent a specific set of capabilities. Think of it as a plugin or a pre-trained module that can be added to a more general AI agent framework to specialize it for a task—in this case, professional software engineering.
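To make the plugin analogy concrete, here is a minimal sketch of what installing a pack of skills into a generic agent could look like. Every name in it (`Skill`, `Agent`, `install`, `use`, `DEMO_PACK`) is hypothetical and chosen for illustration; it does not reflect the released repository's actual interface, which the announcement does not describe.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable


@dataclass(frozen=True)
class Skill:
    """One capability an agent can call. Hypothetical shape, not the repository's API."""
    name: str
    description: str
    run: Callable[[str], str]


@dataclass
class Agent:
    """A toy agent that only knows the skills installed into it."""
    skills: Dict[str, Skill] = field(default_factory=dict)

    def install(self, pack: Iterable[Skill]) -> None:
        # Register every skill in the pack under its name.
        for skill in pack:
            self.skills[skill.name] = skill

    def use(self, skill_name: str, task: str) -> str:
        if skill_name not in self.skills:
            raise KeyError(f"skill not installed: {skill_name}")
        return self.skills[skill_name].run(task)


# Placeholder skills; a real pack would wrap model calls, prompts, or tools.
DEMO_PACK = [
    Skill("count_lines", "Count lines in a source snippet",
          lambda src: str(len(src.splitlines()))),
    Skill("find_todos", "List lines containing TODO",
          lambda src: "\n".join(l for l in src.splitlines() if "TODO" in l)),
]

agent = Agent()
agent.install(DEMO_PACK)
print(agent.use("count_lines", "x = 1\ny = 2  # TODO: rename\n"))
```

The point of the design is that the agent itself stays generic: swapping in a different pack changes what it can do without changing the agent code.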

How can I try this open-source skill pack?

The skill pack is available on GitHub. You will need to clone the repository and follow its installation instructions (likely involving Python, a package manager like pip, and potentially specific AI model dependencies). The feasibility of running it will depend on your local hardware or cloud credits if it requires a large language model.

How does this compare to GitHub Copilot or ChatGPT for coding?

Tools like GitHub Copilot and ChatGPT are end-user applications or chat interfaces with coding capabilities. This skill pack is presented as a backend component intended for developers who are building AI agents. It aims to be the engine that gives an agent its coding skill, which could then be integrated into a custom interface or workflow, potentially offering more specialized and agentic behavior than a general-purpose chat assistant.

Are there benchmarks proving this skill pack's performance?

As of the initial announcement, no official benchmarks or performance metrics have been published alongside the code. The community will need to independently evaluate its performance on standard coding benchmarks like HumanEval (for code generation) or SWE-bench (for real-world software engineering issues) to assess the validity of its claims.
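For anyone running such an evaluation, HumanEval-style results are commonly reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests. A minimal sketch of the standard unbiased estimator, 1 - C(n-c, k) / C(n, k), follows. It assumes you have already generated n samples per problem and counted c passing ones; the function names and the input format are illustrative choices, not part of any benchmark harness.

```python
from math import comb
from typing import Iterable, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem: 1 - C(n-c, k) / C(n, k).

    n: samples generated, c: samples that passed the tests, k: budget.
    """
    if not 0 <= c <= n:
        raise ValueError("need 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("need 1 <= k <= n")
    if n - c < k:
        # Fewer than k failing samples: any k-subset contains a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: Iterable[Tuple[int, int]], k: int) -> float:
    """Average pass@k over (n, c) pairs, one pair per benchmark problem."""
    scores = [pass_at_k(n, c, k) for n, c in results]
    return sum(scores) / len(scores)
```

For example, a problem with 10 samples of which 3 pass gives a pass@1 of 0.3, which matches the plain pass rate; the estimator matters mainly for k greater than 1.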