OpenAI has expanded its GPT-5.4 model family with the release of two new, more affordable variants: GPT-5.4 mini and GPT-5.4 nano. The news was shared in a social media post from the account @ArtificialAnlys, which was subsequently reshared by industry observer @mweinbach.

The main appeal of the new models is lower cost while, according to the announcement, retaining "the same reasoning modes" as the standard GPT-5.4. GPT-5.4 nano is billed as "the smallest and cheapest" model in the series, suggesting a tiered pricing and performance structure aimed at different usage scales and budgets.

What Happened

Based on the available information, OpenAI has officially released two scaled-down versions of its GPT-5.4 model. The primary stated benefit is lower cost for developers and enterprises. Smaller variants typically also deliver lower inference latency; the likely trade-off is a reduced parameter count and some loss of capability relative to the full model. The key technical claim is that these variants retain "the same reasoning modes" as their larger counterpart, implying that the core architectural improvements for complex problem-solving are preserved.

Context

This release follows a familiar pattern for OpenAI and the broader industry: launching a flagship model followed by optimized, smaller versions to address different segments of the market. The "mini" and "nano" naming convention suggests a clear hierarchy, with "nano" being the most lightweight option. This strategy allows OpenAI to compete on price for high-volume, cost-sensitive applications while still offering its most advanced reasoning technology.

At the time of writing, OpenAI's official blog and API documentation have not been updated with detailed specifications, benchmark results, or precise pricing for GPT-5.4 mini and nano. The initial announcement lacks concrete performance metrics, parameter counts, or context window details.

gentic.news Analysis

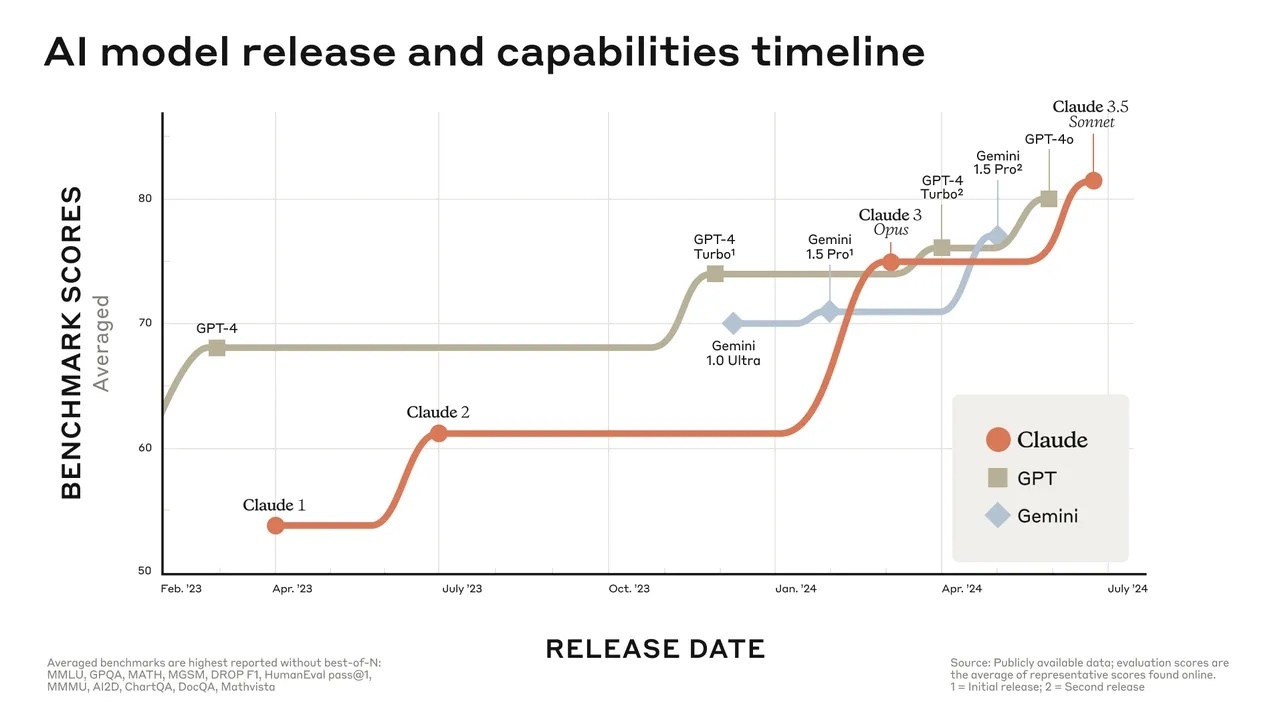

This tactical release of GPT-5.4 mini and nano is a direct competitive move in the increasingly crowded and price-sensitive frontier model market. It follows the industry-wide trend, exemplified by Google's Gemini Flash and Anthropic's Claude Haiku, of offering a spectrum of model sizes to balance capability, latency, and cost. By decoupling reasoning capability from pure model scale, OpenAI is attempting to defend its market share against lower-cost providers and open-source alternatives that have been gaining traction for specific, high-volume use cases.

The claim of "the same reasoning modes" is the critical technical differentiator. If validated, it means OpenAI has successfully distilled its most advanced chain-of-thought and planning architectures into significantly smaller computational footprints. This would represent an engineering achievement in model distillation or training efficiency. However, practitioners should wait for independent benchmarks on tasks like SWE-Bench, GPQA, or ARC-AGI to verify that the reasoning performance drop-off is minimal compared to the standard GPT-5.4.

Strategically, this release puts immediate pressure on competitors' small-model lines, such as Anthropic's Claude Haiku and Google's Gemini Flash, and may preempt smaller model releases expected from other players. For developers, a cheaper "nano" tier could make advanced AI reasoning economically viable for consumer applications, real-time analytics, and edge computing scenarios where the cost of frontier-class reasoning was previously prohibitive.

Frequently Asked Questions

What is GPT-5.4 nano?

GPT-5.4 nano is the smallest and cheapest variant in OpenAI's GPT-5.4 model family. It is designed to offer the same reasoning modes as the full GPT-5.4 at a lower price. OpenAI has not said how it achieves this, but a smaller parameter count and an architecture optimized for faster, cheaper inference are the likely levers.

How much cheaper are GPT-5.4 mini and nano?

As of this initial announcement, specific pricing details for GPT-5.4 mini and nano have not been published by OpenAI. The social media announcement only states they are "cheaper variants." Pricing will likely be revealed when the models are fully integrated into the OpenAI API platform, with costs expected to be tiered below the standard GPT-5.4 model.
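Until official prices are published, the savings can only be estimated. The sketch below shows one way to compare tiers once per-token prices are known. It assumes per-million-token input and output pricing, the scheme OpenAI uses for its current API models, and the prices in the usage section are hypothetical placeholders, not figures for GPT-5.4, mini, or nano.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPricing:
    """Per-million-token prices in USD for one model tier."""
    name: str
    input_per_million: float
    output_per_million: float


def monthly_cost(
    tier: TierPricing,
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    days: int = 30,
) -> float:
    """Estimate spend for a workload with a fixed per-request token profile.

    output_tokens should include hidden reasoning tokens, which OpenAI
    currently bills as output tokens on its reasoning models.
    """
    total_requests = requests_per_day * days
    input_cost = total_requests * input_tokens / 1_000_000 * tier.input_per_million
    output_cost = total_requests * output_tokens / 1_000_000 * tier.output_per_million
    return input_cost + output_cost


if __name__ == "__main__":
    # HYPOTHETICAL prices for illustration only; OpenAI has not published
    # pricing for GPT-5.4 mini or nano.
    tiers = [
        TierPricing("standard (hypothetical)", 2.00, 8.00),
        TierPricing("mini (hypothetical)", 0.50, 2.00),
        TierPricing("nano (hypothetical)", 0.10, 0.40),
    ]
    for tier in tiers:
        cost = monthly_cost(tier, requests_per_day=10_000, input_tokens=1_000, output_tokens=500)
        print(f"{tier.name}: ${cost:,.2f}/month")
```

Keeping the token profile fixed and varying only the tier isolates the price difference; real workloads should also account for the possibility that a smaller model spends more reasoning tokens to reach the same answer.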

What does "same reasoning modes" mean?

The phrase "same reasoning modes" suggests that GPT-5.4 mini and nano support the same approaches to complex problem-solving as the full model, such as chain-of-thought reasoning, planning, and step-by-step logic. It may also simply mean that the smaller models expose the same configurable reasoning settings. Either way, sharing modes does not guarantee parity: smaller, less knowledgeable models may still reason less reliably on tasks like code generation, mathematical problem-solving, or logical deduction, so quality comparable to the full GPT-5.4 remains to be demonstrated by independent benchmarks.
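One practical reading of "same reasoning modes" is that every tier accepts the same reasoning configuration, so moving a workload from GPT-5.4 to mini or nano would change only the model field of a request. The sketch below builds request bodies in the shape of OpenAI's Responses API (`model`, `input`, `reasoning.effort`) without sending them. The model identifiers and the set of accepted effort values are assumptions, since OpenAI has not published API details for these variants.

```python
import json

# HYPOTHETICAL model IDs: OpenAI has not published API identifiers for these variants.
MODEL_IDS = {
    "standard": "gpt-5.4",
    "mini": "gpt-5.4-mini",
    "nano": "gpt-5.4-nano",
}

# ASSUMPTION: effort levels accepted by earlier GPT-5 models; the exact set
# supported by GPT-5.4 mini and nano is unconfirmed.
REASONING_EFFORTS = ("low", "medium", "high")


def build_request(tier: str, prompt: str, effort: str = "medium") -> dict:
    """Return a Responses-API-style request body; nothing is sent over the network."""
    if tier not in MODEL_IDS:
        raise ValueError(f"unknown tier: {tier!r}")
    if effort not in REASONING_EFFORTS:
        raise ValueError(f"unsupported reasoning effort: {effort!r}")
    return {
        "model": MODEL_IDS[tier],
        "input": prompt,
        "reasoning": {"effort": effort},
    }


if __name__ == "__main__":
    # Same prompt and reasoning setting on every tier: only "model" differs.
    for tier in MODEL_IDS:
        body = build_request(tier, "Summarize this bug report.", effort="low")
        print(json.dumps(body))
```

With the official `openai` Python SDK, the same fields map onto keyword arguments of `client.responses.create(...)`, so switching tiers would be a one-field change if the assumption above holds.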

When will GPT-5.4 mini and nano be available?

The announcement describes the models as "released," but full availability in the OpenAI API and its documentation may follow later. Developers should monitor the OpenAI platform blog and API updates for official launch details, including regional availability, rate limits, and comprehensive technical specifications.