A Texas filing has revealed details about OpenAI's significant new data center project, codenamed "Freebird," in Milam County, Texas. The first phase of the facility is planned to span 548,950 square feet and is estimated to cost approximately $470 million. This represents a major capital investment in the physical infrastructure required to train and serve increasingly large and complex AI models.

Key Takeaways

- OpenAI is building a massive 548,950-square-foot data center in Milam County, Texas, codenamed "Freebird," with a first-phase cost of around $470 million.

- This infrastructure investment is critical for scaling next-generation AI model training and inference.

What's Being Built

The project, referred to as "Freebird" in filings, is a data center development spearheaded by OpenAI. The disclosed footprint of 548,950 sq ft for the initial phase places it among the larger dedicated AI compute facilities: a typical hyperscale data center building often ranges from 250,000 to 500,000 square feet, so the first phase alone exceeds the upper end of that range by roughly 10%. The $470 million price tag reflects the specialized and power-intensive nature of AI compute infrastructure, which goes beyond standard server racks to include advanced cooling systems and dense GPU clusters.
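The two headline figures can be combined into a rough cost-per-square-foot number and a size comparison against the typical hyperscale range quoted above. The short Python sketch below does only that arithmetic. The function and constant names are illustrative, and the result is an average implied by the reported figures, not a breakdown from the filing. What the $470 million estimate actually covers (land, building shell, electrical work, IT equipment) is not stated in the source.

```python
# Figures quoted in the article for the first phase of "Freebird".
PHASE_ONE_SQFT = 548_950
PHASE_ONE_COST_USD = 470_000_000  # approximate estimate, per the filing

# The article's range for a typical hyperscale data center building.
TYPICAL_SQFT_LOW = 250_000
TYPICAL_SQFT_HIGH = 500_000


def cost_per_sqft(cost_usd: float, sqft: float) -> float:
    """Return the average cost per square foot."""
    if sqft <= 0:
        raise ValueError("sqft must be positive")
    return cost_usd / sqft


def size_ratio(sqft: float, reference_sqft: float) -> float:
    """Return how many times larger sqft is than reference_sqft."""
    if reference_sqft <= 0:
        raise ValueError("reference_sqft must be positive")
    return sqft / reference_sqft


if __name__ == "__main__":
    per_sqft = cost_per_sqft(PHASE_ONE_COST_USD, PHASE_ONE_SQFT)
    print(f"Implied average cost: ${per_sqft:,.0f} per sq ft")
    print(f"vs. 500,000 sq ft: {size_ratio(PHASE_ONE_SQFT, TYPICAL_SQFT_HIGH):.2f}x")
    print(f"vs. 250,000 sq ft: {size_ratio(PHASE_ONE_SQFT, TYPICAL_SQFT_LOW):.2f}x")
```

On these inputs the implied average is a little over $850 per square foot, and the first phase is about 1.1 times the upper end and about 2.2 times the lower end of the typical range.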

The Strategic Location: Milam, Texas

Milam County, Texas, has emerged as a destination for large-scale data center projects due to factors like available land, access to power grids, and potentially favorable economic conditions. The scale of this project suggests a long-term commitment by OpenAI to securing dedicated compute capacity that it controls directly. This move aligns with a broader industry trend in which leading AI labs seek to own and operate their infrastructure, reducing reliance on general-purpose cloud providers for core training workloads.

The Infrastructure Arms Race

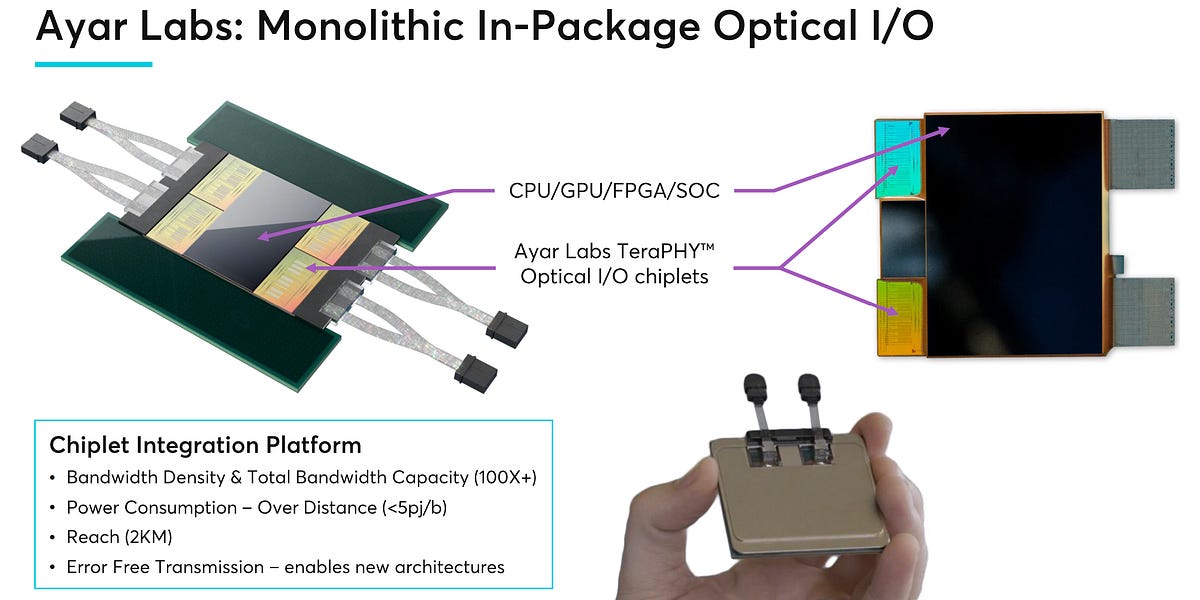

This filing provides a concrete data point in the ongoing AI infrastructure arms race. Building a facility of this scale is not just about adding more servers; it's about creating an optimized environment for the thousands of interconnected AI accelerators (like GPUs or custom ASICs) needed to train frontier models. Power delivery and heat dissipation become primary engineering challenges. A $470 million budget is consistent with investment in specialized electrical substations and cutting-edge cooling solutions, likely liquid-based, to handle power densities far exceeding those of traditional data centers, although the filing as reported here does not itemize these systems.

gentic.news Analysis

This "Freebird" project is a tangible manifestation of the capital-intensive phase of AI development. It follows a pattern of escalating infrastructure commitments from leading labs. For instance, in late 2024, we reported on Microsoft and OpenAI's rumored "Stargate" supercomputer initiative, projected to cost over $100 billion. While "Freebird" is a separate, smaller-scale project, it represents a critical stepping stone—the kind of owned-and-operated facility where OpenAI can iterate on hardware and software stacks in tandem without external constraints.

The choice of Texas is strategic. The state has been aggressively courting data center investments with its deregulated power market and available land. However, this move also carries operational risks. Texas's independent power grid (ERCOT) has faced reliability challenges during extreme weather events. For an AI lab running continuous, multi-month training jobs worth hundreds of millions of dollars in compute time alone, a stable power supply is non-negotiable. This suggests OpenAI's facility will likely include significant on-site backup generation and potentially direct agreements with power generators.

For AI practitioners, this underscores a shifting landscape. Access to frontier models may become increasingly tied to the infrastructure used to create them. As labs like OpenAI invest billions in custom silicon and optimized data centers, the efficiency gap between their internal cost of training and the external API price could widen, further solidifying the market position of those who control the stack from silicon to model.

Frequently Asked Questions

What is the 'Freebird' data center?

'Freebird' is the reported codename for a large-scale data center project being developed by OpenAI in Milam County, Texas. The first phase of the facility is planned to be 548,950 square feet in size, with an estimated cost of $470 million. It is expected to house the high-performance computing infrastructure needed for AI research and development.

Why is OpenAI building its own data center?

Building its own data center gives OpenAI greater control over the entire compute stack, including power design, cooling systems, networking, and hardware integration. This is critical for optimizing the performance and cost of training massive AI models, which require thousands of specialized accelerators (like GPUs) to work in concert for weeks or months at a time. It reduces reliance on standard cloud offerings and allows for custom engineering.

How does this relate to the rumored 'Stargate' project?

The 'Stargate' project, as reported by various outlets, refers to a speculated multi-year, $100+ billion supercomputing collaboration between OpenAI and Microsoft. The 'Freebird' data center appears to be a separate, earlier-phase infrastructure project owned and developed by OpenAI itself. It can be seen as a foundational piece of infrastructure that supports OpenAI's scaling efforts ahead of or alongside any larger joint ventures.

What are the main challenges with a data center of this scale?

The primary challenges are power and cooling. A facility packed with AI accelerators will have an enormous and constant power draw, requiring robust connections to the grid and extensive backup systems. The heat generated by this equipment is immense, necessitating advanced cooling solutions—likely direct-to-chip liquid cooling—to prevent hardware failure. Logistics, physical security, and network connectivity for transferring massive datasets are also major operational hurdles.