MLCommons' latest benchmark round expands to include multimodal and video models, but submissions from Nvidia, AMD, and Intel use different configurations and scenarios, preventing clear cross-vendor comparisons.

On April 1, 2026, MLCommons published the results for MLPerf Inference v6.0, the industry's premier benchmark suite for measuring AI inference performance. This round marks a significant expansion, introducing tests for multimodal and video generation models for the first time. While all three major chipmakers—Nvidia, AMD, and Intel—submitted results, each company highlighted different metrics and system configurations, making a straightforward performance ranking impossible. Notably, Google did not submit results for its latest Ironwood-generation TPUs, and inference specialists like Cerebras were absent.

What's New in MLPerf Inference v6.0

Version 6.0 of the benchmark suite adds five new workloads, reflecting the evolving demands of production AI systems:

- DeepSeek-R1 (Interactive Scenario): Requires a minimum token generation rate five times higher than in previous text model tests.

- Qwen3-VL-235B: The suite's first multimodal vision-language model.

- GPT-OSS-120B: A new large language model from OpenAI.

- WAN-2.2-T2V: A text-to-video generation model.

- DLRMv3: An updated transformer-based recommendation system benchmark.

Only Nvidia submitted results across all five new models and scenarios.

Nvidia's Strategy: Scale and Software

Nvidia's submissions focused on showcasing the scalability of its Blackwell Ultra architecture, with record claims primarily set on very large configurations such as a 288-GPU deployment of GB300-NVL72 systems (an NVL72 rack holds 72 GPUs, so 288 GPUs corresponds to four racks). The company highlighted performance on the new DeepSeek-R1 and GPT-OSS-120B models.

The more telling story, however, is software. Nvidia claims a 2.7x performance jump on DeepSeek-R1 in server scenarios compared to its submission six months ago, achieved on the same hardware through software optimizations delivered by partner Nebius. The company states this cuts token production costs by over 60%. Similar software gains yielded a 1.5x improvement on the older Llama 3.1 405B model.
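The relationship between the speedup and the cost claim can be checked with simple arithmetic. The sketch below assumes that cost per token is inversely proportional to throughput when the hardware and its hourly cost stay fixed; that assumption, and the function name `cost_reduction`, are ours and not part of Nvidia's statement.

```python
def cost_reduction(speedup: float) -> float:
    """Fractional drop in cost per token for a given throughput speedup.

    Assumes fixed hardware cost per hour, so cost per token scales as
    1 / throughput (an illustrative simplification).
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 - 1.0 / speedup


# Speedups quoted in the article: 1.5x (Llama 3.1 405B) and 2.7x (DeepSeek-R1).
for s in (1.5, 2.7):
    print(f"{s}x speedup -> {cost_reduction(s):.0%} lower cost per token")
```

Under that assumption, a 2.7x speedup works out to roughly a 63% reduction, which is consistent with the "over 60%" figure.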

Key Software Optimizations

Nvidia detailed several software-level improvements driving these gains:

- Operation Fusion & Speed-up: Basic compute operations were accelerated and fused to reduce GPU overhead.

- Nvidia Dynamo: This open-source framework separates the prefill (input processing) and decoding (token generation) phases of text generation, optimizing each independently.

- Wide Expert Parallel: For mixture-of-experts models like DeepSeek-R1, this technique distributes expert weights across more GPUs to prevent any single card from becoming a bottleneck.

- Multi-Token Prediction: In interactive scenarios with small batch sizes, this method generates multiple tokens in parallel to utilize otherwise idle compute power.
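To make the idea behind wide expert parallelism concrete, here is a toy sketch of how spreading experts over more GPUs lowers the load on the busiest card. The round-robin placement, the 16 experts, and the token counts are all hypothetical choices for illustration; this is not Nvidia's implementation and the numbers are not measurements.

```python
def place_experts(num_experts: int, num_gpus: int) -> dict[int, int]:
    """Map expert id -> GPU id using a simple round-robin policy (hypothetical)."""
    return {expert: expert % num_gpus for expert in range(num_experts)}


def per_gpu_load(tokens_per_expert: list[int], num_gpus: int) -> list[int]:
    """Sum the tokens routed to each GPU, given per-expert token counts."""
    placement = place_experts(len(tokens_per_expert), num_gpus)
    load = [0] * num_gpus
    for expert, tokens in enumerate(tokens_per_expert):
        load[placement[expert]] += tokens
    return load


# Made-up routing counts for 16 experts; a few experts are "hot".
tokens_per_expert = [40, 10, 10, 10, 30, 10, 10, 10, 20, 10, 10, 10, 20, 10, 10, 10]

narrow = per_gpu_load(tokens_per_expert, num_gpus=4)   # 4 experts per GPU
wide = per_gpu_load(tokens_per_expert, num_gpus=16)    # 1 expert per GPU

print("busiest GPU, 4-way:", max(narrow))
print("busiest GPU, 16-way:", max(wide))
```

The total work is the same in both cases; only the share handled by the most heavily loaded GPU changes. A real system also has to pay for the extra cross-GPU communication, which this sketch ignores.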

AMD and Intel's Different Battles

The submissions from AMD and Intel targeted different market segments, avoiding a direct clash with Nvidia's scale.

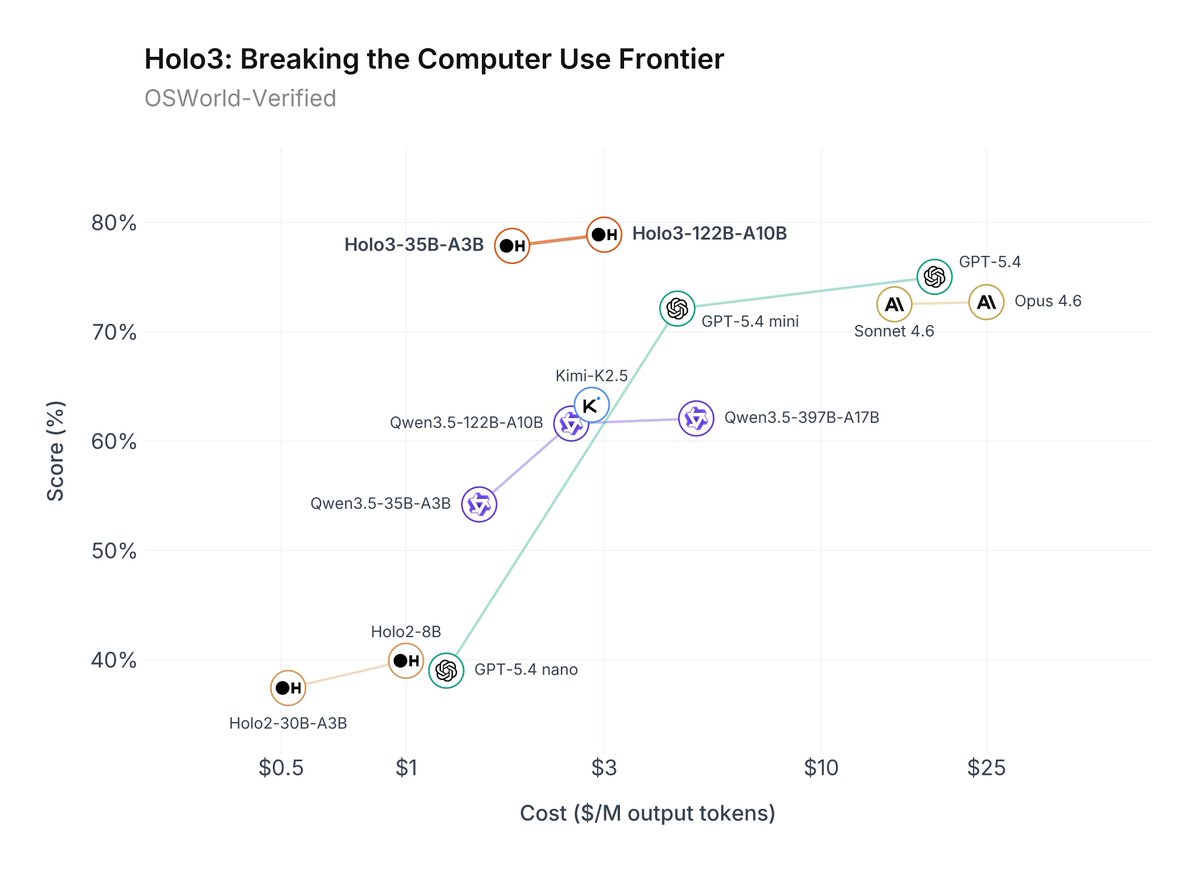

- AMD compared its performance against Nvidia's B200 and B300 GPUs in single-node, eight-GPU configurations. It did not submit results for the new DeepSeek-R1 or Qwen3-VL benchmarks, focusing its competitive claims on more constrained system sizes.

- Intel aimed at the workstation GPU segment, competing in a different tier entirely from the data-center-scale systems Nvidia highlighted.

This strategic fragmentation means buyers must carefully match benchmark scenarios to their own expected deployment environments.

Key Numbers: MLPerf Inference v6.0 Highlights

| Vendor | System | Workload | Headline claim | Takeaway |
| --- | --- | --- | --- | --- |
| Nvidia | GB300-NVL72 (288 GPUs) | DeepSeek-R1 (Server) | 2.7x perf gain vs. 6 mo. ago (software-only) | Highest throughput across new workloads; all-new model coverage. |
| Nvidia | GB300-NVL72 (288 GPUs) | GPT-OSS-120B | Top throughput result | Showcases scale on new OpenAI model. |
| AMD | Single-Node (8 GPUs) | Various (not DeepSeek-R1/Qwen3-VL) | Competitive vs. Nvidia B200/B300 | Focuses on smaller, single-node comparisons. |
| Intel | Workstation GPUs | Various | Leadership in segment | Targets a different market tier entirely. |

What This Means in Practice

For AI engineers, the benchmark expansion to multimodal and video models is the most useful outcome, providing new data points for complex workloads. However, the lack of standardized submissions across vendors forces teams to do extra work to translate results to their own infrastructure plans. Nvidia's claimed software gains, up to 2.7x higher throughput on unchanged hardware, underline that for existing installations, software and compiler optimizations can be as valuable as a hardware upgrade.

gentic.news Analysis

This MLPerf round continues a trend we've tracked closely: Nvidia leveraging its full-stack advantage—from silicon (Blackwell Ultra) to software (Dynamo, novel parallelism strategies)—to set performance records that competitors struggle to contest on the same terms. The 2.7x software gain on DeepSeek-R1 is particularly significant, following a week of major Nvidia software announcements, including the PivotRL framework that cut agent training costs 5.5x. It demonstrates that Nvidia's moat is as much about its CUDA software ecosystem as its transistor density.

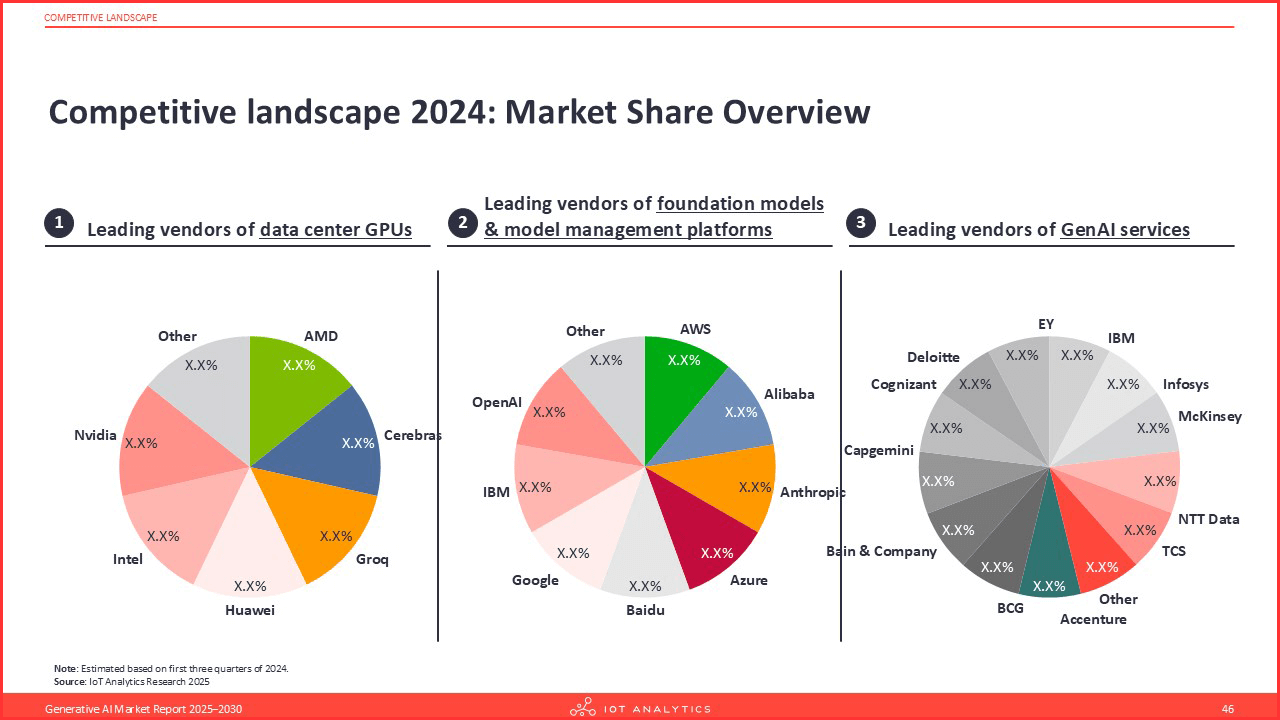

The absence of Google's TPUs is notable. As we covered in our analysis of the TSMC 2nm capacity constraints, the AI chip landscape is facing supply pressures. Google may be prioritizing Ironwood TPU production for its internal cloud and AI services over benchmark submissions. Meanwhile, AMD's and Intel's targeted submissions reflect a pragmatic strategy: compete where you can, avoid a losing battle on Nvidia's chosen terrain of extreme scale.

This fragmentation in benchmarks mirrors the broader competitive landscape. Nvidia both partners with and competes against companies like OpenAI and Meta. These MLPerf results, where Nvidia tests OpenAI's GPT-OSS-120B model, exemplify this complex dynamic. The results also arrive as Nvidia's market valuation soars past $3 trillion, driven by relentless AI infrastructure demand that these benchmarks are designed to measure.

Frequently Asked Questions

What is MLPerf Inference?

MLPerf Inference is a suite of benchmarks developed by MLCommons, an open engineering consortium, to measure the performance of AI systems when running trained models (inference). It is considered the industry standard for fair and objective performance comparisons across different hardware and software platforms.

Why can't I directly compare Nvidia's, AMD's, and Intel's MLPerf results?

The vendors submitted results using different system configurations (e.g., 288 GPUs vs. 8 GPUs), different benchmark scenarios (e.g., server vs. offline), and sometimes entirely different models. Each company optimized its submission to highlight its strengths in a specific market segment, making an apples-to-apples comparison across all their claims impossible without careful normalization.
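One rough way to approach that normalization is to compare only results that share a model and scenario, and to divide throughput by GPU count. The sketch below is a minimal illustration: the `Result` type, the vendor and model names, and every number are placeholders invented for the example, not MLPerf data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    vendor: str
    model: str
    scenario: str
    num_gpus: int
    tokens_per_second: float


def per_gpu_throughput(result: Result) -> float:
    """Crude normalization: ignores scaling efficiency and interconnect effects."""
    return result.tokens_per_second / result.num_gpus


def comparable_groups(results: list[Result]) -> dict[tuple[str, str], list[Result]]:
    """Keep only (model, scenario) pairs that more than one vendor submitted."""
    groups: dict[tuple[str, str], list[Result]] = {}
    for r in results:
        groups.setdefault((r.model, r.scenario), []).append(r)
    return {
        key: rs for key, rs in groups.items()
        if len({r.vendor for r in rs}) > 1
    }


# Placeholder values only; these are NOT real benchmark results.
results = [
    Result("vendor_a", "model_x", "server", 288, 288_000.0),
    Result("vendor_b", "model_x", "offline", 8, 9_600.0),
    Result("vendor_b", "model_y", "offline", 8, 8_000.0),
    Result("vendor_c", "model_y", "offline", 8, 6_400.0),
]

for (model, scenario), rs in comparable_groups(results).items():
    for r in rs:
        print(model, scenario, r.vendor, per_gpu_throughput(r))
```

In this made-up data set, only `model_y` in the offline scenario has results from more than one vendor, so it is the only group that survives the filter. Per-GPU division is a starting point rather than a fair comparison, since a 288-GPU system and an 8-GPU node have very different communication costs.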

What are the practical implications of Nvidia's 2.7x software gain?

For organizations already operating Nvidia hardware, these software optimizations, which are likely to be rolled out in future CUDA and framework updates, could more than double the throughput of their existing infrastructure for models like DeepSeek-R1 without any capital expenditure on new GPUs, if the claimed 2.7x gain carries over to their deployments. This translates directly to lower inference cost per token and increased capacity.

Who uses MLPerf results?

Cloud providers, hardware manufacturers, and large enterprise buyers use MLPerf data to inform purchasing decisions, validate performance claims, and guide system design. Researchers also use it to track the efficiency improvements of AI computing over time.