The local AI movement has reached a notable milestone in developer adoption. The open-source project GPT4All has surpassed 77,000 stars on GitHub, signaling strong community interest in running large language models entirely offline, without cloud dependencies or API fees.

The core proposition is straightforward: download a desktop application, select a model, and begin interacting with an LLM. No GPU is required; inference runs on a standard CPU. No internet connection is needed after the initial model download. There is no subscription fee.

What's New: DeepSeek R1 Support and Python SDK

The recent development highlighted by the community is the platform's support for distilled versions of DeepSeek R1. DeepSeek-R1 is a series of reasoning-specialized models that typically require significant computational resources. By running distilled variants locally, GPT4All users can access "reasoning-grade" outputs on consumer hardware.

The practical utility for developers is unlocked through the GPT4All Python SDK. The SDK allows local models to be integrated into custom applications with minimal code. The promoted example is a three-line script to run a Llama 3 model locally, enabling developers to build internal tools, automate workflows, or power applications without incurring per-token costs or hitting API rate limits.
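As a rough illustration of what such a script might look like, the sketch below wraps the SDK's `GPT4All` class and its `chat_session()` and `generate()` methods in two small helpers. The model filename is an assumption based on the project's published model list and may change, and the first load downloads several gigabytes. `load_llama3` and `ask` are hypothetical helper names, not part of the SDK.

```python
def load_llama3(model_file="Meta-Llama-3-8B-Instruct.Q4_0.gguf"):
    """Load a local Llama 3 model; the file is downloaded on first use."""
    # Imported lazily so this module loads even if the package is not installed.
    from gpt4all import GPT4All  # pip install gpt4all
    return GPT4All(model_file)  # filename is an assumption, see lead-in


def ask(model, prompt, max_tokens=256):
    """Send one prompt inside a chat session and return the reply text."""
    with model.chat_session():
        return model.generate(prompt, max_tokens=max_tokens)


# Usage (left commented out because it triggers a multi-gigabyte download):
# model = load_llama3()
# print(ask(model, "Summarize the benefits of local inference in one sentence."))
```

Keeping the model load separate from the prompt call means the model is loaded once and reused across many requests, which matters when each load reads several gigabytes from disk.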

Technical Details and Features

Platform & Models: GPT4All operates as a desktop application (available for Windows, macOS, and Linux) and a software library. It hosts a curated ecosystem of quantized and optimized open-source models from families like Llama, Mistral, and now DeepSeek, which are selected for their ability to run efficiently on CPUs.

LocalDocs Feature: A key privacy-focused feature is LocalDocs. This allows users to create a local vector database from their documents (PDFs, notes, text files). The LLM can then answer questions based solely on this private corpus, with all processing occurring on-device. No document data is ever transmitted to a remote server.
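The general idea behind document-grounded answering can be sketched in a few lines. The example below is not LocalDocs' actual implementation: it swaps in a toy word-count embedding and an in-memory list where a real system would use a neural embedding model and a vector database. All function names here are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: lowercase word counts (a real system uses a neural embedder)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(documents):
    """Index each document as (name, text, vector); stands in for the vector database."""
    return [(name, text, embed(text)) for name, text in documents.items()]


def retrieve(index, question, k=1):
    """Return the k most similar (name, text) pairs for the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[2]), reverse=True)
    return [(name, text) for name, text, _ in ranked[:k]]


def build_prompt(index, question, k=1):
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(text for _, text in retrieve(index, question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

The prompt returned by `build_prompt` would then be passed to a local model. Since indexing, retrieval, and generation all run in the same process, no document text needs to leave the machine.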

Cost Structure: GPT4All is free and open-source. The primary costs to the user are the hardware and the electricity needed to run the models. There are no fees for the GPT4All software or for inference calls made via the SDK.

How It Compares: Local vs. Cloud AI

The value proposition of GPT4All sits in direct contrast to the dominant cloud API model from providers like OpenAI, Anthropic, or Google.

| Aspect | GPT4All (local) | Cloud APIs |
| --- | --- | --- |
| Cost | $0 after setup | $0.01 - $0.80+ per 1M tokens |
| Privacy | Data never leaves device | Data processed on vendor servers |
| Latency | Depends on local CPU speed | Generally low, consistent latency |
| Rate Limits | None (hardware-limited) | Strict tier-based limits |
| Model Choice | Curated open-source models | Proprietary, vendor-locked models |
| Setup | Requires model download (~4-8GB) | Instant via API key |

For developers building applications where cost, data sovereignty, or unpredictable usage spikes are concerns, local inference presents a compelling alternative. The trade-off is typically in raw performance (speed and, often, output quality) compared to top-tier cloud models, and the responsibility of managing local compute resources.
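To make the cost comparison concrete, the arithmetic can be sketched as below. The per-token prices come from the range quoted above. The token volume, power draw, and electricity rate are hypothetical inputs chosen only for illustration, and the function names are likewise made up for this example.

```python
def monthly_cloud_cost(tokens_per_day, usd_per_million_tokens, days=30):
    """Estimated monthly API bill in USD for a steady daily token volume."""
    return tokens_per_day * days * usd_per_million_tokens / 1_000_000


def monthly_electricity_cost(watts, hours_per_day, usd_per_kwh, days=30):
    """Estimated monthly electricity cost in USD for a machine running inference."""
    return watts / 1000 * hours_per_day * days * usd_per_kwh


if __name__ == "__main__":
    volume = 50_000_000  # hypothetical: 50M tokens per day
    low = monthly_cloud_cost(volume, 0.01)
    high = monthly_cloud_cost(volume, 0.80)
    # hypothetical: 100 W machine, 8 hours a day, $0.15 per kWh
    local = monthly_electricity_cost(100, 8, 0.15)
    print(f"cloud: ${low:.2f} to ${high:.2f} per month")
    print(f"local electricity: ${local:.2f} per month")
```

This comparison ignores hardware purchase cost and assumes the local machine can actually sustain the required throughput, which a CPU may not at high volumes.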

gentic.news Analysis

This surge in popularity for GPT4All is a clear data point in the ongoing local-first AI trend, a movement we first noted gaining developer mindshare in late 2024. It's a direct response to the economic and control limitations of the cloud API oligopoly. While companies like Meta (with Llama) and Mistral AI have been pushing the frontier of open-weight models, the critical middleware—user-friendly local inference engines—has been the missing link for mainstream developers. GPT4All, alongside projects like Ollama and LM Studio, is filling that gap.

The addition of DeepSeek R1 distillations is particularly noteworthy. As we covered in our analysis of DeepSeek-R1's performance on SWE-Bench, the original models demonstrated strong reasoning capabilities but required substantial GPU memory. Bringing a distilled version into the local CPU ecosystem significantly lowers the barrier to experimenting with chain-of-thought and reasoning techniques, which were previously the domain of expensive cloud APIs. This aligns with a broader industry pattern of model distillation and quantization pushing advanced capabilities onto more constrained hardware, a trend led by research from organizations like Hugging Face and Together AI.

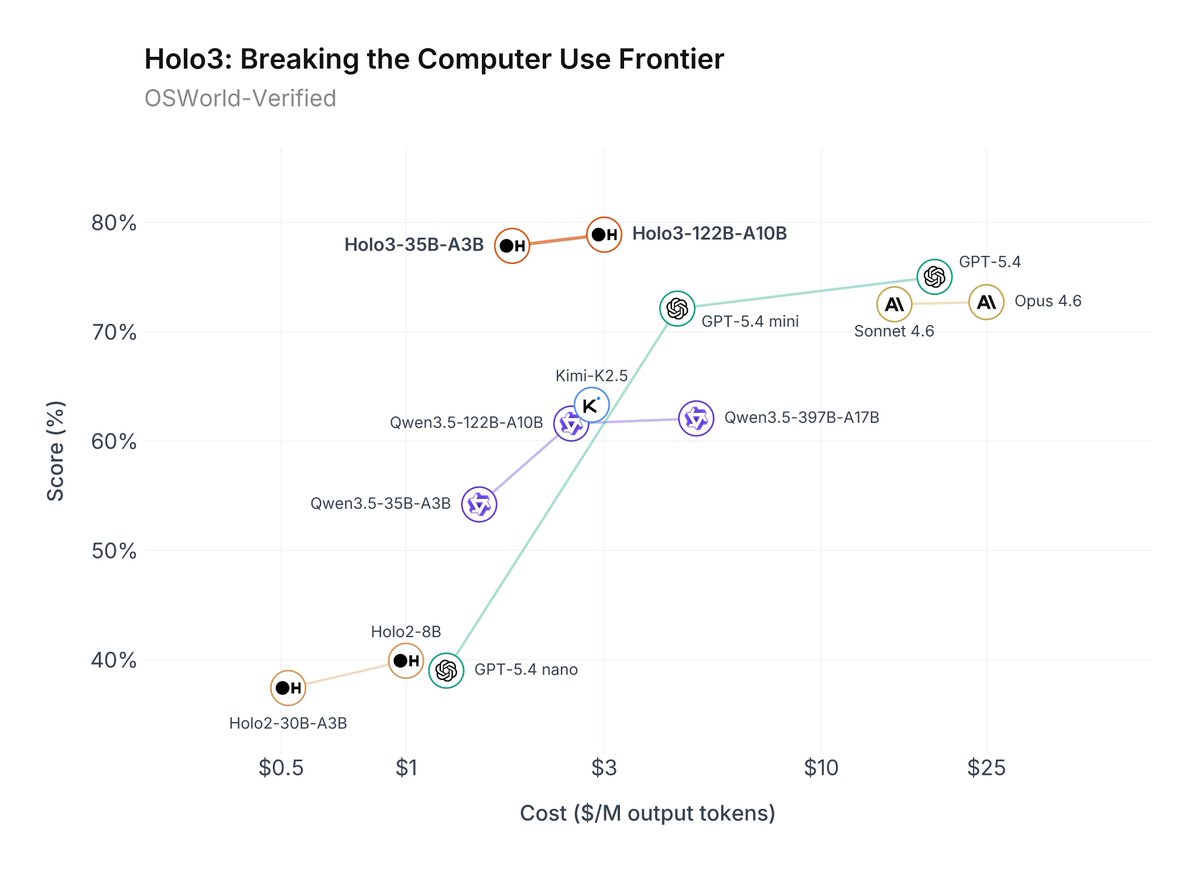

However, practitioners should temper expectations. "Reasoning-grade" output from a heavily distilled model running on a laptop CPU is not equivalent to the performance of the full 671B-parameter DeepSeek-R1 served from data-center GPUs such as the H100. The appeal here is accessibility and cost structure, not state-of-the-art benchmark dominance. For many internal business applications (document Q&A, basic code generation, text summarization) the quality is often "good enough," especially when weighed against the absence of per-token fees and the fact that data stays on the device. GPT4All's 77,000 stars suggest a large cohort of developers has reached that same conclusion.

Frequently Asked Questions

What is GPT4All?

GPT4All is an open-source ecosystem for running large language models locally on your computer's CPU. It includes a desktop chat application and a Python software development kit (SDK) that allows developers to integrate local LLMs into their own applications without any internet connection or API costs.

Is GPT4All really free?

Yes, the GPT4All software is free and open-source. You can download and use it without paying any license or subscription fees. The only costs are the electricity to run your computer and the hardware itself. The models it runs are also free, open-weight models from organizations like Meta (Llama) and DeepSeek.

How does GPT4All's performance compare to ChatGPT?

Performance depends on the specific local model you choose and your hardware. Generally, models small enough to run comfortably on a CPU (e.g., a 7B parameter model) will be slower and may produce less refined or accurate outputs than GPT-4 or Claude 3.5, especially on complex reasoning tasks. The trade-off is on-device privacy, no per-token cost, and no usage limits. For many straightforward tasks like text summarization or basic coding help, the difference may be negligible.

What do I need to run GPT4All?

You need a desktop or laptop computer (Windows, macOS, or Linux) with a relatively modern CPU and roughly 8 to 16 GB of RAM, depending on the model. A dedicated GPU is not required, as the software is optimized for CPU inference. You'll also need sufficient storage space to download the model files, which typically range from 4 to 8 gigabytes each.
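A back-of-the-envelope check shows where file sizes in that range come from: weight size is roughly parameter count times bits per weight, divided by eight. The sketch below applies that formula. The 2 GB allowance for context and runtime overhead is an assumption made for illustration, not a figure published by GPT4All, and both function names are hypothetical.

```python
def quantized_size_gb(params_billions, bits_per_weight):
    """Approximate weight size in GB: parameters x bits per weight / 8."""
    return params_billions * bits_per_weight / 8


def fits_in_ram(params_billions, bits_per_weight, ram_gb, overhead_gb=2.0):
    """Rough check that weights plus an assumed runtime overhead fit in RAM."""
    return quantized_size_gb(params_billions, bits_per_weight) + overhead_gb <= ram_gb


if __name__ == "__main__":
    # 4.5 bits per weight approximates a 4-bit format that also stores per-block scales.
    for params in (7, 8, 14, 70):
        size = quantized_size_gb(params, 4.5)
        print(f"{params}B model: ~{size:.1f} GB, fits in 16 GB RAM: {fits_in_ram(params, 4.5, 16)}")
```

By this estimate, 7B to 14B models quantized to around 4 bits land in or near the 4 to 8 GB range, while a 70B model would need far more memory than a typical desktop provides.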