The Escalating Conflict

The U.S. Department of Defense is considering a dramatic escalation in its ongoing dispute with AI company Anthropic, potentially requiring defense contractors to certify they are not using Anthropic's Claude AI models in their work for the Pentagon. According to reporting from The Wall Street Journal, this move comes after contract renewal negotiations between Anthropic and the Pentagon stalled over demands for additional safeguards against using Claude for surveillance and weapons applications.

The conflict represents a significant clash between military AI adoption priorities and corporate ethical frameworks, with potentially far-reaching implications for how advanced AI systems are integrated into national security infrastructure. The Pentagon's consideration of contractor certification requirements suggests the dispute has moved beyond simple contract negotiations into broader policy territory.

Background: The Claude Constitution and Ethical Framework

At the heart of this conflict lies Anthropic's "Claude Constitution"—the ethical framework governing its AI assistant that was recently discussed publicly by AI researcher Ethan Mollick. This constitution establishes guardrails that prevent Claude from being used for certain military applications, particularly surveillance and weapons development. These restrictions appear to conflict directly with the Pentagon's interest in leveraging cutting-edge AI capabilities across its operations.

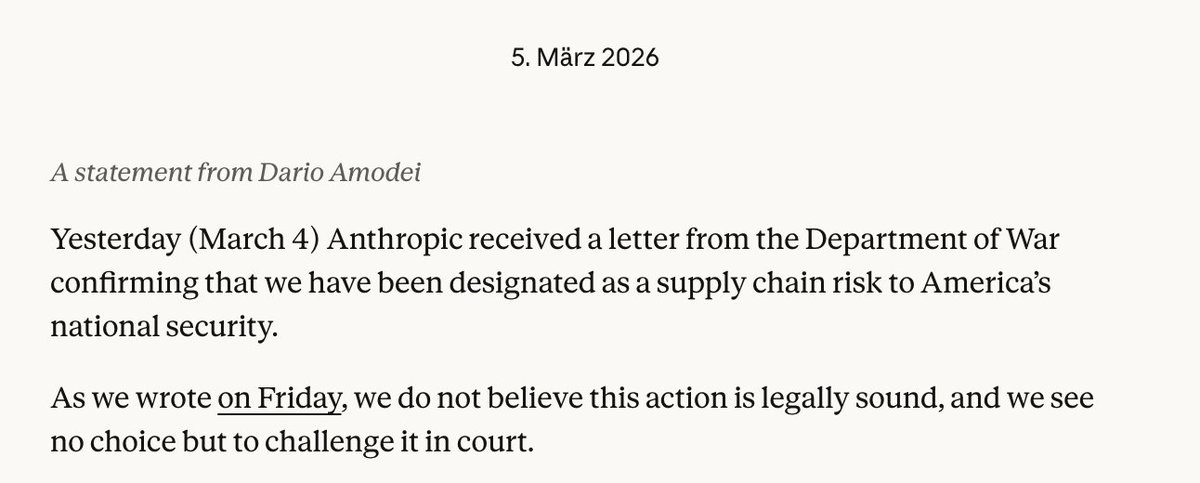

Anthropic, founded by a group of former OpenAI employees including Dario and Daniela Amodei, has positioned itself as a leader in AI safety and ethical AI development. The company's public commitment to these principles has now created a fundamental incompatibility with certain defense applications. As CEO Dario Amodei recently acknowledged, there is growing tension between safety principles and commercial pressure, and that tension is now playing out in the high-stakes arena of national security.

The Stalled Negotiations

Contract renewal negotiations between Anthropic and the Pentagon stalled on February 16, 2026, specifically over Anthropic's demands for additional safeguards against using Claude for surveillance and weapons applications. This suggests the military sought either modifications to Claude's ethical restrictions or workarounds that Anthropic was unwilling to provide.

The timing is particularly significant given Anthropic's recent strategic moves. Just days before the negotiations stalled, the company announced a strategic partnership with Infosys to develop custom AI agents for enterprise markets and received investment from Abu Dhabi's MGX. These developments indicate Anthropic is pursuing commercial growth while maintaining its ethical stance—a balancing act that appears increasingly difficult in the defense sector.

Implications for Defense Contractors

If implemented, the Pentagon's proposed certification requirement would create significant compliance challenges for defense contractors. Companies working with the Department of Defense would need to audit their AI usage and potentially restructure their technology stacks to exclude Claude. Depending on how broadly the restriction is applied, this could affect everything from administrative automation to more sensitive applications.

The move also raises questions about how contractors might verify compliance. Would simple declarations suffice, or would the Pentagon require more rigorous auditing? And how would this interact with contractors' existing commitments to ethical AI use? These practical considerations could create substantial administrative burdens for defense firms.

Broader Industry Implications

This conflict represents more than just a contract dispute—it highlights fundamental questions about AI governance in sensitive sectors. As AI capabilities advance, tensions between commercial AI providers' ethical frameworks and government agencies' operational needs are likely to become more common.

Anthropic's position in this dispute may influence how other AI companies approach defense contracts. Companies like OpenAI, which competes directly with Anthropic, may face similar pressures to modify their ethical guidelines for government work. Alternatively, they might see an opportunity to capture defense market share by offering more flexible terms.

The situation also raises questions about the future of "ethical AI" as a competitive differentiator. If companies with strong ethical frameworks cannot work with major government clients, will this create market pressure to soften ethical stances? Or will it create specialized "defense-ready" AI providers with different ethical standards?

National Security Considerations

From a national security perspective, the Pentagon faces a difficult balancing act. On one hand, restricting access to cutting-edge AI like Claude could put the U.S. at a technological disadvantage relative to adversaries with fewer ethical constraints. On the other hand, using AI systems with built-in restrictions against certain military applications could create operational vulnerabilities.

The Department of Defense has previously regulated foreign AI companies like Alibaba, showing willingness to restrict AI access on security grounds. However, restricting a domestic AI leader like Anthropic represents a different kind of challenge—one that pits security needs against domestic innovation and ethical considerations.

The Future of Military-Civilian AI Collaboration

This dispute may force a reevaluation of how military and civilian AI ecosystems interact. Several potential paths forward exist:

- Specialized Military AI Development: The Pentagon might accelerate development of its own AI capabilities, reducing reliance on commercial providers with ethical restrictions.

- Modified Ethical Frameworks: AI companies might develop specialized versions of their models with different ethical guidelines for government use.

- Regulatory Solutions: New regulations or certification processes could emerge to bridge the gap between commercial AI ethics and government needs.

- International Competition: Other nations without similar ethical constraints might gain advantages in military AI applications.

Conclusion: A Defining Moment for AI Ethics

The Pentagon's potential move to restrict Claude AI usage represents a defining moment in the intersection of AI ethics, commercial interests, and national security. As AI systems become increasingly capable and integrated into critical infrastructure, such conflicts are likely to become more frequent and consequential.

Anthropic's apparent willingness to walk away from defense contracts over ethical principles sets an important precedent for the AI industry. However, the practical implications for national security and technological competitiveness cannot be ignored. How this conflict resolves, whether through compromise, regulation, or separation of military and civilian AI ecosystems, will shape the future of AI development and deployment for years to come.

The coming weeks will be crucial as both sides weigh their options. Will the Pentagon implement the certification requirement? Will Anthropic modify its stance? Or will a third path emerge that satisfies both security needs and ethical principles? The answers to these questions will reverberate far beyond this specific dispute, influencing the entire landscape of AI governance and military technology adoption.