Perplexity AI reported a 3x throughput improvement for 70B-parameter large language models running on Nvidia Blackwell GPUs. The gain, achieved through FP4 quantization and custom memory scheduling, targets latency-sensitive search workloads where every millisecond matters.

Key facts

- 3x throughput gain claimed for 70B models on Blackwell.

- FP4 quantization and custom memory scheduling cited as methods.

- Perplexity operates its own inference stack.

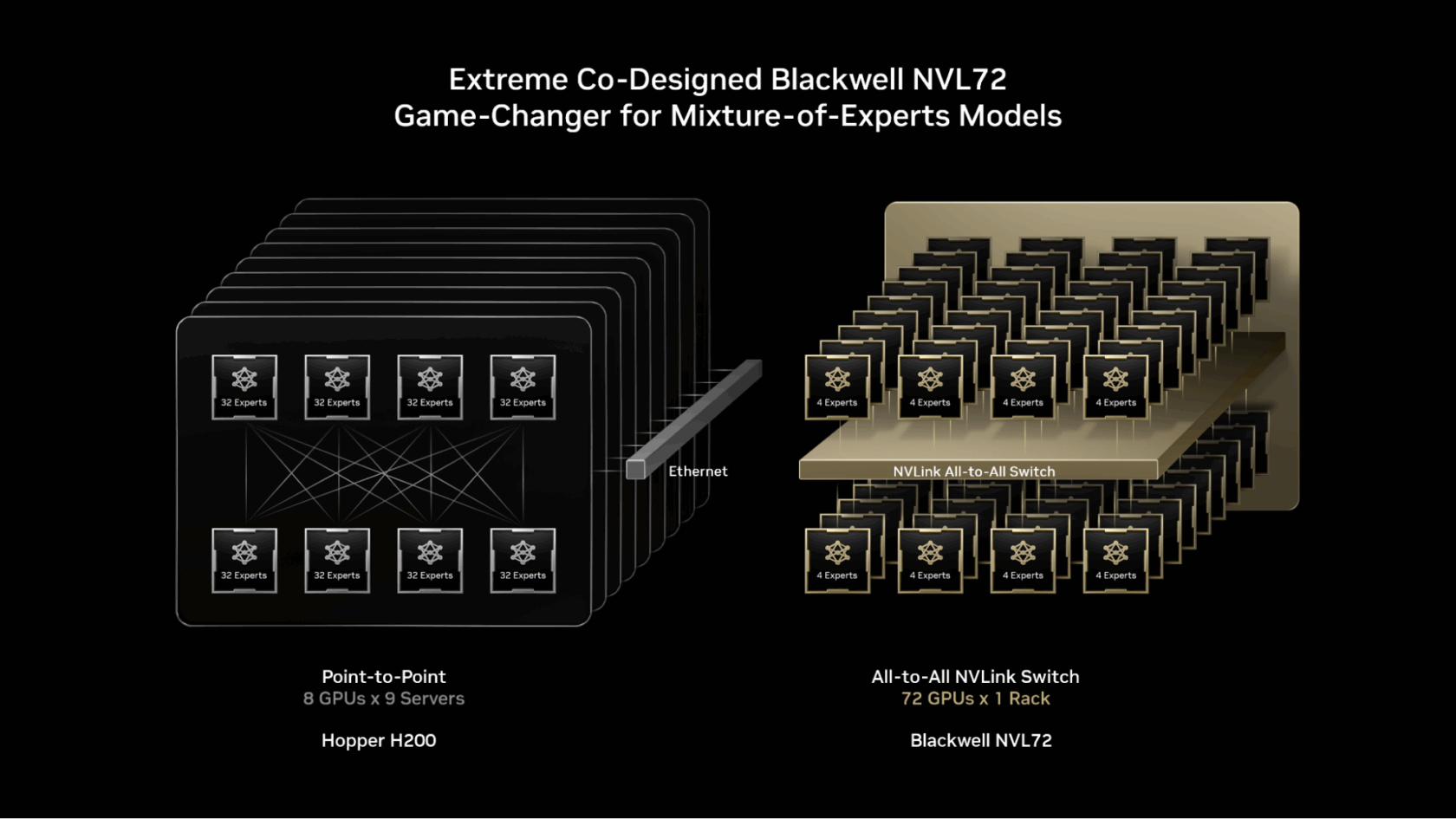

- Nvidia markets Blackwell at 2x over Hopper for transformers.

- Perplexity did not disclose GPU count or capex.

Perplexity AI claims a 3x inference throughput improvement for 70B-parameter models on Nvidia Blackwell GPUs, according to a company announcement highlighted by TipRanks. The gains come from a combination of FP4 quantization and custom memory scheduling that reduces KV-cache pressure, enabling higher batch sizes without degrading latency.

Why this matters more than the press release suggests

Perplexity operates its own inference stack rather than relying solely on third-party APIs like OpenAI or Anthropic. This infrastructure bet is central to its competitive positioning: the company has long argued that vertical integration from search index to model inference lets it optimize across the stack. The Blackwell numbers are the first concrete evidence that thesis is paying off.

The 3x figure is notable because it exceeds typical Blackwell uplift claims. Nvidia itself has marketed Blackwell as delivering 2x inference performance over Hopper for transformer models [per Nvidia's GTC 2026 presentations]. Perplexity's additional 1x suggests software-level optimizations — likely the FP4 support and custom scheduling — that go beyond what Nvidia's reference stack provides.

Perplexity did not disclose the specific number of Blackwell GPUs deployed or the total capital expenditure for the rollout. The company has previously stated it runs its own GPU clusters, but has not published cluster sizes or utilization rates.

Context and comparisons

The announcement comes amid a broader infrastructure push by AI-native search companies. Google Cloud, which competes with Perplexity through its Vertex AI and Gemini APIs, has also highlighted Blackwell-based gains for its own models [per Google Cloud's May 2026 blog posts]. But Google's advantage is scale and proprietary TPU hardware; Perplexity's is lean optimization for a single use case.

Perplexity's approach mirrors what companies like Groq and Cerebras have done with custom inference hardware, but on Nvidia's general-purpose GPUs. The question is whether the 3x gain holds at production scale across diverse query patterns, not just benchmark workloads.

What to watch

Watch for independent benchmarks from MLPerf Inference or similar third-party evaluations. Perplexity has not submitted to MLPerf for its Blackwell deployment. Also watch for whether Perplexity discloses GPU counts and utilization rates in its next quarterly update — those numbers would reveal whether the throughput gain translates to real cost savings or is a peak-performance lab result.

What to watch

Watch for MLPerf Inference submissions from Perplexity for Blackwell, which would provide third-party validation. Also watch for whether Perplexity discloses GPU cluster size and utilization in its next public update — key to assessing whether the 3x gain is real-world or lab-only. Competitors like Groq and Cerebras may respond with their own Blackwell benchmarks.