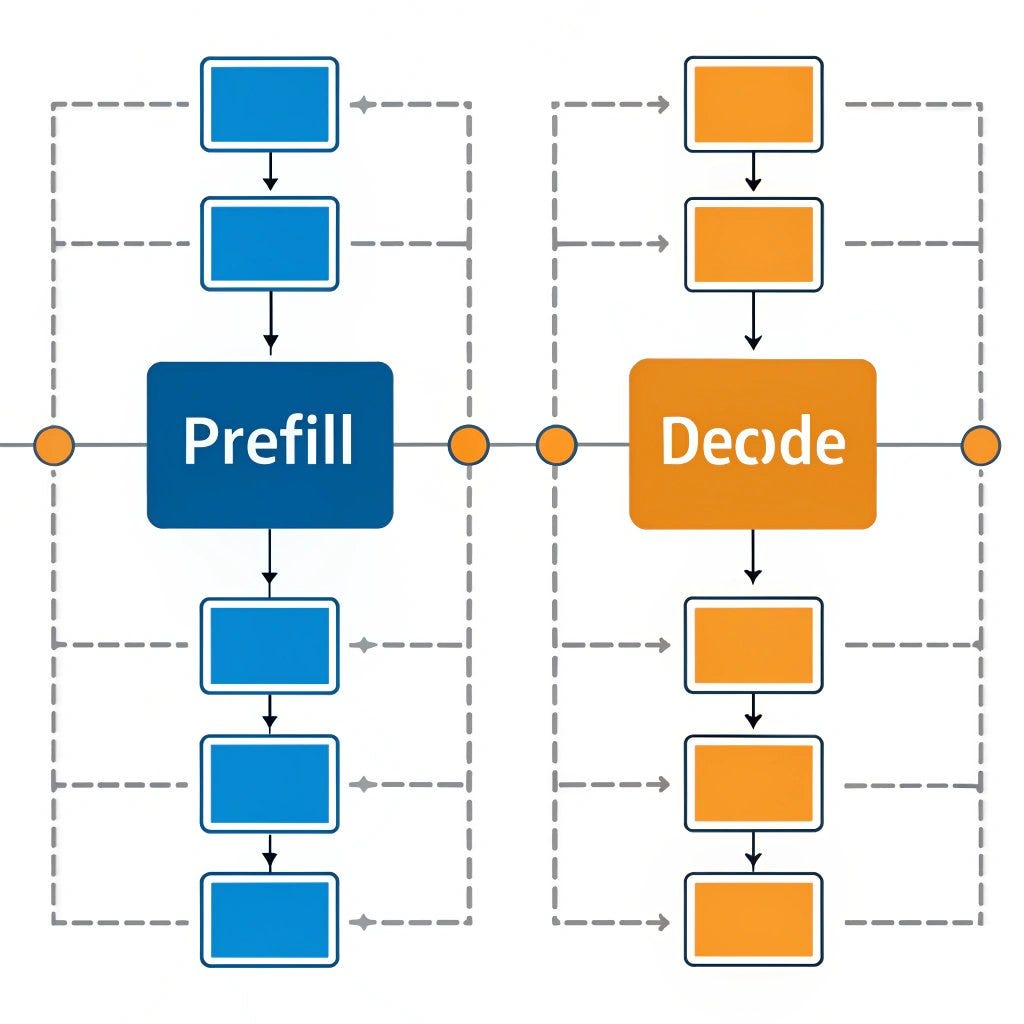

A new research paper, highlighted by AI researcher Rohan Paul, makes a notable claim: the heaviest part of large language model (LLM) inference, the prefill stage, may become portable through a "Prefill-as-a-Service" (PaaS) architecture. The core proposal is to decouple the computationally intensive prefill phase from the token-by-token decoding phase, which could change how LLMs are deployed for latency-sensitive applications.

Key Takeaways

- A research paper proposes a "Prefill-as-a-Service" architecture to separate the heavy prefill computation from the lighter decoding phase in LLM inference.

- This could enable new deployment models where resource-constrained devices handle only the decoding step.

What Happened: Decoupling the Inference Bottleneck

The source is a retweet referencing a research paper. The key claim centers on the concept of "Prefill-as-a-Service." In standard autoregressive LLM inference (like ChatGPT's text generation), there are two main phases:

- Prefill Phase: The model processes the entire input prompt (user query + context) in one forward pass through the neural network, computing and storing a key-value (KV) cache entry for every prompt token. Because all prompt tokens are processed in parallel as one large batch, this phase is computationally heavy and typically compute-bound.

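- Decoding Phase: The model generates tokens one at a time, performing a much smaller forward pass for each new token, conditioned on the cached KV states from the prefill. Each step needs far less computation but is latency-critical, and it is typically limited by memory bandwidth because every step re-reads the model weights and the growing KV cache.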
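
The two phases can be illustrated with a toy, single-head attention layer in plain Python. This is a minimal sketch, not the paper's method: the `project` function is a made-up stand-in for learned Q/K/V projections, there is only one layer, and the names `prefill` and `decode_step` are chosen here for illustration.

```python
import math

def attend(query, keys, values):
    """Softmax attention of one query over all cached keys and values."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(dim)]

def project(token_vec):
    """Toy stand-in for learned Q/K/V projections (assumption: fixed scalings)."""
    q = [1.0 * x for x in token_vec]
    k = [0.5 * x for x in token_vec]
    v = [2.0 * x for x in token_vec]
    return q, k, v

def prefill(prompt_vecs):
    """Process the whole prompt; return the KV cache and the last output."""
    cache = {"keys": [], "values": []}
    out = None
    # A real model handles all prompt tokens in one batched pass;
    # the loop here only keeps the toy version easy to read.
    for vec in prompt_vecs:
        q, k, v = project(vec)
        cache["keys"].append(k)
        cache["values"].append(v)
        out = attend(q, cache["keys"], cache["values"])
    return cache, out

def decode_step(cache, token_vec):
    """Process one new token, reusing and extending the existing cache."""
    q, k, v = project(token_vec)
    cache["keys"].append(k)
    cache["values"].append(v)
    return attend(q, cache["keys"], cache["values"])
```

The point of the sketch is that `decode_step` never revisits earlier tokens: everything it needs from the prompt is already in `cache`, which is why the cache is the natural hand-off point between the two phases.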

The paper's central hypothesis is that these two phases can be architecturally separated. A powerful, centralized service (the "Prefill-as-a-Service" provider) would handle the initial heavy lifting: processing the prompt and computing the initial KV cache. This computed state could then be transmitted to a client device (a phone, laptop, or edge device), which would only need to run the lightweight, iterative decoding phase to produce the final output. The client would still need a local copy of the model weights, since each decoding step is a full forward pass for a single token.
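
To make the hand-off concrete, here is a hedged sketch of how a service might serialize a KV cache and how a client might restore it. The wire format (the `KVC1` tag, the header layout, float32 values) is entirely hypothetical and invented for this example; the tweet does not describe the paper's actual transfer format.

```python
import struct
from array import array

MAGIC = b"KVC1"  # hypothetical format tag, not a real standard

def pack_kv_cache(keys, values):
    """Serialize per-token key and value vectors into bytes as float32."""
    num_tokens = len(keys)
    dim = len(keys[0]) if num_tokens else 0
    header = MAGIC + struct.pack("<II", num_tokens, dim)
    # array("f") uses the machine's native byte order; a real format
    # would pin the byte order explicitly.
    flat = array("f")
    for row in keys:
        flat.extend(row)
    for row in values:
        flat.extend(row)
    return header + flat.tobytes()

def unpack_kv_cache(blob):
    """Restore (keys, values) from bytes produced by pack_kv_cache."""
    if blob[:4] != MAGIC:
        raise ValueError("not a KV cache payload")
    num_tokens, dim = struct.unpack("<II", blob[4:12])
    flat = array("f")
    flat.frombytes(blob[12:])
    if len(flat) != 2 * num_tokens * dim:
        raise ValueError("payload size does not match header")
    rows = [list(flat[i * dim:(i + 1) * dim]) for i in range(2 * num_tokens)]
    return rows[:num_tokens], rows[num_tokens:]
```

In this sketch the service would call `pack_kv_cache` after prefill and the client would call `unpack_kv_cache` before its first decoding step. A real cache also spans many layers and attention heads, so the payload would be correspondingly larger.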

The Technical Promise: Portability and Lower Latency

The proposed separation targets the fundamental bottleneck in low-latency LLM serving. Today, to get fast token generation, the entire model (often tens or hundreds of billions of parameters) must be served on high-end GPUs with massive memory bandwidth. The PaaS model suggests you could keep those expensive resources solely for the prefill step, which is inherently batchable and less latency-sensitive. The decoding, which demands ultra-low latency for a good user experience, could be offloaded to cheaper, more distributed hardware.
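
A back-of-envelope roofline estimate shows why the two phases stress hardware differently. The sketch below rests on common simplifying assumptions, not figures from the paper: a dense model costs roughly 2 FLOPs per parameter per token, weights are read from memory once per forward pass, and attention FLOPs and KV cache reads are ignored. The hardware numbers in the usage note are illustrative round values.

```python
def arithmetic_intensity(params, tokens_per_pass, bytes_per_param=2):
    """FLOPs performed per byte of weights read, for one forward pass."""
    flops = 2 * params * tokens_per_pass   # ~2 FLOPs per parameter per token
    bytes_moved = params * bytes_per_param  # weights read once per pass
    return flops / bytes_moved

def bound_by(intensity, peak_flops, mem_bandwidth):
    """Classify a workload against the hardware's roofline ridge point."""
    ridge = peak_flops / mem_bandwidth  # FLOPs per byte the chip can sustain
    return "compute" if intensity >= ridge else "memory bandwidth"
```

Under these assumptions, prefilling a 4096-token prompt with 16-bit weights has an intensity of 4096 FLOPs per byte, while a single decoding step has an intensity of 1. On a hypothetical accelerator with 1e15 FLOP/s of compute and 3e12 bytes/s of memory bandwidth (ridge point around 333), `bound_by` classifies the prefill as compute-bound and the decoding step as memory-bandwidth-bound.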

Potential implications of this architecture include:

- Portable High-Performance LLMs: A smartphone could generate text at speeds rivaling a cloud API, after receiving a pre-computed KV cache from a central service.

- Reduced Cloud Compute Cost: Cloud providers could optimize infrastructure specifically for the prefill workload, potentially improving overall hardware utilization.

- New Privacy Paradigms: Sensitive prompts could be prefilled on a trusted device, with only the encrypted KV cache sent to a cloud service for decoding, or vice-versa, depending on the trust model.

Key Unanswered Questions and Challenges

The source material is a tweet, not the full paper, so critical technical details are absent. The feasibility of PaaS hinges on several major engineering and research challenges:

- KV Cache Size & Transfer Latency: The KV cache for a long context (e.g., 128K tokens) can be enormous, ranging from gigabytes to tens of gigabytes depending on the model's layer count, number of KV heads, and numeric precision. Transmitting this cache over a network could introduce latency that negates any decoding speed gains. The paper would need to address this, for example through KV cache compression or quantization.

- Decoupling Overhead: The prefill and decoding phases are tightly integrated in current systems. Separating them cleanly may introduce significant synchronization and state management overhead.

- Batch Efficiency vs. Latency: Cloud prefill services rely on batching many user requests to be efficient. This batching inherently increases latency for individual requests, conflicting with the goal of fast interactive systems.
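
The cache-size concern is easy to quantify with the standard formula for KV cache size. The sketch below is a worked example under assumed values: the model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) and the 1 Gbit/s link are hypothetical and not taken from the paper.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, num_tokens,
                   bytes_per_value=2):
    """Size of the KV cache for one sequence, in bytes."""
    # Factor 2: one key vector and one value vector per token,
    # for every layer and every KV head.
    return (2 * num_layers * num_kv_heads * head_dim
            * num_tokens * bytes_per_value)

def transfer_seconds(num_bytes, link_bits_per_second):
    """Idealized transfer time, ignoring protocol overhead and latency."""
    return num_bytes * 8 / link_bits_per_second

# Hypothetical model shape and a 128K-token (131072) context.
size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                      num_tokens=131072)
seconds = transfer_seconds(size, 1e9)  # assumed 1 Gbit/s link
```

With these assumed values the cache is 16 GiB, and moving it over the 1 Gbit/s link takes a bit over two minutes even before protocol overhead. Shorter prompts scale down linearly, which is why the viability of the approach depends heavily on context length and compression.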

gentic.news Analysis

This proposal sits at the intersection of two dominant trends in the 2025-2026 AI infrastructure landscape: specialized inference optimization and the push toward edge AI. It directly confronts the problem highlighted by companies like Groq with their LPU-based systems and MatX with their attention-specialized hardware: the memory bandwidth wall for sequential decoding. If viable, PaaS would be a software-level solution to a hardware problem, similar in spirit to speculative decoding but targeting system architecture rather than the algorithm itself.

The concept aligns with a broader industry movement to decompose monolithic AI workloads. We've seen this in training with frameworks like DeepSpeed and its Zero Redundancy Optimizer (ZeRO), and in inference with the separation of embedding lookups, MoE routing, and now potentially prefill/decode. This paper's claim, if substantiated, would be a logical next step: treating different computational graphs within inference as independent microservices.

However, it directly challenges the prevailing direction of on-device LLMs championed by Apple (with its MLX framework), Google (Gemini Nano), and Qualcomm. Their bet is on fitting entire, smaller models onto devices. PaaS argues for a hybrid approach: heavy lifting in the cloud, lightweight streaming on device. The winner will be determined by the trade-off between KV cache transfer size and the cost/performance of shipping ever-larger parameter counts to edge devices. This paper is likely an early academic probe into that design space, and its real-world impact will depend on solving the formidable data transfer problem.

Frequently Asked Questions

What is the "prefill" phase in LLM inference?

The prefill phase is the initial, single forward pass of the entire input prompt through the large language model. It computes the initial context and populates the Key-Value (KV) cache, which stores intermediate states for all prompt tokens. This phase is computationally intensive and memory-heavy because it processes the full context in parallel.

How does "Prefill-as-a-Service" differ from a standard LLM API?

A standard LLM API (like OpenAI's) performs both the prefill and decoding phases on the same centralized servers. With Prefill-as-a-Service, only the prefill phase is done centrally. The resulting computational state (KV cache) is sent to a client, which locally handles the lighter, sequential decoding phase to generate the final output tokens.

What is the biggest technical hurdle for Prefill-as-a-Service?

The primary challenge is the size of the Key-Value (KV) cache that must be transferred from the service to the client. For models with long context windows, this cache can be multiple gigabytes. Network transfer latency for this amount of data could easily outweigh the benefits of fast local decoding, unless highly efficient cache compression techniques are invented.

Could this make running LLMs on phones faster?

In theory, yes. If a phone receives a pre-computed KV cache from a cloud service, it could generate subsequent tokens very quickly using its local NPU or GPU, as it would only be running the small decoding step. The speed gain would depend entirely on how quickly the cache can be delivered versus the time saved in local generation.
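
That trade-off can be written as a simple latency comparison. The model below is a rough sketch with hypothetical function names and made-up numbers: it assumes the same cloud prefill time in both setups and ignores per-token network latency when the cloud streams its output.

```python
def hybrid_latency(prefill_s, transfer_s, new_tokens, local_tokens_per_s):
    """Cloud prefill, cache transfer, then decoding on the device."""
    return prefill_s + transfer_s + new_tokens / local_tokens_per_s

def cloud_latency(prefill_s, new_tokens, cloud_tokens_per_s):
    """Prefill and decoding both performed by the cloud service."""
    return prefill_s + new_tokens / cloud_tokens_per_s

# Made-up example: 1 s prefill, 4 s cache transfer, 500 generated tokens,
# 100 tokens/s on the device versus 50 tokens/s from a shared cloud API.
hybrid = hybrid_latency(1.0, 4.0, 500, 100.0)
cloud = cloud_latency(1.0, 500, 50.0)
```

In this made-up scenario the hybrid path finishes in 10 seconds against 11 seconds for the all-cloud path. The hybrid path only comes out ahead when local decoding is faster than the cloud's and the response is long enough to amortize the transfer; with a larger cache or a shorter response the result flips.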