A new player in the AI inference space is making waves with claims of unprecedented performance on Apple Silicon. RunAnywhere, a Y Combinator W26 startup founded by Sanchit and Shubham, has developed MetalRT, a custom inference engine that it says outperforms established tools such as llama.cpp, Apple's MLX, Ollama, and sherpa-onnx across multiple AI modalities.

The Performance Breakthrough

According to RunAnywhere's published benchmarks, run on an M4 Max with 64 GB of RAM, MetalRT is faster than the alternatives in the three workloads that make up a voice pipeline:

Large Language Model Inference:

- Qwen3-0.6B: 658 tokens/second (1.19x faster than MLX, 2.23x faster than llama.cpp)

- Qwen3-4B: 186 tokens/second (1.09x faster than MLX, 2.14x faster than llama.cpp)

- LFM2.5-1.2B: 570 tokens/second (1.12x faster than MLX, 1.53x faster than llama.cpp)

- Time-to-first-token: Just 6.6 milliseconds
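The speedup ratios also let you back out the throughput each baseline must have reached, plus MetalRT's time per generated token. The Python sketch below only does that division on the figures quoted above. It assumes each ratio is MetalRT throughput divided by baseline throughput; the results are derived estimates, not independently measured numbers, and the helper name is illustrative.

```python
# Figures quoted above: (MetalRT tokens/s, speedup vs MLX, speedup vs llama.cpp).
# Assumption: each speedup = MetalRT throughput / baseline throughput.
RESULTS = {
    "Qwen3-0.6B": (658, 1.19, 2.23),
    "Qwen3-4B": (186, 1.09, 2.14),
    "LFM2.5-1.2B": (570, 1.12, 1.53),
}


def implied_baselines(tok_s: float, vs_mlx: float, vs_llama: float) -> dict:
    """Back out baseline throughputs and MetalRT's per-token decode time."""
    return {
        "mlx_tok_s": tok_s / vs_mlx,
        "llama_cpp_tok_s": tok_s / vs_llama,
        "ms_per_token": 1000.0 / tok_s,
    }


for model, figures in RESULTS.items():
    est = implied_baselines(*figures)
    print(
        f"{model:12s} MLX ~{est['mlx_tok_s']:.0f} tok/s, "
        f"llama.cpp ~{est['llama_cpp_tok_s']:.0f} tok/s, "
        f"MetalRT {est['ms_per_token']:.2f} ms/token"
    )
```

For example, the Qwen3-0.6B row implies MLX at about 553 tokens/s and llama.cpp at about 295 tokens/s, with MetalRT spending roughly 1.5 ms per generated token.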

Speech-to-Text Processing:

MetalRT achieves what the developers call "714x real-time" transcription—processing 70 seconds of audio in just 101 milliseconds. This represents a 4.6x speed improvement over mlx-whisper.
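"Real-time factor" here means seconds of audio transcribed per second of compute. Dividing the two quoted figures gives about 693x rather than 714x (714x would correspond to roughly 72 seconds of audio in 101 ms); the quoted numbers do not say which one is rounded. The Python sketch below reproduces that arithmetic and the mlx-whisper time implied by the 4.6x ratio, assuming the ratio compares wall-clock transcription times. The constant and function names are illustrative.

```python
AUDIO_SECONDS = 70.0          # length of the test clip, as quoted
METALRT_SECONDS = 0.101       # MetalRT transcription time, as quoted
SPEEDUP_VS_MLX_WHISPER = 4.6  # assumed to be a ratio of wall-clock times


def real_time_factor(audio_s: float, compute_s: float) -> float:
    """Seconds of audio transcribed per second of compute."""
    return audio_s / compute_s


rtf = real_time_factor(AUDIO_SECONDS, METALRT_SECONDS)
mlx_whisper_ms = METALRT_SECONDS * SPEEDUP_VS_MLX_WHISPER * 1000
print(f"MetalRT: {rtf:.0f}x real-time")                    # MetalRT: 693x real-time
print(f"implied mlx-whisper time: ~{mlx_whisper_ms:.0f} ms")  # implied mlx-whisper time: ~465 ms
```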

Text-to-Speech Synthesis:

MetalRT completes synthesis in 178 milliseconds, 2.8x faster than both mlx-audio and sherpa-onnx.

The Technical Approach: Going Straight to Metal

What sets MetalRT apart is its architectural approach. Rather than building on existing frameworks, the team went "straight to Metal"—Apple's low-level graphics and compute API. By writing custom Metal shaders and eliminating framework overhead, they've created an inference engine specifically optimized for Apple Silicon's unified memory architecture and GPU capabilities.

"We built this because demoing on-device AI is easy but shipping it is brutal," the founders explain in their launch announcement. "Voice is the hardest test: you're chaining STT, LLM, and TTS sequentially, and if any stage is slow, the user feels it."

RCLI: The Complete On-Device Voice AI Pipeline

To demonstrate their technology's capabilities, RunAnywhere has open-sourced RCLI (RunAnywhere Command Line Interface), which they describe as "the fastest end-to-end voice AI pipeline on Apple Silicon." The tool enables microphone-to-spoken-response interactions entirely on-device, requiring no cloud services or API keys.

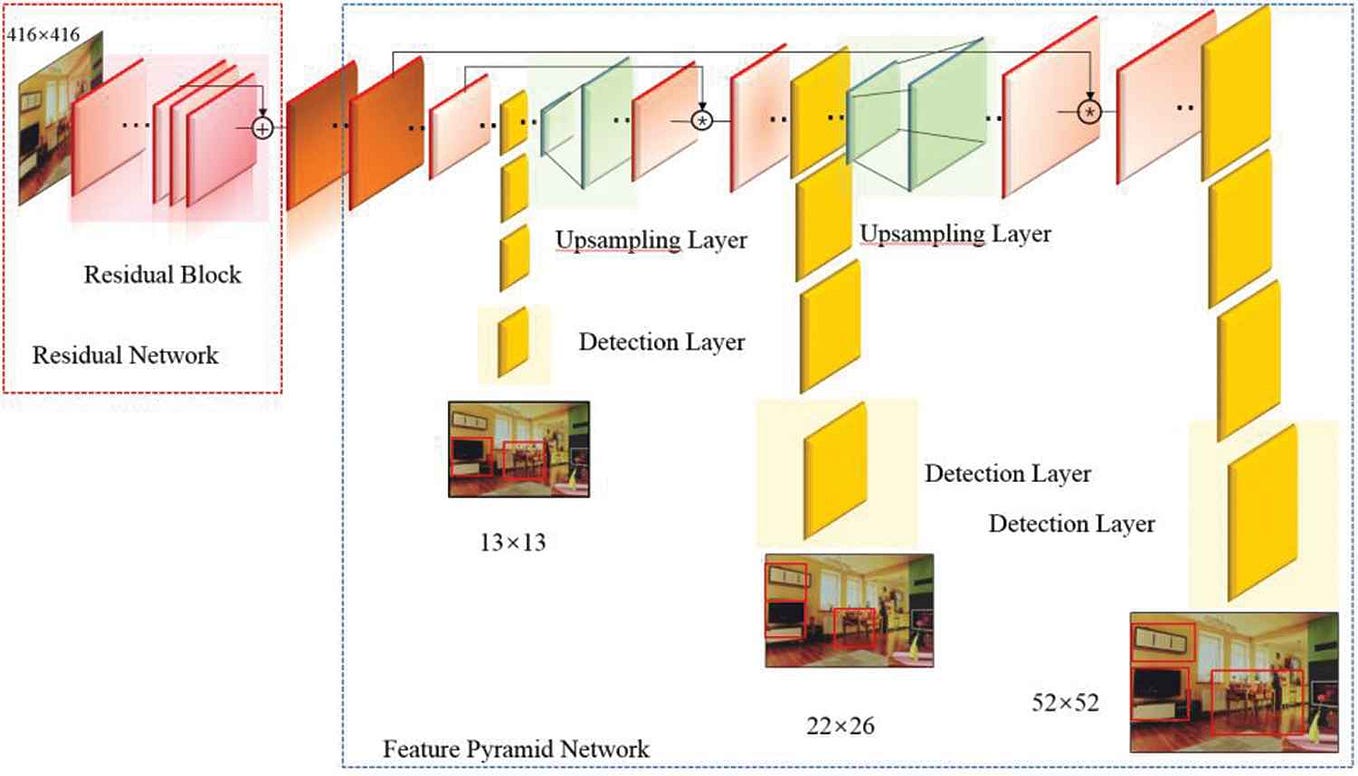

RCLI chains the following components into a single pipeline:

- Voice Activity Detection using Silero

- Speech-to-Text with Zipformer for streaming recognition, plus Whisper and Parakeet for offline transcription

- LLM inference with Qwen3, LFM2, and Qwen3.5 models, using KV cache continuation and Flash Attention

- Text-to-Speech with double-buffered sentence-level synthesis

- Tool Calling with native LLM tool call formats

- Multi-turn Memory with sliding window conversation history
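A sliding-window history keeps only the most recent turns in the prompt, so context length (and therefore prompt-processing time) stays bounded as a conversation grows. The Python sketch below is a minimal illustration of the idea, not RCLI's implementation; the `SlidingHistory` class, the turn limit, and the role/content message format are assumptions chosen for the example.

```python
from collections import deque


class SlidingHistory:
    """Keep the system prompt plus the last `max_turns` user/assistant exchanges."""

    def __init__(self, system_prompt: str, max_turns: int = 4):
        self.system = {"role": "system", "content": system_prompt}
        # Each entry is one (user, assistant) exchange; the deque drops the
        # oldest exchange automatically once maxlen is reached.
        self.turns = deque(maxlen=max_turns)

    def add_exchange(self, user_text: str, assistant_text: str) -> None:
        self.turns.append((user_text, assistant_text))

    def messages(self, new_user_text: str) -> list:
        """Build the message list for the next LLM call."""
        msgs = [self.system]
        for user_text, assistant_text in self.turns:
            msgs.append({"role": "user", "content": user_text})
            msgs.append({"role": "assistant", "content": assistant_text})
        msgs.append({"role": "user", "content": new_user_text})
        return msgs


history = SlidingHistory("You are a voice assistant.", max_turns=2)
for i in range(3):
    history.add_exchange(f"question {i}", f"answer {i}")
prompt = history.messages("question 3")
print([m["content"] for m in prompt])
# ['You are a voice assistant.', 'question 1', 'answer 1', 'question 2', 'answer 2', 'question 3']
```

One trade-off to keep in mind: trimming from the front changes the prompt prefix, so an engine that reuses its KV cache between turns generally has to re-process the shortened history whenever an old turn is evicted.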

The system supports 43 macOS actions via voice commands and includes local RAG (Retrieval-Augmented Generation) capabilities for querying documents. Users can interact through push-to-talk, continuous listening, or text-based commands.
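Tool calling means the LLM emits a structured request that the host program parses and maps to a local action. The Python sketch below assumes the Hermes-style format used by Qwen chat templates, where a JSON object with `name` and `arguments` keys is wrapped in `<tool_call>` tags; other model families format tool calls differently. The `ACTIONS` registry and its action names are hypothetical stand-ins for RCLI's macOS actions, and nothing is actually executed on the system.

```python
import json
import re

# Hypothetical registry standing in for RCLI's macOS actions.
# Real handlers would call into macOS; these only return a description.
ACTIONS = {
    "set_volume": lambda level: f"volume set to {level}",
    "open_app": lambda name: f"opening {name}",
}

# Matches <tool_call> {...} </tool_call> blocks (assumed Qwen/Hermes-style format).
TOOL_CALL_RE = re.compile(r"<tool_call>\s*(\{.*?\})\s*</tool_call>", re.DOTALL)


def dispatch_tool_calls(model_output: str) -> list:
    """Parse every tool call in the model output and run the matching action."""
    results = []
    for payload in TOOL_CALL_RE.findall(model_output):
        call = json.loads(payload)
        handler = ACTIONS.get(call["name"])
        if handler is None:
            results.append(f"unknown action: {call['name']}")
            continue
        results.append(handler(**call.get("arguments", {})))
    return results


output = '<tool_call>\n{"name": "open_app", "arguments": {"name": "Safari"}}\n</tool_call>'
print(dispatch_tool_calls(output))  # ['opening Safari']
```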

The Latency Challenge in Voice AI

The founders highlight a critical insight that drove their development: "The thing that's hard to solve is latency compounding. In a voice pipeline, you're stacking three models in sequence. If each adds 200ms, you're at 600ms before the user hears a word, and that feels broken."
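The founders' arithmetic is additive: when stages run strictly one after another, the user waits for the sum of their latencies. The Python sketch below reproduces the 3 × 200 ms example from the quote and, as an illustrative simplification that assumes equal stage costs, shows the per-stage budget needed to reach a 200 ms total.

```python
# Per-stage latency in milliseconds, using the founders' illustrative 200 ms each.
STAGES_MS = {"stt": 200, "llm": 200, "tts": 200}
TARGET_MS = 200  # end-to-end target, matching the sub-200 ms figure RunAnywhere reports


def sequential_latency_ms(stages: dict) -> int:
    """Time until the user hears audio when each stage waits for the previous one."""
    return sum(stages.values())


total = sequential_latency_ms(STAGES_MS)
per_stage_budget = TARGET_MS / len(STAGES_MS)  # assumes the budget is split evenly
print(f"{total} ms before the first audible word")                    # 600 ms before the first audible word
print(f"{per_stage_budget:.0f} ms per stage for a {TARGET_MS} ms total")  # 67 ms per stage for a 200 ms total
```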

This compounding effect, the founders argue, is why many teams "fall back to cloud APIs not because local models are bad, but because local inference infrastructure is" inadequate for delivering responsive user experiences. By optimizing every stage of the pipeline and running the stages on three concurrent threads sharing the Metal GPU, RunAnywhere reports sub-200ms end-to-end latency.
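Running the stages on separate threads connected by queues lets a later stage start on partial output, for example synthesizing the first sentence of a reply while the LLM is still generating the next one, so audio can begin before the whole response exists. The Python sketch below illustrates that pipelining pattern with three threads and placeholder stage functions that only transform strings; it is an assumption-based illustration, not MetalRT or RCLI code, and all function names are hypothetical.

```python
import queue
import threading

DONE = object()  # sentinel telling the next stage that the stream has ended


def stt_stage(audio_chunks, out_q):
    # Placeholder STT: turn the utterance's audio chunks into one transcript.
    out_q.put(" ".join(audio_chunks))
    out_q.put(DONE)


def llm_stage(in_q, out_q):
    # Placeholder LLM: stream the reply one sentence at a time, handing each
    # sentence to TTS as soon as it is "generated".
    while (prompt := in_q.get()) is not DONE:
        for i in range(3):
            out_q.put(f"Sentence {i} about '{prompt}'.")
    out_q.put(DONE)


def tts_stage(in_q, spoken):
    # Placeholder TTS: "synthesize" each sentence as it arrives.
    while (sentence := in_q.get()) is not DONE:
        spoken.append(f"audio[{sentence}]")


def run_pipeline(audio_chunks):
    text_q = queue.Queue()
    sentence_q = queue.Queue(maxsize=2)  # at most two sentences waiting for TTS
    spoken = []
    threads = [
        threading.Thread(target=stt_stage, args=(audio_chunks, text_q)),
        threading.Thread(target=llm_stage, args=(text_q, sentence_q)),
        threading.Thread(target=tts_stage, args=(sentence_q, spoken)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return spoken


print(run_pipeline(["hello", "mac"]))
```

The bounded queue is the design choice worth noting: it lets the LLM run at most two sentences ahead of synthesis, which is loosely analogous to the double-buffered sentence-level TTS in RCLI's pipeline.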

Getting Started with RCLI

Installation is straightforward via either Homebrew or a direct shell script:

```bash
# Homebrew method
brew tap RunanywhereAI/rcli https://github.com/RunanywhereAI/RCLI.git
brew install rcli
rcli setup   # downloads ~1 GB of models
rcli         # interactive mode with push-to-talk

# Direct installation
curl -fsSL https://raw.githubusercontent.com/RunanywhereAI/RCLI/main/install.sh | bash
```

The setup step downloads approximately 1 GB of models, and RCLI requires macOS 13 or later on Apple Silicon (M1 or newer).
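Before running the installer, a quick check of the stated requirements (macOS 13 or later on Apple Silicon) can save a failed setup. The Python sketch below is an illustrative preflight check, not part of RCLI. It relies only on the standard `platform` module: `platform.system()` returns `"Darwin"` on macOS, `platform.machine()` returns `"arm64"` for a native Apple Silicon process, and `platform.mac_ver()[0]` holds the macOS version string (empty on other systems).

```python
import platform


def meets_rcli_requirements(system: str, machine: str, macos_version: str) -> bool:
    """Check the stated requirements: macOS 13+ on Apple Silicon (arm64)."""
    if system != "Darwin" or machine != "arm64":
        return False
    try:
        major = int(macos_version.split(".")[0])
    except ValueError:  # empty or malformed version string
        return False
    return major >= 13


if __name__ == "__main__":
    # Note: a Python interpreter running under Rosetta reports "x86_64" as the
    # machine, even on Apple Silicon hardware.
    ok = meets_rcli_requirements(
        platform.system(), platform.machine(), platform.mac_ver()[0]
    )
    print("requirements met" if ok else "requirements not met")
```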

Implications for On-Device AI Development

RunAnywhere's release comes at a pivotal moment in AI development. As privacy concerns grow and cloud costs remain significant, on-device AI offers compelling advantages. So far, however, performance limitations have constrained practical applications, particularly latency-sensitive ones like voice interfaces.

MetalRT's performance claims, if validated by independent testing, could accelerate the shift toward edge computing for AI applications. They would suggest that, with careful hardware-specific optimization, Apple Silicon devices, from MacBooks to future iPhone and iPad models, can serve as capable AI inference platforms without relying on cloud services.

The Competitive Landscape

RunAnywhere enters a competitive field that includes:

- llama.cpp: The widely used C/C++ inference engine that began as a port of Meta's LLaMA models and now supports many open-weight model families

- Apple MLX: Apple's own machine learning framework for Apple Silicon

- Ollama: A popular tool for running large language models locally

- sherpa-onnx: An open-source speech toolkit built on ONNX Runtime, covering speech recognition, text-to-speech, and voice activity detection

What distinguishes MetalRT is its singular focus on Apple hardware optimization. Whereas llama.cpp, Ollama, and sherpa-onnx run on many platforms and MLX is a general-purpose array framework, RunAnywhere has traded generality for maximum performance on a single hardware platform, a strategy that appears to be paying off, at least in the published benchmark results.

Looking Ahead

The open-sourcing of RCLI represents both a demonstration of capability and an invitation to the developer community. By providing a complete, working implementation of their technology, RunAnywhere enables developers to experience the performance improvements firsthand while potentially building their own applications on the MetalRT engine.

As AI continues its trajectory toward ubiquity, breakthroughs in inference efficiency like those claimed by RunAnywhere could prove as important as advances in model architecture. Faster, more efficient inference enables new applications, reduces costs, and brings sophisticated AI capabilities to devices without constant internet connectivity.

For now, developers interested in on-device AI for Apple platforms have a new tool to explore—one that promises to make "talk to your Mac" experiences not just possible, but pleasantly responsive.