A new, unidentified large language model with approximately 100 billion parameters has been listed on the model aggregation platform OpenRouter. The model's provider and official name are not disclosed, leading to immediate speculation within the AI community about its origin.

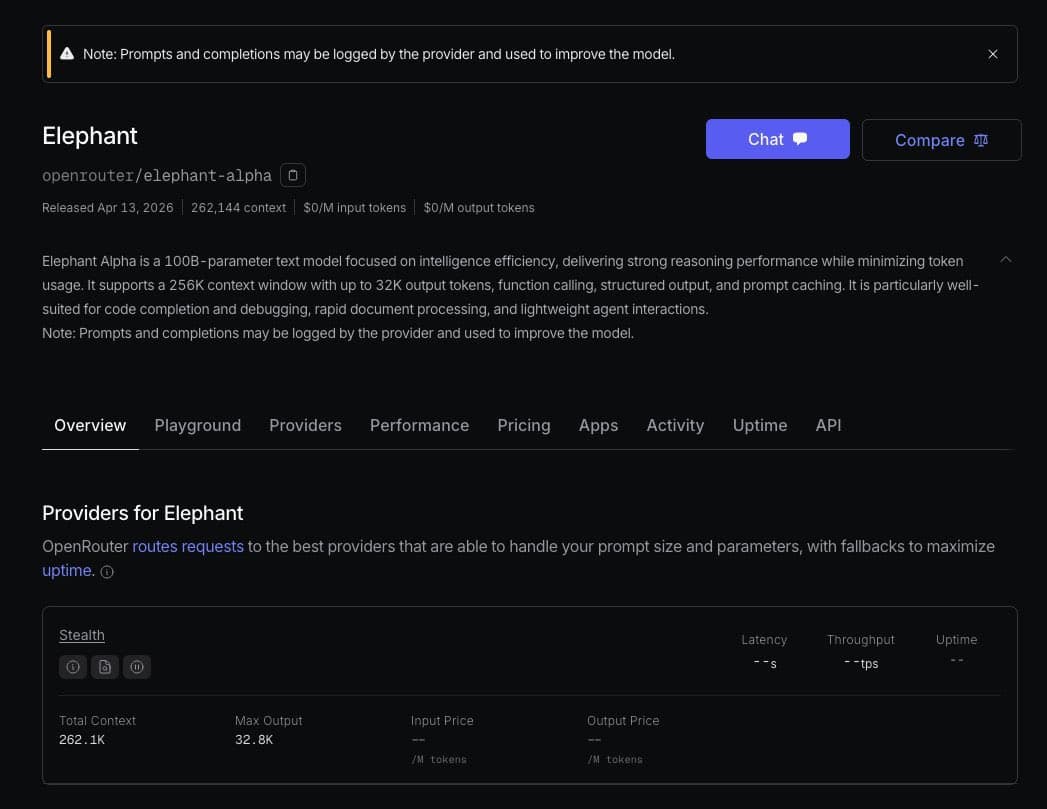

The model is accessible via the OpenRouter API under a generic identifier. Its sudden appearance, without any accompanying announcement from a major AI lab, is unusual and has fueled theories that it could be a test deployment or a "stealth" launch.

Key Takeaways

- A new, unannounced 100-billion-parameter AI model has appeared on the OpenRouter API platform.

- Its origin is unknown, but observers speculate it could be a variant from DeepSeek or an update to Kimi's code model.

What Happened

On April 15, 2026, the model was added to OpenRouter's roster of available inference endpoints. The platform's listing shows key specifications, including its ~100B parameter size and context window, but lacks attribution. This has led industry observers and tracking accounts like @intheworldofai to hypothesize about its source.

The primary speculation centers on two potential origins:

- DeepSeek V4 Lite: A potentially smaller, more efficient variant of DeepSeek's flagship DeepSeek-V3 model, which we covered at its launch in "DeepSeek-V3 Launches with 671B Parameters and MoE Architecture." A "Lite" version would align with a trend of releasing cost-optimized models for API distribution.

- Kimi K2.6 Code: An updated iteration of Moonshot AI's Kimi series, potentially with enhanced coding capabilities. This would follow Kimi's established focus on long-context applications.

Context

OpenRouter has become a critical hub for comparing and accessing a wide array of proprietary and open-weight models from different vendors. The appearance of unlabeled models is rare but not unprecedented; it sometimes precedes an official announcement as companies gauge performance or conduct limited testing.

The 100B-parameter class is a highly competitive segment, sitting between massive models (400B+ parameters), which are more capable but more expensive to serve, and smaller, faster models (7B to 70B parameters). It offers a balance of strong reasoning and manageable inference cost, making it a key battleground for API providers.
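To put rough numbers on that cost trade-off, here is a minimal sketch that estimates the memory needed just to hold a model's weights at different sizes. It assumes a dense model (a mixture-of-experts model stores all its weights but activates only a fraction per token) and ignores the KV cache, activations, and serving overhead, so treat the output as a lower bound rather than a deployment figure. The function name `weight_memory_gb` is ours, for illustration only.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


# Compare the size classes mentioned above at 16-bit and 4-bit precision.
for size in (7, 70, 100, 400):
    fp16 = weight_memory_gb(size, 16)
    int4 = weight_memory_gb(size, 4)
    print(f"{size:>4}B params: {fp16:6.0f} GB at 16-bit, {int4:6.0f} GB at 4-bit")
```

Under these assumptions, a 100B model needs about 200 GB for 16-bit weights, versus about 800 GB for a 400B model, which is one reason the mid-size class is cheaper to serve.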

gentic.news Analysis

The stealth listing is a notable data point in the ongoing, rapid-fire competition among Chinese AI labs. If the model is from DeepSeek, it would represent a strategic flanking maneuver. DeepSeek (深度求索) has been aggressively pursuing the open-source and API markets since the release of DeepSeek-V3. Releasing a 100B "Lite" version would allow the company to compete directly in the API market against offerings like Claude 3.5 Sonnet and GPT-4o-mini, but at a potentially lower cost. This aligns with its stated goal of making high-performance AI more accessible, a theme we explored in "DeepSeek Coder V2 Challenges GPT-4o on HumanEval."

If the model originates from Moonshot AI (creator of Kimi), it signals a continued expansion beyond the company's long-context niche into general capability and code-specific performance. The mention of "K2.6 Code" suggests a direct iteration on its Kimi-Coder line. This would place Moonshot in closer competition with DeepSeek Coder and Qwen2.5-Coder, intensifying the fight for developer mindshare. Activity around Kimi has been trending upward, with increased model releases and API availability throughout early 2026.

Regardless of origin, the move highlights the strategic importance of the OpenRouter platform as a neutral ground for model discovery and benchmarking. For AI labs, it serves as a low-friction channel to deploy and test models with real users before a full marketing push. For developers, it underscores the benefit of abstraction layers that allow quick switching between emerging, unidentified models.

Frequently Asked Questions

What is OpenRouter?

OpenRouter is an API aggregation platform that provides unified access to dozens of large language models from different companies, including Anthropic, Google, Meta, and other AI labs. It allows developers to query multiple models using a single API key and request format, simplifying comparison and integration.

Who are the likely candidates behind this stealth model?

Based on community speculation and the current competitive landscape, the two most cited candidates are DeepSeek (potentially releasing a "DeepSeek V4 Lite") and Moonshot AI (potentially releasing a "Kimi K2.6 Code" model). Both companies are Chinese AI labs known for releasing high-performance models and have a history of deploying on OpenRouter.

How can I try or identify this model?

The model is listed on the OpenRouter platform. Developers with an OpenRouter API key can query it directly. However, without official documentation, its capabilities, optimal prompts, and limitations are unknown and must be discovered through experimentation. Its generic name on the platform makes positive identification difficult without metadata from the provider.
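As a rough illustration of what querying it directly looks like, the sketch below builds a request against OpenRouter's OpenAI-compatible chat completions endpoint using only the Python standard library. The model identifier is a placeholder, since the listing's actual slug is not given here, and the helper names (`build_request`, `extract_reply`, `ask`) and the `OPENROUTER_API_KEY` environment variable are our own assumptions for the example.

```python
import json
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Placeholder: substitute the identifier shown on the OpenRouter listing.
MODEL_ID = "<model-id-from-openrouter-listing>"


def build_request(model_id: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for one model."""
    payload = {
        "model": model_id,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_reply(response_body: dict) -> str:
    """Pull the assistant text out of an OpenAI-style response body."""
    return response_body["choices"][0]["message"]["content"]


def ask(model_id: str, prompt: str, api_key: str) -> str:
    """Send the request over the network and return the reply text."""
    request = build_request(model_id, prompt, api_key)
    with urllib.request.urlopen(request, timeout=60) as resp:
        return extract_reply(json.load(resp))


# Example usage (requires a valid key and a real model identifier):
#   import os
#   print(ask(MODEL_ID, "Which model are you?", os.environ["OPENROUTER_API_KEY"]))
```

Because the model identifier is just a string in the payload, the same code can be pointed at any other model on the platform for side-by-side comparison. Note that a model's answer about its own identity is not reliable evidence of its origin.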

Why would a company release a model stealthily?

Stealth or unannounced releases allow companies to gather real-world performance data, monitor system stability under load, and gauge user interest without the pressure of a full public launch. It can also create buzz and speculation within the technical community, as is happening now, serving as an organic marketing tactic.