A new research paper introduces Stepwise, a neuro-symbolic proof generation framework designed to automate the verification of critical systems using interactive theorem provers (ITPs) like Isabelle. The system addresses a core bottleneck in formal verification: the highly manual construction of proof scripts, which limits scalability for large projects like operating system kernels.

Published on arXiv, the paper demonstrates that Stepwise proves up to 77.6% of theorems on the FVEL seL4 benchmark—a formal verification of the seL4 microkernel. This performance substantially surpasses previous LLM-based approaches and the standalone Isabelle automation tool Sledgehammer.

What the Researchers Built

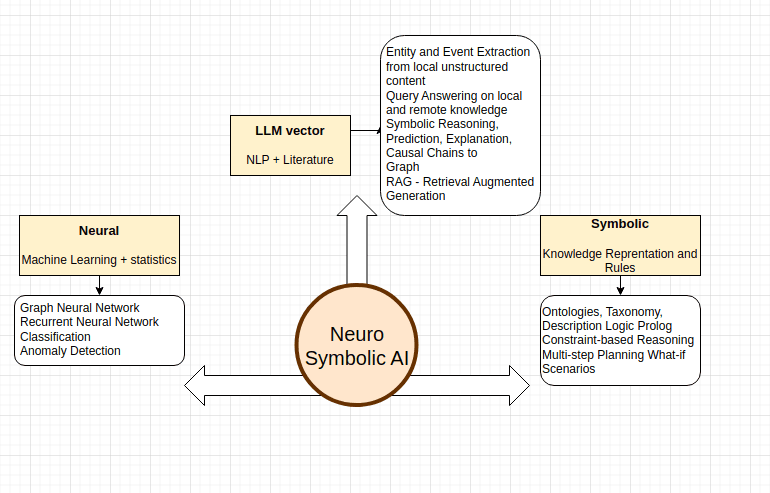

The team developed a framework that performs a best-first tree search over proof states. At each node in the search tree (representing a current proof state), the system queries a fine-tuned large language model (LLM) to propose the next candidate proof step. This creates a neuro-symbolic loop:

- Neural Component: LLMs are fine-tuned on datasets of proof state-step pairs extracted from existing Isabelle developments.

- Symbolic Component: A suite of ITP tools is integrated to repair rejected steps, filter and rank proof states, and automatically discharge subgoals when search progress stalls.

This synergy aims for data-efficient LLM adaptation and semantics-informed pruning of the search space. The framework is implemented on a new Isabelle REPL that exposes fine-grained proof states and automation tools to the LLM.

Key Results

The primary evaluation was conducted on the FVEL seL4 benchmark, a collection of 1,968 theorems from the formal verification of the seL4 microkernel. The researchers compared Stepwise against several baselines:

Stepwise achieved a relative improvement of 33.3% over Sledgehammer and 51.4% over the best LLM-only baseline (GPT-4). Crucially, the system solved significantly more multi-step proofs, which are common in real verification projects and challenging for single-step LLM invocation.

Additional benchmarks on other Isabelle developments (including HOL-Library and HOL-Analysis) demonstrated strong generalization, with Stepwise proving 71.8% and 68.9% of theorems respectively.

How It Works

The Stepwise framework operates through a structured search process:

- State Encoding: The current proof state from the Isabelle REPL is encoded into a text prompt, including relevant local hypotheses, the current goal, and available background facts.

- Step Generation: A fine-tuned LLM (the paper uses CodeLlama 34B as a base) generates multiple candidate next steps (tactics in Isabelle).

- Step Validation & Repair: Each candidate step is sent to Isabelle for execution. If it fails, symbolic repair tools attempt to fix it—for example, by adding missing assumptions or splitting conjunctions.

- State Expansion & Pruning: Successful steps create new child proof states. A best-first search heuristic, informed by both neural confidence scores and symbolic metrics (like goal size reduction), selects which state to expand next.

- Stall Recovery: If search on a branch stalls, auxiliary symbolic provers (integrated via Sledgehammer) attempt to automatically discharge the remaining subgoal.

The training data for the LLM consists of (proof_state, next_step) pairs mined from Isabelle theory files. This allows the model to learn the style of Isabelle proofs, not just the syntax.

Why It Matters

Formal verification is essential for safety-critical systems in aerospace, automotive, and infrastructure software, but its adoption is gated by expert labor. Automating proof construction has been a long-standing challenge. Traditional automated theorem provers (ATPs) struggle with the high-level reasoning often required, while LLMs alone lack the precision and reliability needed for verification.

Stepwise demonstrates that a tight integration of neural and symbolic methods can overcome the limitations of each. The LLM provides creative, high-level proof strategy, while the symbolic tools ensure correctness, handle low-level reasoning, and provide a safety net. The 77.6% success rate on seL4 is not just an incremental improvement; it suggests the approach is viable for assisting with large-scale, real-world verification efforts, potentially reducing expert time and increasing confidence in system correctness.

gentic.news Analysis

Stepwise represents a meaningful evolution beyond the initial wave of "LLMs for code" applications into the more rigorous domain of formal proof. The key insight isn't just using an LLM, but architecting a system where the LLM acts as a strategic generator within a correctness-ensuring symbolic loop. This mirrors a successful pattern from other domains like AlphaCode (LLM + filtering) and AlphaGeometry (LLM + symbolic deduction), now applied to interactive theorem proving.

Practically, the most significant result may be its performance on multi-step proofs. Standalone LLMs or Sledgehammer often fail on these because they require sustained, coherent reasoning across multiple inferences. Stepwise's search-based approach inherently tackles this by maintaining and exploring a proof tree. For verification engineers, this translates to the system being useful on more interesting, non-trivial lemmas, not just simple, one-line properties.

However, the 22.4% of seL4 theorems it couldn't prove highlight the remaining frontier. These likely involve deep, specialized mathematical reasoning or creative lemma discovery that falls outside the patterns in its training data. The next challenge will be closing this gap, possibly by incorporating more advanced symbolic reasoning engines or enabling the system to propose and prove its own auxiliary lemmas mid-search.

Frequently Asked Questions

What is the seL4 benchmark used in this paper?

The FVEL seL4 benchmark is a collection of 1,968 theorems from the formal verification of the seL4 microkernel. seL4 is a high-assurance, secure operating system kernel whose functional correctness has been mathematically proven, making its proof corpus a gold-standard, real-world testbed for automated proof search tools. Success on this benchmark indicates a method's potential for practical systems verification.

How does Stepwise differ from just using an LLM like GPT-4 to write proofs?

Using an LLM in isolation ("LLM-only") involves asking it to generate a complete proof script in one go, which is error-prone and offers no guarantee of correctness. Stepwise integrates the LLM into a correct-by-construction search process. The LLM only suggests individual steps, each of which is immediately validated by the Isabelle prover. Failed steps are repaired symbolically, and the search can backtrack. This ensures every explored path is syntactically and semantically valid, a critical requirement for verification.

What interactive theorem prover (ITP) does Stepwise work with?

The current implementation of Stepwise is built for Isabelle/HOL, a popular ITP used for both software and hardware verification. The framework is built on a new Isabelle REPL (Read-Eval-Print Loop) interface created by the authors. In principle, the neuro-symbolic architecture could be adapted to other ITPs like Coq or Lean, provided a similar fine-grained state interface and symbolic tool integration can be established.

Is the code for Stepwise publicly available?

The arXiv paper lists access to code and data via CatalyzeX and Hugging Face, standard repositories for AI research artifacts. Researchers and practitioners interested in replicating the results or applying the framework to their own Isabelle projects should be able to find the implementation through these links on the paper's abstract page.