The Mental Model That Changes Everything

When Anthropic introduced Agent Skills in October 2025, they called the core design principle "progressive disclosure." The term stuck: it appears in the official docs, in engineering blog posts, and in most tutorials. But there is a better mental model, one that makes Skills more intuitive to work with: "progressive discovery."

This isn't semantic nitpicking. The distinction fundamentally changes how you think about Skills and, more importantly, how you write them.

What Actually Happens When Claude Uses a Skill

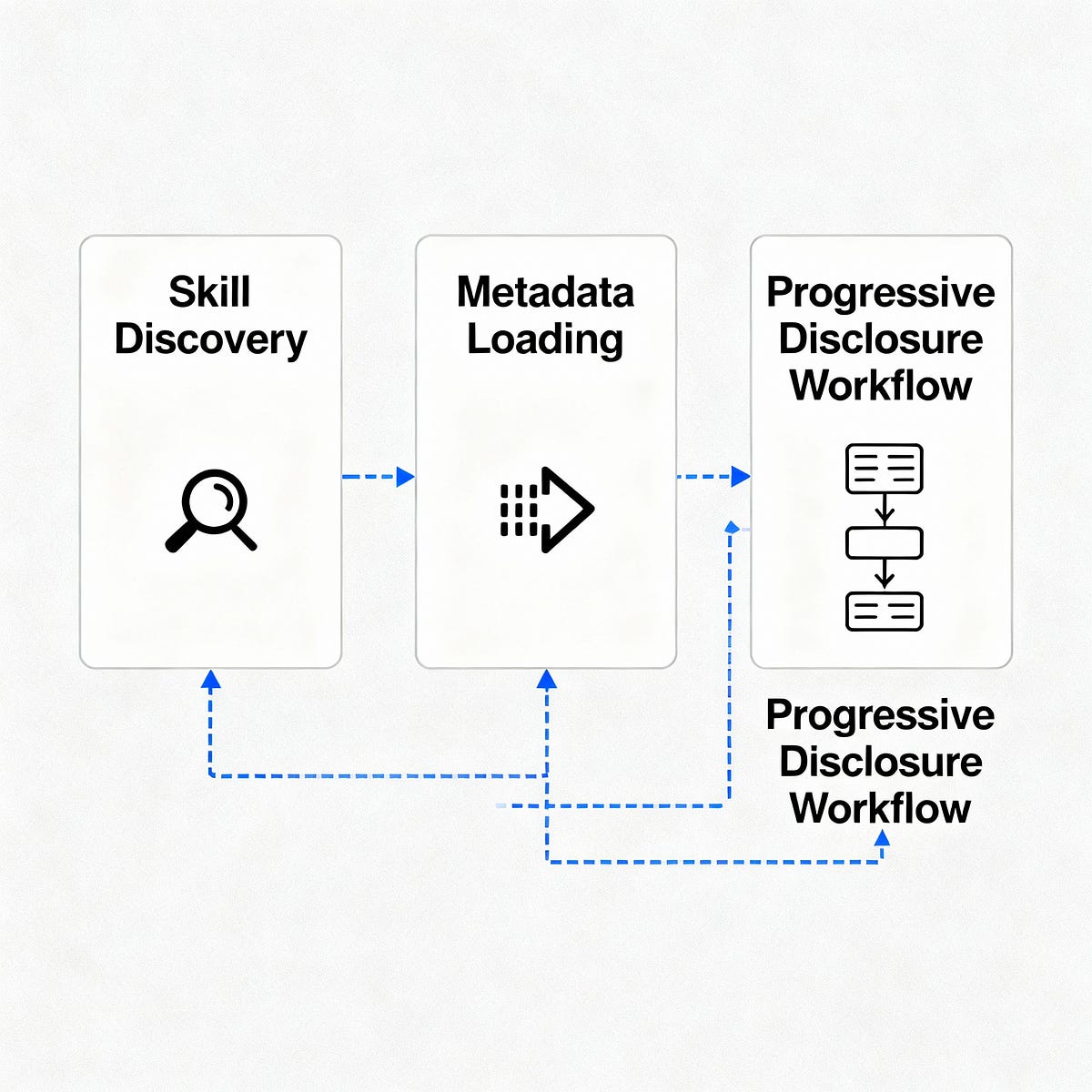

At runtime, here's what Claude Code does:

1. Scans metadata: reads the name and description of every installed Skill

2. Reasons about relevance: decides if any Skill might help with your current task

3. Reads SKILL.md: if a Skill looks promising, Claude loads the full documentation

4. Explores supplementary files: only if the task demands more depth does Claude look for references, scripts, or assets

Claude might stop at the second step if the metadata is enough to rule a Skill out. It might stop at the third if SKILL.md provides sufficient guidance. The progression isn't predetermined: Claude reasons its way deeper, one conditional step at a time.

The key insight: Claude is the active party. The Skill is passive bytes on disk, waiting to be found.
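The four steps above can be sketched as a toy model. This is not how Claude is implemented: `looks_relevant` is a hypothetical word-overlap stand-in for Claude's reasoning, `need_more_depth` stands in for its judgment that the task demands more, and every name here is invented for illustration. The sketch assumes each Skill is a directory whose SKILL.md opens with `name` and `description` frontmatter.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class SkillMeta:
    name: str
    description: str
    path: Path  # directory that contains SKILL.md


def read_metadata(skill_dir: Path) -> SkillMeta:
    """Step 1: keep only name and description from the frontmatter."""
    meta = {}
    lines = (skill_dir / "SKILL.md").read_text().splitlines()
    if lines and lines[0].strip() == "---":
        for line in lines[1:]:
            if line.strip() == "---":
                break  # end of frontmatter; the body is not read at this stage
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
    return SkillMeta(meta.get("name", skill_dir.name),
                     meta.get("description", ""), skill_dir)


def _keywords(text: str) -> set[str]:
    # Crude tokenizer: lowercase words longer than three characters.
    return {w for w in text.lower().split() if len(w) > 3}


def looks_relevant(meta: SkillMeta, task: str) -> bool:
    """Step 2: hypothetical stand-in for Claude's reasoning (word overlap)."""
    return len(_keywords(task) & _keywords(meta.description)) >= 2


def discover(skills_root: Path, task: str, need_more_depth: bool = False) -> list[str]:
    """Return the files the reader chose to open, in order."""
    opened = []
    for skill_dir in sorted(p for p in skills_root.iterdir() if p.is_dir()):
        meta = read_metadata(skill_dir)            # step 1
        if not looks_relevant(meta, task):         # step 2: may stop here
            continue
        opened.append(f"{meta.name}/SKILL.md")     # step 3
        if need_more_depth:                        # step 4: only on demand
            for extra in sorted(skill_dir.rglob("*")):
                if extra.is_file() and extra.name != "SKILL.md":
                    rel = extra.relative_to(skill_dir).as_posix()
                    opened.append(f"{meta.name}/{rel}")
    return opened
```

Notice that the loop, the relevance check, and the decision to go deeper all live in the reader. The Skill directory contributes nothing but files.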

Why "Disclosure" Leads You Astray

"Disclosure" implies an active subject making decisions about what to reveal and when. In UX design (where the term comes from), this makes sense — Gmail discloses advanced settings when you click "Show more." The interface is doing something.

When you apply this model to Skills, you unconsciously start thinking the Skill is the active party. You might design elaborate reveal sequences, worrying about "what the Skill should surface when." This leads to over-engineering and misaligned expectations.

Worse, it makes the actual mechanics harder to picture. If you think the Skill is "disclosing" itself, you're picturing the wrong actor doing the work.

How "Discovery" Changes Your Skill Writing

Swap the term and everything becomes clearer:

- Claude discovers metadata

- Claude discovers SKILL.md content

- Claude discovers supplementary files only if needed

The progression belongs to Claude's reasoning chain, not to your Skill's structure.

This mental shift changes the questions you ask as a Skill author:

Instead of: "What should this Skill disclose at each layer?"

Ask: "Can Claude find what it needs here, and does it have enough information to decide whether to go deeper?"

Practical Changes to Your SKILL.md Files

With the "discovery" model, optimize for Claude's discovery process:

1. Write Metadata That Gets Found

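The name and description live in the YAML frontmatter at the top of SKILL.md:

name: deploy-to-vercel

description: Deploy static sites and Node.js applications to Vercel with zero configuration

Not:

name: deployment

description: A tool for deploying things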
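One rough way to catch the second kind of metadata before Claude has to reason over it is a small lint. The sketch below is hypothetical: the function name, the vague-word list, and the eight-word threshold are arbitrary assumptions chosen for illustration, not part of any Anthropic tooling.

```python
# Assumed list of words that tend to signal a description with no specifics.
VAGUE_WORDS = {"things", "stuff", "tool", "helper", "various", "misc"}


def metadata_warnings(name: str, description: str) -> list[str]:
    """Return heuristic warnings for a Skill's name and description."""
    warnings = []
    words = [w.strip(".,").lower() for w in description.split()]
    if len(words) < 8:  # arbitrary threshold
        warnings.append("description is short; say what the Skill does and when to use it")
    vague = sorted(VAGUE_WORDS.intersection(words))
    if vague:
        warnings.append("vague wording: " + ", ".join(vague))
    if "-" not in name and len(name.split()) == 1:
        warnings.append("name is a single generic word; consider a more specific name")
    return warnings
```

Run against the two examples above, the specific metadata passes cleanly, while "deployment" with "A tool for deploying things" trips all three checks.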

2. Structure SKILL.md for Progressive Discovery

Start with the most critical information — what Claude needs to decide if this Skill is relevant and whether to explore further:

# Deploy to Vercel

## When to Use This Skill

- Deploying static sites (HTML, CSS, JS)

- Deploying Node.js applications

- Setting up automatic deployments from GitHub

## What You Need First

- A Vercel account (free tier available)

- Your project in a Git repository

- Node.js installed (for Node projects)

## Quick Start

`vercel deploy --prod`

[Detailed configuration follows...]

3. Layer Information for Discovery, Not Disclosure

Think: "What does Claude need to discover first to make the next decision?"

Bad (disclosure thinking): "First I'll hide the advanced options, then reveal them later..."

Good (discovery thinking): "Claude needs to discover the basic workflow first, then it can decide if advanced configuration is necessary."
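Discovery only works if each layer tells Claude where the next one is. The sketch below assumes SKILL.md points to deeper material with relative Markdown links (an assumption about how you organize your Skill, not a requirement stated here) and lists any link whose target is missing on disk. The function name and the regular expression are illustrative.

```python
import re
from pathlib import Path

# Matches [text](target) and captures the target up to ')', '#', or whitespace.
LINK = re.compile(r"\[[^\]]*\]\(([^)#\s]+)[^)]*\)")


def missing_pointers(skill_dir: Path) -> list[str]:
    """List relative link targets in SKILL.md that do not exist on disk."""
    text = (skill_dir / "SKILL.md").read_text()
    missing = []
    for target in LINK.findall(text):
        if "://" in target or target.startswith("mailto:"):
            continue  # external links are out of scope for this check
        if not (skill_dir / target).exists():
            missing.append(target)
    return missing
```

An empty result means every pointer in SKILL.md leads somewhere, so a reader that decides to go deeper will find a file waiting.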

The Evidence Is in Anthropic's Own Writing

Even in Anthropic's engineering blog post that introduced "progressive disclosure," the actual descriptions use discovery language:

- "Claude triggers the Skill..."

- "Claude determines what to load..."

- "Claude loads additional resources..."

The community does the same. Popular tutorials describe Skills as "dynamically discovered and loaded" even while using the "disclosure" terminology.

The instinct is right — the label just hasn't caught up.

What This Means for Your Skills Today

- Audit your existing Skills — Are you writing them as if they're "disclosing" themselves? Rewrite with discovery in mind.

- Simplify your structure — Remove artificial reveal sequences. Present information in the order Claude needs to discover it.

- Test with real queries — Ask Claude to use your Skill with various prompts. Does it discover the right information at the right time?

Progressive discovery isn't just a better term — it's a better model. Claude is the subject, working incrementally through a passive resource. Your Skill's job is to be found, at every layer, by an agent actively reasoning its way through.

Once you see it this way, building effective Skills becomes significantly more intuitive.