Key Takeaways

- The article identifies a common failure mode for CI agents in production: they can get stuck in infinite loops or make excessive tool calls.

- It proposes stop conditions as the fix: step, time, and tool budgets, plus no-progress termination.

- Explicit stop conditions are what let a production agent fail predictably and cheaply instead of running away.

What Happened

A new technical article from Towards AI, titled "The 100th Tool Call Problem: Why Most CI Agents Fail in Production," diagnoses a critical failure mode for AI agents operating in Continuous Integration (CI) environments. The core problem is that agents, when tasked with automating code integration, testing, and deployment workflows, can enter infinite loops or make an excessive number of tool calls (like the titular "100th tool call") without achieving their goal. This leads to runaway costs, stalled pipelines, and unreliable production systems.

The article argues that the primary cause is a lack of robust stop conditions. Without predefined limits, an agent tasked with fixing a build error might retry the same failing action over and over, or get stuck in a planning loop without ever executing. The proposed solution is a multi-layered safety net:

- Step/Time/Tool Budgets: Hard caps on the number of reasoning steps, total execution time, or tool invocations.

- No-Progress Termination: A mechanism to detect when the agent is stuck in a loop or making redundant calls without advancing the task, and to halt execution.
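
The hard caps in the first bullet can be sketched as a small counter object that the orchestrator charges on every step and tool call. This is a minimal illustration, not code from the article: the names `RunBudget`, `BudgetExceeded`, and `charge` are hypothetical, and the limits in the usage note are placeholder values.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when a hard cap is hit; the orchestrator should stop the agent."""


@dataclass
class RunBudget:
    """Hypothetical budget tracker: caps on steps, tool calls, and wall-clock time."""
    max_steps: int
    max_tool_calls: int
    max_seconds: float
    clock: Callable[[], float] = time.monotonic  # injectable so tests can fake time
    steps: int = 0
    tool_calls: int = 0
    started_at: float = field(init=False)

    def __post_init__(self) -> None:
        self.started_at = self.clock()

    def charge(self, *, steps: int = 0, tool_calls: int = 0) -> None:
        """Record usage, then raise BudgetExceeded if any cap is now exceeded."""
        self.steps += steps
        self.tool_calls += tool_calls
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step budget exceeded ({self.steps}/{self.max_steps})")
        if self.tool_calls > self.max_tool_calls:
            raise BudgetExceeded(
                f"tool budget exceeded ({self.tool_calls}/{self.max_tool_calls})"
            )
        elapsed = self.clock() - self.started_at
        if elapsed > self.max_seconds:
            raise BudgetExceeded(f"time budget exceeded ({elapsed:.1f}s/{self.max_seconds}s)")
```

In use, the orchestrator would create one `RunBudget` per task, for example `RunBudget(max_steps=50, max_tool_calls=100, max_seconds=300.0)`, call `budget.charge(steps=1)` before each reasoning step and `budget.charge(tool_calls=1)` before each tool invocation, and treat `BudgetExceeded` as the signal to halt and escalate.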

This follows Towards AI's recent pattern of publishing deep, production-focused technical guides, such as their March 29 article on the modern RAG stack for 2026 and their April 3 guide on four critical observability layers for production AI agents.

Technical Details

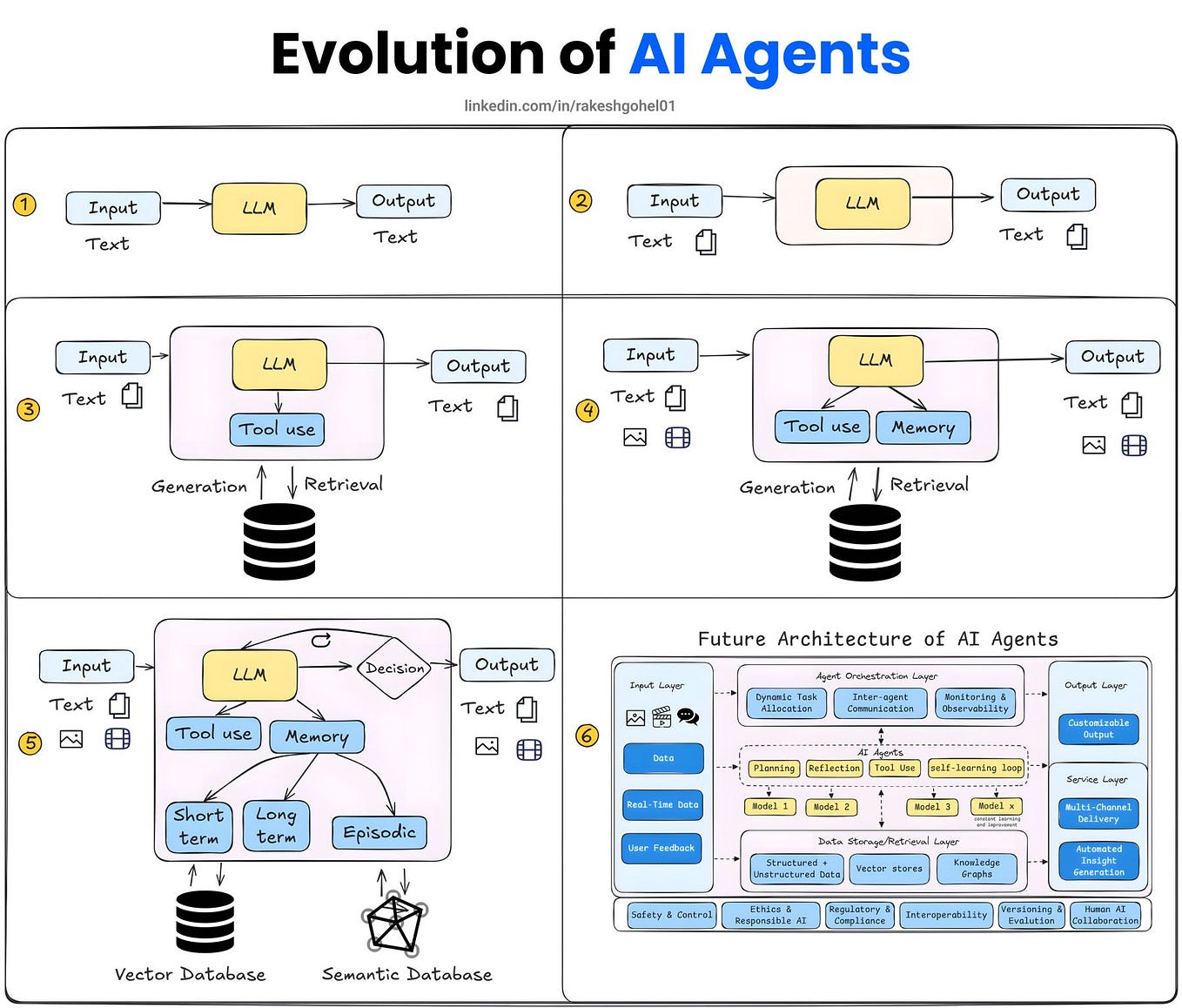

The "100th Tool Call Problem" is a symptom of insufficient agent governance. In a CI context, an agent might have access to tools like git, build_system, test_runner, and deploy_api. A naive agent architecture might allow the agent to call test_runner indefinitely if tests keep failing, or to enter a loop of git checkout -> build -> test without a convergence condition.

The article's recommended stop conditions should not be arbitrary limits; they should be derived from the specific task's SLOs (Service Level Objectives). For example, a code review agent might have a step budget of 50, while a complex deployment rollback agent might have a longer time budget but a strict no-progress window.

Implementing no-progress termination requires defining a measurable state or outcome. Options include hashing the agent's last N actions, checking whether the state of the code repository or build system has changed, or monitoring for a reduction in error counts. When no forward progress is detected within a defined window, the agent is stopped, and the task is escalated to a human or a fallback routine.
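
One possible reading of the "hash of the last N actions" idea, as a minimal sketch: fingerprint each (action, observed state) pair and flag the agent as stuck when the same fingerprint recurs too often within a sliding window. The class name `NoProgressDetector` and the `window` and `max_repeats` parameters are assumptions made for illustration; the state string could be a commit hash, a build status, or an error count, depending on the task.

```python
import hashlib
from collections import deque


class NoProgressDetector:
    """Hypothetical detector: flags repeated (action, state) pairs in a sliding window."""

    def __init__(self, window: int = 10, max_repeats: int = 3) -> None:
        self.max_repeats = max_repeats
        self._recent = deque(maxlen=window)  # fingerprints of the last `window` steps

    def observe(self, action: str, state: str) -> bool:
        """Record one step; return True if the agent appears to be stuck."""
        digest = hashlib.sha256(f"{action}\x00{state}".encode("utf-8")).hexdigest()
        self._recent.append(digest)
        # Seeing the same action against the same state again means nothing changed.
        return self._recent.count(digest) >= self.max_repeats


detector = NoProgressDetector(window=10, max_repeats=3)
cycle = ["git checkout", "build", "test"]
flags = [detector.observe(cycle[i % 3], "failing_tests=5") for i in range(7)]
# The first six steps pass; the seventh repeats "git checkout" a third time
# against an unchanged state, so the detector flags it.
```

Because the state is part of the fingerprint, a loop that keeps reducing the error count is not flagged, while a `git checkout -> build -> test` cycle that leaves the error count unchanged is caught on its third pass.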

Retail & Luxury Implications

While the article uses CI/CD as its domain example, the core failure mode and solution apply to any production AI agent system, including those in retail and luxury. The industry is actively exploring agents for:

- Automated Visual Merchandising: An agent tasked with generating a new homepage layout could get stuck in a loop of generating and rejecting similar images if it lacks a step budget.

- Dynamic Pricing Orchestration: An agent monitoring competitors and adjusting prices could make excessive, unprofitable API calls if not governed by a call budget and no-progress check on margin targets.

- Personalized Styling Assistants: A conversational agent that fetches product recommendations might enter an infinite planning cycle if it cannot decide between similar items for a client, requiring a time budget to fall back to a curated list.

- Supply Chain Anomaly Resolution: An agent diagnosing a logistics delay could recursively check the same tracking endpoint without progressing to initiate a resolution workflow.

The gap between a prototype agent that works in a demo and a production-grade agent that operates reliably at scale is precisely this kind of operational rigor. A luxury brand cannot afford a customer-facing styling agent that "hangs" during a high-value consultation or a pricing agent that triggers a costly, infinite loop of micro-adjustments.

Implementation Approach

Integrating these stop conditions requires engineering effort at the agent orchestration layer, not the core LLM. Frameworks like LangChain, LlamaIndex, and Microsoft's AutoGen provide hooks for defining callbacks and middleware where budgets and progress checks can be enforced.

- Define SLOs per Agent Type: A customer service agent may need a 30-second time budget, while a back-office inventory reconciliation agent could have a 10-minute budget.

- Instrument the Orchestrator: Implement counters for steps, time, and tool calls. Use a persistent context (like a Redis cache) to track state across tool calls for no-progress detection.

- Design Fallbacks: Decide what happens when a budget is exceeded or no progress is made. This could be a handoff to a simpler rule-based system, a default safe action, or a human-in-the-loop alert.

- Monitor and Iterate: As highlighted in Towards AI's April 3 article on observability, these budgets must be monitored and tuned. An agent consistently hitting its step limit may be under-powered or given an impossible task.
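
The steps above can be sketched as a small orchestration loop with per-agent limits and a fallback. This is an illustrative sketch under stated assumptions, not an API of LangChain, LlamaIndex, or AutoGen: `AgentLimits`, `Outcome`, and `run_with_limits` are hypothetical names, the 30-second and 10-minute time budgets come from the text, and the step caps are made-up placeholders.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class AgentLimits:
    max_steps: int
    max_seconds: float


# Time budgets follow the examples in the text; step caps are assumed values.
LIMITS = {
    "customer_service": AgentLimits(max_steps=10, max_seconds=30.0),
    "inventory_reconciliation": AgentLimits(max_steps=200, max_seconds=600.0),
}


@dataclass
class Outcome:
    status: str            # "done" or "fallback"
    reason: str            # "completed", "step_budget", or "time_budget"
    steps: int             # number of agent steps actually executed
    result: Optional[str] = None


def run_with_limits(
    agent_type: str,
    step: Callable[[int], Optional[str]],   # returns a result when the task is finished
    fallback: Callable[[str], str],         # e.g. rule-based system or human handoff
    clock: Callable[[], float] = time.monotonic,
) -> Outcome:
    """Run `step` until it returns a result or a budget is exhausted."""
    limits = LIMITS[agent_type]
    start = clock()
    for n in range(1, limits.max_steps + 1):
        # The time budget is checked between steps; a single slow step is not interrupted.
        if clock() - start > limits.max_seconds:
            return Outcome("fallback", "time_budget", n - 1, fallback("time_budget"))
        result = step(n)
        if result is not None:
            return Outcome("done", "completed", n, result)
    return Outcome("fallback", "step_budget", limits.max_steps, fallback("step_budget"))
```

Recording the `reason` on every `Outcome` gives the monitoring step something concrete to aggregate: an agent type whose runs mostly end in `step_budget` is a candidate for either a larger budget or a narrower task. A production version would also need per-call timeouts, since this loop cannot interrupt a step that is already running.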

Governance & Risk Assessment

Maturity Level: Medium. The concept is well-understood in traditional software (e.g., circuit breakers in microservices), but applying it to non-deterministic AI agents is an emerging practice.

Primary Risk: Agent Stall. The main risk mitigated is operational failure: agents consuming resources without delivering value, leading to financial loss and broken automated processes.

Secondary Risk: Over-Constraint. Setting budgets too aggressively could cause agents to fail prematurely on complex but solvable tasks. This requires careful calibration and A/B testing.

Privacy & Bias: This governance layer is largely orthogonal to data privacy and model bias concerns, though a stalled agent could theoretically fail to invoke a bias-mitigation tool. The focus here is on reliability and cost control.