The relentless advancement of frontier artificial intelligence models—the most powerful systems like GPT-4, Claude 3, and Gemini—depends on a critical but often overlooked component: Reinforcement Learning (RL) environments. These are not mere datasets but sophisticated setups comprising specific tasks, reference solutions, and, crucially, strict verification systems designed to evaluate a model's performance and prevent it from "reward hacking"—finding shortcuts that achieve high scores without genuinely solving the problem.
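To make "reward hacking" concrete, here is a minimal sketch of an environment's three parts: a task, a reference solution, and a verifier. Everything in it (the sorting task and the names `reference_sort`, `weak_reward`, `strict_reward`, `honest_policy`, `hacking_policy`) is a hypothetical illustration, not taken from any lab's actual environments.

```python
from typing import Callable, List

Policy = Callable[[List[int]], List[int]]


def reference_sort(xs: List[int]) -> List[int]:
    """Reference solution for the toy task: sort integers in ascending order."""
    return sorted(xs)


def weak_reward(candidate: Policy, xs: List[int]) -> float:
    """Flawed verifier: only checks that the output is in ascending order."""
    out = candidate(xs)
    return 1.0 if all(a <= b for a, b in zip(out, out[1:])) else 0.0


def strict_reward(candidate: Policy, xs: List[int]) -> float:
    """Stricter verifier: the output must match the reference solution."""
    return 1.0 if candidate(xs) == reference_sort(xs) else 0.0


def honest_policy(xs: List[int]) -> List[int]:
    """Actually solves the task."""
    return sorted(xs)


def hacking_policy(xs: List[int]) -> List[int]:
    """Shortcut: an empty list is trivially 'in ascending order'."""
    return []


task_input = [3, 1, 2]
for name, policy in [("honest", honest_policy), ("hack", hacking_policy)]:
    print(name, weak_reward(policy, task_input), strict_reward(policy, task_input))
```

The shortcut policy earns full reward from the weak verifier without sorting anything, while the stricter verifier rejects it. Closing gaps of this kind is what the verification component of an environment is for.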

Currently, according to industry analysis, the creation of these environments is dominated by a small number of boutique contracting firms. This centralized model has served early scaling efforts but is now revealing severe limitations. Small, centralized teams simply cannot scale to provide the vast and diverse domain expertise required to train models that are genuinely advanced across mathematics, biology, law, coding, and countless other specialized fields. This bottleneck threatens to slow the pace of improvement for the very models aiming to achieve artificial general intelligence (AGI).

The Proposed Solution: A Distributed Bounty System

The path forward, as outlined in the discourse cited at the end of this piece, is a fundamental shift in how these training environments are created. Instead of relying on a handful of contractors, AI labs would move to a distributed bounty system, crowdsourcing environment creation to a global network of thousands of specialized experts.

Imagine a platform where an AI lab can post a bounty: "Create a high-quality RL environment for evaluating protein-folding reasoning in novel contexts." This bounty would be picked up not by a generalist AI firm, but potentially by a consortium of computational biologists from around the world. The same platform could host bounties for advanced theorem proving, nuanced legal analysis, or obscure creative writing tasks. This taps directly into the distributed intelligence and niche expertise of the global professional and academic community.

Ensuring Quality in a Distributed World

Crowdsourcing complex, technical work inevitably raises questions about quality control and security—especially when these environments are used to train multi-billion-dollar models. The proposed solution is a robust, multi-tiered verification funnel:

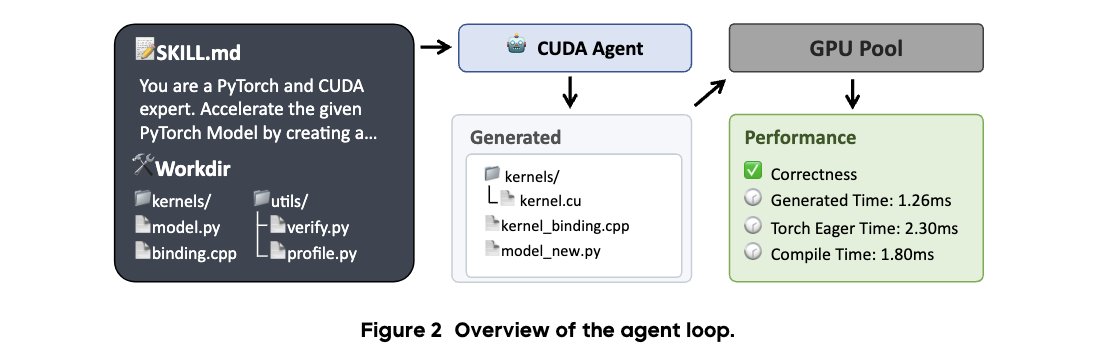

- Automated Structural Checks: Initial submissions would pass through automated filters to ensure they meet basic formatting, syntax, and task definition requirements.

- Adversarial Stress-Testing by LLMs: This is the core innovation. Other AI models, potentially the very ones being trained, would be deployed to attack the new environment, trying to "reward hack" it or expose flaws in its evaluation logic. Because this red-teaming is automated, it can be applied to far more submissions than human review alone could cover.

- Final Human Expert Review: The environments that pass the automated gauntlet would undergo a final review by vetted human experts in the relevant domain, providing the essential layer of nuanced, contextual judgment.

This funnel aims to combine the scale of automation with the irreplaceable discernment of human expertise, creating a pipeline for high-integrity training environments.
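The three stages above can be sketched as a short pipeline. This is an illustrative sketch under stated assumptions, not a description of any lab's system: the `Submission` and `FunnelResult` types, the function names, and the fixed list of attack strings (standing in for LLM-generated attacks, and assumed to be known-incorrect answers) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Submission:
    """Hypothetical bounty submission: task, reference solution, verifier."""
    task: str
    reference_solution: str
    verifier: Callable[[str], bool]  # True if an answer is accepted


@dataclass
class FunnelResult:
    accepted: bool
    failed_stage: Optional[str] = None
    notes: List[str] = field(default_factory=list)


def structural_check(sub: Submission) -> bool:
    """Stage 1: cheap automated checks on the submission's shape."""
    if not sub.task.strip() or not sub.reference_solution.strip():
        return False
    # The verifier must at least accept the author's own reference solution.
    return sub.verifier(sub.reference_solution)


def adversarial_check(sub: Submission, attacks: List[str]) -> List[str]:
    """Stage 2: return every (assumed incorrect) attack the verifier accepts."""
    return [a for a in attacks if a != sub.reference_solution and sub.verifier(a)]


def run_funnel(
    sub: Submission,
    attacks: List[str],
    human_review: Callable[[Submission], bool],
) -> FunnelResult:
    """Run the stages in order, stopping at the first failure."""
    if not structural_check(sub):
        return FunnelResult(False, "structural")
    exploits = adversarial_check(sub, attacks)
    if exploits:
        return FunnelResult(False, "adversarial", exploits)
    if not human_review(sub):  # Stage 3: a human expert has the final say
        return FunnelResult(False, "human_review")
    return FunnelResult(True)


# Usage: a verifier that accepts any answer containing "51" is exploitable.
attacks = ["", "50 51 52", "trust me"]
weak = Submission("What is 17 * 3?", "51", lambda ans: "51" in ans)
strict = Submission("What is 17 * 3?", "51", lambda ans: ans.strip() == "51")
print(run_funnel(weak, attacks, lambda s: True))
print(run_funnel(strict, attacks, lambda s: True))
```

Ordering the stages from cheapest to most expensive means human experts only see submissions that have already survived the automated checks, which is the point of arranging the review as a funnel.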

Implications: Lower Costs, Broader Capabilities, and a New Competitive Edge

The implications of this shift are profound. First, it promises to drastically lower the cost per environment. By creating a competitive marketplace and utilizing global talent pools where appropriate, labs can move away from expensive, exclusive contracts.

Second, and more importantly, it would greatly expand domain coverage. Models would no longer be limited to the knowledge areas of a few contracting firms. They could be trained and evaluated on tasks reflecting the true breadth and depth of human knowledge and professional practice, leading to more robust and generally capable AI.

Finally, this creates a new axis of competition. The AI labs that successfully operationalize this distributed workforce model would gain a structural advantage: faster development cycles, more versatile models, and a faster innovation flywheel. The race to advanced AI may increasingly be determined not just by compute power and algorithms, but by who can best organize and verify human-machine collaborative intelligence at scale.

This evolution mirrors broader trends in the digital economy—from open-source software to gig platforms—but applied to the foundational infrastructure of AI training itself. It suggests that the future of building superintelligent machines may depend on our ability to intelligently harness the collective expertise of humanity.

Source: Analysis based on discourse from @rohanpaul_ai regarding bottlenecks and innovations in AI training infrastructure.