The LLM Evaluation Problem Nobody Talks About

Benchmark contamination is a pervasive problem in the development and evaluation of Large Language Models (LLMs). It arises when a model's training data inadvertently includes examples from the very benchmarks, such as MMLU, HellaSwag, or GSM8K, that are later used to evaluate it. The result is a validity crisis: a high score may reflect memorization of test questions rather than genuine reasoning or knowledge. The article frames this as a foundational flaw that undermines trust in published model capabilities and hampers real progress in the field.
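
One common way to look for this kind of overlap is to check whether long word sequences from a benchmark item also appear verbatim in the training data. The sketch below illustrates that idea only; it is not a method described in the article. The function names `ngrams` and `contamination_rate` and the 8-word window are assumptions chosen for the example.

```python
import re


def ngrams(text, n=8):
    """Return the set of lowercase word n-grams in text (hypothetical helper)."""
    tokens = re.findall(r"\w+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def contamination_rate(benchmark_items, training_docs, n=8):
    """Flag benchmark items that share at least one n-gram with the training docs.

    Returns (fraction_flagged, flagged_items). The window size n is an
    assumption: shorter windows flag more items but raise false positives.
    """
    train_grams = set()
    for doc in training_docs:
        train_grams |= ngrams(doc, n)

    flagged = [item for item in benchmark_items if ngrams(item, n) & train_grams]
    rate = len(flagged) / len(benchmark_items) if benchmark_items else 0.0
    return rate, flagged
```

Exact n-gram matching only catches verbatim or near-verbatim leakage. Paraphrased or translated copies of a test question pass through this check, which is part of why fresh evaluation data is attractive.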

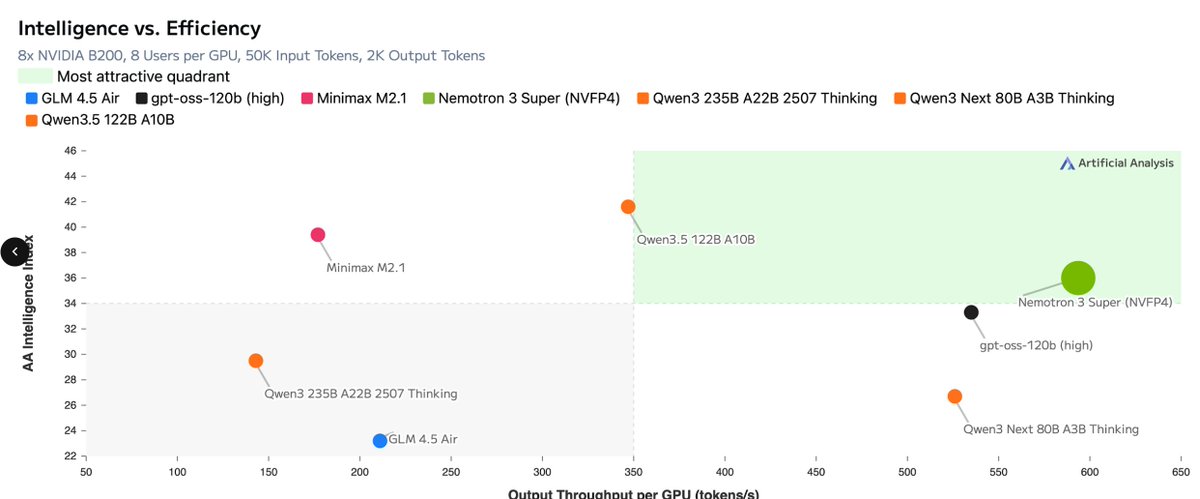

NVIDIA's Open-Source Fix: Nemotron 3 Super

The proposed fix is NVIDIA's Nemotron 3 Super, described as an open-source family of models designed to generate high-quality, uncontaminated synthetic data for evaluation. The core idea is to break the cycle of contamination by creating a fresh, clean evaluation suite. Instead of relying on static, potentially compromised benchmarks, developers can use these models to produce new, diverse, and challenging test questions that the model under evaluation has never seen.

This approach aims to provide a more accurate and fair assessment of a model's true zero-shot or few-shot reasoning abilities. By generating synthetic data, it also allows for the creation of tailored evaluation sets that can stress-test specific capabilities relevant to different applications, moving beyond one-size-fits-all benchmarks.

Technical Details & The Broader Context

The release of Nemotron 3 Super fits into NVIDIA's broader strategy of providing a full-stack AI ecosystem, from hardware (like the anticipated Blackwell and Rubin platforms) to foundational software. This tool addresses a key pain point in the model development lifecycle: reliable validation. It is part of a growing industry recognition that robust, uncontaminated evaluation is as important as training scale.

NVIDIA has been focused on advancing AI infrastructure, with recent events including the upcoming GTC 2025 keynote and the release of other specialized models (like a brain MRI generator). Nemotron 3 Super can be seen as a strategic software tool that complements the company's hardware dominance: it helps developers build and validate better models, which in turn drives demand for more powerful computing platforms.

Retail & Luxury Implications

For AI practitioners in retail and luxury, the implications of this evaluation problem and its proposed fix are indirect but profoundly important. The reliability of any LLM deployed in a customer-facing or operational role hinges on a truthful understanding of its capabilities.

1. Trust in Third-Party Models: When evaluating off-the-shelf or fine-tuned models for applications like personalized marketing copy, customer service chatbots, or product description generation, benchmark scores are a primary filter. If those benchmarks are contaminated, a luxury brand could select a model that appears superior on paper but fails to generate brand-appropriate, nuanced, or factually consistent language in practice. Nemotron 3 Super's methodology offers a blueprint for creating internal, domain-specific evaluation suites—for instance, generating synthetic customer queries about product heritage, material care, or style advice—to test models more rigorously before deployment.

2. Developing Proprietary Models: For houses investing in custom LLMs trained on their unique corpus of historical archives, design notes, and client communications, clean evaluation is paramount. They need to measure true understanding of brand ethos and aesthetic language, not memorization. Using synthetic data generation techniques inspired by this approach, internal AI teams could create secure, brand-specific evaluation sets that are guaranteed to be uncontaminated, ensuring that model improvements are genuine.

3. The Shift to Synthetic Data: The core concept of using high-quality synthetic data for evaluation mirrors a larger trend of using synthetic data for training in sensitive industries. Luxury, with its emphasis on exclusivity and confidentiality, can leverage similar techniques to create robust AI training and testing environments without exposing real client data or proprietary information.
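
The idea of an internal, domain-specific evaluation suite can be sketched as follows. This is a minimal illustration under stated assumptions, not the article's or NVIDIA's method: simple template filling stands in for a generator model, and the templates, slot values, and function names (`TEMPLATES`, `SLOTS`, `normalize`, `build_eval_set`) are all hypothetical. The one step it shares with the approach described above is the hold-out check, which drops any generated query that already appears in the training corpus.

```python
from itertools import product

# Hypothetical templates and slot values for a luxury-retail assistant.
TEMPLATES = [
    "How should I care for a {material} {product}?",
    "What is the heritage behind your {material} {product}?",
]
SLOTS = {
    "material": ["calfskin", "silk"],
    "product": ["handbag", "scarf"],
}


def normalize(text):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def build_eval_set(templates, slots, training_corpus):
    """Generate every template/slot combination not already in the training corpus."""
    seen = {normalize(doc) for doc in training_corpus}
    keys = sorted(slots)
    queries = []
    for template in templates:
        for values in product(*(slots[k] for k in keys)):
            query = template.format(**dict(zip(keys, values)))
            if normalize(query) not in seen:  # keep only unseen queries
                queries.append(query)
    return queries
```

Calling `build_eval_set(TEMPLATES, SLOTS, training_corpus)` with the team's own training texts returns only queries the model has not seen in that exact form. In practice a team would likely pair this with a looser overlap check, since exact matching misses paraphrases.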

In essence, the article highlights a fundamental issue of measurement integrity in AI. For luxury retailers, where brand voice, accuracy, and client trust are non-negotiable, ensuring that the AI tools they adopt are evaluated against meaningful, uncontaminated standards is a critical step in responsible and effective implementation.