Key Takeaways

- An AI engineer describes building a robust, production-grade fine-tuning system for a specific task, a significant technical achievement in its own right.

- However, a newer, more capable foundation model released soon afterward outperformed their custom solution, sharply reducing the project's return on investment and raising questions about the long-term value of some fine-tuning efforts.

What Happened

In a candid personal account, an AI practitioner has detailed a common but painful scenario in modern machine learning operations. The author successfully built a production-grade fine-tuning pipeline for a large language model, investing considerable time and engineering effort to tailor the model for a specific, high-value task. This process involved data curation, experiment tracking, infrastructure setup, and validation—a non-trivial undertaking that represents a core competency for many AI teams.

The project was initially deemed a success, delivering improved performance over the base model for its targeted use case. However, the return on this investment was abruptly challenged not by a competitor's product but by a fundamental shift in the AI landscape: the release of a newer, more powerful foundation model. This next-generation model, available "off the shelf," outperformed the custom fine-tuned model on the very task it was built for, effectively erasing the bespoke solution's unique value proposition and ROI. The author's experience underscores a volatile truth: the ground is constantly shifting beneath AI projects, and a solution's competitive edge can be short-lived.

Technical Details: The Fine-Tuning Dilemma

Fine-tuning is the process of taking a pre-trained foundation model (like GPT-4, Claude, or Llama) and further training it on a specialized dataset to excel at a particular task or adopt a specific style. It's a primary method for customization, allowing companies to inject domain knowledge, brand voice, or procedural expertise into a general-purpose AI.
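To make "further training it on a specialized dataset" concrete, the sketch below turns hypothetical customer-service Q&A records into chat-style training examples serialized as JSON Lines, using a `messages` list of `system`/`user`/`assistant` turns similar to the layout several hosted fine-tuning services accept. The record fields (`question`, `answer`), the sample data, and the system prompt are all illustrative assumptions, not the author's pipeline; check your provider's documentation for its exact schema.

```python
import json

# Hypothetical raw records; field names and contents are illustrative only.
RAW_RECORDS = [
    {"question": "Can I return a handbag bought online to a boutique?",
     "answer": "Yes, online purchases can be returned at our boutiques."},
    {"question": "   ", "answer": "This pair has no usable question and is dropped."},
]

# Assumed brand-voice instruction prepended to every example.
SYSTEM_PROMPT = "You are a courteous concierge for a luxury brand."


def to_chat_example(record):
    """Convert one Q/A record into a chat-style example, or None if it is incomplete."""
    question = record.get("question", "").strip()
    answer = record.get("answer", "").strip()
    if not question or not answer:
        return None  # basic cleaning: skip pairs missing either side
    return {"messages": [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": question},
        {"role": "assistant", "content": answer},
    ]}


def build_jsonl(records):
    """Serialize the cleaned examples as JSON Lines (one JSON object per line)."""
    examples = [ex for ex in (to_chat_example(r) for r in records) if ex is not None]
    return "\n".join(json.dumps(ex, ensure_ascii=False) for ex in examples)


if __name__ == "__main__":
    print(build_jsonl(RAW_RECORDS))
```

In a real pipeline, this step is where most of the curation effort goes: deduplication, removing personal data, and filtering off-brand answers all happen before serialization.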

The engineering challenge is substantial. It requires:

- Data Pipeline: Curating, cleaning, and formatting high-quality training data.

- Infrastructure: Securing and managing GPU clusters (for example, NVIDIA H100s, referenced in our prior coverage) for training runs.

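- Methodology: Choosing parameter-efficient techniques such as LoRA (Low-Rank Adaptation) or its quantized variant QLoRA, which trains small low-rank adapters on top of a 4-bit quantized, frozen base model, to make training efficient and cost-effective.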

- Validation: Rigorously testing the tuned model against the base model to prove its added value.
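Why low-rank methods cut training cost can be shown with simple arithmetic. LoRA freezes a weight matrix W (d × k) and learns an update B·A, where B is d × r and A is r × k, so only r(d + k) parameters are trained instead of d·k. QLoRA additionally stores the frozen W in 4-bit form. The sketch below assumes a hypothetical 4096 × 4096 projection (roughly the size found in 7B-class models) and rank r = 8; it ignores the small overhead of quantization constants.

```python
def full_finetune_params(d: int, k: int) -> int:
    """Trainable parameters when updating a dense d x k weight matrix directly."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for a rank-r update B @ A, with B: d x r and A: r x k."""
    return r * (d + k)


def frozen_weight_bytes(d: int, k: int, bits: int) -> int:
    """Approximate storage for the frozen base matrix at a given bit width
    (ignores quantization-constant overhead)."""
    return d * k * bits // 8


if __name__ == "__main__":
    d = k = 4096  # hypothetical projection size
    r = 8         # hypothetical adapter rank
    full = full_finetune_params(d, k)
    lora = lora_params(d, k, r)
    print(f"full fine-tune: {full:,} trainable params")
    print(f"LoRA r={r}: {lora:,} trainable params ({lora / full:.2%} of full)")
    print(f"frozen weights at 16-bit: {frozen_weight_bytes(d, k, 16):,} bytes")
    print(f"frozen weights at 4-bit:  {frozen_weight_bytes(d, k, 4):,} bytes")
```

For this one matrix, the adapter trains 65,536 parameters instead of 16,777,216 (about 0.39%), and 4-bit storage needs a quarter of the memory of 16-bit weights. Savings of this kind are what make fine-tuning affordable, but they do nothing to protect the result from a stronger base model.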

This story highlights the inherent risk in this approach: model obsolescence. The rapid pace of foundational model development means that a model fine-tuned on "Model-N" can be outclassed in six months by the general capabilities of "Model-N+1," even without any task-specific tuning. The investment in customization can be devalued overnight by upstream progress.

Retail & Luxury Implications

For retail and luxury AI leaders, this narrative is a critical strategic parable. Many are investing in fine-tuning for key applications:

- Customer Service Agents: Tuned on historical chat logs to handle luxury product inquiries, returns, and concierge-style requests.

- Product Description Generators: Trained on brand catalogs to produce on-brand, evocative copy for new collections.

- Personal Stylist Chatbots: Customized with fashion knowledge and customer purchase history.

- Internal Knowledge Assistants: Fine-tuned on internal manuals, design briefs, and supply chain documents.

The core question this story raises is: Are you building a durable competitive advantage, or a temporary performance bump that will be commoditized by the next model release?

If a team spends six months and significant budget fine-tuning a model for customer email summarization, and a new foundation model released next quarter does it just as well with simple prompting, the ROI calculus collapses. The strategic implication is that competitive differentiation may need to shift from the model itself to the proprietary data, the unique user experience, or the seamless integration into business workflows—assets that are harder for a new base model to replicate.
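The "ROI calculus" can be made explicit with a back-of-envelope model. The sketch below assumes a one-off build cost, a monthly uplift over simply prompting the base model, and a monthly upkeep cost, and it assumes the uplift drops to zero (and the tuned model is retired) once a newer base model matches it. All figures are hypothetical placeholders, not numbers from the author's project.

```python
from dataclasses import dataclass


@dataclass
class FineTuneCase:
    upfront_cost: float    # engineering + compute to build the pipeline (hypothetical)
    monthly_uplift: float  # value per month over the prompted base model (hypothetical)
    monthly_upkeep: float  # hosting, retraining, monitoring per month (hypothetical)

    def net_value(self, months_of_advantage: float) -> float:
        """Net value if the advantage lasts only until a newer base model matches it."""
        margin = self.monthly_uplift - self.monthly_upkeep
        return margin * months_of_advantage - self.upfront_cost

    def break_even_months(self) -> float:
        """Months of advantage needed just to recover the upfront cost."""
        margin = self.monthly_uplift - self.monthly_upkeep
        return float("inf") if margin <= 0 else self.upfront_cost / margin


if __name__ == "__main__":
    case = FineTuneCase(upfront_cost=300_000, monthly_uplift=40_000, monthly_upkeep=10_000)
    print(f"break-even after {case.break_even_months():.1f} months")
    for months in (3, 6, 12, 24):
        print(f"advantage lasts {months:>2} months -> net {case.net_value(months):>+10,.0f}")
```

With these placeholder inputs the project needs ten months of uncontested advantage to break even, so a competing base model arriving at month six leaves it 120,000 in the red. The useful habit is to estimate the advantage window before building, not after.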

Implementation Approach & Risk Assessment

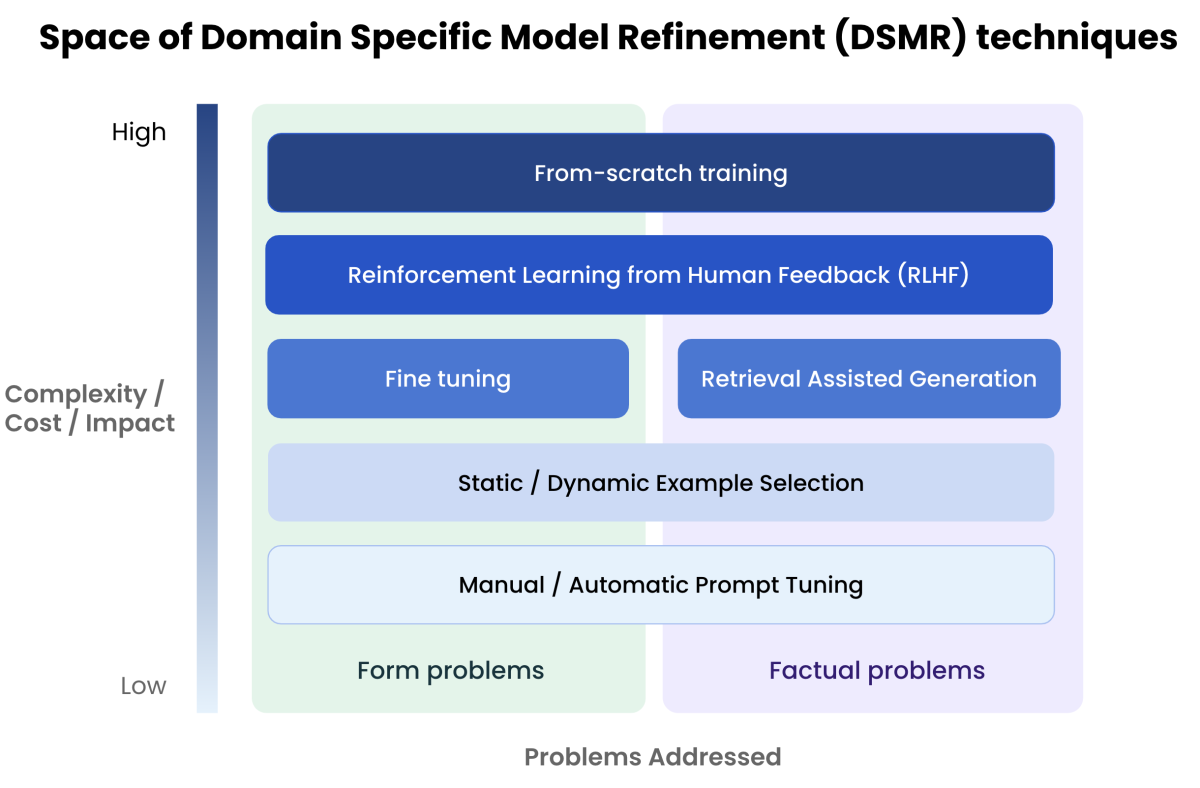

This does not mean fine-tuning is obsolete. It means its application must be more judicious and its value hypothesis more robust.

A Revised Implementation Checklist:

- Task Criticality: Only fine-tune for tasks where performance gaps in base models are large and directly tied to revenue or brand integrity.

- Data Moat: The training data should be highly proprietary, unique, and not easily learnable from public corpora (e.g., nuanced brand guidelines, decades of designer notes).

- Architecture Decoupling: Build your application logic and user experience in a way that is agnostic to the underlying model, allowing you to swap in newer base models or re-tune with less friction.

- Continuous Benchmarking: Establish automated benchmarking that regularly compares your tuned model against the latest frontier models. Treat fine-tuning as an ongoing process, not a one-off project.
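Architecture decoupling and continuous benchmarking fit together naturally: if application code depends only on a thin model interface, any candidate (the tuned model, a new base model behind an API) can be scored on the same evaluation set and swapped in when it wins. The sketch below assumes a minimal `generate(prompt) -> str` interface, a crude "expected phrase appears in the output" metric, and stub models and eval data that are entirely hypothetical; production evaluations would use richer metrics and far larger test sets.

```python
from typing import Callable, Dict, List, Protocol, Tuple


class TextModel(Protocol):
    """Minimal model-agnostic interface; application code depends only on this."""
    def generate(self, prompt: str) -> str: ...


class FunctionModel:
    """Adapter that wraps any callable (API client, local model, stub) as a TextModel."""
    def __init__(self, fn: Callable[[str], str]) -> None:
        self._fn = fn

    def generate(self, prompt: str) -> str:
        return self._fn(prompt)


def score(model: TextModel, eval_set: List[Tuple[str, str]]) -> float:
    """Fraction of prompts whose output contains the expected phrase (case-insensitive)."""
    hits = sum(expected.lower() in model.generate(prompt).lower()
               for prompt, expected in eval_set)
    return hits / len(eval_set)


def pick_best(models: Dict[str, TextModel],
              eval_set: List[Tuple[str, str]]) -> Tuple[str, Dict[str, float]]:
    """Score every candidate on the same eval set and return the top scorer."""
    scores = {name: score(m, eval_set) for name, m in models.items()}
    best = max(scores, key=scores.get)  # on ties, the first-listed candidate wins
    return best, scores


# Hypothetical eval set and stub models standing in for real endpoints.
EVAL_SET = [
    ("What is the return window?", "30 days"),
    ("Which boutique offers engraving?", "Paris"),
]
tuned = FunctionModel(lambda p: "Returns are accepted within 30 days.")
new_base = FunctionModel(lambda p: "Returns: 30 days. Engraving is offered in Paris.")

if __name__ == "__main__":
    best, scores = pick_best({"tuned-v1": tuned, "new-base": new_base}, EVAL_SET)
    print(scores, "->", best)
```

Listing the incumbent tuned model first means it keeps its place on a tie, so a switch happens only when a challenger is measurably better. Scheduling this comparison whenever a new base model ships turns "is our fine-tune still worth it?" into a routine check rather than a surprise.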

Governance & Risks:

- Financial Risk: The primary risk is sunk cost in engineering effort and compute resources for a solution that becomes obsolete.

- Strategic Risk: Over-investing in a tuning strategy while under-investing in data asset creation or workflow innovation.

- Technical Debt: A highly customized model can become a legacy system that is difficult to update or maintain.

Fine-tuning is a mature practice, but the durability of its value is now low to medium, depending heavily on the accelerating pace of foundation-model research.