Key Takeaways

- A first-person technical blog chronicles rebuilding a vector store index on GCP, exposing a 'semantic void' where embeddings fail to capture meaning.

- This serves as a cautionary tale for any RAG implementation, including retail chatbots and product search.

What Happened

In a recent Medium post titled The Semantic Void — A RAG Detective Story, author S.R. Feinstein recounts a first-hand debugging session with a Retrieval-Augmented Generation (RAG) pipeline. The narrative begins with a routine rebuild of a vector store index deployed on Google Cloud Platform (GCP). A multimodal ingestion script was used — indicating the system processes both text and images, likely for a product catalog or document search application.

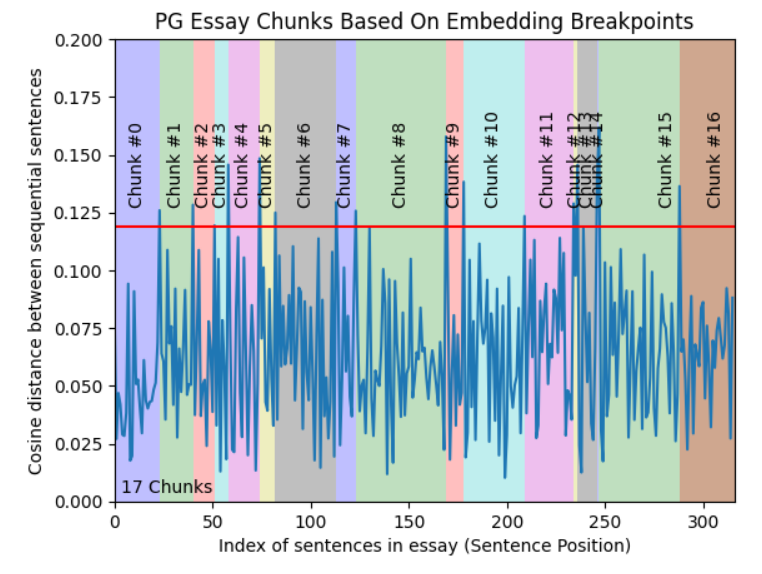

The “semantic void” refers to a situation where the embedding model fails to capture meaningful relationships between queries and stored documents, causing retrieval to return empty or irrelevant results. Feinstein describes the detective work of tracing through the pipeline — chunking strategy, embedding tooling, index configuration, and query preprocessing — to locate the root cause. The article is written as a cautionary tale for engineers who blindly trust their vector databases, highlighting how subtle misconfigurations or mismatched embedding functions can silently cripple a RAG system.

While the exact fix was not detailed in the snippet, the emphasis is on systematic debugging: checking cosine similarity thresholds, verifying that multimodal embeddings are aligned across modalities, and ensuring the index rebuild actually persisted correctly.

Technical Details

RAG systems are notoriously brittle in production. The “semantic void” describes a known failure mode: the query embedding lands in a region of the vector space with no nearby document vectors, effectively a hole in the index's coverage, so the nearest neighbours that do come back are only weakly related to the query. This can happen when:

- Chunking is too aggressive – Chunks are too small, so important context is split across them and each chunk carries too little signal to embed well.

- Embedding model drift – The model used at indexing time differs from the one at query time (even subtle version changes).

- Missing cross-modal alignment – In a multimodal pipeline, text and image embeddings may not be mapped to a shared latent space properly.

- Index persistence bugs – Rebuilding on GCP might fail if the new index file is not correctly uploaded or if the endpoint loads a stale snapshot.
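Two of the causes above, model drift and a stale index, can be caught by comparing what was recorded at build time with what the serving endpoint reports. The following is a minimal sketch under assumptions: the `EmbeddingManifest` record, its fields, and the example values are hypothetical and do not come from the original post or from any GCP API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EmbeddingManifest:
    """Hypothetical record saved next to the index at build time."""
    model_name: str
    model_version: str
    dimension: int
    index_checksum: str  # e.g. a hash of the uploaded index file


def find_mismatches(built: EmbeddingManifest, serving: EmbeddingManifest) -> list[str]:
    """Return readable differences between build-time and serving-time state."""
    problems = []
    if (built.model_name, built.model_version) != (serving.model_name, serving.model_version):
        problems.append(
            f"embedding model differs: indexed with {built.model_name}@{built.model_version}, "
            f"querying with {serving.model_name}@{serving.model_version}"
        )
    if built.dimension != serving.dimension:
        problems.append(f"vector dimension differs: {built.dimension} vs {serving.dimension}")
    if built.index_checksum != serving.index_checksum:
        problems.append("index checksum differs: the endpoint may be serving a stale snapshot")
    return problems


# Illustrative values only: same model family, different version, different index file.
built = EmbeddingManifest("example-embedder", "2", 768, "abc123")
serving = EmbeddingManifest("example-embedder", "1", 768, "9f00aa")
for problem in find_mismatches(built, serving):
    print(problem)
```

Running a check like this as a gate after every rebuild turns a silent mismatch into an explicit failure, at the cost of having to record the manifest in the first place.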

The blog serves as a practical case study for MLOps teams. It underscores that monitoring retrieval quality (e.g., hit rate, relevance scores) is just as critical as monitoring LLM output.
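As an illustration of what such monitoring could look like, here is a small sketch of hit rate at k over a labeled evaluation set. The query strings, document IDs, and the `hit_rate_at_k` helper are invented for this example; a real evaluation set would come from logged queries with human relevance labels.

```python
def hit_rate_at_k(
    retrieved: dict[str, list[str]],
    relevant: dict[str, set[str]],
    k: int = 5,
) -> float:
    """Fraction of queries whose top-k results contain at least one relevant document."""
    if not relevant:
        return 0.0
    hits = 0
    for query, relevant_ids in relevant.items():
        top_k = retrieved.get(query, [])[:k]
        if any(doc_id in relevant_ids for doc_id in top_k):
            hits += 1
    return hits / len(relevant)


# Hypothetical retrieval output (ranked doc IDs per query) and relevance labels.
retrieved = {
    "black leather tote": ["sku-17", "sku-02", "sku-44"],
    "store return policy": ["doc-9", "doc-3"],
    "intrecciato wallet": [],
}
relevant = {
    "black leather tote": {"sku-02"},
    "store return policy": {"doc-1"},
    "intrecciato wallet": {"sku-88"},
}

rate = hit_rate_at_k(retrieved, relevant, k=3)
print(f"hit rate@3 = {rate:.2f}")  # prints: hit rate@3 = 0.33
```

Tracking this number per index build makes a regression visible right after a rebuild rather than when users start complaining.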

Retail & Luxury Implications

For retailers and luxury houses operating customer-facing chatbots, virtual assistants, or internal knowledge bases, RAG reliability is paramount. A “semantic void” in a product search could cause a user query like “black leather tote bag under $2,000” to return no results — eroding trust and losing sales. The same void could cripple an internal assistant designed to answer employees’ questions about inventory policies or store procedures.

The debugging techniques described in the article are directly transferable to retail AI teams. Key lessons:

- Guard against multimodal mismatch – Luxury catalogs use both text descriptions and high-res images. If your RAG pipeline embeds images separately, ensure alignment with textual features.

- Monitor retrieval coverage – Implement dashboards showing the percentage of queries that return at least one relevant document. A sudden drop signals a semantic void.

- Test with edge-case queries – Brand names (e.g., “Bottega Veneta intrecciato”) or very specific product attributes can fall into unseen embedding regions. Pre-deployment testing should include these.
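The coverage and edge-case lessons above can be combined into a pre-deployment smoke test. The sketch below assumes a search function that returns `(doc_id, score)` pairs; `fake_search`, its canned results, and the `min_score` floor of 0.3 are placeholders standing in for a real retriever and a tuned threshold.

```python
from typing import Callable

# Assumed retriever interface: query text in, (doc_id, score) pairs out.
SearchFn = Callable[[str], list[tuple[str, float]]]


def find_empty_queries(search: SearchFn, queries: list[str], min_score: float = 0.3) -> list[str]:
    """Return the queries for which no result reaches min_score."""
    empty = []
    for query in queries:
        results = search(query)
        if not any(score >= min_score for _, score in results):
            empty.append(query)
    return empty


def fake_search(query: str) -> list[tuple[str, float]]:
    """Stand-in retriever with canned results, for illustration only."""
    canned = {
        "black leather tote bag": [("sku-02", 0.81)],
        "Bottega Veneta intrecciato": [("sku-40", 0.12)],
    }
    return canned.get(query, [])


edge_cases = ["black leather tote bag", "Bottega Veneta intrecciato", "calfskin card holder"]
empty = find_empty_queries(fake_search, edge_cases)
coverage = 1 - len(empty) / len(edge_cases)
print(f"coverage = {coverage:.0%}, no usable results for: {empty}")
```

Note that a score floor only shows that something plausible came back, not that it was relevant, so a check like this complements labeled evaluation rather than replacing it.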

While the blog’s setting is a GCP-deployed RAG, the principles apply to any vector store — Pinecone, Weaviate, Chroma — and any embedding provider like OpenAI’s text-embedding-3 models or open-source alternatives. Retail AI leaders should use this as a prompt to review their own RAG monitoring and alerting practices.

gentic.news Analysis

This article is a timely reminder that the “age of RAG” is still early in terms of production maturity. Most retail AI teams are racing to deploy RAG for customer support and product discovery, but few have robust observability for the retrieval layer. While the source is a personal blog, it aligns with a growing body of evidence from conferences (e.g., QCon, MLOps meetups) that embedding pipeline health is the single most underestimated operational risk in LLM applications.

For luxury retail specifically, the tolerance for failure is lower. A chatbot that can’t find a product or answers with “I don’t know” frustrates high-net-worth customers who expect flawless service. The “semantic void” concept should become a standard checklist item in any RAG architecture review.

We recommend that retail AI teams:

- Run periodic emptiness tests (synthetic queries designed to probe the embedding space)

- Use embedding-based alerts (e.g., when the average maximum cosine similarity drops below a threshold)

- Consider hybrid search (keyword + vector) as a fallback to mitigate void-related failures
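The second and third recommendations can be sketched together: alert on the average maximum cosine similarity, and fall back to keyword matching when the best vector match is weak. Everything here is an assumption made for illustration: the two-dimensional vectors, the 0.5 threshold, and the naive term-overlap scorer (a production system would more likely use BM25 or score fusion than a hard fallback).

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def should_alert(recent_max_sims: list[float], threshold: float = 0.5) -> bool:
    """True when the mean of recent per-query max similarities is below threshold."""
    return bool(recent_max_sims) and sum(recent_max_sims) / len(recent_max_sims) < threshold


def keyword_search(query: str, doc_texts: dict[str, str]) -> list[str]:
    """Rank documents by number of shared lowercase terms; drop zero-overlap docs."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(text.lower().split())), doc_id) for doc_id, text in doc_texts.items()]
    return [doc_id for overlap, doc_id in sorted(scored, reverse=True) if overlap > 0]


def hybrid_search(query, query_vec, doc_vecs, doc_texts, threshold: float = 0.5) -> list[str]:
    """Use the vector ranking if its best match clears threshold, else keyword search."""
    ranked = sorted(doc_vecs, key=lambda d: cosine(query_vec, doc_vecs[d]), reverse=True)
    if ranked and cosine(query_vec, doc_vecs[ranked[0]]) >= threshold:
        return ranked
    return keyword_search(query, doc_texts)


# Toy index: both document vectors point roughly along the first axis.
doc_vecs = {"sku-02": [1.0, 0.0], "sku-40": [0.9, 0.1]}
doc_texts = {"sku-02": "black leather tote bag", "sku-40": "woven intrecciato leather wallet"}

# A query vector along the second axis has no close neighbour, so keywords take over.
print(hybrid_search("intrecciato wallet", [0.0, 1.0], doc_vecs, doc_texts))
print(should_alert([0.11, 0.20, 0.62]))
```

The hard threshold keeps the example short; the design trade-off is that a fallback only helps once the void has already been hit, whereas fusing keyword and vector scores on every query would degrade more gradually.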

The blog’s detective approach — systematic elimination of variables — is a model for how to debug these systems without causing downtime. It’s a must-read for any team operating RAG in production.

Note: The original article is behind a Medium paywall; our analysis is based on the publicly available snippet and general knowledge of RAG systems.